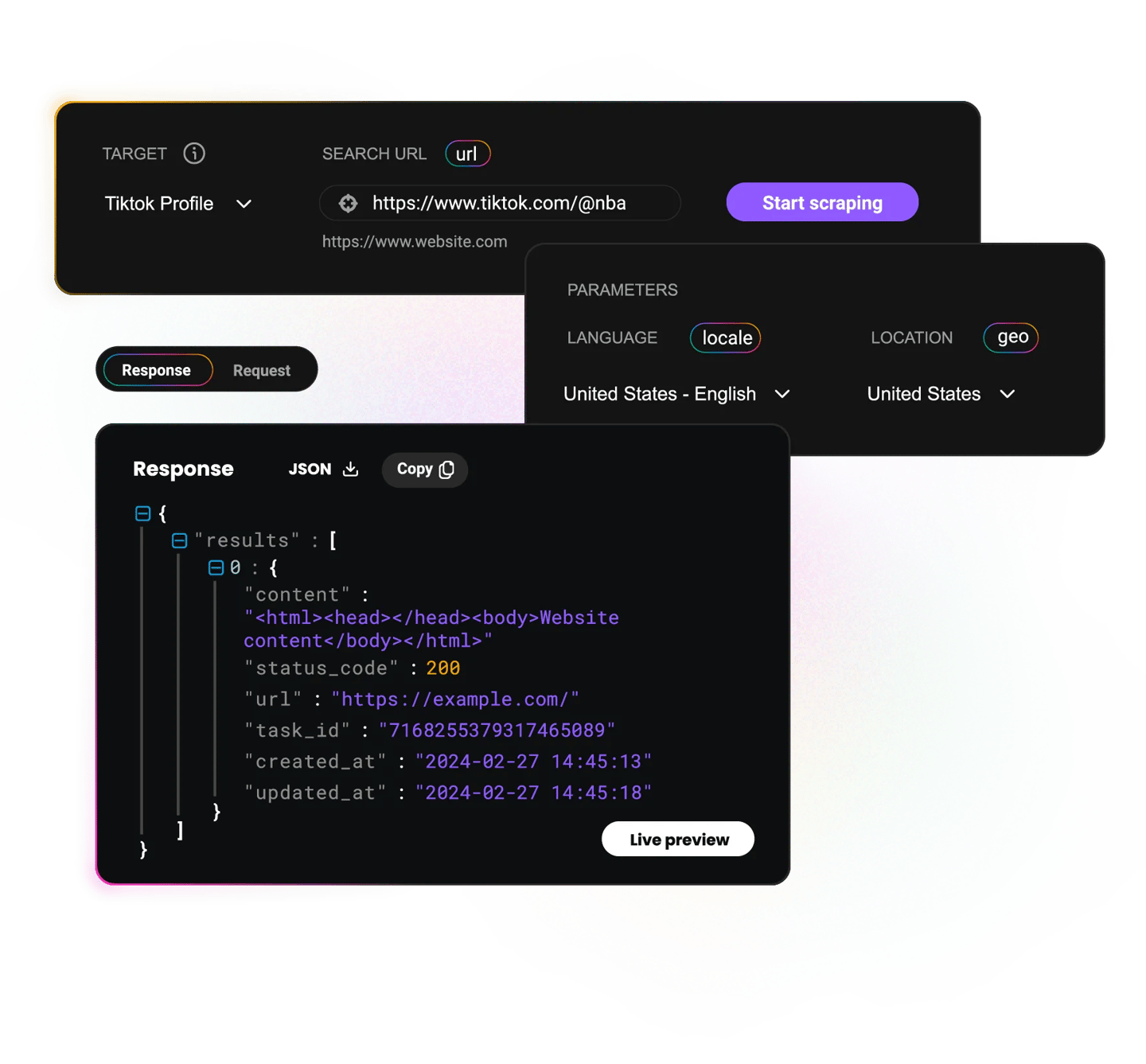

Social Media Scraper API

Collect structured, ready-to-use social media data through our Social Media Scraping API*. Built for developers, optimized for speed, and handy when you need to avoid CAPTCHAs, geo-restrictions, or IP bans.

*This scraper is now part of the Web Scraping API.

- 200 requests per second

- 100+ ready-made templates

- 100% success rate

- 195+ locations worldwide

- Free starter plan

Ready-made templates for social media data extraction

Speed up your projects with pre-built scraping templates designed for structured publicly available social data, fully customizable for your targets.

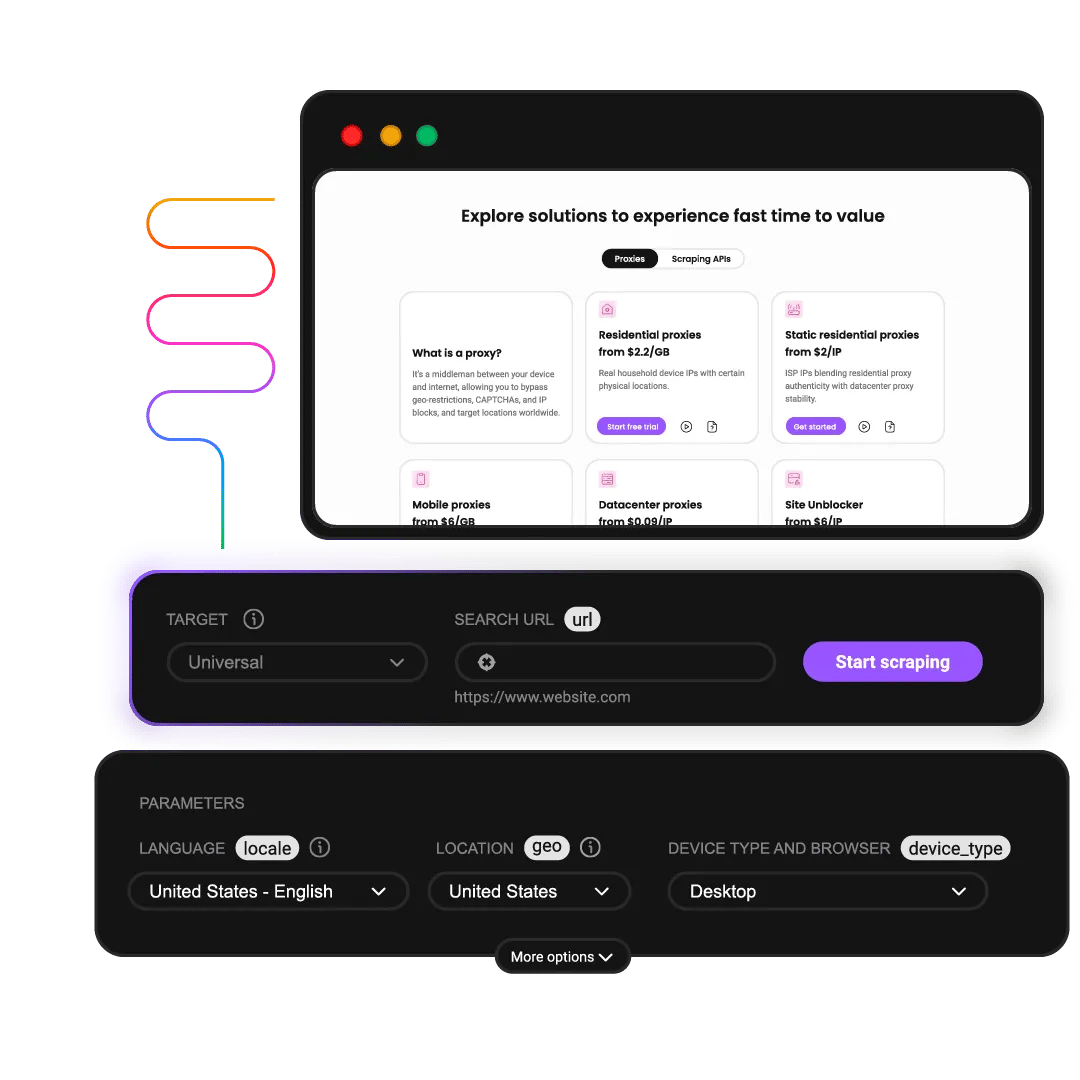

What does Web Scraping API cost?

Choose a plan based on your scraping volume. All plans include the same powerful features – you only pay for what you use. Start with the free plan to test before committing.

Plan prices are +VAT, billed monthly. All prices shown are per 1K req.

| Plan price | Requests included at price per 1K req., by request type | Rate limit |
| --- | --- | --- |
| $0 | 2K req. at $0.50; 1K req. at $0.75; 1K req. at $1.00; 667 req. at $1.50 | 10 req/s |
| $19 | 38K req. at $0.50; 25K req. at $0.75; 19K req. at $1.00; 12K req. at $1.50 | 10 req/s |
| $49 | 163K req. at $0.30; 75K req. at $0.65; 54K req. at $0.90; 39K req. at $1.25 | 25 req/s |
| $99 | 707K req. at $0.14; 165K req. at $0.60; 116K req. at $0.85; 82K req. at $1.20 | 50 req/s |
| Need more? | Custom | Custom |
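
The request allowances listed for the paid plans above appear to equal the plan price divided by the price per 1K requests, rounded down to the nearest thousand. The following is a minimal sketch of that arithmetic for estimating volume on a given budget. The function name `included_requests` is illustrative, and the rounding rule is inferred from the published figures rather than stated by Decodo.

```python
from decimal import Decimal


def included_requests(plan_price: str, price_per_1k: str) -> int:
    """Estimate how many requests a monthly budget buys at a per-1K price.

    Decimal is used instead of float so that prices like 0.60 divide exactly.
    """
    thousands = Decimal(plan_price) / Decimal(price_per_1k)
    # int() truncates, which rounds down for positive values.
    return int(thousands * 1000)


if __name__ == "__main__":
    # Paid-plan figures taken from the pricing section.
    for price, rate in [("19", "0.50"), ("49", "0.65"), ("99", "0.60")]:
        total = included_requests(price, rate)
        print(f"${price} at ${rate} per 1K req. -> about {total:,} requests")
```

Comparing the result across request types shows how much volume you give up by choosing a more expensive request type for every call instead of only where it is needed.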

For low-security sites and simple access

For accessing guarded or sensitive pages

With each plan, you access:

- 99.99% success rate

- Results in HTML, JSON, CSV, XHR or PNG

- MCP server

- JavaScript rendering

- AI integrations

- 100+ pre-built templates

- Supports search, pagination, and filtering

- LLM-ready markdown format

- 24/7 tech support

- 14-day money-back

Flexible output options

Get data as JSON, CSV, Markdown, PNG, XHR, or HTML, depending on your target.

Global location targeting

Target specific markets to capture region-relevant feeds, trends, and creators.

Easy integration

Set up with code examples on GitHub, Postman collections, and our quick start guide.

Real-time or on-demand results

Choose between synchronous and asynchronous requests for your targets.

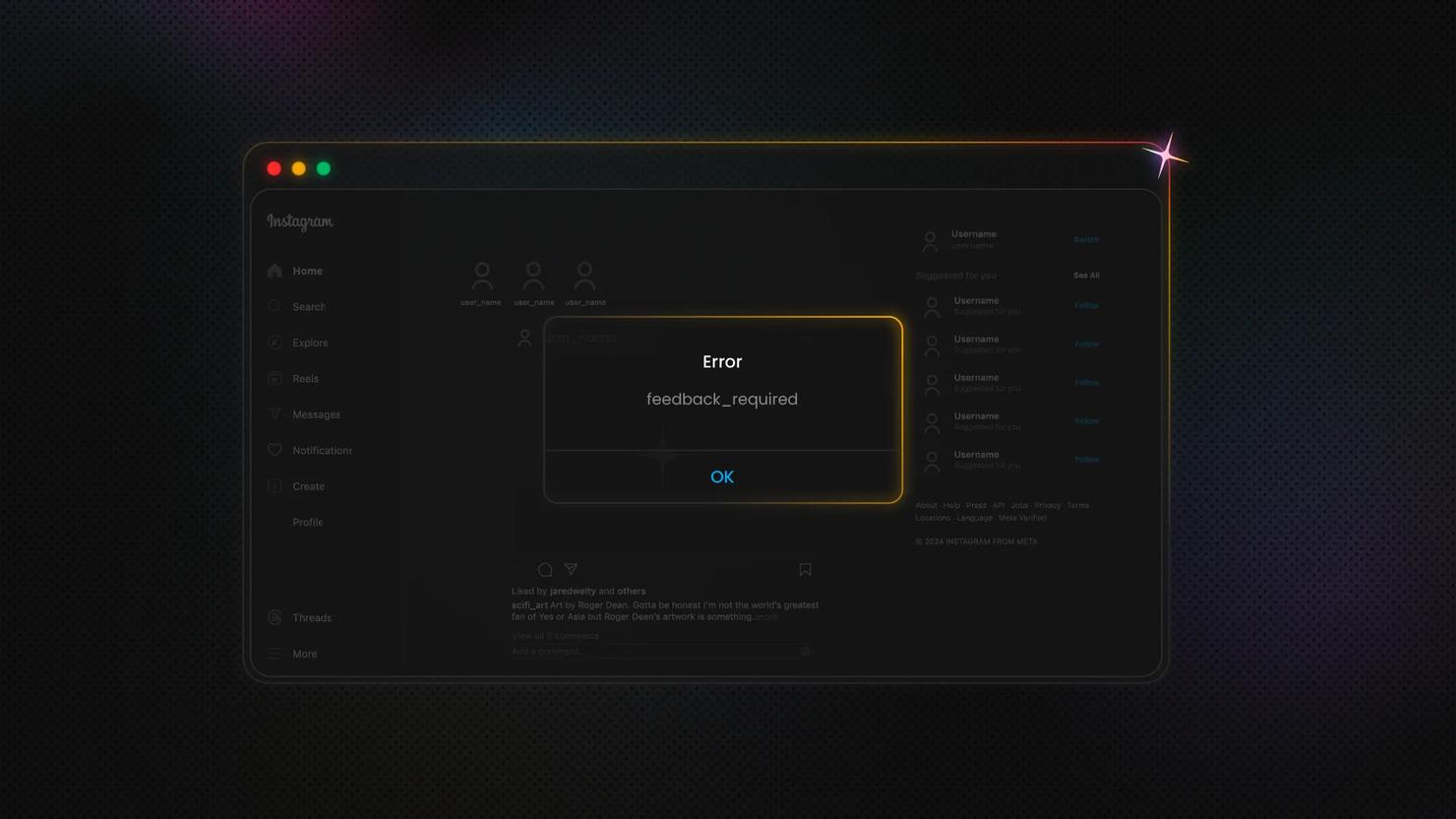

Advanced anti-bot protection

Our scraping API integrates browser fingerprints for seamless data collection.

Ready-made scraping templates

Get fast access to real-time data with the help of our customizable ready-made scrapers.

Why the scraping community chooses Decodo

| Manual scraping | Other APIs | Decodo |
| --- | --- | --- |
| Manage proxy rotation yourself | Limited proxy pools | 125M+ IPs with global coverage |
| Build CAPTCHA solvers | Frequent CAPTCHA blocks | Advanced browser fingerprinting |
| Handle retries manually | Pay for failed requests | Only pay for successful requests |
| Maintenance overhead | Complex documentation | 100+ ready-made templates |
| Days to implement | Limited output formats | JSON, CSV, Markdown, PNG, XHR, HTML output |

Ready-made scrapers

Save setup time with pre-configured scrapers designed for efficient publicly available data extraction. Modify parameters, launch, and collect data in seconds.

Resources for a quick start

Streamline your development with detailed code samples in popular programming languages like Python, PHP, and Node.js on our GitHub, or check out our quick start guides for setup tips. Want to make it even easier? Our customizable ready-made scrapers with pre-configured parameters will do all the heavy lifting for you.

Stop overpaying for scraping plans

The new Web Scraping API lets you enable JS rendering and premium proxies only when needed.

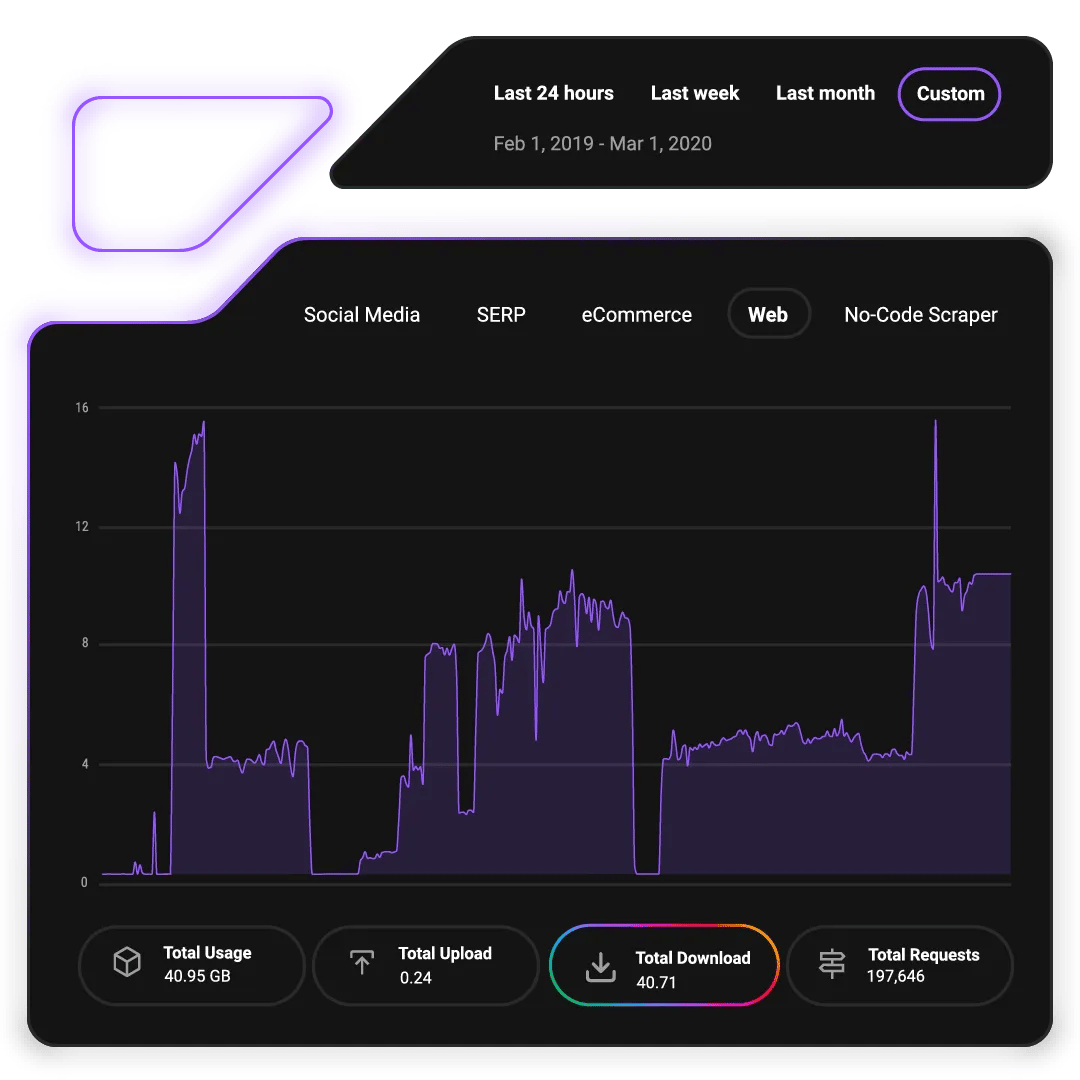

Real-time & callback requests

Want the data now or prefer things a little more planned out? No problem. Pick real-time or on-demand data updates with our synchronous or asynchronous requests.
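
To illustrate the difference: a synchronous request returns the result in the same call, while an asynchronous request hands back a task that you check on later or have reported to a callback. The sketch below covers only the polling side of the asynchronous pattern. The `fetch_status` callable, the `status` field, and the `"done"` value are hypothetical placeholders and not Decodo's actual API, so check the official documentation for the real endpoints and response shape.

```python
import time
from typing import Callable, Optional


def wait_for_result(
    fetch_status: Callable[[], dict],
    interval_s: float = 1.0,
    max_attempts: int = 10,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[dict]:
    """Poll a task until it reports completion or attempts run out."""
    for attempt in range(max_attempts):
        task = fetch_status()  # hypothetical: one status lookup per call
        if task.get("status") == "done":  # hypothetical field and value
            return task
        if attempt < max_attempts - 1:
            sleep(interval_s)  # wait before the next check
    return None  # the task did not finish within the allowed attempts


if __name__ == "__main__":
    # Stand-in for a real API: reports "pending" twice, then "done".
    responses = iter([
        {"status": "pending"},
        {"status": "pending"},
        {"status": "done", "results": ["..."]},
    ])
    print(wait_for_result(lambda: next(responses), sleep=lambda _: None))
```

Passing `sleep` as a parameter keeps the helper easy to test without real delays. With a callback setup, this loop would be replaced by an HTTP handler that receives the finished task.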

Find out what people are saying about us

We're thrilled to have the support of our 135K+ clients and the industry's best

Attentive service

The professional expertise of the Decodo solution has significantly boosted our business growth while enhancing overall efficiency and effectiveness.

Novabeyond

Easy to get things done

Decodo provides great service with a simple setup and friendly support team.

RoiDynamic

A key to our work

Decodo enables us to develop and test applications in varied environments while supporting precise data collection for research and audience profiling.

Cybereg

Decodo blog

Expand your knowledge on web data extraction, automation, and structured scraping.

Most recent

Open WebUI tools: how to give your local LLM real-time internet access with a scraping API

Justinas Tamasevicius

Last updated: May 28, 2026

12 min read

Frequently Asked Questions

What is a Social Media Scraping API?

A Social Media Scraping API is an automated tool that extracts publicly available data from social media platforms without manual effort. It handles the technical complexities of data collection, including managing proxies, bypassing anti-bot measures, and returning structured data in formats like JSON or CSV. This allows you to gather data that’s publicly available before the login wall.

What use cases does Social Media Scraping API work for?

Our Web Scraping API supports various use cases, including:

- Brand monitoring. Track mentions, sentiment, and brand reputation across platforms.

- Competitive analysis. Monitor competitors' content strategies, engagement rates, and audience growth.

- Market research. Analyze trends, consumer preferences, and emerging topics in your industry.

- Influencer identification. Find relevant influencers based on engagement metrics and audience demographics.

- Content performance analysis. Track which content types generate the most engagement.

- Lead generation. Identify potential customers based on interests and activity patterns.

- Crisis management. Monitor for negative sentiment or PR issues in real time.

Is data collection through the Social Media Scraper APIs compliant with legal regulations?

Publicly available data can be scraped. Our Web Scraping API collects only publicly accessible information and can’t access information behind the login wall. However, compliance depends on how you use the collected data. You should ensure your data collection and usage practices align with relevant regulations in your jurisdiction and respect platform terms of service. When in doubt, consult a legal professional.

What targets can I scrape with Social Media Scraping API?

Depending on the platform you’re accessing, you can scrape various publicly available data points, including URLs, posts, comments, engagement stats, and other information.

What categories of data can be collected?

Which data points can be collected depends on the platform you’re trying to access. In most cases, you’ll be able to get these publicly available data categories:

- User data. Usernames, bio information, profile pictures, verification status, follower counts.

- Content data. Post text, captions, URLs.

- Engagement data. Like counts, comment counts, share counts, view counts.

- Metadata. Post IDs and geolocation tags.

- Interaction data. Comments, replies, mentions, hashtags.

- Account metrics. Following counts, post frequency, account age.
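
One way to work with the categories above in your own code is a small record type. This is an illustrative sketch only: the field names are hypothetical and do not represent the API's actual response schema, and the engagement rate formula (interactions divided by followers) is one common convention assumed here for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PostRecord:
    # Hypothetical field names, grouped by the categories listed above.
    post_id: str              # metadata
    username: str             # user data
    text: str                 # content data
    follower_count: int       # user data
    like_count: int = 0       # engagement data
    comment_count: int = 0    # engagement data
    share_count: int = 0      # engagement data
    hashtags: List[str] = field(default_factory=list)  # interaction data

    def engagement_rate(self) -> float:
        """Interactions per follower (assumed definition); 0.0 if no followers."""
        if self.follower_count <= 0:
            return 0.0
        interactions = self.like_count + self.comment_count + self.share_count
        return interactions / self.follower_count


if __name__ == "__main__":
    post = PostRecord("p1", "example_user", "Hello", 1000,
                      like_count=40, comment_count=8, share_count=2)
    print(f"{post.engagement_rate():.1%}")
```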

What are ready-made scrapers?

Ready-made scrapers are pre-configured tools that are available with our Advanced Web Scraping API subscription, designed for easy and quick data collection. They eliminate the need for extensive technical knowledge, custom scraper development, and proxy management, making them ideal for users seeking a low/no-code solution. By using ready-made scrapers, you can access and structure large data sets efficiently.

Start Extracting Structured Social Media Data Today

Launch your first API call in minutes. Scale effortlessly with Decodo’s infrastructure and focus on insights, not maintenance.

14-day money-back option