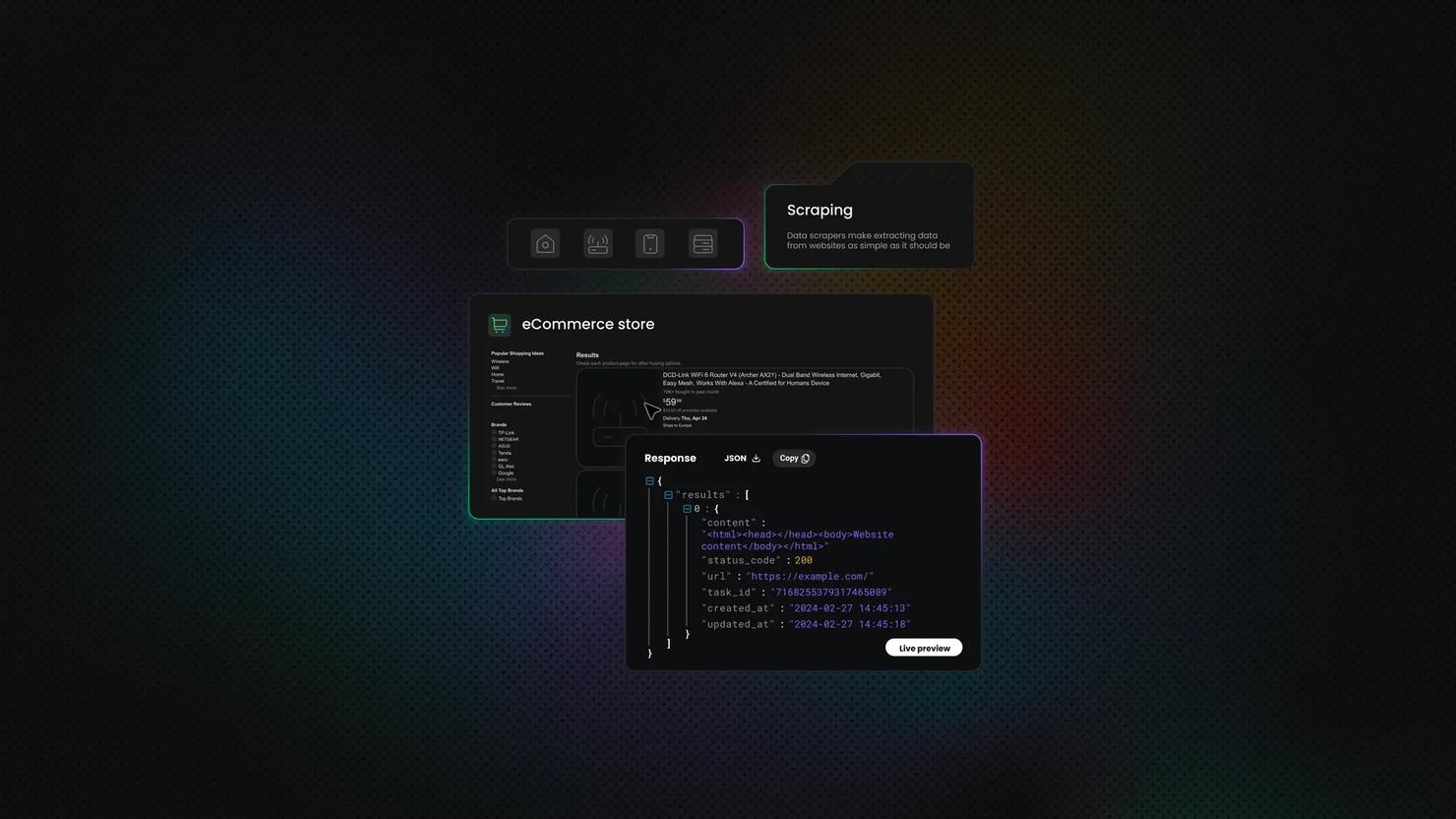

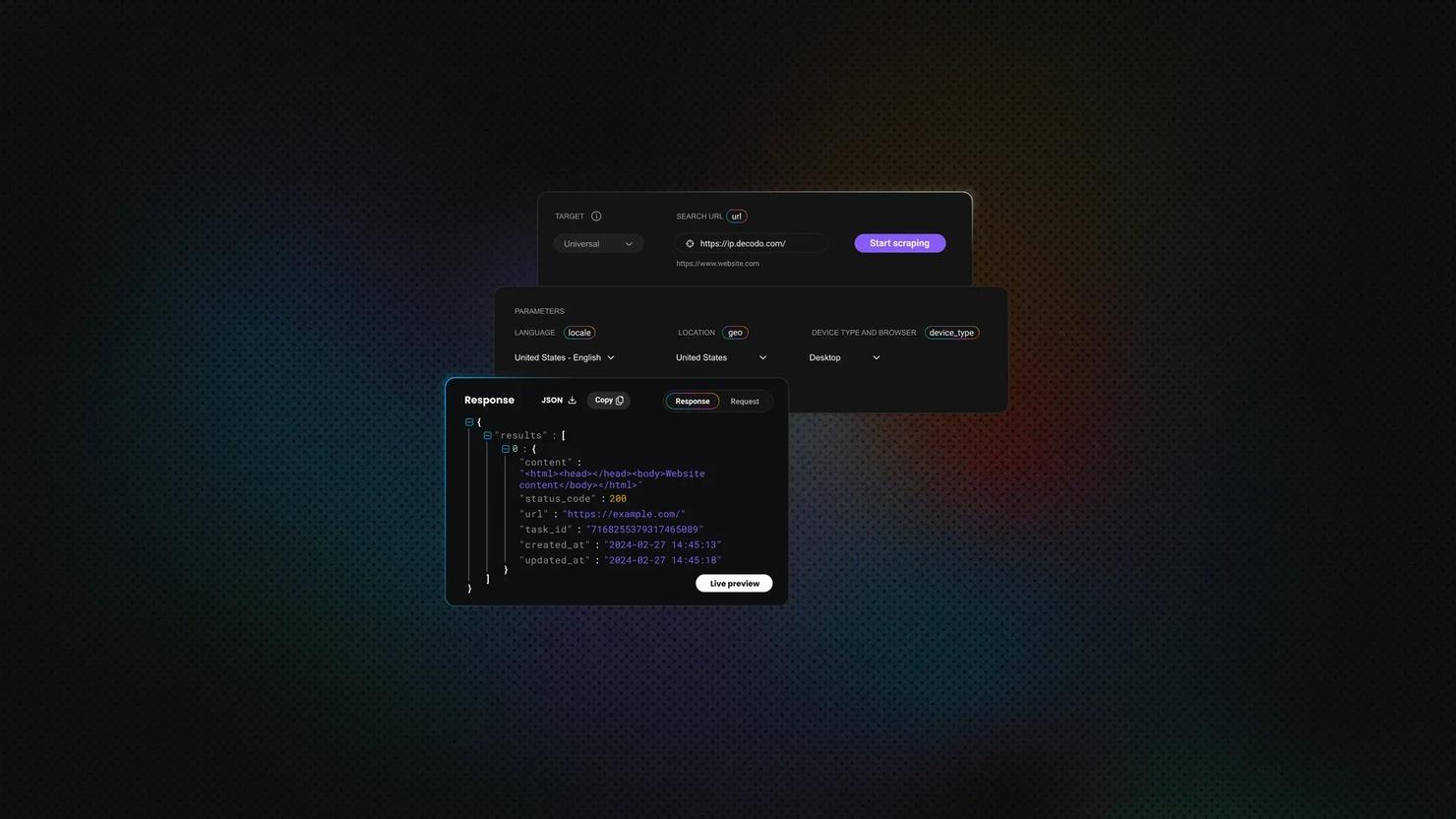

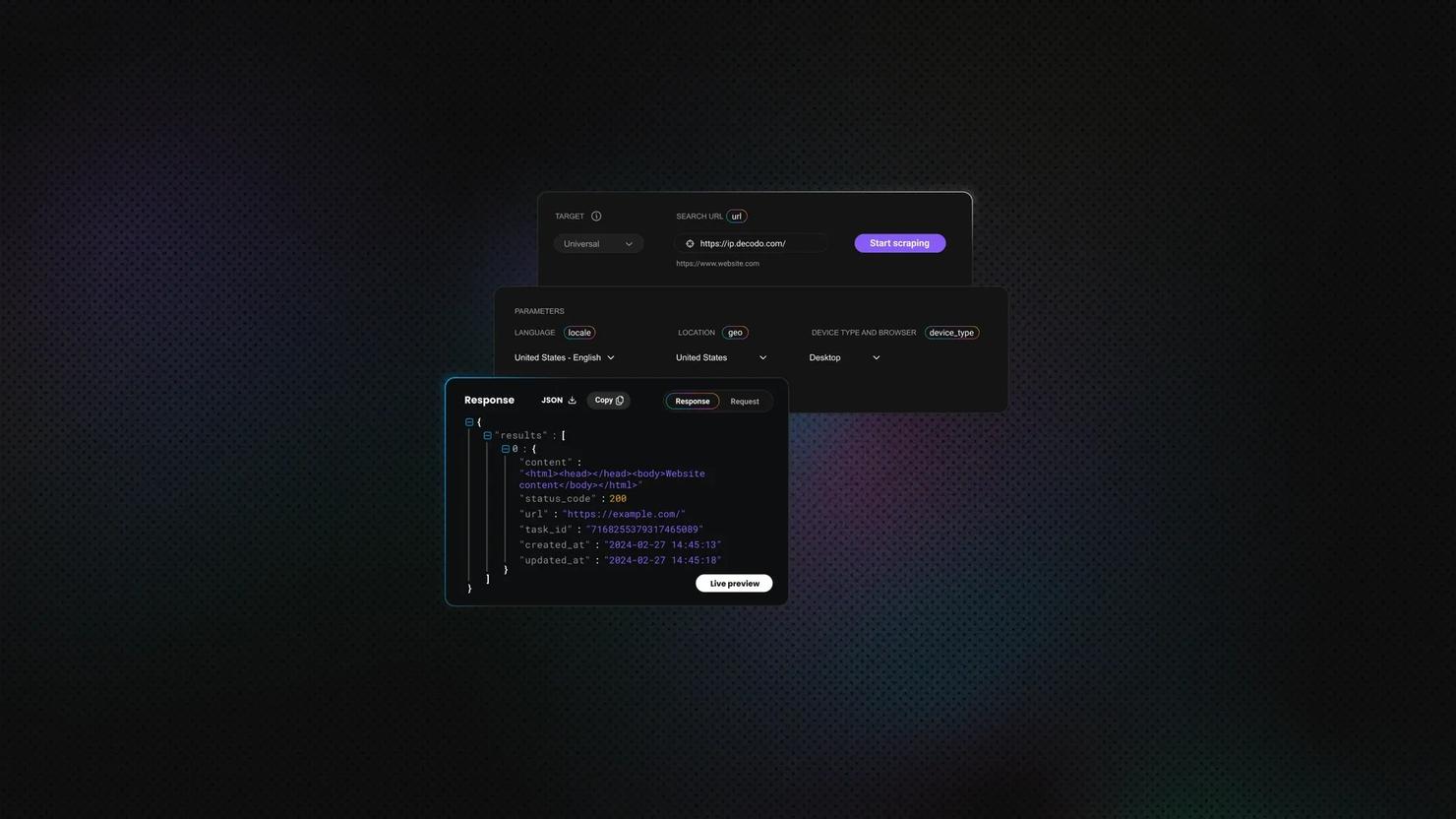

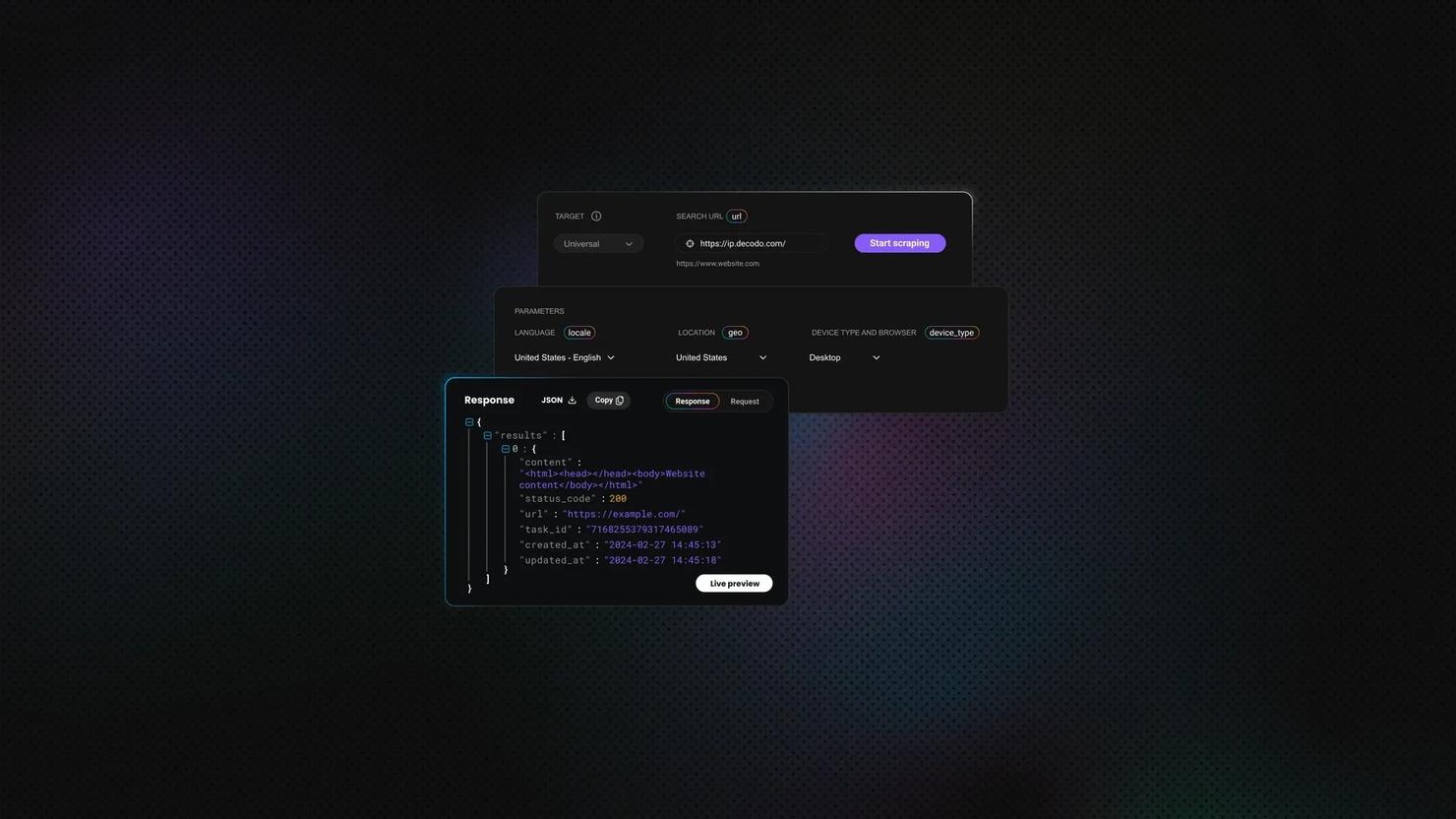

Data Collection

Data collection is vital across industries. It helps businesses understand the market, know their customers better, and adapt to their needs. Collection can be automated by scraping selected targets, which is especially useful for analyzing competitors, records, trends, and other data.

XPath Using Text: How to Select Elements by Text Value

HTML structure shifts constantly, but the visible text on a page tends to remain more stable. That stability is what makes text-based selectors useful in web scraping. This guide covers the core functions you need to work with text in XPath: text(), contains(), starts-with(), normalize-space(), and translate(), including where each one breaks and how to combine them to build selectors that survive page updates.

Lukas Mikelionis

Last updated: May 15, 2026

10 min read

Playwright XPath: How to Locate and Interact With Elements

If you're building a Playwright scraper and not using XPath, you're probably leaving your most precise location strategy on the table. Think of the DOM as a tree of nodes, and an XPath expression as the specific zip code to reach any node. In this article, we'll explain XPath fundamentals, how to construct XPath expressions, and how to interact with elements, including real-world examples.

Vilius Sakutis

Last updated: May 13, 2026

20 min read

JSON.parse() in JavaScript: A Complete Guide

Mykolas Juodis

Last updated: May 12, 2026

8 min read

Puppeteer vs. Selenium: Which Tool Should You Use for Web Scraping?

Mykolas Juodis

Last updated: May 07, 2026

12 min read

What Is a Mobile Proxy? How It Works, Uses, and When to Use One

Robertas Lisickis

Last updated: May 07, 2026

10 min read

jQuery Web Scraping: How To Extract Data From Web Pages

Zilvinas Tamulis

Last updated: May 07, 2026

12 min read

How to Send Basic Auth Credentials Using cURL

Vilius Sakutis

Last updated: May 05, 2026

11 min read

How to Scrape Websites with PowerShell: A Complete Guide

Justinas Tamasevicius

Last updated: May 04, 2026

12 min read

Top Python Scraping Libraries: Overview, Comparison, and How to Choose the Right One

Vilius Sakutis

Last updated: Apr 30, 2026

20 min read

How To Use ScrapeGraph AI for Web Scraping in 2026

Kipras Kalzanauskas

Last updated: Apr 30, 2026

20 min read

Golang Headless Browser: Complete chromedp Tutorial

Justinas Tamasevicius

Last updated: Apr 30, 2026

16 min read

Java Web Scraping Libraries: How to Choose and Use the Best Tools for Your Project

Vilius Sakutis

Last updated: Apr 30, 2026

17 min read

Wait for Page to Load in Beautiful Soup: Why It Fails and How to Fix It

Lukas Mikelionis

Last updated: Apr 30, 2026

9 min read

Puppeteer vs. Playwright: Which Tool Is Better for Web Scraping?

Puppeteer vs. Playwright is a real architectural decision for any production scraping project. The two libraries share a common origin: Playwright was built at Microsoft by engineers who previously worked on Puppeteer at Google. Yet they differ in browser coverage, language bindings, and scraping ergonomics. Performance, stealth, proxy integration, and parallel execution decide which tool fits your pipeline.

Justinas Tamasevicius

Last updated: Apr 24, 2026

8 min read

Apache Nutch Tutorial: Install, Crawl, Index, and Automate

Lukas Mikelionis

Last updated: Apr 24, 2026

15 min read

How to Use a Cloudflare Scraper for Data Extraction

Cloudflare protects over 20% of all websites, and its anti-bot system can shut your scraper down in seconds. A Cloudflare scraper is any tool or script that gets past those defenses to pull data from protected sites. This guide breaks down how Cloudflare spots bots, why most scrapers fail, and how to scrape with Decodo's Web Scraping API.

Mykolas Juodis

Last updated: Apr 23, 2026

7 min read

Web Scraping Without Getting Blocked: A Practical Guide for 2026

Web scraping without getting blocked is one of the hardest challenges you might face. Whether you’re a business conducting market research or a solopreneur working on your next big thing, most scrapers fail not because the code is wrong, but because websites now run layered detection that flags bots before a single byte of HTML is returned. This guide breaks down the detection layers (network, TLS, browser, and behavioral) and covers techniques for overcoming each.

Benediktas Kazlauskas

Last updated: Apr 23, 2026

12 min read

Wait for Page to Load in Playwright: A Practical Guide to Every Waiting Method

Dominykas Niaura

Last updated: Apr 23, 2026

6 min read