Web Scraping Without Getting Blocked: A Practical Guide for 2026

Web scraping without getting blocked is one of the hardest challenges in data collection. Whether you’re a business conducting market research or a solopreneur working on your next big thing, your scraper will most likely fail not because the code is wrong, but because websites now run layered detection that flags bots before a single byte of HTML is returned. This guide breaks down the four detection layers (network, TLS, browser, and behavioral) and explains how to get past each one.

Benediktas Kazlauskas

Last updated: Apr 23, 2026

TL;DR

Modern anti-bot systems are multi-layered and continuously updated. Here are the key takeaways:

- Modern websites block scrapers across 4 layers: network, TLS, browser, and behavioral

- Residential proxies defeat IP-level blocks; datacenter IPs are flagged by default on serious targets

- Header consistency and TLS fingerprint matching address the majority of detections before any browser emulation is needed

- Headless browsers should be a last resort, not a starting point – they're slow and resource-intensive

- ReCAPTCHA v3 is a risk scoring system, and good session hygiene can keep scores high without CAPTCHA solvers

- Always check robots.txt and Terms of Service before scraping, and avoid collecting personal data without a legal basis

How websites detect scrapers: the four-layer model

Before covering any technique, it's worth building a clear mental map of how detection actually works. Most competitor articles skip straight to tips. Understanding the detection architecture first helps explain why each countermeasure is necessary – and which layer to debug when your scraper gets blocked.

Layer #1: Network

The first check happens at the IP level, before the server reads a single header. Anti-bot systems query IP reputation databases to score incoming requests. Autonomous System Numbers (ASNs) belonging to cloud providers – AWS, GCP, Azure – are blocklisted by default on high-security sites because legitimate end users almost never browse from datacenter ranges. Request volume thresholds also trigger blocks at this layer, regardless of how well-crafted the request looks.

Layer #2: TLS

The TLS fingerprint is exposed during the TLS handshake, before any HTTP header is transmitted. The ClientHello message, which opens the negotiation of the encrypted session, lists the client's TLS version, cipher suites, extensions, and supported curves. Anti-bot systems hash those fields into a fingerprint such as JA3 or JA4. Python's requests library, curl, and other non-browser HTTP clients each produce recognizable JA3 values that anti-bot systems identify in milliseconds. A scraper with a perfect Chrome User-Agent can still be blocked at this layer because its TLS handshake signature doesn't match Chrome's.
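To make the fingerprint idea concrete, here is a minimal sketch of how a JA3 hash is derived: five ClientHello fields are written as decimal values, joined with dashes inside each field and commas between fields, and the resulting string is MD5-hashed. The numeric values below are illustrative only and do not correspond to any real client; real implementations also strip GREASE values before hashing.

```python
import hashlib


def ja3_hash(tls_version, ciphers, extensions, curves, point_formats):
    """Return (ja3_string, md5_hex) for the given ClientHello fields."""
    fields = [
        str(tls_version),
        "-".join(map(str, ciphers)),
        "-".join(map(str, extensions)),
        "-".join(map(str, curves)),
        "-".join(map(str, point_formats)),
    ]
    ja3_string = ",".join(fields)
    return ja3_string, hashlib.md5(ja3_string.encode("ascii")).hexdigest()


# Illustrative values: two clients that differ only in cipher-suite order.
client_a = ja3_hash(771, [4865, 4866, 4867], [0, 23, 65281], [29, 23, 24], [0])
client_b = ja3_hash(771, [4866, 4865, 4867], [0, 23, 65281], [29, 23, 24], [0])

print(client_a[0])
print("same fingerprint:", client_a[1] == client_b[1])
```

Because the hash covers the order of cipher suites and extensions, two clients that support the same features but list them differently end up with different fingerprints. That is why changing the User-Agent alone does not help at this layer.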

Layer #3: Browser

Once a request passes the network and TLS checks, JavaScript challenges probe the browser environment. Key signals include the navigator.webdriver property (set to true in automation contexts), canvas and WebGL rendering signatures that identify GPU configurations, AudioContext behavior, font enumeration, plugin lists, and screen resolution anomalies. Real browsers leave consistent, device-specific fingerprints. Headless browsers don't – unless specifically patched.

Layer #4: Behavioral

Behavioral analysis operates at the session level. Systems like PerimeterX/HUMAN track inter-request timing patterns, scroll depth, mouse trajectory, navigation linearity, and session duration across an entire browsing session. A scraper that requests pages at perfectly uniform 2-second intervals may pass all other checks and still be flagged here.

The key implication: a scraper blocked despite good proxies and realistic headers is likely failing at the TLS or browser layer, not the network layer. That difference shapes which countermeasure to apply first.

IP rotation and proxy usage

The network layer is the first line of defense. Getting past it requires understanding not just proxy types, but how IP reputation scoring works and how rotation strategy affects detection risk.

Why a single IP fails

A single IP accumulates rate-limit counters and reputation scores with every request it makes to a target domain. Datacenter IP ranges from AWS, GCP, and Azure are on prebuilt blocklists used by anti-bot vendors. A request from an AWS IP to a high-security target is often blocked before the server reads any content. Even residential IPs can accumulate a negative reputation from previous scraping activity by other users sharing the same address, so you need to choose a reputable provider that carefully checks the IPs’ quality.

Proxy type trade-offs

Not all proxies carry the same trust score with anti-bot systems. The main types:

- Datacenter proxies are fast and cheap, but ASN ranges are trivially identifiable. Use only for low-security targets

- Residential proxies come from real devices connected to local networks, so the ASN is indistinguishable from organic traffic, making the detection risk significantly lower

- Static residential (ISP) proxies originate from ISPs but have high stability. They’re useful for account-based scraping that requires a consistent identity across sessions

- Mobile proxies operate through carrier NAT (CGNAT), where many real users share a single public IP. These carry the highest trust scores but come at a premium

There are also solutions like Site Unblocker that combine multiple proxy types with built-in fingerprint management, automatic retry logic, and CAPTCHA solving, so you don't have to manage rotation manually.

A failure mode competitors rarely discuss – shared residential proxy pools can contain previously flagged IPs. Pool size and IP freshness matter as much as proxy type. Smaller, exhausted pools increase the chance of cycling onto a compromised address.

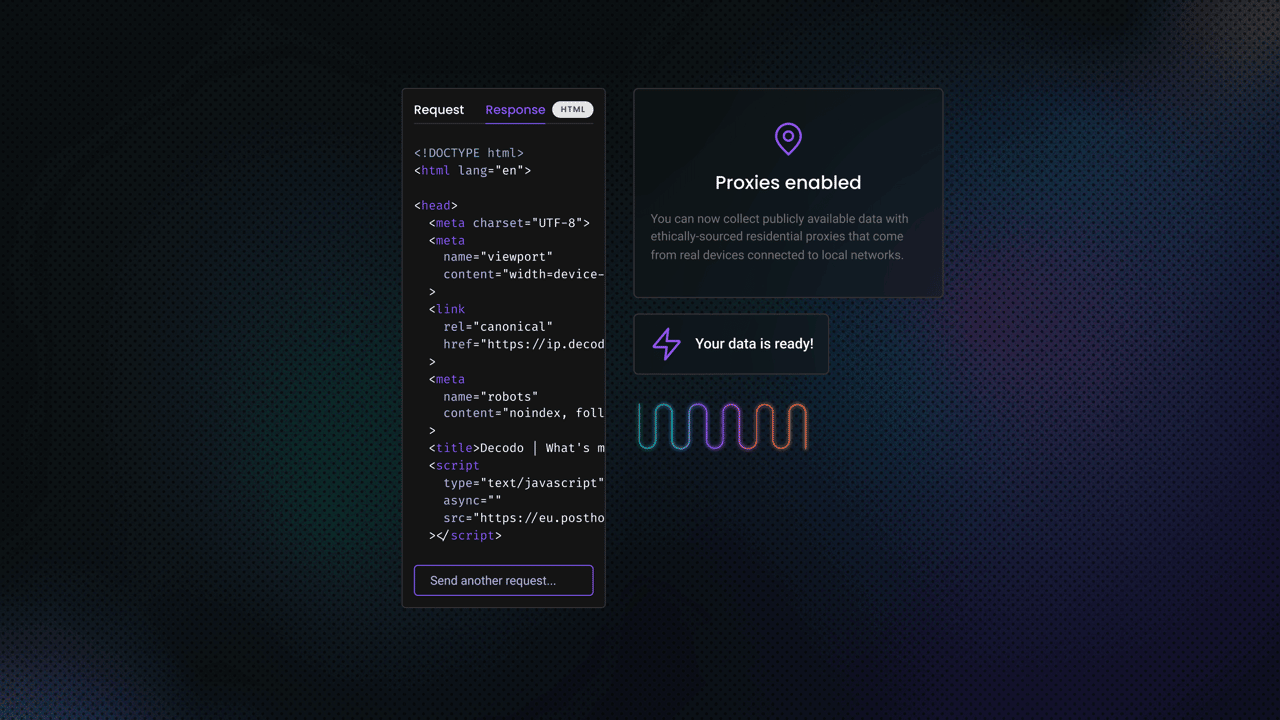

Implementing rotation in Python

Rotation through a residential proxy endpoint handles most scenarios. The Decodo gateway rotates the exit IP automatically on each request, so no client-side cycle pool is needed.

Install requests first with pip install requests. Our Python Requests guide walks through this part step by step.
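A minimal sketch of routing requests through a rotating gateway could look like the following. The gateway host, port, and credentials are placeholders rather than real provider values, and httpbin.org/ip is used here only as a convenient IP echo service.

```python
from urllib.parse import quote


def build_proxies(username, password, host, port):
    """Return a requests-style proxies mapping for an authenticated HTTP proxy."""
    # Percent-encode credentials so characters like "@" or ":" don't break the URL.
    auth = f"{quote(username, safe='')}:{quote(password, safe='')}"
    proxy_url = f"http://{auth}@{host}:{port}"
    return {"http": proxy_url, "https": proxy_url}


def fetch_exit_ip(proxies, timeout=15):
    """Ask an IP echo service which address the target would see."""
    import requests  # third-party: pip install requests

    response = requests.get("https://httpbin.org/ip", proxies=proxies, timeout=timeout)
    response.raise_for_status()
    return response.json()["origin"]


# Placeholder gateway and credentials: substitute your provider's values.
proxies = build_proxies("USERNAME", "PASSWORD", "gate.example.com", 7000)
```

Calling fetch_exit_ip(proxies) a few times in a row against a rotating gateway should print a different address on most calls, which is a quick way to confirm rotation is active before pointing the scraper at a real target.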

When a target requires a consistent identity across paginated requests, use a sticky session: append a session identifier to the proxy username. The same session ID returns the same exit IP for up to 10 minutes.
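The exact username syntax is provider-specific, so the "-session-<id>" suffix below is a hypothetical format used only for illustration; check your provider's documentation for the real one. The sketch shows the general pattern of generating one random session ID per logical flow and reusing it for every request in that flow.

```python
import secrets


def new_session_id(nbytes=4):
    """Random hex ID; generate one per logical flow (e.g. one paginated listing)."""
    return secrets.token_hex(nbytes)


def sticky_username(base_username, session_id):
    """Append a session marker to the proxy username.

    The "-session-<id>" suffix is a hypothetical format, not a documented one.
    """
    return f"{base_username}-session-{session_id}"


session_id = new_session_id()
username = sticky_username("USERNAME", session_id)
print(username)
```

Reusing the same username for pages 1..N of a listing keeps the exit IP stable for that flow, while a fresh session ID for the next flow moves it to a new IP.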

Geographic context matching is another detection vector that's easy to overlook. Routing through US residential IPs while sending a mismatched Accept-Language: fr-FR header is a detectable inconsistency. Always match the language and locale headers to the proxy country.

At the point where plain datacenter proxies are blocked on targets like Product Hunt or similar high-security sites, Decodo’s residential proxies provide geo-targeted rotation across a large ethically-sourced pool, significantly reducing the chance of cycling onto flagged IPs.

User-agent and request header management

After the IP check, the next line of inspection is header consistency. Default HTTP client headers are immediately identifiable, and a mismatched header set can expose a scraper even if the IP looks clean.

What default headers reveal

A default requests call sends a User-Agent header of python-requests/2.x.x, which is flagged on sight. Even after replacing the User-Agent with a Chrome string, most scrapers omit the full header set that Chrome sends, and the absence of those headers is itself a fingerprint.

The full Chrome header set

A realistic Chrome request includes these headers, in the correct order (header order is itself part of the fingerprint):

- User-Agent: a current Chrome version string, matched to the correct OS

- Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.7

- Accept-Language: matched to the proxy country, e.g., en-US,en;q=0.9

- Accept-Encoding: gzip, deflate, br

- Sec-Fetch-* headers: Sec-Fetch-Dest, Sec-Fetch-Mode, Sec-Fetch-Site, Sec-Fetch-User

- Upgrade-Insecure-Requests: 1

- Referer: a plausible upstream URL, such as a Google search results page for entry to deep URLs

A macOS Chrome User-Agent paired with Sec-CH-UA-Platform: "macOS" is internally consistent. The same User-Agent paired with Sec-CH-UA-Platform: "Windows" is a mismatch. Internal header consistency matters as much as the individual header values.

Practical code: Building a realistic header dict

Use httpbin.org/headers to verify exactly what your scraper is sending. The endpoint echoes the request headers back as JSON, which makes it easy to confirm the set is complete before targeting a real site.
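As a rough sketch, a header builder and an echo check might look like this. The Chrome major version is a parameter so it can be kept current, and the Windows platform token in the User-Agent is an arbitrary choice for the example. Note that requests merges these headers with its own defaults, so the order on the wire may not match the dict order exactly.

```python
def chrome_headers(chrome_major, accept_language="en-US,en;q=0.9"):
    """Return a Chrome-like header set for a top-level page navigation."""
    return {
        "User-Agent": (
            "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
            f"(KHTML, like Gecko) Chrome/{chrome_major}.0.0.0 Safari/537.36"
        ),
        "Accept": (
            "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,"
            "image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.7"
        ),
        "Accept-Language": accept_language,  # match this to the proxy country
        # Advertising "br" assumes a Brotli decoder is installed for requests.
        "Accept-Encoding": "gzip, deflate, br",
        "Sec-Fetch-Dest": "document",
        "Sec-Fetch-Mode": "navigate",
        "Sec-Fetch-Site": "none",
        "Sec-Fetch-User": "?1",
        "Upgrade-Insecure-Requests": "1",
    }


def echo_headers(headers, timeout=15):
    """Send the headers to httpbin and return what the server received."""
    import requests  # third-party: pip install requests

    response = requests.get("https://httpbin.org/headers", headers=headers, timeout=timeout)
    response.raise_for_status()
    return response.json()["headers"]
```

Saved as check_headers.py with a final line that prints echo_headers(chrome_headers(<current major version>)), the script shows the header set exactly as a server receives it.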

Run the script with python check_headers.py. The output shows what the target server receives:

Rotate User-Agents across realistic OS/browser combinations. A Chrome 2021 UA string in 2026 is a red flag in itself. Keep the pool current by checking the latest Chrome release versions.

Request timing and behavior randomization

Timing is a behavioral signal. A scraper that fires requests at exactly uniform intervals is statistically distinguishable from human browsing, even if every other signal looks clean.

Why fixed delays fail

Statistical analysis of inter-request timing over a full session exposes mechanical patterns. A human browsing a site will spend varying amounts of time reading each page. Delays cluster around a realistic mean but occasionally run long when the content is dense. A uniform delay of exactly 2 seconds on every request produces a variance of zero, which organic traffic essentially never shows.

Gaussian delays vs. uniform random

A Gaussian (normal) distribution centered around a realistic mean, say, 3 to 5 seconds, produces human-like variance where most delays cluster near the mean but occasionally run longer. Uniform random delays still produce a recognizable distribution. Gaussian is closer to actual human reading patterns.

Intra-page behavior with Playwright

When using a headless browser, simulate realistic intra-page behavior before extracting data. Scroll the page incrementally, move the mouse to interactive elements before clicking, and vary the entry point of each crawl rather than navigating directly to the target URL every time. Install Playwright with pip install playwright && playwright install chromium – the Playwright web scraping guide covers setup in detail.
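The incremental scrolling described above can be sketched as follows. The target URL and CSS selector are supplied by the caller, and the viewport size, step sizes, and pause ranges are assumptions chosen for illustration.

```python
import random


def plan_scroll(page_height, viewport_height, rng=random):
    """Split the scrollable distance into uneven downward steps (in pixels)."""
    deltas, position = [], 0
    remaining = max(0, page_height - viewport_height)
    while position < remaining:
        step = max(1, int(viewport_height * rng.uniform(0.3, 0.9)))
        step = min(step, remaining - position)
        deltas.append(step)
        position += step
    return deltas


def scroll_and_extract(url, selector, limit=3):
    """Load a page, scroll it in uneven steps, then return the first matches."""
    from playwright.sync_api import sync_playwright  # pip install playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        page = browser.new_page(viewport={"width": 1366, "height": 768})
        page.goto(url, wait_until="domcontentloaded")
        height = page.evaluate("document.body.scrollHeight")
        for delta in plan_scroll(height, 768):
            page.mouse.wheel(0, delta)
            page.wait_for_timeout(random.uniform(300, 1200))  # milliseconds
        texts = page.locator(selector).all_inner_texts()[:limit]
        browser.close()
        return texts
```

Keeping the step planning separate from the browser code makes it easy to check the scroll pattern without launching Chromium.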

Run the script with python scroll_scrape.py. Sample output shows the first 3 extracted quotes from the page:

Off-peak crawling is a supplementary strategy: for non-time-sensitive data, scheduling crawls around midnight in the server's local time zone reduces competition for shared resources and often means lower detection thresholds.

Headless browsers and browser fingerprinting

When a target runs JavaScript challenges, plain HTTP clients fail. A real browser engine is required. However, default headless Chrome leaks identifying signals that anti-bot systems detect within the first script execution cycle.

What default headless Chrome leaks

Out of the box, headless Chrome exposes:

- navigator.webdriver = true: readable by any JavaScript running on the page

- HeadlessChrome in the User-Agent string

- Missing chrome.runtime object, which real Chrome always exposes

- Abnormal screen dimensions (0x0 in some configurations)

- Empty plugin list, while real browsers expose several entries

playwright-stealth: what it patches and what it misses

The playwright-stealth library patches the most commonly probed signals: navigator.webdriver, the UA string, chrome.runtime, and plugin lists. This defeats most automated detection checks. Advanced fingerprinting systems that compare canvas rendering output, the WebGL GL_RENDERER string, and AudioContext oscillator signatures against device baselines can still identify patched headless browsers. These are signals that playwright-stealth doesn't address by default.

The full fingerprint surface

Signals that advanced systems probe beyond the basics:

- Canvas rendering output: different GPU hardware renders identical canvases with subtle pixel-level differences

- WebGL GL_RENDERER string: identifies the specific GPU, which in headless environments often reads as SwiftShader or llvmpipe (software rendering)

- AudioContext oscillator behavior: hardware-dependent acoustic fingerprinting

- Font enumeration: real devices have a specific set of installed fonts that varies by OS and configuration

- Screen color depth and pixel ratio: headless environments default to values that don't match common monitor configurations

Camoufox as an alternative

Camoufox is a Firefox-based headless browser tool that patches fingerprinting signals at a lower level than Playwright stealth plugins. It's compatible with the Playwright API, which means existing Playwright web scraping code can use it with minimal changes. Its key advantage is targeting CreepJS-class detection – a class of browser fingerprinting that Chromium-based tools struggle to defeat because Chrome's headless mode shares fingerprinting characteristics with its non-headless counterpart at the engine level.

When to use headless browsers and when not to

Headless browsers are slower and memory-intensive. Running more than 20 concurrent instances is impractical on most infrastructure. Plain HTTP clients with a browser-matching TLS stack, such as curl_cffi in its Chrome impersonation mode, solve more detection cases than developers assume, at a fraction of the resource cost. Reach for a headless browser only when JavaScript rendering is genuinely required to load the target data.
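The decision rule above can be written down as a small helper, followed by a sketch of a TLS-impersonating request with curl_cffi. The two boolean inputs are things you determine by inspecting the target (for example, whether the data is present in the raw HTML); the client names returned are just labels for this example.

```python
def choose_client(needs_js_rendering, tls_fingerprinted):
    """Pick the cheapest client that can plausibly pass the target's checks."""
    if needs_js_rendering:
        return "headless-browser"  # slowest option; only when rendering is required
    if tls_fingerprinted:
        return "curl_cffi"  # plain HTTP with a browser-like ClientHello
    return "requests"  # low-security targets


def fetch_with_chrome_tls(url, timeout=15):
    """Fetch a URL using curl_cffi's Chrome impersonation."""
    from curl_cffi import requests as curl_requests  # pip install curl_cffi

    response = curl_requests.get(url, impersonate="chrome", timeout=timeout)
    return response.status_code, response.text
```

Starting every new target at the cheapest tier and escalating only after a confirmed block keeps infrastructure costs proportional to the target's actual defenses.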

CAPTCHA and anti-bot evasion

When collecting publicly available data, always remember – CAPTCHAs are a checkpoint, not a wall. Understanding the architecture of each CAPTCHA type determines the most efficient resolution path, and in many cases, good session hygiene avoids the CAPTCHA entirely.

CAPTCHA taxonomy

The 4 main CAPTCHA architectures work differently and require different approaches:

- reCAPTCHA v3: a silent risk scoring system with no visual challenge. A score between 0.0 (bot) and 1.0 (human) is calculated from session signals. Good headers, realistic timing, and a residential IP keep the score above the threshold without any solver intervention

- reCAPTCHA v2: the familiar image grid challenge, triggered when v3 scores are low or as a standalone check. Requires a CAPTCHA solver service

- hCaptcha: similar to reCAPTCHA v2 in architecture. Image selection challenges are compatible with most solver services

- Cloudflare Turnstile: a JavaScript probe that requires full browser execution. Plain HTTP clients cannot pass it. Switching to a headless browser or a managed solution is the only viable path

The reCAPTCHA v3 insight most articles miss

ReCAPTCHA v3 presents no visual challenge to solve. The score is a function of how human the entire session looks – headers, timing, IP reputation, navigation patterns. A scraper with residential proxies, realistic headers, and Gaussian timing can often maintain a score above 0.7 and avoid triggering any challenge at all. This is the cheapest and most scalable CAPTCHA solution available.

Automated CAPTCHA solver integration

When a visual CAPTCHA is unavoidable, third-party services like 2Captcha receive the challenge parameters (the site key and page URL), return a solved token, and charge per solution (typically $1 to $3 per 1,000 solves). Latency typically runs 10 to 30 seconds per solve, which is acceptable for low-volume scraping but expensive for high-volume pipelines.

The returned token is the value injected into the g-recaptcha-response form field before submission. Google's public reCAPTCHA demo page is a convenient live endpoint for testing solver integration end-to-end.
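The submit-then-poll flow can be sketched without committing to a specific HTTP client. The field names and the "CAPCHA_NOT_READY" marker below assume 2Captcha's legacy in.php / res.php JSON interface; treat them as assumptions and confirm against the provider's current documentation. The fetch_result callable is a hypothetical hook that performs one status request and returns the decoded JSON.

```python
import time

NOT_READY = "CAPCHA_NOT_READY"  # assumed "still solving" marker (spelling as used by the service)


def submit_payload(api_key, site_key, page_url):
    """Form fields for submitting a reCAPTCHA v2 job (assumed field names)."""
    return {
        "key": api_key,
        "method": "userrecaptcha",
        "googlekey": site_key,
        "pageurl": page_url,
        "json": 1,
    }


def poll_for_token(fetch_result, interval=5.0, max_wait=120.0, sleep=time.sleep):
    """Call fetch_result() until it yields a token, an error, or max_wait elapses."""
    waited = 0.0
    while waited < max_wait:
        sleep(interval)
        waited += interval
        result = fetch_result()
        if result.get("status") == 1:
            return result["request"]  # the solved token
        if result.get("request") != NOT_READY:
            raise RuntimeError(f"solver error: {result.get('request')}")
    raise TimeoutError("CAPTCHA was not solved within max_wait seconds")
```

Bounding the wait with max_wait matters in practice: at 10 to 30 seconds per solve, an unbounded poll loop can stall an entire pipeline on a single failed job.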

When CAPTCHA volume exceeds the economics of per-solve pricing, Decodo’s Site Unblocker abstracts CAPTCHA solving, TLS fingerprint matching, and anti-bot evasion into a single endpoint, removing per-solve overhead entirely.

Honeypot and trap avoidance

Honeypot links are traps designed to catch automated crawlers that follow every link without checking visibility. Following a honeypot immediately triggers a block or flags the IP. A comprehensive honeypot taxonomy goes well beyond the CSS-hidden links most articles describe.

Types of honeypot traps

The main honeypot patterns in production use:

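- CSS-hidden links: display: none, visibility: hidden, opacity: 0, or positioned off-screen. Checking computed styles in a real browser is more reliable than checking raw attributes. Note that Playwright's page.is_visible() catches display: none and visibility: hidden but treats opacity: 0 and off-screen elements as visible, so check those separately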

- Color-camouflaged links: link text set to the page background color (white-on-white). Present in the DOM and followable, but invisible to human users

- Hidden form fields: an input field that a human never sees or fills in, but an automated form submitter will. Touching it triggers an immediate block. Any input with display: none or a name like website, url, or phone in a non-contact-form context should be skipped

- Canary tokens and tarpit pages: links that resolve to monitored endpoints, or pages that serve artificially slow responses to drain bot resources. Compare link href patterns to the site's known URL structure before following

Practical filter: is_safe_link()

A reusable filter should check computed visibility, color contrast, and href relevance to the crawl scope before following any link.
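One way to sketch such a filter is as a pure function over values collected from the browser. The shape of the style dict (display, visibility, opacity, color, background, box) is an assumption made for this example; in Playwright the values could be gathered with getComputedStyle via locator.evaluate() and with locator.bounding_box().

```python
from urllib.parse import urljoin, urlparse


def is_safe_link(href, style, base_url, allowed_prefixes=("/",)):
    """Return True only for visible, in-scope links.

    style is a dict of computed values; "box" is (x, y, width, height) or None.
    """
    if style.get("display") == "none" or style.get("visibility") == "hidden":
        return False
    if float(style.get("opacity", 1)) == 0:
        return False
    box = style.get("box")
    if box is not None:
        x, y, width, height = box
        # Zero-size or positioned entirely above/left of the page origin.
        if width <= 0 or height <= 0 or x + width <= 0 or y + height <= 0:
            return False
    color, background = style.get("color"), style.get("background")
    if color is not None and color == background:
        return False  # text camouflaged against its background
    target = urlparse(urljoin(base_url, href))
    if target.scheme not in ("http", "https"):
        return False
    if target.netloc != urlparse(base_url).netloc:
        return False  # outside the crawl's host
    return any(target.path.startswith(prefix) for prefix in allowed_prefixes)
```

The color check is deliberately simple: it compares the link's text color with a background value supplied by the caller. Since many links have a transparent background of their own, the caller would need to resolve the effective background from ancestor elements for this check to be meaningful.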

Pair this filter with a check against robots.txt disallow patterns to skip paths the site has marked off-limits.
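Python's standard library already includes a robots.txt parser, so the check needs no extra dependency. The sketch below parses an inline example file; the rules and the "my-scraper" user agent name are made up for illustration. In a real crawl the text would come from the target's /robots.txt.

```python
from urllib.robotparser import RobotFileParser


def load_rules(robots_txt):
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules("""User-agent: *
Disallow: /private/
Crawl-delay: 5
""")

print(rules.can_fetch("my-scraper", "https://example.com/private/report"))  # False
print(rules.can_fetch("my-scraper", "https://example.com/catalog/"))        # True
print(rules.crawl_delay("my-scraper"))                                      # 5
```

Running can_fetch() on each candidate URL before queueing it keeps disallowed paths out of the crawl frontier entirely, and crawl_delay() provides a floor for the delay between requests.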

Identifying and respecting rate limits

Rate limiting is distinct from being blocked. It's a server-side signal sent before a harder block occurs. Most guides say to add delays without explaining how to read the rate-limit signals the server is broadcasting in its response headers.

Reading rate-limit headers

Servers often broadcast their limits in response headers. Retry-After is part of the HTTP standard, while the X-RateLimit-* family is a widely used convention rather than a standard:

- Retry-After: how long to wait before retrying, given either as a number of seconds or as an HTTP date. Respect this value before attempting exponential backoff

- X-RateLimit-Limit: the total number of requests allowed in the current window

- X-RateLimit-Remaining: requests left in the current window

- X-RateLimit-Reset: Unix timestamp when the window resets (some APIs send the number of seconds remaining instead)

- RateLimit-* headers (IETF draft standard; RFC 6585 itself only defines the 429 status code): a newer standardized format increasingly adopted by major APIs

Soft blocks disguised as 200 OK

Some sites return an HTTP 200 with a CAPTCHA page or an empty body instead of a 429. Checking the status code alone isn't enough. Always validate the response content by checking for expected selectors or a minimum content length before treating a response as successful.
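A minimal content check along these lines might look as follows. The block-page phrases, the minimum length, and the required marker are illustrative and need tuning per target, since a phrase such as "captcha" can also appear on legitimate pages that embed a CAPTCHA widget.

```python
BLOCK_MARKERS = ("captcha", "access denied", "unusual traffic")  # illustrative phrases


def looks_successful(status_code, body, required_marker, min_length=500):
    """Return True only if the response looks like real content.

    required_marker is a string the genuine page always contains,
    such as a CSS class used by the data you are extracting.
    """
    if status_code != 200:
        return False
    if len(body) < min_length:
        return False
    lowered = body.lower()
    if any(marker in lowered for marker in BLOCK_MARKERS):
        return False
    return required_marker in body
```

Treating a failed check the same way as a 429 (back off, rotate the session, retry) prevents soft-blocked pages from being silently stored as empty results.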

Exponential backoff with jitter
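A minimal sketch of the technique follows, using the "full jitter" variant in which each wait is drawn uniformly between zero and an exponentially growing ceiling. A server-provided Retry-After value takes priority over the computed delay. The base, cap, and the up-to-one-second padding are illustrative defaults.

```python
import random
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def retry_after_seconds(value, now=None):
    """Parse Retry-After (delta-seconds or HTTP date); None if absent or invalid."""
    if not value:
        return None
    value = value.strip()
    if value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())


def backoff_delay(attempt, retry_after=None, base=1.0, cap=60.0, rng=random):
    """Seconds to wait before retry number `attempt` (0-based)."""
    if retry_after is not None:
        # The server said how long to wait; add a little jitter on top.
        return retry_after + rng.uniform(0, 1)
    return rng.uniform(0, min(cap, base * 2 ** attempt))
```

The jitter prevents many workers that were throttled at the same moment from retrying in lockstep, which would otherwise recreate the burst that triggered the limit.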

Polite scraping is also a practical operational stance, not just an ethical one. Staying well within observed rate limits and honoring the Crawl-delay value in robots.txt directly reduces block rates, which lowers maintenance overhead.

Reverse engineering and anti-bot system analysis

This is the most advanced diagnostic tool in a scraper developer's kit. Reverse engineering anti-bot JavaScript isn't about circumventing every site – it's about diagnosing why a specific scraper is being detected. The methodology is systematic and analytical.

Identifying which vendor is protecting the target

Response headers and cookies reveal the anti-bot vendor:

- cf-ray header: Cloudflare

- x-datadome-requestid header: DataDome

- _abck cookie: Akamai Bot Manager

- _px cookie: PerimeterX/HUMAN

JavaScript injection patterns in the page source also indicate the vendor. Each vendor's script has a recognizable structure under browser DevTools.
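The header and cookie markers listed above translate directly into a lookup helper. This sketch checks only those four markers and makes no claim to be exhaustive; a target can also sit behind more than one vendor at once.

```python
def detect_vendors(headers, cookie_names):
    """Return the anti-bot vendors suggested by response headers and cookie names."""
    header_names = {name.lower() for name in headers}
    vendors = []
    if "cf-ray" in header_names:
        vendors.append("Cloudflare")
    if "x-datadome-requestid" in header_names:
        vendors.append("DataDome")
    if "_abck" in cookie_names:
        vendors.append("Akamai Bot Manager")
    if any(name.startswith("_px") for name in cookie_names):
        vendors.append("PerimeterX/HUMAN")
    return vendors


print(detect_vendors({"CF-RAY": "abc123", "Content-Type": "text/html"}, {"_abck", "_px3"}))
```

With requests, the inputs would typically be response.headers and the keys of response.cookies. Logging the detected vendor alongside each block makes it easier to see which protection layer a failing target uses.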

Reading obfuscated anti-bot JavaScript

Use browser DevTools to set breakpoints on anti-bot script execution. The sensor data collection function, the one that assembles the fingerprint payload, is identifiable by the volume of signals it reads: canvas, fonts, timing arrays, and screen dimensions. Observing what it collects doesn't require defeating it. Understanding which signals matter informs which patches to apply.

Tracing the Akamai _abck cookie flow

The Akamai _abck cookie is generated client-side from sensor data and validated server-side. The server rejects a cookie that was generated by a different fingerprint than the current request. Simply copying the cookie from a valid browser session doesn't work because it's bound to the session's specific sensor fingerprint. Understanding this flow explains why the fix requires spoofing the sensor data collection, not the cookie value itself.

Network tab analysis for hidden API endpoints

Watching XHR and fetch calls in the Network tab during page interaction often reveals JSON endpoints the site uses internally – paths like /api/v2/products or /graphql. These endpoints often carry lower anti-bot scrutiny than the rendered HTML page and return structured data that's easier to parse.

When reverse engineering is the wrong approach

Some anti-bot systems update continuously. Cloudflare Turnstile, for example, receives frequent updates that can break reverse-engineered evasion logic overnight. Recognizing when the ongoing maintenance cost exceeds the cost of switching to a managed solution is a practical engineering judgment, not a failure.

Leveraging APIs and alternative data sources

Before building a complex scraper against a heavily protected target, check whether the target exposes an unofficial JSON API that its frontend already consumes. This is often the path of least resistance: no HTML parsing, lower anti-bot scrutiny, and structured data.

The discovery workflow

Open DevTools Network tab, interact with the page (scroll, trigger filters, click load-more buttons), and filter XHR/fetch calls. Most modern single-page applications load data from dedicated API paths. A GraphQL endpoint is particularly valuable – a single query can return deeply structured data that would require multiple scraped pages to reconstruct.

Extracting authentication tokens

Many internal APIs require a Bearer token or a CSRF token obtained from the initial page load. Playwright can capture this token before switching to direct API calls.
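One way to sketch the capture step is to listen for outgoing requests while the page loads and read the Authorization header from the first call that hits the API. The page URL and the API path fragment are caller-supplied assumptions about the target; a CSRF token sent in a custom header could be captured the same way by reading a different header name.

```python
def bearer_from_headers(headers):
    """Extract the token from an 'authorization: Bearer <token>' header, if present."""
    value = headers.get("authorization", "")
    scheme, _, token = value.partition(" ")
    token = token.strip()
    return token if scheme.lower() == "bearer" and token else None


def capture_bearer_token(page_url, api_path_fragment):
    """Load a page in Playwright and return the first Bearer token sent to the API."""
    from playwright.sync_api import sync_playwright  # pip install playwright

    captured = []

    def on_request(request):
        # Playwright exposes request header names in lower case.
        if api_path_fragment in request.url:
            token = bearer_from_headers(request.headers)
            if token:
                captured.append(token)

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        page = browser.new_page()
        page.on("request", on_request)
        page.goto(page_url, wait_until="networkidle")
        browser.close()
    return captured[0] if captured else None
```

Once captured, the token can be reused in a plain HTTP client until it expires, so the browser is only needed for the initial handshake rather than for every data request.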

Official APIs and the hybrid approach

Before scraping at all, verify whether the target offers an official API. Twitter/X, Reddit, LinkedIn, and most major eCommerce platforms provide API access. Pre-scraped datasets cover many common use cases without any scraping overhead.

The hybrid approach works well when an official or unofficial API covers most fields but leaves gaps. Use the API for structured fields and fall back to HTML parsing only for data that the API doesn't expose.

For targets where direct scraping is impractical at scale, Decodo’s Web Scraping API is one of the best choices. It handles the entire anti-bot stack for you (proxy rotation, fingerprint management, JavaScript rendering, and CAPTCHA solving), so you only deal with clean, structured output. The free plan gives you access to over 100 ready-made scraping templates for popular targets like Google, Amazon, and real estate listings, plus a pool of 125M+ ethically sourced IPs across 195+ locations to keep block rates low.

Legal and ethical considerations

Web scraping isn't illegal by default, but there are no scraping-specific laws either. Instead, it falls under a patchwork of existing regulations, like copyright, data privacy, computer access, and contract law, that vary by jurisdiction. What matters is the context – what data you're collecting, how you're accessing it, and what you do with it afterward.

Public data doesn't mean free data

Just because information is publicly visible doesn't mean you can use it however you want. In many jurisdictions, personal data like names, emails, or behavioral patterns is still protected by privacy regulations regardless of visibility. The EU's GDPR, for example, treats any data that can identify an individual as personal data, no matter where it was found. U.S. state privacy laws like the CCPA take a different approach, generally excluding publicly available information from the personal data definition.

Terms of Service

Many websites restrict automated access through their ToS. The enforceability depends on the type of agreement. Browsewrap agreements (terms posted in a footer) are often unenforceable because users don't explicitly consent. Clickwrap agreements, where you actively click "I agree," are far more likely to hold up. Simply visiting a page doesn't create a binding contract, but logging in or clicking through a consent screen can.

Copyright and database protection

Raw facts aren't copyrightable, so scraping individual data points like prices, dates, or statistics is generally fine. However, the creative expression of those facts (articles, images, and curated collections) is protected. The EU's Database Directive adds another layer, protecting databases that require significant investment to compile. To stay safe, focus on extracting factual data rather than copying how someone presented it, and transform scraped data into something new rather than republishing it.

Practical guidelines

Before running a production scraper, it's worth following a few baseline practices:

- Read the target's ToS. Understand any restrictions on automated access before you start collecting data.

- Use official public APIs when available. They offer cleaner data, clearer usage terms, and more stable long-term access.

- Space out your requests. Avoid overwhelming the server, which also reduces your chance of triggering anti-bot measures.

- Identify your scraper. Set a proper User-Agent string with contact information so site owners can reach you if needed.

- Be cautious with personal data. Collect only what you need, and make sure you're complying with applicable privacy regulations.

- Consult a legal professional when in doubt. Especially for commercial projects, cross-border scraping, or work in regulated industries.

Bottom line

Most scraper failures are fixable if you know which layer is rejecting the request. The progression is logical: start with residential proxies and a realistic Chrome header set to address the network layer and header checks. Add TLS fingerprint matching with curl_cffi or a similar tool for targets that inspect the ClientHello before reading headers. Reach for headless browsers only when JavaScript rendering is genuinely required, and patch the fingerprint surface thoroughly before using them in production.

Treat CAPTCHA solving and reverse engineering as specialist tools for specific situations, not default starting points. ReCAPTCHA v3 rarely requires a solver if session hygiene is maintained. Reserve reverse engineering for cases where all standard countermeasures have failed, and the target is worth the maintenance overhead.

Anti-bot systems update continuously. Session monitoring, selector validation, and API endpoint checks are ongoing maintenance tasks, not one-time setups. The scraper that works today may need adjustment next month – build monitoring in from the start.

About the author

Benediktas Kazlauskas

Content & PR Team Lead

Benediktas is a content professional with over 8 years of experience in B2C, B2B, and SaaS industries. He has worked with startups, marketing agencies, and fast-growing companies, helping brands turn complex topics into clear, useful content.

All information on Decodo Blog is provided on an as-is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.