Comprehensive Guide to Web Scraping with PHP

PHP has been powering the server side of the web for decades, and all that HTTP handling experience makes it a surprisingly capable tool for web scraping. It's not the first language most people reach for – that's usually Python – but if PHP is already your daily driver, there's no reason to switch completely. In this article, you'll learn the practical essentials of web scraping with PHP, from making HTTP requests and parsing HTML to storing data and avoiding blocks.

Zilvinas Tamulis

Last updated: Mar 26, 2026

TL;DR

- PHP handles HTTP requests, HTML parsing, and database storage natively, making it a practical choice for web scraping if it's already your stack.

- Your environment needs PHP 8.0+, Composer, and a few extensions (curl, xml/dom, mbstring) to get started.

- For HTTP requests, cURL covers most use cases; Guzzle adds async support and middleware for production workflows.

- The PHP ecosystem offers parsers (DomCrawler, DiDOM, Simple HTML DOM), frameworks (Roach PHP, BrowserKit), and headless browser tools (Panther, php-webdriver) for every scraping scenario.

- CSS selectors and XPath are your primary parsing tools – regex is fine for simple patterns, but never for complex HTML.

- Handle pagination by following "next" links, constructing URLs programmatically, or intercepting AJAX endpoints directly when content loads dynamically.

- Export scraped data to CSV, JSON, XML, or databases (SQLite, MySQL, PostgreSQL, MongoDB) depending on your project's scale and needs.

- Production scrapers need retry logic with exponential backoff, realistic browser headers, rate limiting, and proxy rotation to avoid blocks.

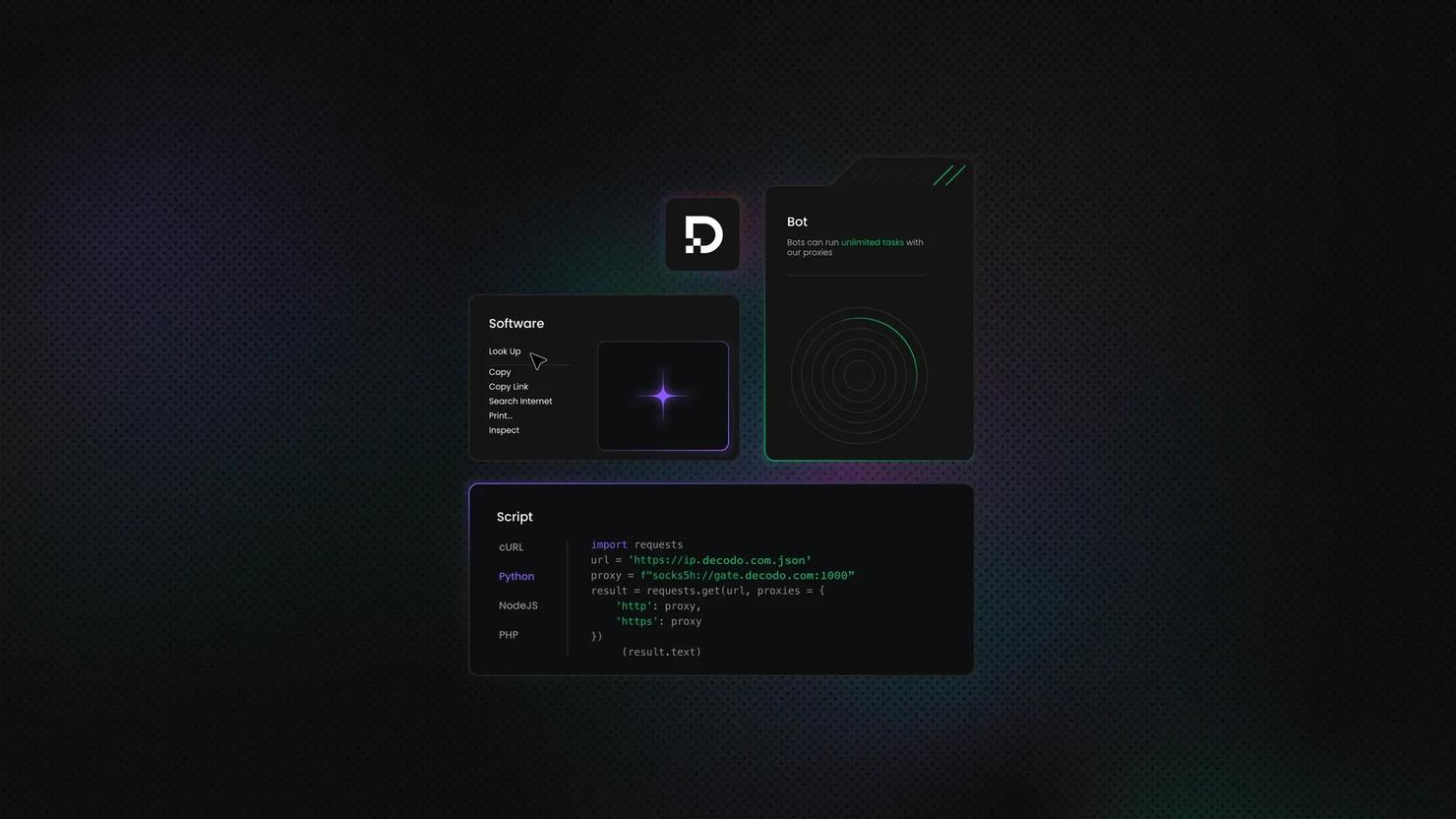

- Decodo's residential proxies and Web Scraping API handle IP rotation, fingerprint management, and anti-bot evasion so you can focus on the data.

Why PHP for web scraping?

PHP handles the fundamentals of scraping well out of the box. It can fire off HTTP requests, parse HTML, and send data straight into a database.

Here's where PHP genuinely shines for scraping work:

- Deployment is easy. Most shared hosting, VPS environments, and cloud platforms already run PHP.

- Database integration is native. PHP Data Objects (PDO) ships with PHP and provides one consistent interface to many databases, so you don't have to write driver-specific logic yourself.

- Hosting support is everywhere. Need a cron job to run your scraper on a $5/month server? PHP has been doing that since before "serverless" was a word.

- Composer makes dependency management painless. The PHP ecosystem has mature HTTP clients, DOM parsers, and even full scraping frameworks – all installable with a single command.

And the honest limitations:

- The scraping ecosystem is smaller. Python has Scrapy, Beautiful Soup, and a massive community around scraping. PHP's tooling is solid but less extensive – you'll find fewer tutorials and Stack Overflow answers when things get weird.

- JavaScript-rendered content needs extra work. Like any server-side language, PHP can't execute JavaScript natively. You'll need headless browser tools (covered later in this guide) for SPAs and dynamically loaded content.

If you're weighing which language to pick for your scraping project, PHP won't win every comparison. But for teams already invested in the PHP ecosystem, it's a pragmatic, capable choice.

Setting up your PHP environment for web scraping

Before writing any scraping logic, you need a working environment. Let's see what you'll have to install and set up.

Prerequisites

- PHP 8.0 or higher. Follow the installation instructions on the official website, or run php -v in your terminal to check which version you already have.

- Composer. The package manager for modern PHP, and non-negotiable for this guide. Install it in the current directory by following the steps in the download section of the Composer website, or install it globally. macOS users can also install it with Homebrew.

- A code editor. VS Code with the PHP Intelephense extension works great. PHPStorm is excellent if your team already has licenses. Anything with syntax highlighting and autocomplete will do.

Essential PHP extensions

Most PHP installations ship with these enabled, but it's worth checking. Run php -m to see your loaded modules.

- php-curl. Powers cURL requests – the backbone of most scrapers. If this isn't loaded, almost nothing in this tutorial will work.

- php-xml / php-dom. Required for DOM parsing. Without these, you can't use DOMDocument, DomCrawler, or most HTML parsing libraries.

- php-mbstring. Handles multi-byte string operations. Essential when scraping sites with non-ASCII characters.

- php-json. For encoding and decoding JSON. As of PHP 8.0 it's part of the core and always enabled, so there's nothing to install.

On Ubuntu/Debian, you can install any missing extensions with a command such as sudo apt install php-curl php-xml php-mbstring.

On macOS with Homebrew, most extensions are bundled with the PHP formula. If something's missing, try reinstalling the formula with brew reinstall php.

Project setup

Create a clean project directory, then initialize Composer by running composer init inside it.

Composer will walk you through a few prompts – project name, description, and author. The defaults are fine for a scraping project. What matters is the composer.json it generates.

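Next, set up PSR-4 autoloading so your classes load automatically. If it's not there by default, add an "autoload" section to your composer.json with a "psr-4" entry that maps your namespace prefix to the src/ directory (for example, a hypothetical "App\\" prefix mapped to "src/").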

Then run composer dump-autoload to regenerate the autoloader.

Now, create a basic project structure:

Finally, configure error reporting for development. This ensures you see every warning and notice while building. Add this at the top of your scrape.php entry point:
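A minimal sketch of such a development-only configuration, using PHP's standard error settings. Turn display_errors off again before running the scraper unattended in production.

```php
<?php
// Development-only settings: surface every error, warning, and notice.
error_reporting(E_ALL);
ini_set('display_errors', '1');
ini_set('display_startup_errors', '1');
```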

Your environment is ready. Next, we'll look at how to actually make HTTP requests – starting with PHP's built-in options and working up to production-grade clients.

HTTP request methods in PHP: From basics to production

Every web scraper starts with the same fundamental action: making an HTTP request and getting HTML back. PHP gives you several ways to do this, ranging from a one-liner for a quick test to a production-grade client with middleware and retries.

Let's work through them in order of complexity.

Built-in methods

file_get_contents()

The simplest way to fetch a web page in PHP:
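As a rough sketch, the call can be wrapped in a small helper. The function name fetchWithFileGetContents() and the user agent string are assumptions made for illustration, and http(s) URLs only work when allow_url_fopen is enabled.

```php
<?php
/**
 * Fetch a URL with file_get_contents() and return the body, or null on failure.
 * Requires allow_url_fopen to be enabled for http(s) URLs.
 */
function fetchWithFileGetContents(string $url, int $timeoutSeconds = 10): ?string
{
    $context = stream_context_create([
        'http' => [
            'method'  => 'GET',
            'timeout' => $timeoutSeconds,
            // Assumed placeholder user agent; swap in your own.
            'header'  => "User-Agent: Mozilla/5.0 (compatible; ExampleScraper/1.0)\r\n",
        ],
    ]);

    // The @ operator suppresses the warning emitted on failure; we check for false instead.
    $html = @file_get_contents($url, false, $context);

    return $html === false ? null : $html;
}
```

Calling echo fetchWithFileGetContents('https://example.com') ?? 'Request failed'; would print the page HTML, with example.com standing in for your real target.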

Run the script from your terminal with php scrape.php (assuming you saved it as scrape.php).

This will return the HTML content of the target page and print it in the terminal.

fsockopen()

This opens a raw TCP socket connection to a server. You can build HTTP requests on top of it manually:

This is included here because you'll see it in old tutorials. In practice, using fsockopen() for web scraping today is like hand-cranking a car engine – educational to understand, never the right call for actual work. You'd have to manually handle HTTP parsing, chunked transfer encoding, SSL/TLS, redirects, and cookies. Life's too short.

cURL (php-curl)

cURL is the industry standard for HTTP requests in PHP, and for good reason. It gives you full control over every aspect of the request while remaining reasonably concise:

The key options you'll use constantly in scraping:

- CURLOPT_RETURNTRANSFER. Without this, cURL dumps the response straight to stdout instead of returning it as a string. You almost always want this set to true.

- CURLOPT_FOLLOWLOCATION. Follows 301/302 redirects automatically. Most sites redirect at least once (HTTP → HTTPS, www → non-www).

- CURLOPT_TIMEOUT. Sets a maximum execution time. Without it, a hanging server will block your scraper indefinitely.

- CURLOPT_USERAGENT. Many sites block requests that arrive with PHP's default user agent or no user agent at all. Set something that looks like an actual browser.
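The four options above fit together roughly as follows. This is a sketch rather than a definitive client: fetchWithCurl() is a hypothetical helper name, the redirect cap is an extra safety setting, and the user agent string is only an example.

```php
<?php
/**
 * Fetch a page with cURL using the options described above.
 * Returns the response body, or null if the transfer failed.
 */
function fetchWithCurl(string $url, int $timeoutSeconds = 15): ?string
{
    $ch = curl_init($url);

    curl_setopt_array($ch, [
        CURLOPT_RETURNTRANSFER => true,   // return the body instead of printing it
        CURLOPT_FOLLOWLOCATION => true,   // follow redirects
        CURLOPT_MAXREDIRS      => 5,      // but not forever
        CURLOPT_TIMEOUT        => $timeoutSeconds,
        // Assumed example string; use a current browser user agent in practice.
        CURLOPT_USERAGENT      => 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36',
    ]);

    $body = curl_exec($ch);

    return $body === false ? null : $body;
}
```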

cURL also has built-in proxy support, which becomes essential at scale:
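One way to keep proxy settings reusable is to build them as an options array and merge it into each request. The helper name proxyOptions() and the host, port, and credentials in the usage comment are placeholders, not values from any real provider.

```php
<?php
/**
 * Build the cURL options for routing a request through an HTTP proxy.
 * Host, port, and credentials are supplied by the caller.
 */
function proxyOptions(string $host, int $port, ?string $user = null, ?string $password = null): array
{
    $options = [
        CURLOPT_PROXY     => $host,
        CURLOPT_PROXYPORT => $port,
        CURLOPT_PROXYTYPE => CURLPROXY_HTTP,
    ];

    if ($user !== null && $password !== null) {
        $options[CURLOPT_PROXYUSERPWD] = $user . ':' . $password;
    }

    return $options;
}

// Usage sketch (placeholder values):
// $ch = curl_init('https://example.com');
// curl_setopt_array($ch, [CURLOPT_RETURNTRANSFER => true] + proxyOptions('proxy.example.com', 8080, 'user', 'pass'));
```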

Error handling is also straightforward with curl_errno() and curl_error():
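A sketch of that pattern is shown here. It separates transport failures (reported by curl_errno() and curl_error()) from HTTP-level failures (read from the status code). The function name curlGet() and the shape of the returned array are assumptions chosen for this example.

```php
<?php
/**
 * Perform a GET request and report transport errors and HTTP status separately.
 *
 * @return array{ok: bool, status: int, body: ?string, error: ?string}
 */
function curlGet(string $url, int $timeoutSeconds = 15): array
{
    $ch = curl_init($url);
    curl_setopt_array($ch, [
        CURLOPT_RETURNTRANSFER => true,
        CURLOPT_FOLLOWLOCATION => true,
        CURLOPT_TIMEOUT        => $timeoutSeconds,
    ]);

    $body = curl_exec($ch);

    // Transport-level failure: DNS, refused connection, timeout, TLS, and so on.
    if ($body === false) {
        $error = sprintf('cURL error %d: %s', curl_errno($ch), curl_error($ch));
        return ['ok' => false, 'status' => 0, 'body' => null, 'error' => $error];
    }

    // The transfer worked, but the server may still have answered 4xx or 5xx.
    $status = (int) curl_getinfo($ch, CURLINFO_RESPONSE_CODE);
    $ok = $status >= 200 && $status < 300;

    return [
        'ok'     => $ok,
        'status' => $status,
        'body'   => $body,
        'error'  => $ok ? null : "HTTP status $status",
    ];
}
```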

Third-party HTTP clients

Guzzle

Guzzle is the most popular HTTP client in the PHP ecosystem, and it's what most production scrapers end up using. Install it via Composer with composer require guzzlehttp/guzzle.

Remember to include the autoloader at the top of your script file, so that the new library is "registered". A basic Guzzle request looks cleaner than raw cURL:

Where Guzzle really earns its place is in concurrent requests. Instead of scraping pages one at a time, you can fire off multiple requests simultaneously using promises:

Guzzle also offers a middleware system that lets you intercept and modify requests or responses – useful for adding retry logic, logging, or injecting headers globally.

Symfony HttpClient

If you're already in the Symfony ecosystem, the HttpClient component works beautifully as a standalone package. In case you don't have it yet, install it with composer require symfony/http-client.

Then, write the code:

Symfony HttpClient has excellent async support and handles streaming responses well – handy when you're downloading large pages or files.

When to skip the HTTP complexity entirely

Setting up proper headers, managing retries, rotating user agents, and handling proxy configuration is a lot of effort before you even get to the actual scraping logic. If your goal is getting data rather than building infrastructure, Decodo's Web Scraping API handles all of this for you – you send a request, and it returns the result in HTML, CSV, or even Markdown. No blocked requests, no proxy management, no headless browser orchestration.

PHP libraries and tools for web scraping

HTTP clients

- cURL (built-in). Ships with PHP, no installation needed. The default choice for straightforward requests.

- Guzzle. The most widely used PHP HTTP client. PSR-7 compliant, supports async requests, and has a middleware system for customizing request/response pipelines.

- Symfony HttpClient. Standalone component with strong async and streaming support. A natural fit for Symfony projects, but it works independently too.

- Httpful. A lighter alternative with a chainable, readable API. Good when Guzzle feels like overkill.

HTML/DOM parsers

- Simple HTML DOM Parser. jQuery-like syntax (find('.class'), ->plaintext) that's easy to pick up. Popular with beginners, though it can struggle with memory on large documents.

- DiDOM. Fast, modern parser with CSS selector support. A good middle ground between simplicity and performance.

- DOMDocument (built-in). PHP's native DOM parser. Powerful and dependency-free, but the API is verbose enough to make simple tasks feel ceremonial.

- Symfony DomCrawler. Clean, expressive API with both CSS selector and XPath support. Pairs naturally with Guzzle or Symfony HttpClient.

Complete scraping frameworks

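- Goutte / Symfony BrowserKit. Simulates a browser session – follows links, submits forms, maintains cookies. Ideal for scraping workflows that require navigation across multiple pages. Goutte itself is now deprecated in favor of BrowserKit's HttpBrowser class, so prefer BrowserKit for new projects.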

- Roach PHP. Inspired by Python's Scrapy. Offers spiders, item pipelines, and middleware for structured, large-scale scraping projects.

- PHP-Spider. A crawling library with configurable traversal strategies (breadth-first or depth-first). Useful when you need to discover and map URLs before scraping them.

Headless browser control

- Symfony Panther. Controls Chrome or Firefox using the same API as Goutte/BrowserKit. The easiest path to rendering JavaScript content in PHP.

- php-webdriver (Selenium). Full browser automation through Selenium WebDriver. Handles scrolling, clicking, form filling – anything a real user would do.

- PuPHPeteer. A PHP bridge to Node.js Puppeteer. Gives you access to Puppeteer's full API, but requires Node.js installed alongside PHP.

- Chrome PHP. Communicates directly with Chrome via the DevTools Protocol. Lower-level than Selenium, useful when you need fine-grained browser control.

Utility libraries

- Crawler-Detect. Detects whether a user-agent string belongs to a known bot or crawler. Helpful for testing how your own scraper appears to target sites.

- Embed. Extracts OEmbed, OpenGraph, and other metadata from URLs. Useful when you're scraping link previews or social media metadata rather than full page content.

Which combination should you use?

| Scenario | Recommended stack |
| --- | --- |
| Simple static pages | cURL + Simple HTML DOM or DiDOM |
| Form submissions, link following | Goutte / BrowserKit |
| JavaScript-rendered content | Panther or php-webdriver |
| Large-scale crawling with data pipelines | Roach PHP |
| Quick prototype or one-off script | file_get_contents + Simple HTML DOM |

Extracting and parsing HTML data

Getting HTML is the easy part. The real work starts when you need to pull specific data out of a messy, inconsistent, occasionally hostile DOM structure.

Understanding HTML structure

Before writing a single line of parsing code, open the target page in your browser and inspect it with DevTools (right-click → Inspect). You're looking for:

- Unique selectors. IDs are ideal, specific class names are good, data attributes (data-product-id, data-price) are a gift from the developer gods.

- Patterns in repeated elements. Product listings, table rows, search results – these usually share a common parent container and consistent child structure.

- Inconsistencies. Some listings might be missing a price. Some rows might have an extra <span>. Some pages might use different markup for "featured" vs. regular items. Plan for this early; your parser will thank you.

You may also view the raw page source (Ctrl+U) alongside the DevTools inspector. If content appears in DevTools but not in the source, it's rendered by JavaScript – and you'll need a headless browser to get it.

Parsing approach 1: Regular expressions

Yes, the internet has strong opinions about parsing HTML with regex. They're mostly right. But for extracting simple, predictable patterns from raw text, regex is fast and dependency-free:
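For instance, pulling the page title out of raw HTML might look like the sketch below. The helper name extractTitle() is hypothetical, and the pattern only handles one simple, predictable tag.

```php
<?php
/**
 * Extract the <title> text with a regular expression.
 * Fine for one predictable tag; not a substitute for a real parser.
 */
function extractTitle(string $html): ?string
{
    // i = case-insensitive, s = let "." match newlines; [^>]* tolerates attributes.
    if (preg_match('/<title[^>]*>(.*?)<\/title>/is', $html, $matches) === 1) {
        return trim(html_entity_decode($matches[1], ENT_QUOTES | ENT_HTML5, 'UTF-8'));
    }

    return null;
}
```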

Parsing approach 2: CSS selectors

If you've written any CSS or jQuery, this will feel immediately familiar. CSS selectors are the most intuitive way to target elements, and most PHP parsing libraries support them. Here's the equivalent to extract the title:

Parsing approach 3: XPath expressions

XPath is more verbose than CSS selectors, but it can do things CSS simply can't – selecting elements by their text content, navigating to parent or sibling nodes, and combining conditions in ways that would require multiple CSS queries.

Some examples:
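The queries below are a sketch using PHP's built-in DOMXPath. The markup is invented purely to demonstrate each capability, and xpathFor() is a hypothetical helper.

```php
<?php
/** Load HTML into a DOMXPath object, tolerating imperfect markup. */
function xpathFor(string $html): DOMXPath
{
    $dom = new DOMDocument();
    libxml_use_internal_errors(true);   // collect parser warnings instead of printing them
    $dom->loadHTML($html);
    libxml_clear_errors();

    return new DOMXPath($dom);
}

// Hypothetical markup, used only to demonstrate the queries.
$html = <<<HTML
<html><body>
  <div class="product"><h2>Blue Mug</h2><span class="price">12.50</span></div>
  <div class="product featured"><h2>Red Mug</h2><span class="price">15.00</span></div>
  <a href="/page-2.html">Next page</a>
</body></html>
HTML;

$xpath = xpathFor($html);

// 1. Select by text content.
$nextHref = $xpath->evaluate('string(//a[contains(text(), "Next")]/@href)');

// 2. Walk up to a parent: find the product block that contains a given price.
$parentClass = $xpath->evaluate('string(//span[text()="15.00"]/parent::div/@class)');

// 3. Navigate to a sibling: the price that follows a specific heading.
$bluePrice = $xpath->evaluate('string(//h2[text()="Blue Mug"]/following-sibling::span[@class="price"])');

// 4. Combine conditions in one query.
$featured = $xpath->query('//div[contains(@class, "product") and contains(@class, "featured")]/h2');
```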

Parsing approach 4: DOMDocument (built-in PHP)

PHP's native DOM parser requires no dependencies. It's powerful, but the API is verbose:
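A short sketch of the native API, using made-up markup. Note how much ceremony it takes to read a title and a couple of links.

```php
<?php
// Hypothetical markup for illustration.
$html = '<html><head><title>Example Shop</title></head>'
      . '<body><a href="/a">First</a><a href="/b">Second</a></body></html>';

$dom = new DOMDocument();
libxml_use_internal_errors(true);   // real-world HTML is rarely valid; keep warnings quiet
$dom->loadHTML($html);
libxml_clear_errors();

// getElementsByTagName() returns a DOMNodeList; item(0) is null when nothing matched.
$titleNode = $dom->getElementsByTagName('title')->item(0);
$title = $titleNode !== null ? trim($titleNode->textContent) : null;

// Collect every link's href and text.
$links = [];
foreach ($dom->getElementsByTagName('a') as $anchor) {
    $links[] = [
        'href' => $anchor->getAttribute('href'),
        'text' => trim($anchor->textContent),
    ];
}
```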

Parsing approach 5: Symfony DomCrawler

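DomCrawler offers the cleanest API of the bunch, with both CSS and XPath support. Install it, along with the CssSelector component that powers its CSS queries, by running composer require symfony/dom-crawler symfony/css-selector.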

The code will look like this:

Which parser should you use?

- Regular expressions are best for fast, precise extractions of specific data like emails or IDs from raw text, though they're fragile and easily broken by minor HTML changes.

- CSS selectors provide the best balance of speed and readability for most projects, offering a familiar syntax for anyone who has styled a webpage.

- XPath expressions offer significantly more power and flexibility than CSS, allowing you to navigate "upwards" in the DOM tree or find elements based on their exact text content.

- DOMDocument is the reliable, built-in PHP fallback for when you cannot use external dependencies, though its syntax is more verbose and it complains loudly about malformed HTML unless you silence libxml warnings.

- Symfony DomCrawler serves as a professional-grade wrapper that combines the performance of native PHP with a modern, fluent API, making it the industry standard for complex, robust scraping tasks.

Handling pagination and multi-page scraping

Most useful data doesn't live on a single page. Product listings, search results, blog archives – the stuff worth scraping is almost always spread across dozens (or hundreds) of pages. Your scraper needs to handle that gracefully.

Pagination patterns you'll encounter

Not all pagination looks the same. Here are some of the hurdles you might run into:

- "Next" button links. A simple <a> tag pointing to the next page. The most common pattern and the easiest to follow.

- Numbered page links. Page 1, 2, 3… or range-based (1–10, 11–20). Same underlying mechanic as "Next" buttons, just different UI.

- URL parameter pagination. The page changes via query strings like ?page=2 or ?offset=20. You can construct these URLs programmatically without parsing any links.

- Infinite scroll. Content loads via JavaScript as the user scrolls. Traditional HTTP scrapers won't see it – you'll need to either find the underlying API endpoint or use a headless browser.

- "Load more" buttons. Similar to infinite scroll, but triggered by a click instead of scrolling. Usually, it fires an AJAX request that you can intercept.

- Cursor-based pagination. Common in APIs. Each response includes a cursor token for the next batch. You keep passing the cursor until the response comes back empty.

The first three are straightforward with standard HTTP tools. The last three require either reverse-engineering the underlying API or reaching for a headless browser.

Pagination handling approaches

Let's take a look at how these work in practice. We'll use books.toscrape.com – a sandbox site built specifically for practicing web scraping. It has 1,000 books spread across 50 pages with a "next" button at the bottom of each page. The URL pattern is /catalogue/page-{n}.html.

Approach 1: Following "next" links

This is the safest approach because it doesn't assume anything about URL structure. You just find the next link, follow it, and stop when there isn't one:
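Here is a sketch of that loop built on DOMXPath. It assumes the "next" link is marked up as an li element with class "next" wrapping an anchor, and the function names are hypothetical. The fetcher is passed in as a callable, so any HTTP client can be plugged in, and the relative URL resolver handles only the simple cases (no "../" segments).

```php
<?php
/** Resolve a relative href against the page it was found on (simple cases only). */
function resolveUrl(string $baseUrl, string $href): string
{
    if (parse_url($href, PHP_URL_SCHEME) !== null) {
        return $href;                                   // already absolute
    }
    $parts  = parse_url($baseUrl);
    $origin = $parts['scheme'] . '://' . $parts['host'];
    if (str_starts_with($href, '/')) {
        return $origin . $href;                         // root-relative
    }
    $path = $parts['path'] ?? '/';
    $dir  = substr($path, 0, strrpos($path, '/') + 1);  // directory of the current page

    return $origin . $dir . $href;
}

/** Return the absolute URL of the "next" link, or null on the last page. */
function nextPageUrl(string $html, string $currentUrl): ?string
{
    $dom = new DOMDocument();
    libxml_use_internal_errors(true);
    $dom->loadHTML($html);
    libxml_clear_errors();

    // Assumed markup: <li class="next"><a href="...">next</a></li>
    $href = (new DOMXPath($dom))->evaluate('string(//li[contains(@class, "next")]/a/@href)');

    return $href === '' ? null : resolveUrl($currentUrl, $href);
}

/**
 * Follow "next" links until there are none left or $maxPages is reached.
 * $fetch is any callable that takes a URL and returns HTML or null.
 */
function crawlPages(string $startUrl, callable $fetch, int $maxPages = 100): array
{
    $visited = [];
    $url = $startUrl;
    while ($url !== null && count($visited) < $maxPages) {
        $html = $fetch($url);
        if ($html === null) {
            break;                                      // stop on a failed request
        }
        $visited[] = $url;
        $url = nextPageUrl($html, $url);
    }

    return $visited;
}
```

The $maxPages cap is a deliberate safety net: if a site ever links a page to itself, the loop still terminates.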

Approach 2: Constructing URLs programmatically

When you know the URL pattern up front, you can skip link parsing entirely and generate all the page URLs yourself:
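With the /catalogue/page-{n}.html pattern and 50 pages described earlier, generating the list could look like this. The function name is made up for the example.

```php
<?php
/** Build the list of catalogue page URLs from the known pattern. */
function cataloguePageUrls(int $firstPage = 1, int $lastPage = 50): array
{
    $urls = [];
    for ($page = $firstPage; $page <= $lastPage; $page++) {
        $urls[] = sprintf('https://books.toscrape.com/catalogue/page-%d.html', $page);
    }

    return $urls;
}
```

The trade-off is that this breaks silently if the site changes its URL scheme or page count, so it's worth checking for a 404 on each request.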

Approach 3: Using Symfony BrowserKit for link following

Symfony BrowserKit maintains session state automatically – cookies, referer headers, and relative URL resolution are handled for you:

Example code:

Approach 4: Intercepting AJAX requests directly

This is where things get interesting. Scrape This Site has a page listing Oscar-winning films by year. Click a year tab, and a table of films appears – but the initial HTML contains an empty table. The data loads via AJAX. If you try scraping with regular methods, you get nothing useful.

The instinct is to reach for a headless browser, but there's a faster approach: find the AJAX endpoint and call it directly.

Here's how you'd discover it:

- Open DevTools (F12).

- Navigate to Network.

- Select Fetch/XHR.

- Reload the page.

- Click the tab with 2015 in it, and watch the request that fires. You'll see a GET request to the same URL with query parameters ?ajax=true&year=2015 – and the response is clean JSON.

You can assume the same logic works for other years as well. Once you've identified the endpoint, you skip the DOM entirely:
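A sketch of the two pieces involved: building the endpoint URL from the parameters seen in DevTools, and decoding the JSON that comes back. Both function names are hypothetical, and the page URL is passed in rather than hard-coded. The exact fields in the response are not assumed here, so inspect the decoded arrays to see what the endpoint really returns.

```php
<?php
/** Build the AJAX URL for one year from the page URL and the observed query parameters. */
function ajaxUrl(string $pageUrl, int $year): string
{
    return $pageUrl . '?' . http_build_query(['ajax' => 'true', 'year' => $year]);
}

/** Decode the JSON body into a list of associative arrays; throws JsonException on bad input. */
function decodeFilms(string $json): array
{
    $data = json_decode($json, true, 512, JSON_THROW_ON_ERROR);

    return is_array($data) ? $data : [];
}
```

From there, fetching is an ordinary GET request with whichever HTTP client you prefer, repeated for each year you need.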

Saving and exporting scraped data

You've got an array of Oscar-winning films from the previous section. Now what? Printing to the terminal is satisfying for about five seconds. After that, you need persistent storage.

Structuring your data

Before exporting anything, it's worth normalizing your data into a consistent shape. Scraped data is messy by nature – missing fields, inconsistent types, stray whitespace. A simple Data Transfer Object (DTO) class keeps things predictable:
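Below is one possible shape for such a DTO, written for PHP 8.0 with constructor property promotion. The class name Film and every field name are assumptions for illustration, so adapt them to whatever your scraped records actually contain.

```php
<?php
/** A film record with normalized types. Field names are assumptions for illustration. */
final class Film
{
    public function __construct(
        public string $title,
        public int $year,
        public int $awards = 0,
        public int $nominations = 0,
        public bool $bestPicture = false,
    ) {
    }

    /** Build a Film from a raw scraped array, trimming text and casting types. */
    public static function fromArray(array $raw): self
    {
        return new self(
            title: trim((string) ($raw['title'] ?? '')),
            year: (int) ($raw['year'] ?? 0),
            awards: (int) ($raw['awards'] ?? 0),
            nominations: (int) ($raw['nominations'] ?? 0),
            bestPicture: (bool) ($raw['best_picture'] ?? false),
        );
    }

    /** Flat array form, convenient for CSV, JSON, and database inserts. */
    public function toArray(): array
    {
        return [
            'title'        => $this->title,
            'year'         => $this->year,
            'awards'       => $this->awards,
            'nominations'  => $this->nominations,
            'best_picture' => $this->bestPicture,
        ];
    }
}
```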

Then, replace raw arrays in the scraping loop with:

The modified script will now look like this:

Exporting to CSV

CSV is the universal lowest common denominator. Every spreadsheet, database import tool, and data pipeline understands it.

Add this function to your code and execute it at the end of the script:
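A minimal version of such a function, assuming your records are a list of associative arrays that all share the same keys. The name exportToCsv() is hypothetical.

```php
<?php
/**
 * Write rows (a list of associative arrays sharing the same keys) to a CSV file.
 * Returns the number of data rows written.
 */
function exportToCsv(array $rows, string $path): int
{
    if ($rows === []) {
        return 0;
    }
    $handle = fopen($path, 'w');
    if ($handle === false) {
        throw new RuntimeException("Cannot open $path for writing");
    }
    // Header row from the first record's keys; delimiter, enclosure, and escape are explicit.
    fputcsv($handle, array_keys($rows[0]), ',', '"', '');
    foreach ($rows as $row) {
        fputcsv($handle, array_values($row), ',', '"', '');
    }
    fclose($handle);

    return count($rows);
}
```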

Exporting to JSON

JSON is cleaner for structured data and easier to consume programmatically:
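A small sketch using json_encode(). The flags keep non-ASCII titles and URLs readable and turn encoding problems into exceptions. The function name exportToJson() is an assumption.

```php
<?php
/** Write rows to a pretty-printed JSON file; throws on encoding or write failure. */
function exportToJson(array $rows, string $path): void
{
    $json = json_encode(
        $rows,
        JSON_PRETTY_PRINT | JSON_UNESCAPED_UNICODE | JSON_UNESCAPED_SLASHES | JSON_THROW_ON_ERROR
    );

    if (file_put_contents($path, $json . PHP_EOL) === false) {
        throw new RuntimeException("Cannot write $path");
    }
}
```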

Exporting to XML

XML is less common for new projects, but still relevant for data interchange with legacy systems or APIs that expect it:

Storing in SQLite

SQLite is ideal for local development and smaller scraping projects. No server to set up, no credentials to manage – it's just a file:
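As a sketch with PDO, the code below creates the table on first use and inserts rows in a single transaction. The table name, column names, and function names are assumptions for the example. INSERT OR IGNORE combined with the UNIQUE constraint keeps repeated runs from creating duplicates.

```php
<?php
/** Open (or create) a SQLite database file and make sure the films table exists. */
function openFilmDatabase(string $path): PDO
{
    $pdo = new PDO('sqlite:' . $path);
    $pdo->setAttribute(PDO::ATTR_ERRMODE, PDO::ERRMODE_EXCEPTION);
    // Table and column names are assumptions for this sketch.
    $pdo->exec(
        'CREATE TABLE IF NOT EXISTS films (
            title TEXT NOT NULL,
            year  INTEGER NOT NULL,
            UNIQUE (title, year)
        )'
    );

    return $pdo;
}

/** Insert rows inside one transaction; duplicates are skipped. Returns rows actually inserted. */
function saveFilms(PDO $pdo, array $rows): int
{
    $stmt = $pdo->prepare('INSERT OR IGNORE INTO films (title, year) VALUES (:title, :year)');
    $inserted = 0;

    $pdo->beginTransaction();
    try {
        foreach ($rows as $row) {
            $stmt->execute([':title' => $row['title'], ':year' => $row['year']]);
            $inserted += $stmt->rowCount();
        }
        $pdo->commit();
    } catch (Throwable $e) {
        $pdo->rollBack();       // leave the database unchanged if any insert fails
        throw $e;
    }

    return $inserted;
}
```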

Storing in MySQL/MariaDB

For production scrapers that feed into larger applications, MySQL or MariaDB is the typical choice:

PostgreSQL

PostgreSQL offers some features that are particularly useful for scraping workflows:

MongoDB

When your scraped data varies significantly in structure across sources, a document store like MongoDB can be simpler than trying to normalize everything into relational tables:

Which storage should you pick?

For prototyping and small projects, start with CSV or SQLite – zero setup, easy to inspect. Move to MySQL/PostgreSQL when the data feeds into a production application. Reach for MongoDB when the schema is genuinely unpredictable. And regardless of your final storage choice, exporting a CSV or JSON backup alongside it costs almost nothing and saves you when things go sideways.

Best practices: Error handling, headers, and avoiding blocks

A scraper that works once is a script. A scraper that works reliably for weeks is a system. The difference comes down to how you handle the things that go wrong – and in web scraping, plenty goes wrong.

Robust error handling

HTTP-level errors

Not every request returns a clean 200. Your scraper needs to handle the common failure modes without crashing:

- 403 Forbidden. The server knows you're scraping and doesn't want you there. This might mean your headers are wrong, your IP is flagged, or the site actively blocks automated requests. Retrying the same request usually won't help – you need to change something (user agent, IP, request pattern).

- 404 Not Found. The page doesn't exist. This is especially common when scraping paginated content, where listings get removed between runs.

- 429 Too Many Requests. You're hitting the server too fast. The response often includes a Retry-After header telling you how long to wait. Respect it, or if there's no header, back off exponentially.

- 5xx Server Errors. The target server is having a bad day. These are usually transient – worth retrying after a delay.

- Timeouts and connection failures. Networks are unreliable. A request that fails once might succeed five seconds later.

For all of these, implement retry logic with exponential backoff. The pattern is simple: wait 1 second after the first failure, 2 seconds after the second, 4 seconds after the third, and cap it at some reasonable maximum (30-60 seconds). Add a small random jitter to each delay so concurrent scrapers don't all retry at the same moment. Guzzle supports this natively through its retry middleware, so you don't need to build it from scratch.
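To make the arithmetic concrete, here is a plain-PHP sketch of the pattern, independent of Guzzle's middleware. The function names, the 250 ms jitter ceiling, and the choice of RuntimeException as the "retryable" signal are all assumptions made for the example.

```php
<?php
/** Delay in milliseconds before retry number $attempt (1-based): 1s, 2s, 4s... capped, plus jitter. */
function backoffDelayMs(int $attempt, int $baseMs = 1000, int $capMs = 30000, int $maxJitterMs = 250): int
{
    $exponential = $baseMs * (2 ** ($attempt - 1));

    return (int) min($capMs, $exponential) + random_int(0, $maxJitterMs);
}

/**
 * Call $operation until it succeeds or $maxAttempts is exhausted.
 * $operation should throw a RuntimeException on retryable failures (timeouts, 429, 5xx).
 * $sleep is injectable so the logic can be tested without waiting.
 */
function retryWithBackoff(callable $operation, int $maxAttempts = 5, ?callable $sleep = null): mixed
{
    $sleep ??= static fn (int $ms) => usleep($ms * 1000);

    for ($attempt = 1; ; $attempt++) {
        try {
            return $operation();
        } catch (RuntimeException $e) {
            if ($attempt >= $maxAttempts) {
                throw $e;                       // out of attempts: surface the last error
            }
            $sleep(backoffDelayMs($attempt));
        }
    }
}
```

Errors that retrying won't fix, such as a 403 or 404, should not be thrown as retryable here; handle those separately.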

Parsing errors

Even when the HTTP request succeeds, the HTML might not contain what you expect. A product listing might be missing a price. A page layout might change overnight. A promotional banner might push your target element into a different position.

Wrap DOM operations in try-catch blocks, and check that elements exist before trying to extract text or attributes from them. The goal is to log the failure – which URL, which element was missing, what the page actually contained – and continue scraping the rest. One malformed listing shouldn't kill a run that's 10,000 pages deep.
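A sketch of that defensive style with the built-in DOM classes. The selectors, field names, and function names are invented for the example. The point is the shape: check, log, continue.

```php
<?php
/** Return the trimmed text of the first node matching $query, or null if nothing matches. */
function textOrNull(DOMXPath $xpath, string $query, ?DOMNode $context = null): ?string
{
    $nodes = $xpath->query($query, $context);
    if ($nodes === false || $nodes->length === 0) {
        return null;
    }

    return trim($nodes->item(0)->textContent);
}

/** Parse every listing; log and skip the ones missing a required field. Selectors are assumptions. */
function parseListings(string $html, string $url): array
{
    $dom = new DOMDocument();
    libxml_use_internal_errors(true);
    $dom->loadHTML($html);
    libxml_clear_errors();
    $xpath = new DOMXPath($dom);

    $items = [];
    foreach ($xpath->query('//div[@class="product"]') as $index => $node) {
        $title = textOrNull($xpath, './/h2', $node);
        $price = textOrNull($xpath, './/span[@class="price"]', $node);

        if ($title === null || $price === null) {
            $missing = $title === null ? 'title' : 'price';
            error_log(sprintf('Skipping listing %d on %s: missing %s', $index, $url, $missing));
            continue;                           // one bad listing should not end the run
        }
        $items[] = ['title' => $title, 'price' => $price];
    }

    return $items;
}
```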

Proper request headers

User-Agent

This is the single most common reason scrapers get blocked. PHP's default cURL user agent is either empty or something like curl/7.x, which might as well be a sign that reads "I am a bot." Set a realistic browser user agent on every request.

For larger scraping jobs, rotate between several user agents. Maintain a list of current Chrome, Firefox, and Safari user agent strings and pick one randomly per request (or per session, if you're maintaining cookies).

Accept headers

Real browsers send a specific set of Accept headers with every request. Your scraper should too:

- Accept. Tells the server what content types you'll handle.

- Accept-Language. Signals your preferred language. en-US,en;q=0.9 is a safe default for English-language sites.

- Accept-Encoding. gzip, deflate tells the server it can compress the response. This reduces bandwidth and often speeds up requests.

Other headers that matter

- Referer. Some sites check where the request came from. Setting a referer that matches the site's own domain (like the homepage or a search page) makes requests look like normal navigation.

- Cookies. Session-dependent sites require cookies to function. Guzzle and Symfony BrowserKit handle cookie jars automatically – let them persist across requests within a session.
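Put together, a header set for cURL might be assembled as in the sketch below. The user agent strings are examples only and will age quickly, and browserHeaders() is a hypothetical helper. Accept-Encoding is deliberately not set by hand: with cURL, passing an empty string to CURLOPT_ENCODING sends the header for every encoding cURL supports and also decompresses the response for you.

```php
<?php
/** Build a browser-like header list for cURL's CURLOPT_HTTPHEADER. */
function browserHeaders(string $referer, ?array $userAgents = null): array
{
    // Example strings only; keep your own list current.
    $userAgents ??= [
        'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36',
        'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.4 Safari/605.1.15',
        'Mozilla/5.0 (X11; Linux x86_64; rv:125.0) Gecko/20100101 Firefox/125.0',
    ];

    return [
        'User-Agent: ' . $userAgents[array_rand($userAgents)],   // rotate per call
        'Accept: text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
        'Accept-Language: en-US,en;q=0.9',
        'Referer: ' . $referer,
    ];
}

// Usage sketch:
// curl_setopt_array($ch, [
//     CURLOPT_HTTPHEADER => browserHeaders('https://example.com/'),
//     CURLOPT_ENCODING   => '',                 // negotiate and decode compression
//     CURLOPT_COOKIEJAR  => '/tmp/cookies.txt', // persist cookies across requests
//     CURLOPT_COOKIEFILE => '/tmp/cookies.txt',
// ]);
```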

Avoiding blocks and anti-bot measures

Rate limiting

The simplest and most effective anti-blocking measure is just slowing down. Add a delay between requests – usleep() with a random value between 500 ms and 2 seconds mimics human browsing patterns far better than firing 50 requests per second.

If a server returns a Retry-After header (usually alongside a 429 status), honor it. Ignoring rate limit signals is the fastest way to get your IP permanently banned.
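Both rules can live in one small helper, sketched here. The name nextDelayMicros() is hypothetical. Retry-After may arrive either as a number of seconds or as an HTTP date, so the sketch handles both, and usleep() expects microseconds, hence the unit.

```php
<?php
/**
 * Decide how long to wait before the next request, in microseconds.
 * Honors a Retry-After header (seconds or HTTP date) when present,
 * otherwise picks a random delay between 0.5 and 2 seconds.
 */
function nextDelayMicros(?string $retryAfter = null, ?int $now = null): int
{
    $now ??= time();
    $retryAfter = $retryAfter !== null ? trim($retryAfter) : '';

    if ($retryAfter !== '') {
        if (ctype_digit($retryAfter)) {
            return (int) $retryAfter * 1_000_000;            // delta-seconds form
        }
        $timestamp = strtotime($retryAfter);                  // HTTP-date form
        if ($timestamp !== false) {
            return max(0, $timestamp - $now) * 1_000_000;
        }
    }

    return random_int(500_000, 2_000_000);
}

// Usage sketch: usleep(nextDelayMicros($retryAfterHeaderOrNull));
```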

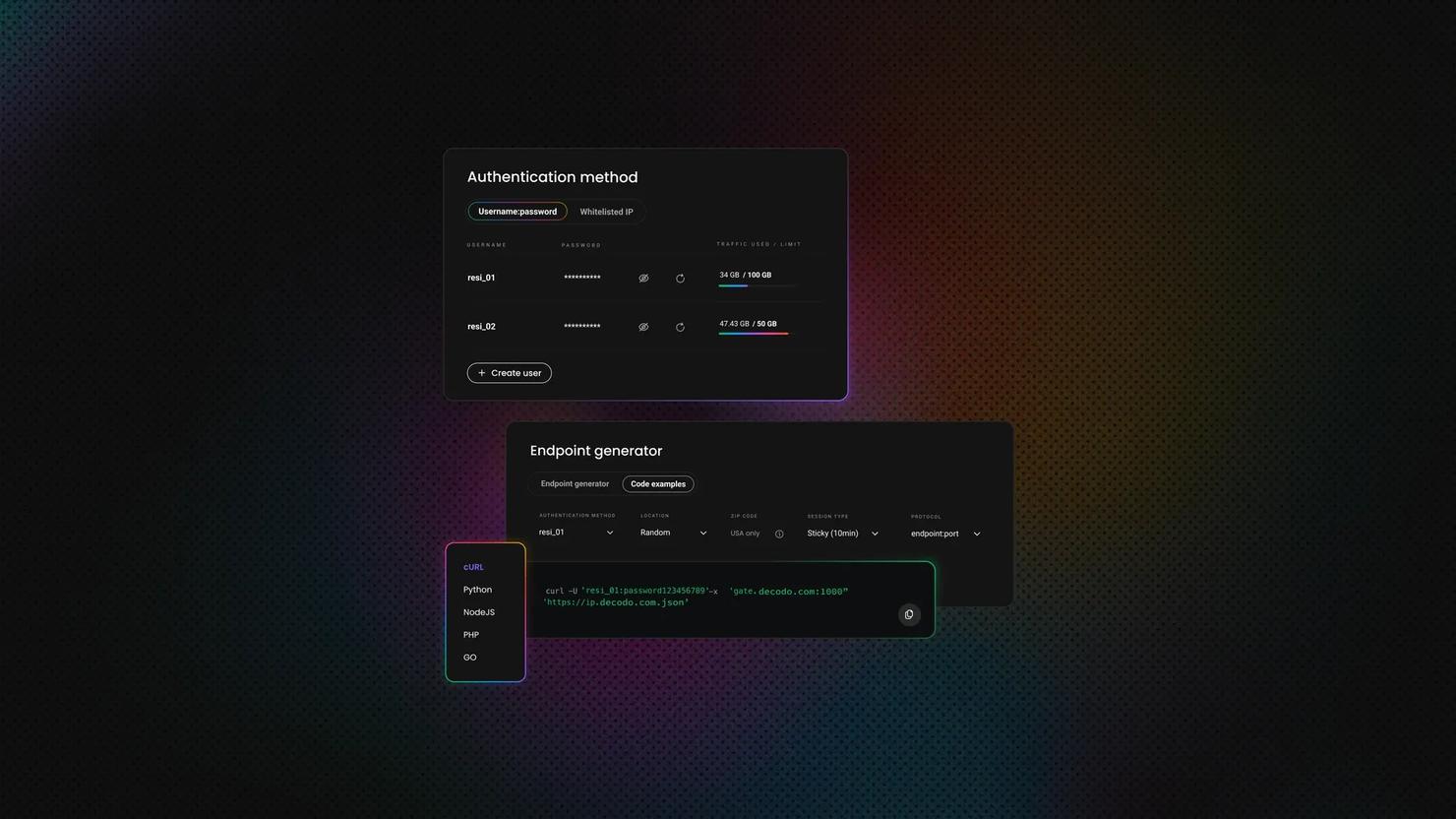

IP rotation with proxies

When rate limiting alone isn't enough, you need to distribute your requests across multiple IP addresses.

Datacenter proxies are cheap but increasingly easy for anti-bot systems to identify – the IP ranges are well-known and frequently blocklisted. Residential proxies route your traffic through real consumer IP addresses, which makes requests much harder to tell apart from regular users. They cost more per gigabyte but are generally far more effective against sophisticated detection.

The rotation strategy depends on the target. Some sites track sessions by IP, so you want to maintain the same proxy for a full session. Others are fine with rotating per request. Handle proxy failures gracefully – if a proxy times out or returns an error, try the next one in the pool rather than failing the entire request.

Decodo's residential proxies integrate directly with cURL and Guzzle through standard proxy configuration. You point your HTTP client at the proxy endpoint, and Decodo handles IP rotation, geo-targeting, and session management on its side.

CAPTCHAs

CAPTCHAs usually appear when a site suspects automated traffic. If you're seeing them consistently, it's a signal that your request pattern is triggering detection – fixing your headers, rate limiting, and IP rotation will often make CAPTCHAs disappear entirely.

Third-party CAPTCHA-solving services exist, but relying on them adds cost, latency, and complexity. If your scraper needs constant CAPTCHA solving to function, the underlying approach probably needs rethinking, or a dedicated CAPTCHA bypass tool is required.

Browser fingerprinting

Modern anti-bot systems go beyond IP addresses and headers. They look at TLS fingerprints, JavaScript execution patterns, canvas rendering, and dozens of other browser-specific signals.

Standard HTTP clients like cURL and Guzzle have distinct TLS fingerprints that don't match real browsers. Headless browsers are closer, but tools like Selenium and Puppeteer have their own detectable tells – specific JavaScript properties, missing browser plugins, and viewport characteristics that flag them as automated.

This is one area where a managed service pays for itself. Decodo's Web Scraping API handles fingerprint management, header rotation, and anti-bot evasion at the infrastructure level – you send a URL, it returns the HTML, and the detection arms race is someone else's problem.

Caching during development

This is one of the most underrated best practices. While you're building and debugging your parser, you don't need to hit the target site for every test run. Save the HTML response locally the first time, then read from the cache on subsequent runs.

A simple approach: hash the URL, use it as a filename, and check if the cached file exists before making a request. This is faster, avoids wasting bandwidth, and prevents you from getting blocked during development.

Delete the cache when you're ready to do a production run, or set a TTL so cached files expire after a reasonable period.
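The whole idea fits in one function, sketched below. cachedFetch() is a hypothetical name, the one-day default TTL is an arbitrary choice, and the fetcher is passed in as a callable so the cache works with any HTTP client.

```php
<?php
/**
 * Return the HTML for $url, reading from a local cache when a fresh copy exists.
 * $fetch is any callable that takes a URL and returns the body, or null on failure.
 */
function cachedFetch(string $url, string $cacheDir, callable $fetch, int $ttlSeconds = 86400): ?string
{
    if (!is_dir($cacheDir) && !mkdir($cacheDir, 0775, true) && !is_dir($cacheDir)) {
        throw new RuntimeException("Cannot create cache directory $cacheDir");
    }

    // Hash the URL so it is safe to use as a filename.
    $file = $cacheDir . DIRECTORY_SEPARATOR . hash('sha256', $url) . '.html';

    if (is_file($file) && (time() - filemtime($file)) < $ttlSeconds) {
        $cached = file_get_contents($file);
        if ($cached !== false) {
            return $cached;                     // cache hit
        }
    }

    $html = $fetch($url);                       // cache miss or expired entry
    if ($html !== null) {
        file_put_contents($file, $html);
    }

    return $html;
}
```

Failed fetches are not cached, so a temporary error doesn't get stuck in the cache until the TTL expires.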

Logging and monitoring

For any scraper that runs unattended, logging is essential. Track:

- Every request. URL, status code, response time. This is your audit trail when something breaks.

- Parsing failures. Which pages had missing elements, unexpected structures, or empty results.

- Progress. How many pages scraped, how many items extracted, estimated time remaining.

- Repeated failures. If the same URL fails three times in a row, or if your success rate drops below a threshold, something has changed – alert on it rather than letting the scraper churn through errors silently.

Write logs to a file (not just stdout) so you can review them after a long-running job completes. PHP's built-in error_log() works for simple setups; Monolog is the standard choice for anything more structured.

Final thoughts

PHP won't top any "best language for web scraping" listicle, and that's fine – it doesn't need to. You've got HTTP clients that handle anything cURL can throw at them, parsers that make DOM traversal painless, and a data pipeline that goes from raw HTML to a database row in fewer lines than most languages. If PHP is already your favorite elephant, you now have everything you need to build scrapers that actually hold up in production.

About the author

Zilvinas Tamulis

Technical Copywriter

A technical writer with over 4 years of experience, Žilvinas blends his studies in Multimedia & Computer Design with practical expertise in creating user manuals, guides, and technical documentation. His work includes developing web projects used by hundreds daily, drawing from hands-on experience with JavaScript, PHP, and Python.

All information on Decodo Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.