undetected_chromedriver: Guide to Avoid Detection Online

Standard Selenium ChromeDriver is blocked by most protected websites within the first few requests. Anti-bot services like Cloudflare, DataDome, and HUMAN (formerly PerimeterX) can detect automation flags, WebDriver properties, and browser fingerprint gaps before the first page finishes loading. The undetected_chromedriver library patches ChromeDriver to reduce these detection signals and works as a drop-in Selenium WebDriver replacement. This guide shows what actually gets flagged, how the patches work, and how to fill the gaps with proxies and behavioral techniques.

Justinas Tamasevicius

Last updated: Apr 23, 2026


TL;DR

- The undetected_chromedriver library patches ChromeDriver so you can replace webdriver.Chrome() with uc.Chrome() – the rest of your Selenium code stays the same

- Your IP address is not masked. Pair with residential proxies for protected sites

- Randomized delays, varied viewports, and mouse movements reduce pattern-based detection beyond what the browser patches cover

- Chrome DevTools Protocol and behavioral detection are not patched. If your target uses advanced Cloudflare or DataDome, consider Nodriver, SeleniumBase UC Mode, or Camoufox as alternatives

What is undetected_chromedriver and how does it work

The undetected_chromedriver library is an open-source Python package built on top of Selenium. It automatically downloads, patches, and launches a ChromeDriver binary with modifications that reduce detection by anti-bot services.

How anti-bot systems detect standard Selenium

The navigator.webdriver property returns true in automated Chrome sessions. Anti-bot scripts typically read this property on page load and may flag the session.

Chrome DevTools Protocol (CDP) flags appear when ChromeDriver connects to Chrome. Services like Cloudflare and DataDome detect the Runtime.Enable CDP call, which automation libraries trigger during initialization.

ChromeDriver injects cdc_ prefixed variables into the browser's window scope (for example, cdc_adoQpoasnfa76pfcZLmcfl_Array). Many anti-bot scripts scan for variable names containing cdc to identify automated sessions.

Browser fingerprint gaps can also trigger detection. Automated Chrome sessions may report unusual screen dimensions, missing GPU renderer info, or inconsistent timezone/language settings compared to the IP address location.

Behavioral analysis adds another detection layer. Modern anti-bot systems analyze mouse movement patterns, click timing, scroll speed, and navigation flow. Cloudflare deploys machine learning (ML) models trained on each website's real visitor behavior. Our guide on anti-bot systems covers each detection method in more detail.

What undetected_chromedriver patches

The library applies several patches to the ChromeDriver binary before launching Chrome.

It renames and removes the cdc_ prefixed strings inside the ChromeDriver binary so the injected window variables no longer match known patterns. Anti-bot scripts that scan for cdc in variable names should find no matches after patching.

It modifies the ChromeDriver binary to remove automation indicators from Chrome's launch flags. The --enable-automation flag and the AutomationControlled feature flag are both stripped before Chrome starts.

It sets a standard user agent string and manages browser profile settings to resemble a regular browsing session. And it auto-downloads the correct ChromeDriver version for your installed Chrome, so you avoid manual version matching.

These patches cover API-level and binary-level detection signals. CDP protocol detection and behavioral analysis require different approaches – covered in the limitations section.

Set up Python and install undetected_chromedriver

Verify these requirements, then install.

System requirements

- Python 3.8 to 3.11 – the PyPI release (v3.5.5) depends on distutils, which Python removed in version 3.12. If you run Python 3.12 or higher, install from the GitHub master branch instead (see below)

- Google Chrome browser – install the latest stable version

- Operating system – Windows, macOS, or Linux all work. Linux servers require additional dependencies for headless Chrome (xvfb, libgconf, libnss3)

No manual ChromeDriver download is needed. The library automates the download and patching.

Set up a virtual environment

Create a virtual environment:

Activate the environment based on your operating system:

Install the package

Check your Python version first – the install command depends on it:

Python 3.8-3.11 – install from PyPI:

Python 3.12 or higher – the PyPI release fails with ModuleNotFoundError: No module named 'distutils'. Install from the GitHub master branch instead:

Both commands install Selenium as a dependency.

Verify the installation:

Expected output:

Organize your scraper files

Organize your scraping code into separate files for maintainability:

For Selenium scraping fundamentals, see the guide on web scraping with Selenium and Python.

Verify the installation against a real anti-bot test

With the environment ready, verify against an actual detection test. This script visits a headless Chrome detection test page and checks whether undetected_chromedriver passes each signal:

Expected output:

Most lines should show "passed" or a non-suspicious value. WebDriver: missing (passed) indicates the library patched navigator.webdriver to return undefined instead of true. Plugins Length: 5 indicates Chrome is reporting browser plugins (the exact count depends on your Chrome installation).

If you see a SessionNotCreatedException, your Chrome version and ChromeDriver version don't match – see the troubleshooting section below.

The fingerprint test above verifies browser properties. For a real-world Cloudflare bypass test with data extraction, see the combined example in the proxy section – it scrapes Trustpilot (a protected review site) through a residential proxy.

Build your first undetected scraper

Now use it for actual data extraction. Books to Scrape (https://books.toscrape.com) is a scraping sandbox. The following code demonstrates CSS selectors, pagination, and JSON export patterns that apply to any target site.

Initialize the browser

The uc.Chrome() constructor works identically to Selenium's webdriver.Chrome() after initialization:

Expected output:

You use the same Selenium API (driver.get(), find_element(), find_elements()) after initialization.
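
The drop-in swap described above can be sketched as follows. This is a minimal illustration, assuming the package is installed and Chrome is available. The helper name fetch_title and its driver_factory parameter are hypothetical, not part of the library; the factory exists only so the function can be exercised without launching Chrome.

```python
def fetch_title(url, driver_factory=None):
    """Open url in a patched Chrome session and return the page title."""
    if driver_factory is None:
        # Imported lazily so this helper can be loaded without Chrome installed.
        import undetected_chromedriver as uc
        driver_factory = uc.Chrome  # used in place of selenium.webdriver.Chrome

    driver = driver_factory()
    try:
        driver.get(url)      # same call as in standard Selenium
        return driver.title  # same attribute as in standard Selenium
    finally:
        driver.quit()        # always release the Chrome process
```

Calling fetch_title("https://books.toscrape.com") would launch a patched Chrome window, load the sandbox site, and close the browser again even if the page load raises.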

Extract data with CSS selectors and XPath

WebDriverWait waits for elements to load before extracting – required for any page with dynamic content:

Expected output:

Handle pagination

Most scraping tasks require collecting data across multiple pages. Add a loop that follows the "next" button:

Expected output:

The extraction and pagination examples above use with uc.Chrome() as driver: – this ensures Chrome closes even on errors, preventing orphaned processes.
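
A sketch of the pagination loop, under a few assumptions: the selectors (article.product_pod, h3 a, p.price_color, li.next a) match the Books to Scrape markup, the function name scrape_books is hypothetical, and explicit waits are omitted because the sandbox serves static HTML. On dynamic pages, wrap the element lookups in WebDriverWait.

```python
from urllib.parse import urljoin

CSS = "css selector"  # the string value of selenium's By.CSS_SELECTOR


def scrape_books(driver, start_url, max_pages=3):
    """Collect title and price from each listing page, following 'next' links."""
    books = []
    url = start_url
    for _ in range(max_pages):
        driver.get(url)
        for card in driver.find_elements(CSS, "article.product_pod"):
            link = card.find_element(CSS, "h3 a")
            price = card.find_element(CSS, "p.price_color")
            books.append({"title": link.get_attribute("title"), "price": price.text})

        next_links = driver.find_elements(CSS, "li.next a")
        if not next_links:
            break  # no "next" button, so this was the last page
        # Resolve the link against the current URL in case it is relative.
        url = urljoin(url, next_links[0].get_attribute("href"))
    return books
```

Inside a with uc.Chrome() as driver: block, scrape_books(driver, "https://books.toscrape.com") returns a list of dictionaries that json.dump can write to disk directly.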

For CSS vs XPath comparison, see how to choose the right selector.

These extraction patterns work on any site. For protected targets, the next sections cover proxy integration and behavioral techniques that reduce block rates.

Advanced configuration and customization

Targeting specific Chrome versions, configuring headless mode, and customizing browser options gives you more control when the defaults aren't enough.

Target a specific Chrome version

If your production environment runs a specific Chrome version, pin undetected_chromedriver to match. This reduces the risk of breakage when Chrome auto-updates:

Expected output:

Find your installed Chrome version at chrome://version in the address bar, or run google-chrome --version on Linux. Version pinning is useful when testing compatibility before upgrading Chrome across your scraping infrastructure.
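
One way to wire version pinning together, as a sketch: parse the major version out of the text that google-chrome --version prints, then pass it as version_main. The helper names chrome_major_version and launch_pinned are hypothetical, and the sample version string in the docstring is only an illustration.

```python
import re


def chrome_major_version(version_output):
    """Extract the major version from text such as 'Google Chrome 124.0.6367.91'."""
    match = re.search(r"(\d+)\.\d+\.\d+\.\d+", version_output)
    if match is None:
        raise ValueError(f"no Chrome version found in {version_output!r}")
    return int(match.group(1))


def launch_pinned(version_output):
    """Start Chrome with ChromeDriver pinned to the detected major version."""
    import undetected_chromedriver as uc  # lazy import: only needed at launch
    return uc.Chrome(version_main=chrome_major_version(version_output))
```

Raising on an unparseable string is deliberate: a silent fallback to the latest ChromeDriver would reintroduce the version mismatch that pinning is meant to prevent.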

Customize Chrome options

Pass a uc.ChromeOptions() object to configure the browser window, language, GPU rendering, and other flags:

Expected output (exact values depend on your display resolution and OS):

On macOS with Retina displays, the OS may constrain the window to fit the screen, returning smaller values like {'width': 1470, 'height': 847}.

Useful arguments for scraping:

- --window-size=1920,1080 – sets a realistic desktop viewport

- --lang=en-US – matches your proxy's geographic location

- --disable-notifications – suppresses browser notification popups

- --disable-popup-blocking – prevents popup-related errors
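
The arguments listed above can be assembled in one place. The sketch below is illustrative: scraping_arguments and build_options are hypothetical helper names, and the default values are the ones from the list, not recommendations for every target.

```python
def scraping_arguments(width=1920, height=1080, lang="en-US"):
    """Return the Chrome flags from the list above as strings."""
    return [
        f"--window-size={width},{height}",
        f"--lang={lang}",
        "--disable-notifications",
        "--disable-popup-blocking",
    ]


def build_options(options=None, **kwargs):
    """Apply the flags to a ChromeOptions object (created on demand)."""
    if options is None:
        import undetected_chromedriver as uc  # lazy import: only needed at launch
        options = uc.ChromeOptions()
    for argument in scraping_arguments(**kwargs):
        options.add_argument(argument)
    return options
```

The result is passed to the constructor as uc.Chrome(options=build_options(lang="de-DE")), for example when the proxy exits in Germany.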

Specify a custom browser executable

If you have multiple Chrome installations (stable, beta, canary), point undetected_chromedriver to a specific binary:

On Linux, the path is typically /usr/bin/google-chrome-beta for the Beta channel or /usr/bin/google-chrome-unstable for the Dev channel.

Configure headless mode

Headless mode runs Chrome without a visible window, which reduces resource usage on servers. But headless mode increases detection risk because some anti-bot systems check for headless-specific browser properties:

Expected output:

The library includes patches for headless mode, but its maintainer marks headless as "unsupported". It may work for sites with moderate protection, but sites running advanced Cloudflare or DataDome configurations can still detect the headless environment. Use headed mode (the default) when stealth is the priority, and headless mode only when running on servers where a display isn't available.

On Linux servers, run your script with xvfb-run python scraper.py to create a virtual display for headed Chrome without a physical monitor. This approach can reduce detection risk while maintaining server compatibility.

Persist sessions with custom user data directories

By default, undetected_chromedriver creates a fresh profile each run. To persist cookies, login sessions, and localStorage across runs, specify a user data directory:

Reusing the same profile across many scraping runs may accumulate tracking cookies that increase detection risk. Clear the profile directory periodically for clean sessions. On Windows, use a compatible path like C:\temp\uc_profile instead of /tmp/.

Use extended API methods

The library adds 2 methods beyond the standard Selenium API. Both are available on any element returned by find_element() when using uc.Chrome():

- element.click_safe() – briefly disconnects the driver before clicking to avoid detection, then reconnects automatically. Useful when standard .click() triggers anti-bot checks or fails on overlapping elements

- parent.children() – returns child elements without writing a separate CSS or XPath selector

Both methods are UC-specific – they don't exist in standard Selenium.

Reduce detection with proxies, user agents, and behavioral techniques

The undetected_chromedriver library patches browser-level signals. But it doesn't hide your IP address, rotate user agents, or add human-like behavior. For sites with moderate to strong protection, you need all 3 layers working together.

Why proxies are required for protected sites

The library's README states clearly: "THIS PACKAGE DOES NOT hide your IP address". Many anti-bot services maintain blocklists of known datacenter IP ranges. Even residential IPs with poor reputation scores can trigger blocks on some sites. Rate limiting and IP-based blocking typically remain active regardless of browser fingerprint quality.

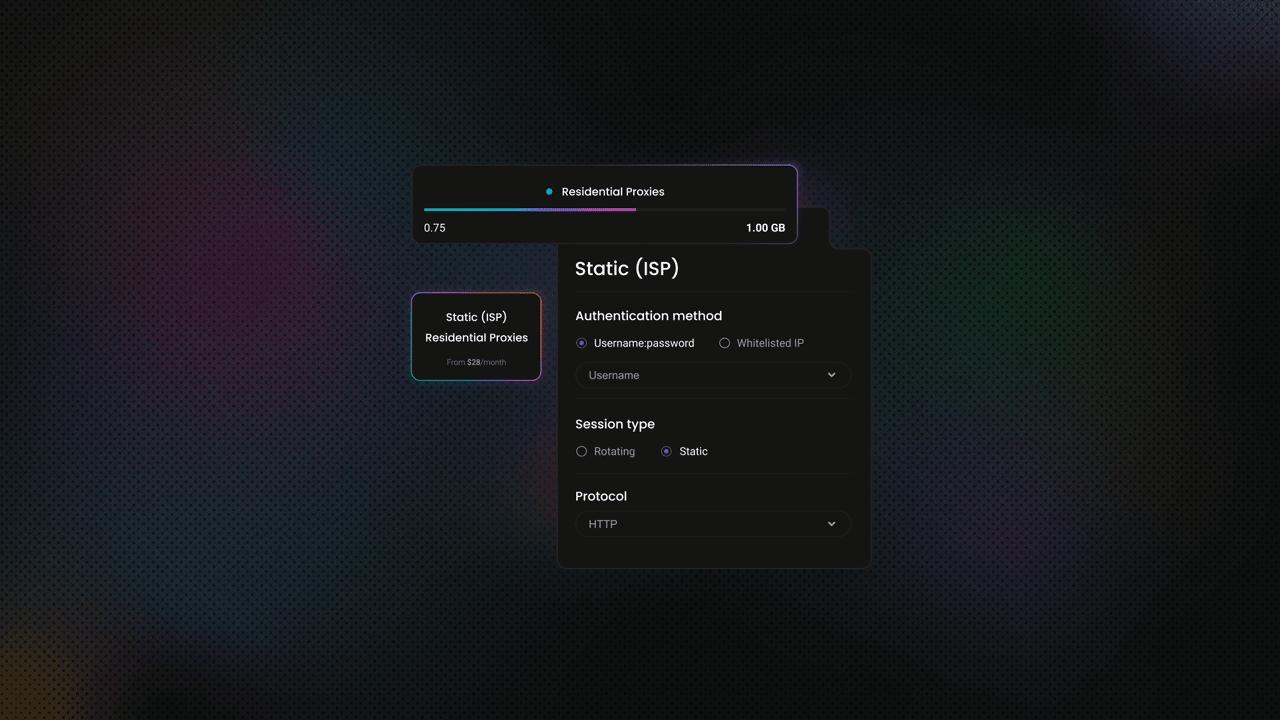

At Decodo, we offer residential proxies with a high success rate (99.86%), automatic rotation, a rapid response time (<0.6s), and extensive geo-targeting options (195+ worldwide locations). Here's how easy it is to get a plan and your proxy credentials:

- Create your account. Sign up at the Decodo dashboard.

- Select a proxy plan. Choose a subscription that suits your needs or start with a 3-day free trial.

- Configure proxy settings. Set up your proxies with rotating sessions for maximum effectiveness.

- Select locations. Target specific regions based on your data requirements or keep it set to Random.

- Copy your credentials. You'll need your proxy username, password, and server endpoint to integrate into your scraping script.


Configure a proxy with undetected_chromedriver

Pass the proxy address through Chrome's --proxy-server argument:

Expected output (with proxy active):

Replace PROXY_IP:PROXY_PORT with your actual proxy address. The --proxy-server flag works for IP-allowlisted proxies but doesn't support username:password authentication directly. Most proxy providers use authenticated access, so the next section covers that.

Use authenticated Decodo proxies with a Chrome extension

Chrome's --proxy-server flag doesn't accept a username:password@host:port format. The authentication limitation is a frequent obstacle developers encounter with proxy integration. One solution is a temporary Chrome extension that intercepts authentication requests and injects your credentials automatically. This approach works with any proxy provider that uses username:password auth:
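
A minimal sketch of such an extension builder, using only the standard library. The function name create_proxy_auth_extension follows the name used later in this guide, but its signature, the unpacked-directory layout, and the extension name "Proxy Auth" are assumptions made for illustration. The background script relies on the chrome.proxy and chrome.webRequest extension APIs.

```python
import json
import tempfile
from pathlib import Path

MANIFEST = {
    "manifest_version": 2,
    "name": "Proxy Auth",
    "version": "1.0.0",
    "permissions": ["proxy", "webRequest", "webRequestBlocking", "<all_urls>"],
    "background": {"scripts": ["background.js"]},
}

# %(name)s placeholders are filled with JSON-encoded values, so quotes in
# credentials cannot break out of the JavaScript string literals.
BACKGROUND_JS = """
chrome.proxy.settings.set({
  value: {
    mode: "fixed_servers",
    rules: {singleProxy: {scheme: "http", host: %(host)s, port: %(port)d}}
  },
  scope: "regular"
});
chrome.webRequest.onAuthRequired.addListener(
  function () {
    return {authCredentials: {username: %(user)s, password: %(password)s}};
  },
  {urls: ["<all_urls>"]},
  ["blocking"]
);
"""


def create_proxy_auth_extension(host, port, username, password, directory=None):
    """Write an unpacked extension to disk and return its directory path."""
    ext_dir = Path(directory or tempfile.mkdtemp(prefix="proxy_auth_"))
    ext_dir.mkdir(parents=True, exist_ok=True)
    (ext_dir / "manifest.json").write_text(json.dumps(MANIFEST, indent=2))
    script = BACKGROUND_JS % {
        "host": json.dumps(host),
        "port": int(port),
        "user": json.dumps(username),
        "password": json.dumps(password),
    }
    (ext_dir / "background.js").write_text(script)
    return str(ext_dir)
```

One common way to load the directory is options.add_argument(f"--load-extension={path}") before calling uc.Chrome(options=options). Whether a given Chrome build still honors that flag is worth verifying against your installed version. Delete the directory after the run, since it contains your credentials in plain text.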

The extension uses Manifest V2, which Chrome has been deprecating since 2024. As of early 2026, MV2 extensions loaded via ChromeDriver still work. If Chrome stops loading the extension in a future version, use IP allowlisting as a fallback.

The code above works with any proxy provider. Decodo residential proxies are one option – see the product page for pricing and account setup.

All requests go through port 7000 on gate.decodo.com. By default, each request is assigned a different IP from the pool. To keep the same IP across multiple requests (a sticky session), add a -session-XXXX parameter to the username string.

Sticky sessions last 10 minutes by default, configurable up to 1,440 minutes with the -sessionduration- parameter. For details, see the sticky session documentation.

Expected output (IP and ISP vary based on the proxy endpoint):

The Chrome version and IP address in the output depend on your installed Chrome and the proxy endpoint.

The session and geo-targeting parameters below use the user- prefix format (for curl and endpoint strings). The Chrome extension code above uses your raw username because it authenticates at a different level.

For sticky sessions, add -session-XXXX to the username (for example, user-YOUR_USERNAME-session-scraper1). For location-specific scraping, append -country-us-city-new_york. Combine both: user-YOUR_USERNAME-country-us-session-scraper1-sessionduration-90.

The password stays unchanged. For the full parameter reference, see the username authentication documentation.
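
The username format above is easy to get wrong by hand, so a small builder can help. This sketch assumes the parameter order shown in the examples (country, city, session, sessionduration); the function name build_proxy_username is hypothetical.

```python
def build_proxy_username(username, country=None, city=None,
                         session=None, duration_minutes=None):
    """Compose a username string in the user- prefix format described above."""
    parts = ["user", username]
    if country:
        parts += ["country", country]
    if city:
        parts += ["city", city]
    if session:
        parts += ["session", session]
    if duration_minutes is not None:
        parts += ["sessionduration", str(duration_minutes)]
    return "-".join(parts)


# Reproduces the combined example from the text:
# user-YOUR_USERNAME-country-us-session-scraper1-sessionduration-90
example = build_proxy_username(
    "YOUR_USERNAME", country="us", session="scraper1", duration_minutes=90
)
```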

To verify your proxy credentials before integrating, test with curl:

If the proxy is working, the response shows a JSON object with browser, ISP, and location data from the proxy IP, not your real IP.

Combined example: proxy + Cloudflare bypass + data extraction

The previous subsections covered proxy auth and detection bypass separately. This example combines them into one script using the create_proxy_auth_extension function from above:

Copy the create_proxy_auth_extension function from the previous section into your script, then add:

Expected output:

One browser session, 2 steps: verify the proxy is active, then scrape real review data from a protected page. The same selectors work for any company on Trustpilot – replace amazon.com in the URL with your target.

Rotate user agents

Anti-bot systems compare your user agent string against other browser fingerprint signals. A mismatched user agent (claiming an older Chrome version while your browser reports current features) can trigger detection. Keep user agent strings current and consistent with your Chrome version:

Expected output:

Update the Chrome version number in these strings to match your installed Chrome. Find your version at chrome://version or at whatismybrowser.com.
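
A sketch of user agent selection that keeps the version number tied to your installed Chrome. The templates follow the standard desktop Chrome format; the names UA_TEMPLATES, pick_user_agent, and user_agent_argument are hypothetical.

```python
import random

# Desktop Chrome user agent templates; {major} is filled with your Chrome version.
UA_TEMPLATES = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/{major}.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/{major}.0.0.0 Safari/537.36",
    "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/{major}.0.0.0 Safari/537.36",
]


def pick_user_agent(chrome_major, rng=random):
    """Return a random template with the given major version filled in."""
    return rng.choice(UA_TEMPLATES).format(major=chrome_major)


def user_agent_argument(chrome_major, rng=random):
    """Format the choice as a Chrome command-line flag."""
    return f"--user-agent={pick_user_agent(chrome_major, rng)}"
```

Pass the result to options.add_argument() before launching. If platform consistency matters for your target, trim the list to the operating system you actually run on, since a Windows user agent on a Linux host is itself a mismatch.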

Add behavioral delays and patterns

User agent rotation covers one fingerprint signal. Behavioral patterns are the other major detection layer. Modern anti-bot systems weigh behavioral signals as heavily as technical fingerprints. Fixed delays and linear mouse movements can create detectable patterns. Use randomized timing and vary your interaction patterns:

The key patterns to vary across sessions:

- Delay ranges – use random.uniform(2, 6) instead of fixed time.sleep(3)

- Viewport sizes – alternate between common resolutions (1920x1080, 1366x768, 1536x864)

- Scroll behavior – vary scroll distance and pause duration

- Session timing – avoid running at the exact same time daily
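
The patterns above can be sketched with a few small helpers. All names here (human_pause, random_viewport, scroll_steps) are hypothetical, and the 200 to 700 pixel scroll range is an arbitrary illustration, not a measured human baseline.

```python
import random
import time

VIEWPORTS = [(1920, 1080), (1366, 768), (1536, 864)]


def human_pause(low=2.0, high=6.0, sleep=time.sleep, rng=random):
    """Sleep for a random duration and return the delay that was used."""
    delay = rng.uniform(low, high)
    sleep(delay)
    return delay


def random_viewport(rng=random):
    """Pick one of the common desktop resolutions."""
    return rng.choice(VIEWPORTS)


def scroll_steps(page_height, rng=random):
    """Split a page into uneven scroll distances that sum to page_height."""
    steps, remaining = [], page_height
    while remaining > 0:
        step = min(remaining, rng.randint(200, 700))
        steps.append(step)
        remaining -= step
    return steps
```

With a live driver, these combine as driver.set_window_size(*random_viewport()) at startup, then for each step in scroll_steps(height): driver.execute_script("window.scrollBy(0, arguments[0]);", step) followed by a short human_pause(0.3, 1.2).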

Manage cookies between sessions

Session behavior also includes cookie handling. For sites that require login or track session state, save and restore cookies:

Expected output (cookie count depends on the target site):

Books to Scrape doesn't set cookies. On sites that use session tracking, login forms, or analytics, the cookie count varies by site.

Clear saved cookies periodically. Accumulating tracking cookies over many sessions may increase the chance that an anti-bot system identifies your scraping pattern.
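
A minimal sketch of saving and restoring cookies as JSON. It uses the standard Selenium methods get_cookies() and add_cookie(); the function names save_cookies and load_cookies and the file layout are assumptions.

```python
import json
from pathlib import Path


def save_cookies(driver, path):
    """Write the current session's cookies to a JSON file; return the count."""
    cookies = driver.get_cookies()
    Path(path).write_text(json.dumps(cookies))
    return len(cookies)


def load_cookies(driver, path):
    """Restore cookies from disk; return how many were added.

    Selenium only accepts cookies for the domain that is currently loaded,
    so call driver.get() on the target site before calling this function.
    """
    file = Path(path)
    if not file.exists():
        return 0  # first run: nothing saved yet
    cookies = json.loads(file.read_text())
    for cookie in cookies:
        driver.add_cookie(cookie)
    return len(cookies)
```

After load_cookies(), reload the page with driver.refresh() so the site sees the restored session. Deleting the JSON file is enough to start clean.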

For strategies on handling CAPTCHAs when they appear, see how to bypass CAPTCHAs.

Limitations and where undetected_chromedriver fails

The library has real limitations. Understanding them before you start saves hours of debugging.

Detection by advanced anti-bot systems

Advanced Cloudflare configurations (Bot Fight Mode, Managed Challenge) can still detect the library. Enterprise-grade solutions like Akamai Bot Manager and advanced DataDome setups analyze signals that undetected_chromedriver doesn't currently patch. These signals include TLS fingerprints (unique signatures in the TLS handshake that identify the client), CDP connection patterns, and behavioral anomalies.

Detection results vary by IP reputation, geographic location, and the target site's current protection level. The same code may pass on one run and fail on the next. This variability is itself a limitation – production scrapers need consistent results.

When detection does trigger, the most common failures are: NoSuchWindowException (browser window closed by the anti-bot system), CAPTCHA challenge pages, or HTTP 403 responses. If you see any of these after adding proxies and behavioral techniques, the target site likely uses CDP-level or behavioral detection that UC can't patch.

The ongoing detection vs. evasion cycle

Anti-bot companies actively study open-source bypass tools. The specific patches that undetected_chromedriver applies are publicly visible in its GitHub repository. What works against a site's anti-bot system today may become ineffective after the next update, which can happen at any time without notice.

A useful way to categorize current detection techniques is by what they target:

- Generation 1 – API-level checks like navigator.webdriver (undetected_chromedriver patches these)

- Generation 2 – CDP protocol detection via Runtime.Enable calls (undetected_chromedriver doesn't patch these)

- Generation 3 – TLS fingerprinting, behavioral analysis, and per-customer ML models (client-side libraries generally can't patch these)

The library patches Generation 1 signals. But Generation 2 and 3 detection requires different architectural approaches (Nodriver eliminates the WebDriver protocol layer) or managed solutions that handle detection at the network level.

Stability and resource usage

Each undetected_chromedriver instance runs a full Chrome browser.

Browser crashes can occur during long-running scraping sessions, especially when memory accumulates across hundreds of page loads. Memory leaks from non-closed tabs and JavaScript-heavy pages add up. Version mismatches between Chrome and the auto-downloaded ChromeDriver often cause SessionNotCreatedException errors after Chrome auto-updates.

On Linux servers, missing GUI dependencies (libgconf, libnss3, xvfb) can cause Chrome to fail at launch. Windows and macOS typically have fewer dependency issues but still face memory limits. Pin your Chrome version with version_main and use context managers (with uc.Chrome()) to prevent memory accumulation – both are covered in the configuration section.

Headless mode increases detection risk

Headless Chrome exposes different browser properties than headed Chrome. Some JavaScript APIs return different values, and the rendering pipeline produces detectable fingerprint differences. Anti-bot systems check for these headless-specific signals.

The trade-off: headless mode uses less memory and doesn't require a display server, but headed mode provides better stealth. On production servers without displays, consider xvfb-run to run headed Chrome in a virtual frame buffer.

IP address exposure

The library patches browser-level signals but doesn't modify your network connection. Running from a datacenter IP, a VPS, or a cloud instance with known hosting provider IP ranges often increases block rates significantly. Even home IPs with poor reputation (due to previous abuse from the same ISP range) may trigger blocks on sensitive sites.

IP exposure is a primary reason proxies are strongly recommended for production scraping.

Troubleshoot common errors

Each entry below addresses an error message pattern you may encounter.

ModuleNotFoundError: No module named 'undetected_chromedriver'

This error means the package isn't installed in your active Python environment. If the error mentions distutils instead of undetected_chromedriver, you're on Python 3.12+ – install from the GitHub master branch as described in the install section.

Otherwise, verify you have activated your virtual environment, then reinstall:

If you use poetry or conda, install with the corresponding package manager:

"This version of ChromeDriver only supports Chrome version X"

This mismatch typically happens when Chrome auto-updates but the cached ChromeDriver binary doesn't match. Update Chrome to the latest version, or pin undetected_chromedriver to your installed Chrome version:

Alternatively, specify a different Chrome binary path if you have multiple versions installed.

SessionNotCreatedException: could not start a new session

ChromeDriver failed to launch Chrome. Common causes and fixes:

On Linux, install the required dependencies:

If Chrome can't start, check that the Chrome binary exists and has execute permissions. Also verify that no other ChromeDriver process is already bound to the same port.

TimeoutException when loading pages

Page elements didn't load in the expected time. The timeout often indicates a slow proxy connection, network issue, or changed page structure:

Always use explicit waits (WebDriverWait) instead of implicit waits (driver.implicitly_wait()). Explicit waits target specific elements and give better error messages.

WebDriverException: unknown error – cannot connect to chrome

Chrome crashed or failed to start. Reduce concurrent instances, increase system resources, or add crash recovery:

Expected output:

For a production-grade version with exponential backoff and block detection, see the retry pattern in the best practices section. For general retry patterns, see retry failed Python requests.

Alternatives to undetected_chromedriver

When UC doesn't meet your requirements, consider these alternatives.

Nodriver – the same author's async successor

Nodriver is built by the same developer (ultrafunkamsterdam) as a ground-up replacement for undetected_chromedriver. It uses CDP to communicate with Chrome but eliminates the ChromeDriver binary and the WebDriver protocol layer. This avoids the Runtime.Enable detection vector because Nodriver controls which CDP commands are sent.

Nodriver is under active development. It requires Python 3.9+. Install with pip install nodriver.

Expected output:

The async API requires rewriting existing Selenium-based code. Nodriver isn't a Selenium-compatible wrapper.

For a full setup guide with proxy integration and concurrency patterns, see our guide on Nodriver for web scraping.

SeleniumBase UC Mode

SeleniumBase includes a UC Mode that adds the disconnect/reconnect pattern to undetected_chromedriver. During sensitive actions like page loads and clicks, UC Mode disconnects ChromeDriver from Chrome entirely, then reconnects after the action completes. Anti-bot systems that scan for active CDP connections during page load may find no active connection to flag.

UC Mode also provides built-in methods for Cloudflare Turnstile and reCAPTCHA: uc_gui_click_captcha() attempts to detect the CAPTCHA type and solve it using PyAutoGUI-based clicking. On headless Linux servers, combine with xvfb=True to enable GUI automation without a physical display.

SeleniumBase UC Mode is actively maintained, compared to undetected_chromedriver which hasn't had a PyPI release since early 2024. For UC users considering migration, the Selenium API (find_element, get, quit) stays the same – the uc_* methods are additions, not replacements.

Playwright with stealth plugins

Playwright supports Chromium, Firefox, and WebKit browsers. The playwright-stealth Python package applies evasion techniques like navigator property spoofing and WebDriver detection bypasses.

The multi-browser support in Playwright is useful when Firefox produces lower detection rates than Chromium for a specific target site. But stealth plugins come from the community, not from Microsoft. Updates may not keep pace with anti-bot changes.

Camoufox – Firefox-based anti-detection

The alternatives above primarily target Chromium, and their stealth patches work at the JavaScript or protocol level. Camoufox takes a different approach: it modifies Firefox at the binary level to spoof browser fingerprints, making automated sessions harder for anti-bot systems to identify. Because the patches operate below the JavaScript execution layer, they may be more resistant to detection than JS-level stealth plugins.

Camoufox is useful when Chromium-based tools consistently fail on a target site – switching to a Firefox fingerprint presents a different detection profile. Our guide on web scraping with Camoufox covers proxy configuration, session handling, and testing against protected sites.

Puppeteer with stealth plugins (Node.js)

For Node.js-based projects, puppeteer-extra-plugin-stealth adds evasion techniques to Puppeteer. It has a large npm download base and active community, but the package hasn't published a new version since 2023. Modern anti-bot systems may have adapted to some of its techniques, given the time since the last update.

Managed web scraping APIs

When self-managed browser automation becomes too resource-intensive or block rates stay high, managed APIs handle anti-bot bypass, proxy rotation, and CAPTCHA solving on the provider's infrastructure. The Decodo Web Scraping API is one option – see the API parameters documentation for details.

The API accepts POST requests to https://scraper-api.decodo.com/v2/scrape with Basic authentication. This Python example scrapes a page with JavaScript rendering enabled:

Expected output:

Set headless to "html" for JavaScript-rendered pages, or omit it for static HTML. The proxy_pool parameter accepts "standard" for general pages or "premium" for sites with anti-bot protection. For location-specific results, add the "geo" parameter (for example, "United States"). See the localization documentation for supported regions.

Managed APIs provide higher reliability but offer less control over browser behavior.

Tool comparison at a glance

| Tool | Language | Stealth | Maintenance | Best for |
| --- | --- | --- | --- | --- |
| Undetected ChromeDriver | Python | Medium | Infrequent | Quick prototypes, moderate protection |
| Nodriver | Python | High | Active | Async workflows, stronger stealth |
| SeleniumBase UC Mode | Python | Medium-High | Active | Full-featured scraping frameworks |
| Camoufox | Python | High | Active | Firefox fingerprint, binary-level stealth |
| Playwright + stealth | Python, Node.js | Medium | Community | Multi-browser needs |
| Puppeteer + stealth | Node.js | Medium | Inactive | JavaScript-heavy scraping |
| Decodo Web Scraping API | Any | Very High | Managed | Production reliability |

How to choose: If your target uses basic Cloudflare protection, UC may be sufficient. If you encounter CAPTCHAs or CDP-level detection, try SeleniumBase UC Mode. If Chromium-based tools consistently fail, try Camoufox (Firefox) or a managed API.

For a deeper comparison of the Playwright approach, see our guide on Playwright web scraping.

Best practices for production scraping

These patterns apply when running scrapers on a schedule or at scale.

Rate limiting and polite scraping

Aggressive request rates often trigger blocks and can disrupt target sites. Implement delays that respect the target site's capacity:
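
One possible shape for such a delay, sketched as a small class that enforces a minimum gap between requests plus random jitter. The class name PoliteLimiter and its default values are hypothetical; tune them to the target site.

```python
import random
import time


class PoliteLimiter:
    """Enforce a minimum, slightly randomized gap between requests."""

    def __init__(self, min_interval=2.0, jitter=1.5,
                 clock=time.monotonic, sleep=time.sleep, rng=random):
        self.min_interval = min_interval
        self.jitter = jitter
        self.clock = clock
        self.sleep = sleep
        self.rng = rng
        self._last = None  # time of the previous request, if any

    def wait(self):
        """Block until the next request is allowed; return seconds slept."""
        target = self.min_interval + self.rng.uniform(0, self.jitter)
        slept = 0.0
        if self._last is not None:
            remaining = target - (self.clock() - self._last)
            if remaining > 0:
                self.sleep(remaining)
                slept = remaining
        self._last = self.clock()
        return slept
```

Call limiter.wait() immediately before each driver.get(). Time already spent parsing the previous page counts toward the gap, so the scraper never sleeps longer than it needs to.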

Also check robots.txt before scraping. Respecting it is standard practice and can reduce the chance of IP-level blocks.
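
The robots.txt check can be done with Python's standard library. In this sketch the robots.txt body is passed in as text (fetched however you prefer), and the helper name allowed_by_robots is hypothetical.

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt, url, user_agent="*"):
    """Return True if the given robots.txt text permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Illustrative rules, not taken from any real site.
SAMPLE_RULES = "User-agent: *\nDisallow: /private/\n"
```

RobotFileParser can also download the file itself via set_url() and read(). Passing the text in keeps the check usable when the file is fetched through your proxy instead.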

Error handling and retries with exponential backoff

Network errors, temporary blocks, and page load failures are expected in production scraping. Implement retry logic with exponential backoff to handle transient failures:
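
A minimal sketch of retry with exponential backoff. The function name retry_with_backoff, the doubling schedule, and the 10% jitter are illustrative choices. The broad except clause is a placeholder that you should narrow to the errors you actually expect, such as Selenium's TimeoutException or WebDriverException.

```python
import random
import time


def retry_with_backoff(task, max_attempts=4, base=1.0, cap=60.0,
                       sleep=time.sleep, rng=random):
    """Run task(); on failure wait base, 2*base, 4*base, ... seconds and retry."""
    last_error = None
    for attempt in range(max_attempts):
        try:
            return task()
        except Exception as error:  # placeholder: narrow to expected errors
            last_error = error
            if attempt == max_attempts - 1:
                break  # out of attempts, re-raise below
            delay = min(cap, base * 2 ** attempt)
            # Up to 10% jitter so parallel workers do not retry in lockstep.
            sleep(delay + rng.uniform(0, delay * 0.1))
    raise last_error
```

Wrap a single page fetch rather than the whole scrape, for example retry_with_backoff(lambda: scrape_page(driver, url)), where scrape_page is a stand-in for your own extraction function. That way one bad page does not restart completed work.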

Expected output:

Resource management

Each Chrome instance consumes several hundred MB of RAM. Close browser instances explicitly, and use context managers to prevent orphaned processes:

For long-running scrapers, restart the browser instance periodically to prevent memory accumulation. Start with a restart every few dozen pages and adjust based on your memory usage patterns.
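
The periodic restart can be sketched as a loop that recycles the browser every N pages. The names scrape_in_batches, handle_page, and make_driver are hypothetical, and the default of 25 pages is only a starting point in line with the "few dozen" guidance above.

```python
def scrape_in_batches(urls, handle_page, make_driver, restart_every=25):
    """Visit each URL, replacing the browser every restart_every pages."""
    results = []
    driver = None
    try:
        for index, url in enumerate(urls):
            if index % restart_every == 0:
                if driver is not None:
                    driver.quit()  # release the old Chrome process
                    driver = None  # avoid a second quit() if the launch fails
                driver = make_driver()
            driver.get(url)
            results.append(handle_page(driver))
    finally:
        if driver is not None:
            driver.quit()  # runs on success and on error
    return results
```

With the library, make_driver can simply be uc.Chrome, or a small function that builds options first and returns uc.Chrome(options=options).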

Monitor success rates

Track your scraping success rate to detect when target sites update their anti-bot configuration:

Expected output:

If your success rate drops significantly from your baseline, check whether the target site updated its anti-bot configuration. Switching proxy types (datacenter to residential) or upgrading to a managed scraping API can help reduce persistent block rate increases.
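
A sketch of a rolling success-rate tracker. The class name SuccessTracker, the 100-request window, and the 80% alert threshold are assumptions for illustration; set the threshold relative to your own baseline.

```python
from collections import deque


class SuccessTracker:
    """Track the success rate over the most recent requests."""

    def __init__(self, window=100, alert_below=0.8):
        self.results = deque(maxlen=window)  # old entries drop off automatically
        self.alert_below = alert_below

    def record(self, ok):
        """Store the outcome of one request (True for success)."""
        self.results.append(bool(ok))

    @property
    def rate(self):
        """Fraction of successful requests in the window (1.0 when empty)."""
        if not self.results:
            return 1.0
        return sum(self.results) / len(self.results)

    def degraded(self, min_samples=20):
        """True once enough samples exist and the rate is below the threshold."""
        return len(self.results) >= min_samples and self.rate < self.alert_below
```

Record a failure whenever a page returns a CAPTCHA, an HTTP 403, or a timeout, and check degraded() between batches to decide when to pause or switch proxies.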

Bottom line

The undetected_chromedriver library modifies browser-level detection markers, which can help reduce detection on sites with moderate protection. For production use, add residential proxies for IP-level protection and behavioral randomization for pattern-based detection. Retry logic handles transient failures.

For sites where the library's block rates stay too high, the next step depends on your scale. For async workflows with better stealth, migrate to Nodriver. For a full-featured Python framework with built-in CAPTCHA handling, evaluate SeleniumBase UC Mode.

For production workloads where uptime matters more than browser control, managed scraping APIs handle anti-bot bypass at the infrastructure level.


About the author

Justinas Tamasevicius

Director of Engineering

Justinas Tamaševičius is Director of Engineering with over two decades of expertise in software development. What started as a self-taught passion during his school years has evolved into a distinguished career spanning backend engineering, system architecture, and infrastructure development.


All information on Decodo Blog is provided on an as is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.