How to Scrape Websites with PowerShell: A Complete Guide

PowerShell is already where many Windows admins, DevOps teams, and automation-minded developers handle repetitive work. That makes web scraping a natural next step when you need product prices, uptime signals, public data for reports, or quick checks from the terminal. PowerShell works well here because output is pipeable, objects are native, and CSV and JSON exports are built in. In this guide, you'll build a scraper that fetches pages, parses HTML, handles pagination and errors, uses proxies when needed, and exports structured data.

Justinas Tamasevicius

Last updated: May 04, 2026

TL;DR

- Use PowerShell 7 for new scraping projects. It runs on Windows, macOS, and Linux, and its web cmdlets use the modern .NET HTTP stack.

- Use Invoke-WebRequest when you need the full response object, headers, status code, and HTML content.

- Use Invoke-RestMethod when the target is a JSON or XML API, and you want PowerShell to deserialize the response automatically.

- Use PSParseHTML for CSS selector-based HTML parsing in PowerShell 7.

- Use Selenium only when the page needs a browser-rendered DOM. It works, but the PowerShell module has maintenance and driver-version friction.

- Add retries, explicit timeouts, realistic headers, delays, and proxy support before you run a scraper repeatedly.

Setting up PowerShell for web scraping

Before writing scraping code, get the runtime and modules right.

PowerShell 7 vs. Windows PowerShell 5.1

PowerShell 7 runs on Windows, macOS, and Linux, and it uses the newer .NET networking stack behind its web cmdlets. That matters when your scraper needs current TLS behavior, consistent HTTP handling, and the option to run the same job on a laptop, server, or CI runner.

PowerShell 7 also installs side by side with Windows PowerShell 5.1, so you can keep old automation scripts untouched while running new scraping scripts with pwsh.exe instead of powershell.exe.

Check your version:
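A minimal check, assuming PowerShell 7 is installed under its default command name pwsh. The first line asks the PowerShell 7 executable for its version, and the rest inspects whichever shell you are currently typing in:

```powershell
# Ask the PowerShell 7 executable for its version (fails if pwsh isn't installed).
pwsh --version

# Inspect the shell that is running right now.
$PSVersionTable.PSVersion

# 'Core' means PowerShell 6 or later; 'Desktop' means Windows PowerShell 5.1.
$PSVersionTable.PSEdition
```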

If the command isn't found, install PowerShell 7. On Windows clients, use winget:

You can also download the MSI installer from the PowerShell GitHub releases page if winget isn't available on your machine.

The main scraping difference between the two versions is HTML parsing. Windows PowerShell 5.1 used Internet Explorer components for .ParsedHtml in Invoke-WebRequest. PowerShell 7 removed that dependency. Instead, fetch HTML with PowerShell's web cmdlets and parse it with a dedicated module such as PSParseHTML.

Fixing execution policy on Windows

Execution policy controls when PowerShell loads scripts and configuration files. It's a safety feature that only applies to Windows.

If Windows blocks your local .ps1 script, set the policy for your current user:
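A sketch of that change, assuming you want RemoteSigned for the current user only. The platform guard is an addition for illustration, so the same snippet can sit in a cross-platform setup script without failing on Linux or macOS:

```powershell
# $IsWindows exists only in PowerShell 6+; the 'Desktop' edition is always Windows.
$onWindows = ($PSVersionTable.PSEdition -eq 'Desktop') -or $IsWindows

if ($onWindows) {
    # Inspect the policy at every scope before changing anything.
    Get-ExecutionPolicy -List

    # Allow locally written scripts for the current user only.
    Set-ExecutionPolicy -ExecutionPolicy RemoteSigned -Scope CurrentUser -Force
}
```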

RemoteSigned lets scripts you write locally run and requires scripts downloaded from the internet to be signed by a trusted publisher or unblocked. You usually don't need admin rights with -Scope CurrentUser. Skip this step on Linux and macOS.

Installing PSParseHTML

PSParseHTML gives you ConvertFrom-Html and related HTML utilities. The current module supports both AgilityPack and AngleSharp parsing engines. Use -Engine AngleSharp when you want browser-style DOM methods such as QuerySelector() and QuerySelectorAll(), which makes selectors feel familiar if you already inspect pages in DevTools.

Install PSParseHTML from the PowerShell Gallery:
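A typical install, assuming the PowerShell Gallery is reachable from your machine and registered as a trusted repository (otherwise PowerShell prompts for confirmation):

```powershell
# Install for the current user so no elevated shell is required.
Install-Module -Name PSParseHTML -Scope CurrentUser

# Load the module and confirm that ConvertFrom-Html is available.
Import-Module PSParseHTML
Get-Command -Module PSParseHTML -Name ConvertFrom-Html
```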

PowerHTML is a practical alternative when you prefer an HtmlAgilityPack-based parser or XPath-heavy workflows. If you're choosing between them, PSParseHTML is usually more convenient for CSS selectors, while PowerHTML is worth considering when your team already thinks in XPath.

Project structure

Start with a small project structure:
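One way to scaffold it from the terminal. The folder names hn-scraper and output are hypothetical; only the three script names and the .env file come from this guide:

```powershell
# Hypothetical project root; rename it to suit your project.
$root = Join-Path (Get-Location) 'hn-scraper'

# Create the root and an output folder (-Force makes this safe to re-run).
New-Item -ItemType Directory -Path (Join-Path $root 'output') -Force | Out-Null

# Create empty script files plus a .env file, skipping any that already exist.
foreach ($name in 'scraper.ps1', 'models.ps1', 'export.ps1', '.env') {
    $file = Join-Path $root $name
    if (-not (Test-Path -LiteralPath $file)) {
        New-Item -ItemType File -Path $file | Out-Null
    }
}

# Show the resulting layout (-Force includes the dot-file on Unix-like systems).
Get-ChildItem -Path $root -Force | Select-Object -ExpandProperty Name
```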

Put request and parsing logic in scraper.ps1, reusable object shapes in models.ps1, and output functions in export.ps1. You can merge them while learning, but separate files keep recurring jobs easier to maintain.

Don't hardcode proxy credentials, API keys, or account details in scripts. Instead, keep them in environment variables, for example in a .env file that you exclude from version control:

Then read them inside the script. That keeps secrets out of Git history and pasted code snippets.
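PowerShell doesn't load .env files on its own, so a small loader is needed. The sketch below assumes simple KEY=value lines. Import-DotEnv is a hypothetical helper name, not a built-in cmdlet, and the variable names are placeholders:

```powershell
# Hypothetical helper: copy KEY=value pairs from a .env file into the process environment.
function Import-DotEnv {
    param([string]$Path = '.env')

    if (-not (Test-Path -LiteralPath $Path)) { throw "Env file not found: $Path" }

    foreach ($line in Get-Content -LiteralPath $Path) {
        $trimmed = $line.Trim()
        # Skip blank lines and comments.
        if ($trimmed -eq '' -or $trimmed.StartsWith('#')) { continue }

        # Split on the first '=' only, so values may contain '=' themselves.
        $name, $value = $trimmed -split '=', 2
        if ([string]::IsNullOrWhiteSpace($name) -or $null -eq $value) { continue }

        # Strip optional surrounding quotes and set the variable for this process only.
        $value = $value.Trim().Trim('"').Trim("'")
        [Environment]::SetEnvironmentVariable($name.Trim(), $value, 'Process')
    }
}
```

After Import-DotEnv runs, the script reads values as $env:PROXY_USER or $env:PROXY_PASS, or whatever names your file defines.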

Fetching and parsing HTML content

PowerShell gives you 2 built-in web cmdlets: Invoke-WebRequest and Invoke-RestMethod. They overlap, but they aren't interchangeable once you care about response metadata.

Invoke-WebRequest vs. Invoke-RestMethod: When to use which

Invoke-WebRequest sends HTTP(S) requests and returns a response object with properties such as StatusCode, Headers, Content, Links, and Images. Use it when you're scraping HTML or debugging how a server responds.

Invoke-RestMethod also sends HTTP(S) requests, but it's built for REST services that return structured data. For JSON and XML, PowerShell deserializes the response into objects for you.

| Target type | Recommended cmdlet | Why |
| --- | --- | --- |
| Static HTML page | Invoke-WebRequest | You get status, headers, raw content, and link metadata. |
| JSON API | Invoke-RestMethod | JSON is automatically converted into PowerShell objects. |
| XML API | Invoke-RestMethod | XML is parsed into usable nodes. |
| Raw HTML fetch only | Either | Invoke-WebRequest is still better when you need diagnostics. |
| Troubleshooting blocks | Invoke-WebRequest | Headers and status codes are easier to inspect. |

Fetching a page with Invoke-WebRequest

Use Hacker News as the demo target because it serves stable static HTML and exposes obvious repeating story rows.
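A minimal fetch could look like the sketch below. New-PageRequest and Get-Page are hypothetical helper names, and the user agent string and Accept-Language value are example values rather than requirements:

```powershell
# Build the request parameters once so they can be splatted and inspected.
function New-PageRequest {
    param([Parameter(Mandatory)][string]$Uri)
    @{
        Uri       = $Uri
        Method    = 'Get'
        # Example desktop browser user agent; swap in one that matches your setup.
        UserAgent = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36'
        Headers   = @{ 'Accept-Language' = 'en-US,en;q=0.9' }
    }
}

# Fetch a page, print the status code and a short header preview, return the response.
function Get-Page {
    param([Parameter(Mandatory)][string]$Uri)

    $request  = New-PageRequest -Uri $Uri
    $response = Invoke-WebRequest @request

    Write-Host "Status: $($response.StatusCode)"
    $response.Headers.GetEnumerator() |
        Select-Object -First 5 |
        Format-Table -AutoSize |
        Out-Host

    $response
}
```

Call it with $response = Get-Page -Uri 'https://news.ycombinator.com/' and keep $response around for the parsing steps.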

The output should show status code 200 and a condensed preview of the response headers. That confirms the request worked and that the response object carries metadata you can inspect.

Set a custom user agent, because PowerShell's default user agent identifies the PowerShell client. That's fine for normal automation, but it's an easy signal for sites that filter scrapers. Use a realistic user agent and keep your request volume sane.

Parsing HTML with PSParseHTML

Fetching the page gives you a string in $response.Content. Parsing turns that raw HTML into a DOM, a tree representation of an HTML page that lets you select elements by tag, class, ID, or relationship.

Convert the response content into a DOM:

Use QuerySelector() for a single element. It returns the first match or $null:

On the other hand, use QuerySelectorAll() for multiple elements. It returns an AngleSharp collection. Use its .Length property when you need the number of matches. Always null-check before reading .TextContent or attributes because sites change markup without asking your scraper for permission:
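The three steps above can be sketched as follows, assuming PSParseHTML is installed and that ConvertFrom-Html accepts the raw HTML through -Content. Get-NodeText and Get-StoryTitles are hypothetical helper names:

```powershell
# Return trimmed text for a node, or $null when the selector matched nothing.
function Get-NodeText {
    param($Node)
    if ($null -eq $Node) { return $null }
    $Node.TextContent.Trim()
}

# Parse raw HTML and emit every story title on the page.
function Get-StoryTitles {
    param([Parameter(Mandatory)][string]$Html)

    # The AngleSharp engine exposes QuerySelector() and QuerySelectorAll().
    $document = ConvertFrom-Html -Content $Html -Engine AngleSharp

    # Single element: the first match, or $null.
    $first = $document.QuerySelector('.titleline > a')
    Write-Host "First title: $(Get-NodeText $first)"

    # Multiple elements: a collection with a .Length property.
    $links = $document.QuerySelectorAll('.titleline > a')
    Write-Host "Matches: $($links.Length)"

    foreach ($link in $links) { Get-NodeText $link }
}
```

With a fetched page in $response, Get-StoryTitles -Html $response.Content returns the titles as plain strings.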

For JavaScript-rendered pages, this pattern won't be enough because Invoke-WebRequest doesn't execute JavaScript.

Selecting and extracting specific data

After fetching and parsing the page, the next step is turning that HTML into usable data. This is where you identify the right selectors, extract the fields you care about, and shape the results into structured PowerShell objects.

Inspecting the target with browser DevTools

Open the target page in a browser, right-click the data you want, and choose Inspect. Look for the smallest stable container that wraps 1 complete item.

On Hacker News, each story row is a table row with the class athing. The title link sits under .titleline > a. The points, author, age, and comments live in the next row under .subtext.

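Before writing PowerShell, test selectors in the browser console with document.querySelectorAll(). For example, document.querySelectorAll('tr.athing').length counts the story rows on the page.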

If a selector fails in the browser, it won't magically work in PowerShell. Fix it before you code around it.

Extracting multiple data points from a listing

This example extracts the title, URL, points, author, comment count, and scrape timestamp from Hacker News:
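A sketch of that extraction, assuming the Hacker News markup described above plus two class names taken from the live page at the time of writing (.score and .hnuser). Both function names are hypothetical, and the selectors may need updating if the markup changes:

```powershell
# Pull the first integer out of text such as "994 points"; $null when there is none.
function ConvertTo-IntOrNull {
    param([string]$Text)
    if ($Text -match '(\d+)') { return [int]$Matches[1] }
    return $null
}

function Get-HnStories {
    param([Parameter(Mandatory)][string]$Html)

    $document = ConvertFrom-Html -Content $Html -Engine AngleSharp

    foreach ($row in $document.QuerySelectorAll('tr.athing')) {
        $titleLink = $row.QuerySelector('.titleline > a')
        if ($null -eq $titleLink) { continue }   # Skip rows without a title link.

        # Points, author, and comments live in the row that follows the story row.
        $next = $row.NextElementSibling
        $sub  = if ($null -ne $next) { $next.QuerySelector('.subtext') } else { $null }

        $score = $null; $user = $null; $commentLink = $null
        if ($null -ne $sub) {
            $score = $sub.QuerySelector('.score')
            $user  = $sub.QuerySelector('.hnuser')
            $commentLink = $sub.QuerySelectorAll('a') |
                Where-Object { $_.TextContent -match '\d+\s*comment' } |
                Select-Object -First 1
        }

        [pscustomobject]@{
            Title     = $titleLink.TextContent.Trim()
            Url       = $titleLink.GetAttribute('href')
            Points    = if ($null -ne $score) { ConvertTo-IntOrNull $score.TextContent } else { $null }
            Author    = if ($null -ne $user) { $user.TextContent.Trim() } else { $null }
            Comments  = if ($null -ne $commentLink) { ConvertTo-IntOrNull $commentLink.TextContent } else { $null }
            ScrapedAt = (Get-Date).ToUniversalTime().ToString('o')
        }
    }
}
```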

There are 2 important details worth mentioning here:

- Optional fields must be null-checked. Some listings won't have points, authors, prices, ratings, or comments.

- Numeric values are cast to integers. The raw page says "994 points", but your output should store 994 as a number, not as a sentence fragment.

Modeling data with PSCustomObject

PSCustomObject is the standard lightweight shape for structured PowerShell output. You define explicit field names, cast values where useful, and let PowerShell handle the rest.

Typed fields make exports cleaner. CSV columns stay predictable. JSON consumers get stable property names. Your downstream code can compare integers without parsing strings again.

For small pages, collecting objects with foreach is fine. For larger runs, use a generic list so appending stays efficient:
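A short illustration with placeholder records. In a real run, the objects would come from your parser rather than a counter:

```powershell
# Generic list: Add() appends in place instead of rebuilding the collection.
$stories = [System.Collections.Generic.List[object]]::new()

foreach ($i in 1..1000) {
    # Placeholder records standing in for parsed items.
    $stories.Add([pscustomobject]@{
        Title  = "Story $i"
        Points = [int]($i * 3)
    })
}

"Collected $($stories.Count) items"

# Points stay numeric, so comparisons need no string parsing.
$topStories = @($stories | Where-Object { $_.Points -gt 2990 })
"Stories above 2990 points: $($topStories.Count)"
```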

Avoid $array += $item inside large loops. It looks clean, but PowerShell creates a new array and copies every existing element on each iteration, so the loop slows down as the array grows.

Handling pagination and multiple items

There are 2 common pagination patterns. Some sites expose predictable URLs, such as ?p=1 and ?p=2. Others expose a "More" or "Next" link, and your scraper needs to follow it until it disappears.

URL-pattern pagination

When the URL pattern is obvious, a for loop is usually enough:
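One possible shape for that loop. Invoke-PagedScrape is a hypothetical helper that takes the fetch-and-parse step as a script block, which keeps the paging logic separate from the site-specific code:

```powershell
function Invoke-PagedScrape {
    param(
        [Parameter(Mandatory)][string]$UrlTemplate,   # For example 'https://news.ycombinator.com/news?p={0}'
        [Parameter(Mandatory)][scriptblock]$Fetch,    # Receives a URL, returns the parsed items.
        [int]$MaxPages = 5,
        [double]$DelaySeconds = 2
    )

    $all = [System.Collections.Generic.List[object]]::new()

    for ($page = 1; $page -le $MaxPages; $page++) {
        $url   = $UrlTemplate -f $page
        $items = @(& $Fetch $url)

        # Stop on the first empty page instead of looping until a request fails.
        if ($items.Count -eq 0) { break }

        $all.AddRange($items)

        # Pause between pages, but not after the last one.
        if ($page -lt $MaxPages) {
            Start-Sleep -Milliseconds ([int]($DelaySeconds * 1000))
        }
    }

    # Emit items one by one so callers can pipe them onward.
    $all
}
```

The -Fetch block is where Invoke-WebRequest and your parsing function go, for example { param($u) Get-HnStories -Html (Invoke-WebRequest -Uri $u).Content } if you have defined such a parser.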

The stop condition depends on the site. Use an empty result set, a "no results" message, or a known page count. Don't loop until failure if the page gives you a cleaner signal.

The delay matters too. Start-Sleep -Seconds 2 isn't a magic number. It's a reminder that a scraper shouldn't hammer a server just because a loop can run quickly.

Link-following pagination (next page detection)

Link-following pagination is a better fit when the next URL isn't predictable. Hacker News exposes a "More" link with class morelink.
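A sketch of the link-following loop, assuming PSParseHTML for parsing. Resolve-NextUrl and Get-AllPages are hypothetical names, and the loop emits raw story rows where a real scraper would map them to objects:

```powershell
# Resolve a relative href such as '?p=2' against the page it was found on.
function Resolve-NextUrl {
    param([string]$BaseUrl, [string]$Href)
    if ([string]::IsNullOrWhiteSpace($Href)) { return $null }
    ([uri]::new([uri]$BaseUrl, $Href)).AbsoluteUri
}

function Get-AllPages {
    param(
        [Parameter(Mandatory)][string]$StartUrl,
        [int]$MaxPages = 5
    )

    $url = $StartUrl
    $pageCount = 0

    while ($url -and $pageCount -lt $MaxPages) {
        $pageCount++
        $response = Invoke-WebRequest -Uri $url
        $document = ConvertFrom-Html -Content $response.Content -Engine AngleSharp

        # Emit this page's story rows.
        $document.QuerySelectorAll('tr.athing')

        # Follow the "More" link; stop when it is no longer on the page.
        $more = $document.QuerySelector('a.morelink')
        $url  = if ($null -ne $more) {
            Resolve-NextUrl -BaseUrl $url -Href $more.GetAttribute('href')
        } else { $null }

        # Be polite between pages.
        if ($url) { Start-Sleep -Seconds 2 }
    }
}
```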

This approach follows the site instead of assuming how the site works. The example caps the run at 5 pages, so a copied script doesn't crawl indefinitely; remove $maxPages when you intentionally want to continue until the next-page link disappears.

Collecting results across pages

For multi-page runs, use a list and log progress:
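A small helper along these lines keeps the list handling and the log line in one place. Add-PageResult is a hypothetical name:

```powershell
# Shared list that accumulates items from every page.
$allItems = [System.Collections.Generic.List[object]]::new()

# Append one page of results and log the per-page and running totals.
function Add-PageResult {
    param(
        [System.Collections.Generic.List[object]]$List,
        [Parameter(Mandatory)][int]$Page,
        [object[]]$Items = @()
    )

    if ($Items.Count -gt 0) { $List.AddRange($Items) }

    Write-Host ('Page {0}: {1} items (running total {2})' -f $Page, $Items.Count, $List.Count)

    # An empty page is usually a broken selector or a block, so make it loud.
    if ($Items.Count -eq 0) {
        Write-Warning "Page $Page returned no items; check selectors or blocking."
    }
}
```

Inside the paging loop, call Add-PageResult -List $allItems -Page $page -Items $items after each parse.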

That basic visibility saves time. If a selector breaks on page 7, you want the terminal to tell you where the drop happened.

Scraping JavaScript-rendered content

Invoke-WebRequest sends HTTP requests and receives HTTP responses. It doesn't run JavaScript, wait for client-side data fetching, click buttons, or build the final rendered DOM.

Why Invoke-WebRequest fails on JS-heavy pages

Many modern pages return a thin HTML shell first. JavaScript then calls APIs with fetch() or XHR, receives data, and renders the UI in the browser.

When you run this:
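For example, a quick heuristic check could look like this. Both function names are hypothetical, and the marker is whatever snippet of markup you expect around the data, such as a class attribute copied from DevTools:

```powershell
# True when the raw HTML already contains the marker text.
function Test-RawHtmlContains {
    param([string]$Html, [string]$Marker)
    $Html -match [regex]::Escape($Marker)
}

# True when the marker is missing from the raw response, which suggests that
# the data is rendered client-side. A marker that only appears inside an inline
# script still counts as present, so treat the result as a hint, not proof.
function Test-PageNeedsJavaScript {
    param(
        [Parameter(Mandatory)][string]$Uri,
        [Parameter(Mandatory)][string]$Marker
    )
    $response = Invoke-WebRequest -Uri $Uri
    -not (Test-RawHtmlContains -Html $response.Content -Marker $Marker)
}
```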

PowerShell only sees the raw server response. If the browser shows quotes but $response.Content doesn't contain the rendered quote elements, the target is probably JavaScript-rendered.

Confirm that before changing code. Compare the raw HTML from PowerShell with the rendered DOM in DevTools. If the data appears only after JavaScript runs, use a browser automation tool or a managed scraping service.

Using the Selenium PowerShell module

Selenium controls a real browser through WebDriver. Install the Selenium PowerShell module:

Note that newer Selenium 4 builds for PowerShell can still be a little rough, and browser-driver compatibility can be an issue. If the browser updates and the bundled driver doesn't match, replace the driver manually or point the module at a matching driver directory.

A simple headless Firefox flow against a JavaScript-rendered demo page looks like this:
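The sketch below assumes the 4.x prerelease of the Selenium module, where Start-SeDriver, Get-SeElement, and Stop-SeDriver are the main cmdlets. Parameter names can differ between module versions, so check Get-Help for the version you installed. The '.quote .text' selector and the Get-RenderedQuotes name are hypothetical:

```powershell
# One-time setup (assumption: the prerelease build provides Start-SeDriver):
# Install-Module -Name Selenium -AllowPrerelease -Scope CurrentUser

function Get-RenderedQuotes {
    param(
        [Parameter(Mandatory)][string]$Uri,
        [int]$TimeoutSeconds = 15
    )

    Import-Module Selenium

    # Launch Firefox without a visible window and open the page.
    $driver = Start-SeDriver -Browser Firefox -State Headless -StartURL $Uri
    try {
        # Explicit wait: poll up to $TimeoutSeconds for the rendered elements.
        $quotes = Get-SeElement -Driver $driver -By CssSelector -Value '.quote .text' -Timeout $TimeoutSeconds
        foreach ($quote in $quotes) { $quote.Text }
    }
    finally {
        # Always close the browser, even when the wait times out.
        Stop-SeDriver -Driver $driver
    }
}
```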

Use explicit waits such as Get-SeElement -Timeout 15 before selecting dynamic content. Blind sleeps are easy to write, but annoying to debug.

When to use a managed alternative

Local Selenium is fine for a small dynamic scrape or a debugging task. It becomes expensive when you need concurrency, browser lifecycle management, driver updates, JavaScript rendering, retries, and proxy rotation at the same time.

For those targets, Decodo's Web Scraping API can handle JavaScript rendering server-side, so your PowerShell script sends a request and receives rendered or structured output without running a browser locally.

If your Decodo setup exposes a proxy-style endpoint, you can keep the same PowerShell -Proxy pattern shown later. If you're using the API endpoint directly, call it with Invoke-RestMethod and keep browser infrastructure out of your script.

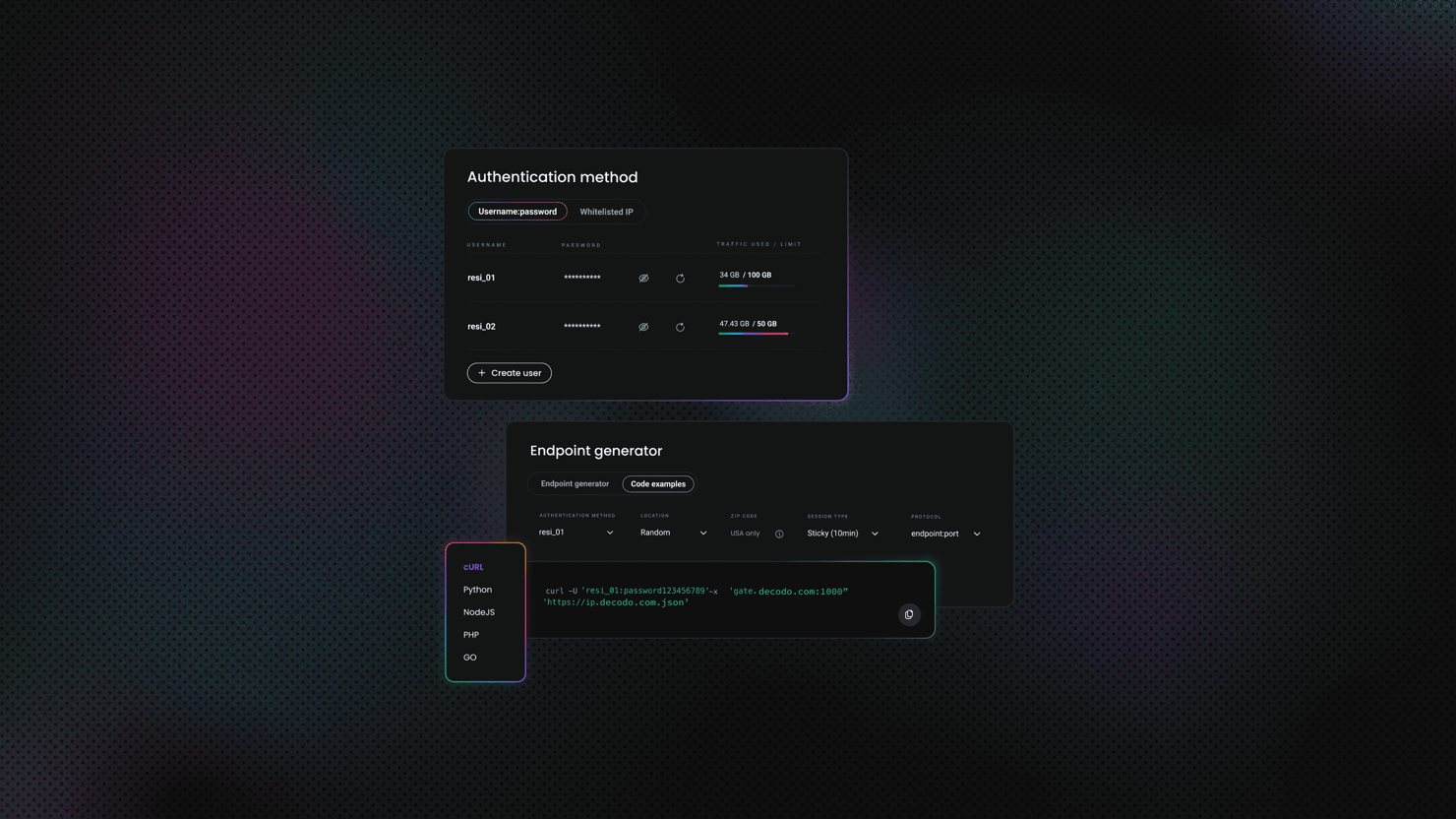

PowerShell hits, proxies rotate

Your script handles the logic. Decodo handles the part where websites pretend you don't exist. Residential proxies, anti-bot bypass, one API.

Error handling and troubleshooting

Timeouts, HTTP errors, empty responses, and broken selectors are just a normal part of scraping, so your script needs to handle them without falling apart.

Common errors and what causes them

HTTP 4xx and 5xx responses are the first category. Invoke-WebRequest throws a terminating error for non-success HTTP responses unless you use -SkipHttpErrorCheck, which is available in PowerShell 7 and later. Catch those errors and inspect the response status code:
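A sketch of that pattern. The helper names are hypothetical, and the grouping of status codes is one reasonable choice rather than a rule:

```powershell
# Extract the numeric HTTP status from a caught error record, or $null when the
# failure happened before any response arrived (DNS failure, refused connection).
function Get-HttpStatusCode {
    param($ErrorRecord)
    $response = $ErrorRecord.Exception.Response
    if ($null -eq $response) { return $null }
    [int]$response.StatusCode
}

function Get-PageOrNull {
    param([Parameter(Mandatory)][string]$Uri)
    try {
        Invoke-WebRequest -Uri $Uri -ErrorAction Stop
    }
    catch {
        $err    = $_
        $status = Get-HttpStatusCode $err

        if ($null -eq $status) {
            Write-Warning "No HTTP response from ${Uri}: $($err.Exception.Message)"
        }
        elseif ($status -eq 403 -or $status -eq 429) {
            Write-Warning "Blocked or rate limited ($status): $Uri"
        }
        elseif ($status -ge 500) {
            Write-Warning "Server error ($status): $Uri"
        }
        else {
            Write-Warning "HTTP $status from $Uri"
        }
        $null
    }
}
```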

SSL and TLS errors usually come from self-signed certificates, corporate inspection proxies, old servers, or legacy Windows PowerShell 5.1 environments with outdated TLS defaults. In PowerShell 7, -SkipCertificateCheck skips certificate validation. Use it only against known test hosts with self-signed certificates.

In Windows PowerShell 5.1, older scripts sometimes change [System.Net.ServicePointManager]::SecurityProtocol or [System.Net.ServicePointManager]::ServerCertificateValidationCallback to get past TLS or certificate failures. Treat that as a legacy workaround. Both settings are process-wide, and a global certificate-validation bypass affects every web request in the session, which is too broad for production scraping.

Timeouts should be explicit. In PowerShell 7.4 and later, use -ConnectionTimeoutSeconds and -OperationTimeoutSeconds instead of waiting indefinitely:
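The split timeout parameters arrived in PowerShell 7.4, so a script that may run on older versions can pick its parameters at runtime. Get-TimeoutParams is a hypothetical helper, and the 10 and 30 second defaults are example values:

```powershell
# Build timeout parameters that match the PowerShell version in use.
function Get-TimeoutParams {
    param(
        [int]$ConnectSeconds = 10,
        [int]$OperationSeconds = 30,
        [int]$Major = $PSVersionTable.PSVersion.Major,
        [int]$Minor = $PSVersionTable.PSVersion.Minor
    )

    $hasSplitTimeouts = ($Major -gt 7) -or ($Major -eq 7 -and $Minor -ge 4)

    if ($hasSplitTimeouts) {
        @{
            ConnectionTimeoutSeconds = $ConnectSeconds
            OperationTimeoutSeconds  = $OperationSeconds
        }
    }
    else {
        # Older versions only know the single -TimeoutSec value.
        @{ TimeoutSec = $OperationSeconds }
    }
}
```

Splat the result into the request: $timeouts = Get-TimeoutParams, then Invoke-WebRequest -Uri $url @timeouts.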

Older scripts may use -TimeoutSec, especially when written for Windows PowerShell 5.1. That single timeout is still useful when you maintain older scripts, but new PowerShell 7 scripts should separate connection timeout from operation timeout so a slow server doesn't freeze the whole run.

An empty response body with a 200 status usually means one of 3 things:

- The page renders with JavaScript

- The server returned different content to your client

- Your request lacks required headers or session cookies

Selector failures are the most common parsing issue. If $document.QuerySelector(".price") returns $null, the element doesn't exist in the parsed HTML. Null-check it before calling .TextContent:

Building a retry wrapper

Retry transient network errors, timeouts, and temporary server errors. Don't retry bad selectors 10 times and pretend something useful happened.

This wrapper accepts a script block, retries with exponential backoff, and rethrows the final error:
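A minimal version of such a wrapper. Invoke-WithRetry is a hypothetical name, and the defaults of 3 attempts with a 1-second base delay are example values:

```powershell
function Invoke-WithRetry {
    param(
        [Parameter(Mandatory)][scriptblock]$ScriptBlock,
        [int]$MaxAttempts = 3,
        [double]$BaseDelaySeconds = 1
    )

    for ($attempt = 1; $attempt -le $MaxAttempts; $attempt++) {
        try {
            return & $ScriptBlock
        }
        catch {
            # Out of attempts: rethrow the last error to the caller.
            if ($attempt -eq $MaxAttempts) { throw }

            # Exponential backoff: base, 2x base, 4x base, and so on.
            $delay = $BaseDelaySeconds * [math]::Pow(2, $attempt - 1)
            Write-Warning ('Attempt {0} of {1} failed: {2}. Retrying in {3}s.' -f $attempt, $MaxAttempts, $_.Exception.Message, $delay)
            Start-Sleep -Milliseconds ([int]($delay * 1000))
        }
    }
}
```

Wrap only the network call, for example Invoke-WithRetry { Invoke-WebRequest -Uri $url -ErrorAction Stop }, and keep parsing outside so selector bugs fail immediately.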

This is the same retry idea you'd use in other languages.

Structured error logging

Don't let failed URLs vanish into terminal scrollback. Save them:
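One simple option is an append-only CSV. Write-ScrapeError and the failed-urls.csv file name are hypothetical:

```powershell
# Append one failed request to a CSV log so it can be reviewed or retried later.
function Write-ScrapeError {
    param(
        [Parameter(Mandatory)][string]$Url,
        [string]$Message,
        [Nullable[int]]$StatusCode,
        [string]$Path = 'failed-urls.csv'
    )

    [pscustomobject]@{
        Timestamp  = (Get-Date).ToUniversalTime().ToString('o')
        Url        = $Url
        StatusCode = $StatusCode
        Message    = $Message
    } | Export-Csv -Path $Path -Append -NoTypeInformation -Encoding UTF8
}
```

Call it from a catch block, passing the URL, the status code if there is one, and $_.Exception.Message.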

Use -ErrorAction SilentlyContinue only for optional fields where failure is expected.

Using proxies and avoiding blocks

Your requests carry an IP address, a user agent, headers, timing patterns, and session behavior. Anti-bot systems look at those signals together to detect automated behavior.

Why scraping without a proxy gets you blocked

PowerShell's default request tells the server it came from PowerShell, and every request comes from your real IP address. If you run a tight loop against the same URL, expect rate limiting, IP bans, or CAPTCHAs.

Good scraping behavior starts before proxies:

- Set a realistic user agent

- Add delays

- Cache where you can

- Avoid repeated requests for the same page in a short window

Proxies become necessary when IP reputation, geography, or volume becomes part of the problem.

Passing a proxy with Invoke-WebRequest and Invoke-RestMethod

Both Invoke-WebRequest and Invoke-RestMethod support -Proxy and -ProxyCredential. Use -Proxy for the proxy endpoint and -ProxyCredential for the username and password.

Use environment variables for credentials:
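A sketch that builds the proxy parameters from the environment. The variable names PROXY_URL, PROXY_USER, and PROXY_PASS are hypothetical, as is the Get-ProxyParams helper:

```powershell
# Build -Proxy / -ProxyCredential parameters from environment variables.
function Get-ProxyParams {
    # No proxy configured: return an empty set so splatting changes nothing.
    if ([string]::IsNullOrWhiteSpace($env:PROXY_URL)) { return @{} }

    $params = @{ Proxy = $env:PROXY_URL }

    if ($env:PROXY_USER -and $env:PROXY_PASS) {
        $secure = ConvertTo-SecureString -String $env:PROXY_PASS -AsPlainText -Force
        $params.ProxyCredential = [pscredential]::new($env:PROXY_USER, $secure)
    }

    $params
}
```

Splat the result into either cmdlet: $proxy = Get-ProxyParams, then Invoke-WebRequest -Uri $url @proxy or Invoke-RestMethod -Uri $apiUrl @proxy.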

The same pattern works with APIs:

Realistic headers are still very important:

Just keep headers coherent. A strange mix of browser, language, platform, and request behavior can look worse than a simple default client.

Proxy type decision framework:

| Proxy type | Best fit | Trade-off |
| --- | --- | --- |
| Datacenter proxies | Public APIs, low-friction sites, fast checks | Cheaper and fast, but easier to flag by IP range |
| Residential proxies | Protected targets, eCommerce pages, search pages, region-sensitive content | More natural IP reputation, usually higher cost |
| ISP proxies | High-volume jobs where a stable residential-looking identity matters | Faster than many residential pools, less flexible than rotating pools |

Rotating IPs per request

With a rotating proxy endpoint, you don't need to manage a local pool of IPs. You send each request through the same proxy endpoint, and the provider can assign a fresh exit IP based on the endpoint or session configuration.

Geo-targeting works the same way. The country, state, city, or session detail usually lives in the proxy username, password, endpoint, or provider dashboard, not in your scraping loop. Keep those values in environment variables so you can switch from a US session to a German session without editing the script.

Your PowerShell code stays simple:

For session-based rotation, reuse the same session value when you need a stable identity for a short workflow. Drop or change that session value when each request can use a fresh exit IP. The PowerShell code stays the same, and the proxy configuration controls the rotation behavior.

Exporting and structuring scraped data

PowerShell makes it easy to save the output in a format that other tools can read because your scraped items are already objects.

Exporting to CSV

Use CSV for flat, tabular data:

Use -NoTypeInformation in Windows PowerShell 5.1. Without it, Export-Csv adds a #TYPE metadata row that spreadsheet and BI tools don't need. PowerShell 6 and later omit that row by default, so the switch is harmless there.

For recurring jobs, append to an existing file:

UTF-8 is the safe default for international text, product names, author names, and symbols scraped from pages.
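Both CSV steps can be sketched with sample records. The stories.csv file name and the sample data are placeholders:

```powershell
# Sample records standing in for scraped output.
$stories = @(
    [pscustomobject]@{ Title = 'First story';  Points = 120; Author = 'alice' }
    [pscustomobject]@{ Title = 'Second story'; Points = 45;  Author = 'bob' }
)
$csvPath = 'stories.csv'

# First write: creates or overwrites the file, header row included.
$stories | Export-Csv -Path $csvPath -NoTypeInformation -Encoding UTF8

# Later runs: append rows with the same columns instead of overwriting.
$later = [pscustomobject]@{ Title = 'Later story'; Points = 7; Author = 'carol' }
$later | Export-Csv -Path $csvPath -NoTypeInformation -Encoding UTF8 -Append

# Read the file back to confirm the row count.
$rows = @(Import-Csv -Path $csvPath)
"Rows on disk: $($rows.Count)"
```

Keep the property names identical between runs, because -Append expects the new rows to match the existing columns.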

Exporting to JSON

Use JSON when records have nested structure or need to feed an API, pipeline, or document database:

For versioned run history, add a timestamp:

For production-style exports, wrap the data with metadata:

That wrapper makes the file self-describing. You can inspect it 3 months later and still know where it came from and when it was created.
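The three JSON variants above can be combined in one sketch. The metadata field names (source, scrapedAt, itemCount, items) and the file name pattern are example choices:

```powershell
# Sample records standing in for scraped output.
$stories = @(
    [pscustomobject]@{ Title = 'First story';  Points = 120 }
    [pscustomobject]@{ Title = 'Second story'; Points = 45 }
)

# Wrap the data with metadata so the file describes itself.
$export = [ordered]@{
    source    = 'https://news.ycombinator.com/'
    scrapedAt = (Get-Date).ToUniversalTime().ToString('o')
    itemCount = $stories.Count
    items     = $stories
}

# A timestamped file name keeps one file per run.
$stamp    = Get-Date -Format 'yyyyMMdd-HHmmss'
$jsonPath = "stories-$stamp.json"

# -Depth guards against nested objects being flattened to type names.
$export | ConvertTo-Json -Depth 5 | Set-Content -Path $jsonPath -Encoding UTF8
```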

Console summary

End each run with a short summary:
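A possible summary helper. Write-RunSummary is a hypothetical name, and the fields are a suggestion:

```powershell
# Print a one-line run summary and return it as an object for logging.
function Write-RunSummary {
    param(
        [object[]]$Items = @(),
        [int]$Failed = 0,
        [datetime]$StartedAt = (Get-Date),
        [string]$OutputPath = ''
    )

    $summary = [pscustomobject]@{
        Items   = $Items.Count
        Failed  = $Failed
        Seconds = [math]::Round(((Get-Date) - $StartedAt).TotalSeconds, 1)
        Output  = $OutputPath
    }

    Write-Host ('Scraped {0} items, {1} failed URLs, {2}s elapsed. Output: {3}' -f $summary.Items, $summary.Failed, $summary.Seconds, $summary.Output)

    # An empty run should never look like a success.
    if ($summary.Items -eq 0) {
        Write-Warning 'No items scraped; treat this run as failed.'
    }

    $summary
}
```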

This is a form of operational feedback. A scraper that silently writes an empty file can look successful until someone checks the data.

Final thoughts

PowerShell can handle the full scraping pipeline: setup, fetch, parse, select, paginate, retry, proxy, and export. It's a great choice for static HTML and simple APIs, because the shell already treats structured data as objects.

Use PowerShell 7 for anything you expect to maintain. Windows PowerShell 5.1 can still fetch pages, but its old HTML parsing behavior belongs to a different era. In PowerShell 7, pair Invoke-WebRequest with PSParseHTML and keep the parsing model explicit.

If JavaScript rendering, browser maintenance, proxy rotation, and anti-bot handling become the project, a managed scraping layer can be cheaper than maintaining local scraping code.

About the author

Justinas Tamasevicius

Director of Engineering

Justinas Tamaševičius is Director of Engineering with over two decades of expertise in software development. What started as a self-taught passion during his school years has evolved into a distinguished career spanning backend engineering, system architecture, and infrastructure development.

All information on Decodo Blog is provided on an as is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.