Anthropic Blocks OpenClaw From Claude: What Happened and What to Do Now

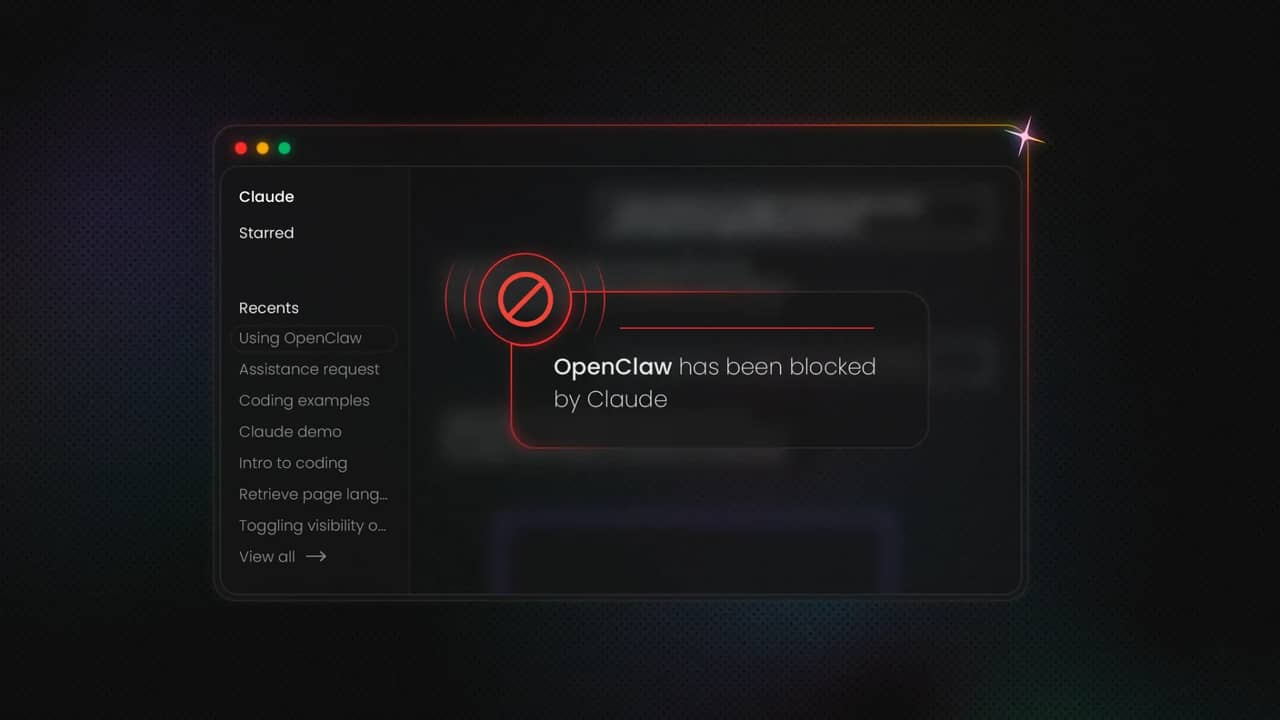

On 4 April 2026, Anthropic blocked Claude Pro and Max subscribers from using OpenClaw and other third-party AI agent frameworks under their flat-rate plans. The change forces affected users onto pay-as-you-go billing, with some facing cost increases of up to 50 times their previous monthly spend. Here's what happened and what you can do about it.

Benediktas Kazlauskas

Last updated: Apr 09, 2026


What is OpenClaw and why did it become so popular?

Before diving into the ban itself, understanding what OpenClaw is and how it grew so fast helps explain why this decision sent shockwaves through the developer community.

OpenClaw is an open-source AI agent framework originally created by Austrian developer Peter Steinberger. The project launched in November 2025 under the name Clawdbot, and it started as a side project exploring what would happen if you gave a large language model persistent memory, access to tools, and the ability to communicate through messaging apps like WhatsApp and Telegram. The answer turned out to be that an enormous number of people wanted exactly that kind of setup.

The project went through a couple of name changes in quick succession during late January 2026. Anthropic raised trademark concerns about the phonetic similarity between “Clawdbot” and “Claude,” prompting a rename to Moltbot. A few days later, the project became OpenClaw. Despite the naming turbulence, growth was explosive. According to The Next Web, the repository had collected 247K GitHub stars and over 47K forks by early March 2026, making it one of the fastest-growing GitHub projects in history. During its fastest stretch of growth, OpenClaw reached 100K stars in under 48 hours.

The framework supports more than 50 integrations and works across multiple LLM providers, including Claude, GPT-4o, Gemini, and DeepSeek. Tencent even built an enterprise platform directly on top of it, signaling that OpenClaw's influence had extended well beyond individual hobbyists and into serious commercial territory. For developers working with AI automation workflows, OpenClaw represented a flexible, model-agnostic way to build persistent agents without being locked into any single provider's ecosystem.

Providers like Decodo also introduced web scraping skills that let the OpenClaw agent access the web and collect publicly available data. That addressed a core limitation: without live access, the agent could draw only on its training data, so it was constantly working with outdated or incomplete information.

What exactly did Anthropic change?

The restriction that took effect on 4 April 2026 is straightforward in its mechanics, even if the consequences are far-reaching.

Claude Pro and Max subscribers can no longer route their subscription usage allowances through OpenClaw or any other third-party agent framework. If you were running an OpenClaw instance powered by your $20/month Pro plan or $200/month Max plan, that setup no longer works under flat-rate billing. Anthropic has introduced a new "extra usage" pay-as-you-go system with its own API pricing structure, and that is now the only way to use Claude through external agent tools.

Boris Cherny, Head of Claude Code at Anthropic, framed the change as a capacity-constraint issue. Anthropic's subscriptions were designed for the usage patterns of its own products and API, not for the high-volume, continuous operation that third-party harnesses like OpenClaw demand. In practice, these frameworks functioned as a shadow API layer, consuming compute at subscription rates far below what the actual token usage would cost at standard pricing.

Importantly, Claude Code, Anthropic's own developer environment, remains fully included in Pro and Max subscription plans and is not subject to the new restrictions. The message is clear – tools built inside Anthropic's ecosystem get favorable treatment, while tools built outside of it now carry a premium. For teams that rely on external data collection tools or third-party agent orchestration platforms, that's a signal to prepare bigger budgets.

The economics behind the ban

The financial logic behind Anthropic's decision becomes obvious once you look at the actual compute costs involved in agentic AI usage.

Claude's subscription plans were designed around a conversational model. A human opens a chat window, types a query, reads a response, and perhaps follows up with a few more messages. The token consumption in that scenario is modest and predictable. Agentic frameworks operate on an entirely different scale, often blowing past the token limits that subscriptions were implicitly built around. A single OpenClaw instance running autonomously for a full day, browsing the web, managing calendars, responding to messages, and executing code, can consume the equivalent of $1K to $5K in API costs depending on the task load. Under a $200/month Max subscription, that math simply doesn't work.

The scale of the compute problem was significant. More than 135K OpenClaw workflows were estimated to be running at the time of the announcement. Industry analysts had noted a price gap of more than 5 times between what heavy agentic users paid under flat subscriptions and what equivalent usage would cost at standard API rates. Anthropic's subscription revenue was quietly covering a type of usage it had never actually charged for. Without rate limiting or enforcement mechanisms in place, there was nothing stopping users from scaling their agents as far as the subscription allowed.

This mirrors a broader pattern across the AI industry. The race to acquire users throughout 2025 prioritized growth over unit economics. Now in 2026, the question of who actually pays for compute, and how much, is becoming impossible to defer.

Anthropic's $3B funding raise in early 2026 gave the company runway, but even that scale of capital can't absorb indefinite compute subsidies for agentic workloads it didn't anticipate.

The OpenAI connection and the question of timing

The timing of Anthropic's restriction has raised eyebrows across the AI community, and the reason is not hard to understand.

On 14 February 2026, Peter Steinberger announced he was leaving OpenClaw to join OpenAI. Sam Altman publicly posted that Steinberger would drive the next generation of personal agents at the company. OpenClaw would be moved to an open-source foundation with OpenAI's continued support. Steinberger wrote in a blog post that teaming up with OpenAI was the fastest way to bring the project's vision to everyone.

Anthropic's restrictions were announced and enforced within weeks of that move. Steinberger and fellow investor Dave Morin attempted to negotiate a softer transition, approaching Anthropic directly. They reportedly managed to delay enforcement by a single week. Steinberger's public response was pointed, accusing Anthropic of copying popular features into their closed harness and then locking out the open-source community.

Whether this reflects competitive calculation or coincidence is a question without a definitive answer. The economic rationale for the ban exists independently of the OpenAI connection. But the effect is the same regardless of motive: the most popular open-source agent framework, now loosely affiliated with Anthropic's biggest competitor, has been effectively priced off Claude's subscription tier.

The cost impact on users

For developers who had built their entire AI workflow around running OpenClaw on a flat Claude subscription, the new billing structure represents a serious financial disruption.

Under the previous setup, a Claude Max subscriber paying $200/month could run OpenClaw continuously with no additional charges. Under the new pay-as-you-go extra usage model, per-interaction costs are estimated at $0.50 to $2 per task. For users running agents that complete dozens or hundreds of tasks per day, the costs add up quickly. Some users are reporting projected monthly bills that are 10 to 50 times higher than their previous subscription cost.

Here's how the different billing options compare for agentic usage:

| Usage tier | Old subscription cost | Claude API key | Extra usage (30% off) |
| --- | --- | --- | --- |
| Light (10–20 tasks/day) | $20/mo (Pro) | $30–$80/mo | $100–$200/mo |
| Moderate (50–100 tasks/day) | $200/mo (Max) | $200–$600/mo | $350–$700/mo |
| Heavy (200+ tasks/day) | $200/mo (Max) | $1K–$5K/mo | $700–$3K/mo |

For reference, standard API pricing is as follows: Claude Sonnet 4.6 costs $3 per 1M input tokens and $15 per 1M output tokens, while Claude Opus 4.6 costs $15 per 1M input tokens and $75 per 1M output tokens. For teams running AI agents that consume large volumes of tokens through web scraping or data processing pipelines, these numbers translate into substantial monthly bills.
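To see how per-token prices turn into a monthly bill, here is a minimal Python sketch. The per-million-token prices are the ones quoted above. Everything else is an assumption for illustration: the `TaskProfile` token counts, the 50 tasks/day figure, and the model labels, which are plain dictionary keys rather than official API model IDs.

```python
from dataclasses import dataclass

# USD per 1M tokens, as quoted in this article.
# The keys are illustrative labels, not official API model identifiers.
PRICES_PER_MILLION = {
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "opus-4.6": {"input": 15.00, "output": 75.00},
}


@dataclass
class TaskProfile:
    """Assumed average token footprint of one agent task (hypothetical)."""
    input_tokens: int
    output_tokens: int


def cost_per_task(model: str, profile: TaskProfile) -> float:
    """Return the USD cost of one task at list API prices."""
    price = PRICES_PER_MILLION[model]
    return (
        profile.input_tokens / 1_000_000 * price["input"]
        + profile.output_tokens / 1_000_000 * price["output"]
    )


def monthly_cost(model: str, profile: TaskProfile,
                 tasks_per_day: int, days: int = 30) -> float:
    """Scale the per-task cost to a month of steady usage."""
    return cost_per_task(model, profile) * tasks_per_day * days


if __name__ == "__main__":
    # Hypothetical workload: 100K input and 10K output tokens per task.
    profile = TaskProfile(input_tokens=100_000, output_tokens=10_000)
    for model in PRICES_PER_MILLION:
        estimate = monthly_cost(model, profile, tasks_per_day=50)
        print(f"{model}: ${estimate:,.2f}/month")
```

Under those assumed numbers, a task costs about $0.45 on Sonnet and $2.25 on Opus, which works out to roughly $675 and $3,375 per month at 50 tasks a day. Your own token counts will differ, so measure a few representative tasks before trusting any projection.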

Anthropic has offered two transition options. Subscribers receive a one-time credit equal to their monthly plan cost, redeemable until 17 April 2026. Users who pre-purchase extra usage bundles can receive discounts of up to 30%.

Solutions and workarounds for OpenClaw users

The ban is real and expanding, but it doesn't mean the end of agentic AI workflows. Several paths forward exist, each with different trade-offs in cost, flexibility, and capability.

Use a Claude API key directly with OpenClaw

The most straightforward option is to bypass the subscription model entirely and connect OpenClaw to Claude through a direct API key. This approach works immediately, requires minimal configuration changes, and gives you access to the same Claude models, including Claude Opus 4.6 for complex reasoning tasks. The downside is cost: you pay at full API rates with no flat-rate cap. For light usage, this may actually be cheaper than a Max subscription, but for heavy agentic workloads, the bills can escalate quickly.

To set this up, generate an API key from your Anthropic dashboard and configure it in your OpenClaw settings. The framework already supports API key authentication as an alternative to subscription-based access.
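OpenClaw's own configuration format isn't covered here, so the sketch below doesn't attempt to reproduce it. Instead, it shows roughly what API key authentication looks like at the HTTP level when calling Anthropic's Messages API, which is useful for checking that a new key works before wiring it into any agent. The `build_request` helper is a hypothetical name, and the model ID is left for you to supply from Anthropic's current model list.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"


def build_request(api_key: str, model: str, prompt: str,
                  max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a Messages API request authenticated by API key."""
    body = {
        "model": model,            # supply a valid model ID from Anthropic's docs
        "max_tokens": max_tokens,  # upper bound on output tokens, which caps cost
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,               # the key from your dashboard
            "anthropic-version": "2023-06-01",  # API version header
            "content-type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send the call and bill it at API rates. Keep the key in an environment variable such as `ANTHROPIC_API_KEY` rather than hard-coding it in scripts or agent configs.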

Purchase extra usage bundles

If you prefer to keep your Claude Pro or Max subscription active, Anthropic's new extra usage bundles let you purchase additional compute at discounted rates. The maximum discount is 30% off standard pay-as-you-go pricing, which is available for larger pre-purchases. This option makes the most sense for moderate agentic use, where you can reasonably predict your monthly consumption.

Switch OpenClaw to a different LLM provider

OpenClaw is model-agnostic and supports GPT-4o, Gemini, and DeepSeek alongside Claude. Switching providers is a configuration change rather than a code rewrite. Each alternative comes with its own trade-offs. GPT-4o offers strong general performance and competitive pricing. Gemini provides generous token allowances. DeepSeek is the most cost-effective option for high-volume workloads, though with some quality trade-offs on complex reasoning tasks.

"The model you choose matters less than most people think. What matters more is the data going in. A cheaper model with clean, structured, real-time data will outperform an expensive model working with stale or incomplete inputs every time," Gabriele Vitke, Product Marketing Team Lead at Decodo, noted.

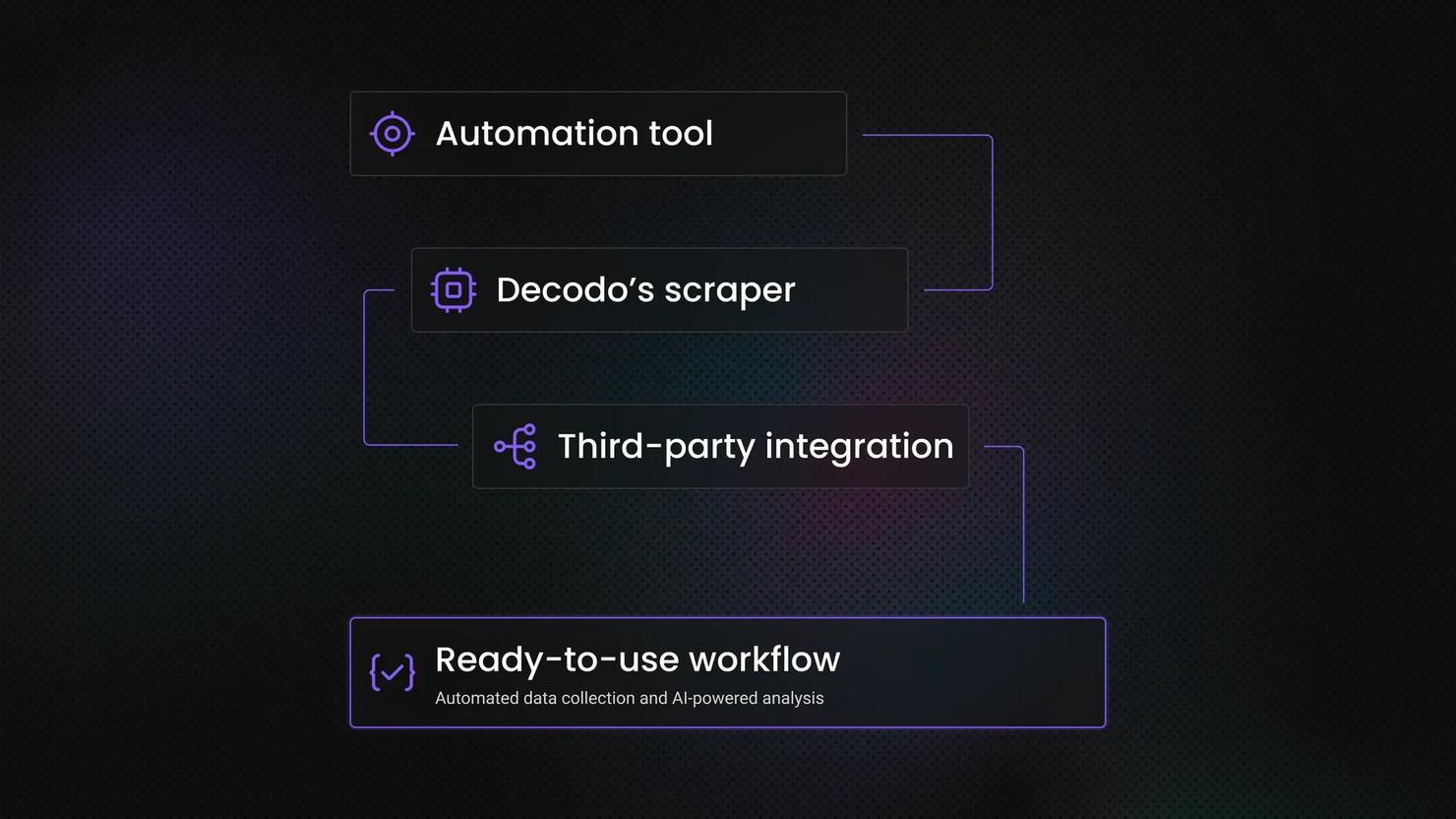

For teams that need their agents to interact with live web data, pairing any of these models with a dedicated Web Scraping API ensures your agents can still fetch real-time information regardless of which LLM powers the reasoning layer. Tools like Decodo's MCP Server let AI agents browse the web, retrieve search results, and process live data without building custom scraping infrastructure.

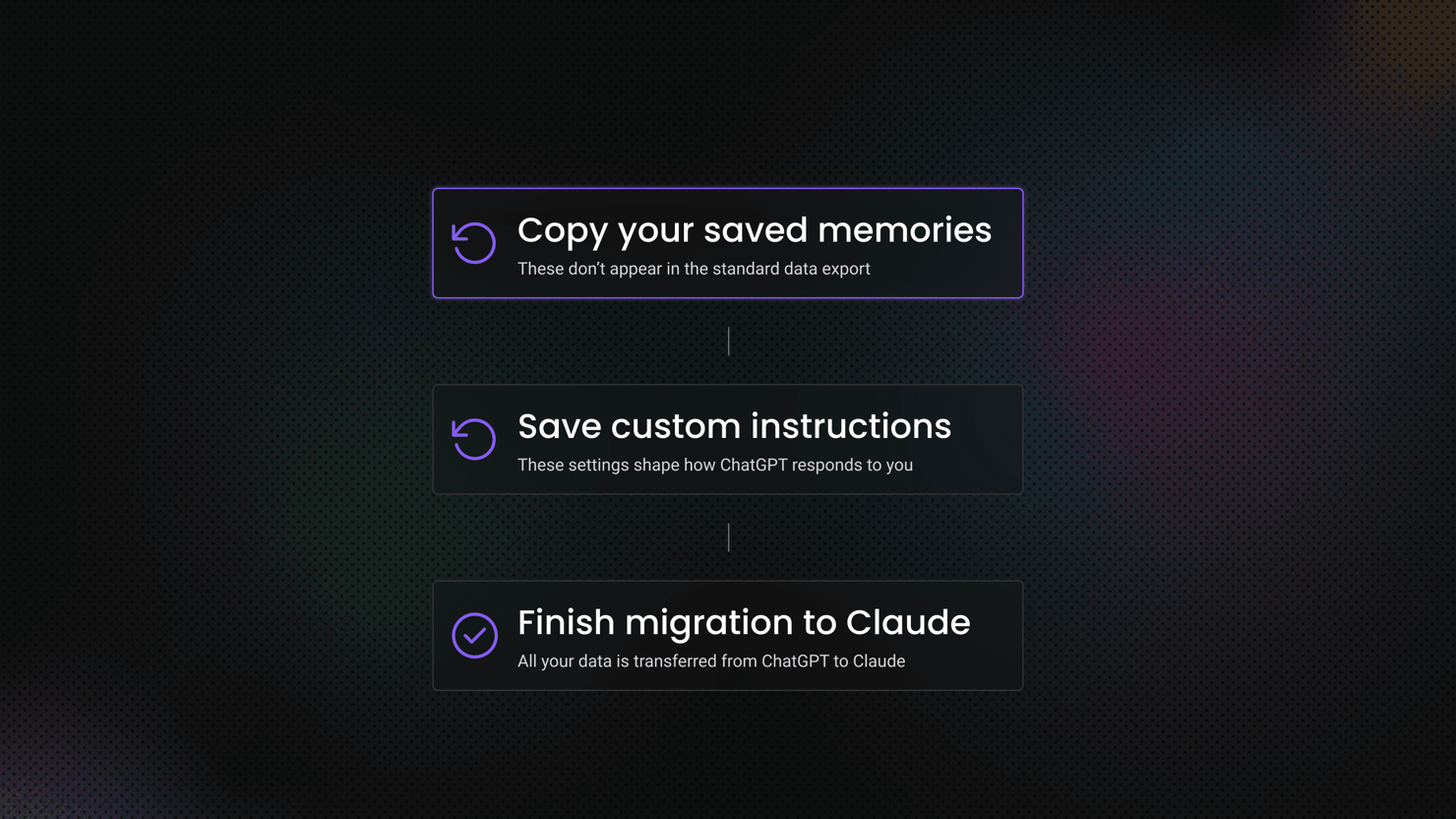

Migrate to Claude Code

Claude Code, Anthropic's own developer environment, remains included in Pro and Max subscription plans with no restrictions. If your OpenClaw usage was primarily focused on coding tasks, development workflows, and technical automation, Claude Code may cover a significant portion of your needs.

The gap between Claude Code and OpenClaw is real, though. OpenClaw offers messaging app integrations, persistent agent memory across sessions, more than 50 third-party tool connections, and the ability to run fully autonomous multi-day workflows. Claude Code is a powerful developer tool, but it doesn't replicate the full scope of what OpenClaw enables. For users whose primary use case was a persistent personal AI assistant handling communications, scheduling, and research, Claude Code is a partial substitute at best.

Optimize your agent usage patterns

Rather than running OpenClaw continuously, restructuring your workflows to use on-demand execution can dramatically reduce costs under the new billing model. Run agents for specific task batches rather than leaving them active 24/7. Use cheaper models for low-priority tasks and reserve Claude Opus for complex reasoning. Implement caching and retry logic to avoid redundant API calls for repeated queries, and add error handling so failed requests don't waste tokens on incomplete runs.
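As a rough illustration of the caching and retry advice, here is a minimal Python sketch. `CachedRetryingClient` and its `call_model` parameter are hypothetical names: `call_model` stands in for whatever function actually sends a prompt to your LLM provider. The in-memory cache and the broad `except` are simplifications, and a production version would likely persist the cache and retry only on transient errors such as timeouts or rate limits.

```python
import hashlib
import time
from typing import Callable, Dict


class CachedRetryingClient:
    """Wrap a model call with an in-memory cache and exponential backoff."""

    def __init__(self, call_model: Callable[[str], str], max_attempts: int = 3,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep):
        self._call_model = call_model
        self._max_attempts = max_attempts
        self._base_delay = base_delay
        self._sleep = sleep
        self._cache: Dict[str, str] = {}
        self.calls_made = 0  # number of billable calls actually attempted

    def ask(self, prompt: str) -> str:
        # Identical prompts are answered from the cache at no token cost.
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._cache:
            return self._cache[key]

        for attempt in range(1, self._max_attempts + 1):
            try:
                self.calls_made += 1
                answer = self._call_model(prompt)
            except Exception:
                # Simplification: real code should catch only transient errors.
                if attempt == self._max_attempts:
                    raise
                # Wait 1x, 2x, 4x ... the base delay before trying again.
                self._sleep(self._base_delay * 2 ** (attempt - 1))
            else:
                self._cache[key] = answer
                return answer
        raise RuntimeError("max_attempts must be at least 1")
```

Capping `max_attempts` matters as much as retrying: an agent that retries forever on a failing request can burn through tokens faster than one that never retries at all.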

This approach works particularly well when combined with automation platforms like n8n, which can schedule agent tasks, manage retries, and orchestrate multi-step workflows without requiring a persistent LLM connection. The goal is to make every API call count rather than maintain an always-on agent that generates token consumption even during idle periods.

Build a hybrid architecture

The most resilient approach combines multiple strategies. Use Claude Code for tasks covered by your subscription. Route high-volume, lower-complexity tasks through a cheaper LLM provider via OpenClaw. Reserve Claude API access for tasks that specifically benefit from Opus-level reasoning capabilities. This layered architecture gives you cost control, resilience against future pricing changes, and continued access to the best model for each category of work.
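The layered routing described above can be sketched as a small decision function. This is an illustration only: the `Task` fields, the `Route` names, and the rule order are assumptions, and a real router would probably weigh token budgets, latency, and past failure rates as well.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    CLAUDE_CODE = "claude-code-subscription"  # covered by the Pro/Max plan
    CHEAP_LLM = "cheaper-provider"            # high-volume, lower-complexity work
    CLAUDE_API = "claude-api-opus"            # pay-per-token, reserved for hard tasks


@dataclass
class Task:
    """Hypothetical task descriptor; the flags would be set by your own triage."""
    description: str
    is_coding: bool = False
    needs_deep_reasoning: bool = False


def choose_route(task: Task) -> Route:
    """Pick the cheapest route that can plausibly handle the task."""
    if task.is_coding:
        # Coding work goes to the tool the subscription already pays for.
        return Route.CLAUDE_CODE
    if task.needs_deep_reasoning:
        # Only tasks that need Opus-level reasoning pay API rates.
        return Route.CLAUDE_API
    # Everything else defaults to the cheaper provider.
    return Route.CHEAP_LLM
```

Making the cheap route the default is a deliberate choice in this sketch: a task has to earn its way onto the expensive path, rather than landing there by accident.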

For data-intensive agent workflows that involve gathering information from across the web, integrating a dedicated data access layer through residential proxies or scraping APIs reduces the load on your LLM. Instead of asking your agent to navigate, parse, and interpret raw web pages (which consumes enormous quantities of tokens), offload the data retrieval to a specialized tool and send only the clean, structured results to the LLM for analysis. This kind of architecture is becoming standard practice in production AI scraping pipelines where cost efficiency matters.
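To make the token savings concrete, here is a minimal sketch that strips a raw page down to its visible text before anything reaches the LLM. It uses only Python's standard library as a stand-in for a dedicated scraping tool, and it treats character count as a rough proxy for token count, which is an assumption rather than an exact measure.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect human-visible text, skipping scripts, styles, and noscript."""

    SKIPPED_TAGS = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep non-empty text that is not inside a skipped element.
        if self._skip_depth == 0 and data.strip():
            self._chunks.append(data.strip())

    def text(self) -> str:
        return " ".join(self._chunks)


def extract_visible_text(html: str) -> str:
    """Return only the visible text of an HTML document."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()


if __name__ == "__main__":
    raw = (
        "<html><head><style>p{color:red}</style>"
        "<script>var x = 1;</script></head>"
        "<body><h1>Price</h1><p>Widget: <b>$9.99</b></p></body></html>"
    )
    clean = extract_visible_text(raw)
    print(f"{len(raw)} characters of HTML reduced to {len(clean)}: {clean!r}")
```

On real pages, where markup, scripts, and tracking code often dwarf the content, the reduction is usually far larger than in this toy example. A specialized scraping API goes further by returning structured fields instead of flat text.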

What this means for the AI industry

Anthropic's OpenClaw restriction is one data point in a much larger trend reshaping how AI products are priced, distributed, and controlled.

The decision fits a pattern of ecosystem consolidation at Anthropic. In March 2026, the company committed $100M to its Claude Partner Network, formalizing a web of enterprise consulting and integration relationships built around its own products. Separately, Anthropic launched a marketplace for Claude-powered software, allowing enterprise customers to purchase third-party applications through channels Anthropic controls. The overall direction is consistent – Anthropic wants the revenue, the data, and the governance layer that comes with owning the customer relationship.

This resembles the playbook used by major cloud providers over the past decade. AWS, Azure, and GCP all started with open, developer-friendly access before gradually introducing proprietary services, preferential pricing for first-party tools, and incentive structures that reward building inside the ecosystem. Anthropic is following a similar trajectory at compressed timescales.

Gabriele Vitke also commented, "This isn't the last pricing shakeup we'll see. Any team building AI workflows on a single provider's flat-rate plan is sitting on a ticking clock. The smart move is building provider-agnostic systems with independent data access, so the next pricing change is a configuration update, not a crisis."

For the broader AI agent ecosystem, the implications are significant. Flat subscription pricing for AI models was always a growth-stage tactic, not a sustainable business model for agentic workloads. The compute costs of running autonomous agents are fundamentally different from the costs of serving conversational queries. Every major AI provider, including OpenAI and Google, will eventually face the same economic pressure Anthropic is responding to now.

The developers and teams building AI-powered data pipelines and agent workflows should plan for a future where LLM access is priced by actual consumption rather than flat subscription tiers. Building cost-aware architectures now, with model routing, task prioritization, and efficient data retrieval layers, will pay dividends as the industry's pricing models continue to mature.

The era of subsidized agentic AI is ending. What replaces it will be more expensive in raw terms, but also more transparent and more sustainable. The developers who adapt fastest, building efficient, hybrid, provider-agnostic systems, will be the ones best positioned for what comes next.

Bottom line

Anthropic's decision to block OpenClaw from Claude subscriptions is economically rational but operationally painful for the thousands of developers who built their workflows around flat-rate agentic access. The restriction is expanding to all third-party agent frameworks, and there is no indication it will be reversed. Your practical options include switching to API key billing, moving to an alternative LLM provider, migrating to Claude Code for tasks it covers, or building a hybrid architecture that distributes workloads across multiple models and specialized data tools.

The most important takeaway is to avoid single-provider dependency. The AI landscape is shifting fast, and pricing models are evolving alongside it. Building flexible, model-agnostic agent architectures with cost-efficient data access layers is the most resilient strategy for the road ahead.

About the author

Benediktas Kazlauskas

Content & PR Team Lead

Benediktas is a content professional with over 8 years of experience in B2C, B2B, and SaaS industries. He has worked with startups, marketing agencies, and fast-growing companies, helping brands turn complex topics into clear, useful content.

All information on Decodo Blog is provided on an as is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.