How to Leverage Claude for Effective Web Scraping

Web scraping has become increasingly complex as websites deploy sophisticated anti-bot measures and dynamic content loading. While traditional scraping approaches require extensive manual coding and maintenance, artificial intelligence offers a transformative solution. Claude, Anthropic's advanced language model, brings unique capabilities to the web scraping landscape that can dramatically improve both efficiency and effectiveness.

Dominykas Niaura

Last updated: Jan 06, 2026


TL;DR

- Claude supports two scraping approaches. Use it as a coding assistant to build and refine traditional scrapers, or integrate it directly via API so it parses raw HTML in place of libraries like Beautiful Soup.

- In direct integration mode, your script handles fetching and proxy management while Claude extracts structured data from HTML without CSS selectors or DOM logic.

- Claude's large context window and reasoning capabilities let it understand nested structures and, in many cases, keep extracting the same data after a page layout changes without any selector updates.

- Compared to ChatGPT, Claude excels at lateral thinking and comprehensive code but tends to over-engineer solutions and may introduce duplicate or inconsistent logic.

- ChatGPT produces cleaner, more minimal code but requires detailed prompting and struggles with open-ended or complex scraping problems.

- Regardless of which AI tool you use, residential proxies remain essential to avoid IP blocks and CAPTCHAs at scale.

Two approaches to using Claude for web scraping

Claude supports web scraping in two distinct ways: as a coding assistant for building traditional scrapers, and as a data extraction engine integrated directly into your scripts.

Approach 1: Claude as your coding assistant

The first approach uses Claude as an intelligent development partner. You interact with Claude through its chat interface to design, build, and refine traditional web scrapers. You describe what you want to scrape, and Claude generates complete Python scripts using conventional tools like Scrapy, Playwright, Selenium, or Requests with Beautiful Soup.

This collaborative process involves iterative development where you copy Claude's generated code to your IDE, test it against real websites, and then return to Claude with specific issues or enhancement requests. Claude helps debug problems, optimize performance, add new features, and adapt to website changes.

Approach 2: Claude as your data extraction engine

The second approach transforms Claude into the actual scraping mechanism within your code. Instead of writing complex parsing logic with CSS selectors and DOM manipulation, your script sends raw HTML content directly to Claude via API calls, and Claude intelligently extracts the structured data you need.

This method essentially replaces traditional parsing libraries like Beautiful Soup or lxml with AI-powered analysis. Your Python script handles the web requests, proxy management, and data storage, while Claude becomes the brain that understands page structure and extracts meaningful information. The scraper runs autonomously, making API calls to Claude for each page it processes.

What makes Claude special for both approaches

Regardless of which approach you choose, Claude brings several key advantages to web scraping projects. Its large context window can process substantial amounts of HTML content, while its advanced reasoning capabilities allow it to understand complex page structures and semantic relationships between data elements.

In collaborative development mode, Claude can analyze HTML snippets you provide and automatically identify the correct selectors for traditional scraping tools. Instead of manually inspecting elements and figuring out complex CSS paths, you can paste HTML sections to Claude and ask it to generate the appropriate code with the right selectors already identified.

In direct integration mode, Claude eliminates the need for manual element inspection entirely. You simply send raw HTML to Claude and describe what data you want, and Claude intelligently extracts it without requiring CSS selectors, XPaths, or rigid parsing rules.
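A large context window is still finite, and every character of HTML you send is billed as input. As a rough sketch (not part of the original workflow), you could strip markup that rarely carries extractable data before sending a page to Claude. The function name shrink_html, the list of tags, and the 200,000-character cap are all assumptions chosen for illustration:

```python
import re

# Assumption: these tags rarely contain the data you want; adjust per site.
_NOISE_BLOCKS = re.compile(
    r"<(script|style|noscript|svg)\b[^>]*>.*?</\1\s*>",
    flags=re.IGNORECASE | re.DOTALL,
)
_COMMENTS = re.compile(r"<!--.*?-->", flags=re.DOTALL)
_WHITESPACE = re.compile(r"\s+")


def shrink_html(html: str, max_chars: int = 200_000) -> str:
    """Drop scripts, styles, and comments, collapse whitespace, then truncate."""
    html = _NOISE_BLOCKS.sub("", html)
    html = _COMMENTS.sub("", html)
    html = _WHITESPACE.sub(" ", html).strip()
    return html[:max_chars]
```

Regex-based cleanup is a blunt tool: some sites keep product data inside script tags (for example as JSON-LD), in which case you would want to leave those blocks in.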

Collaborative development approach: Claude as a coding assistant

The first major approach involves using Claude as an intelligent development partner rather than integrating it directly into your scraping pipeline. This method leverages Claude's coding abilities to help you build, debug, and optimize traditional web scrapers.

Starting your scraper project

Begin by describing your scraping requirements to Claude through its chat interface. Be specific about your target website, the data you need, the programming language, and any particular challenges you anticipate.

Claude will generate a complete starter script that you can copy into your IDE or text editor and run in the terminal. This initial code typically includes proper imports, basic structure, error handling, and placeholder logic for your specific requirements.

Iterative development process

The collaborative approach shines through iterative refinement. After testing Claude's initial script, you can return with specific issues or enhancement requests. Common follow-up requests include:

- "The script isn't capturing the price correctly. Here's the HTML structure I'm seeing..."

- "Can you add proxy rotation to avoid getting blocked?"

- "I need to handle CAPTCHA detection and pause the scraper when encountered"

- "The pagination logic isn't working properly on this site structure"
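To give a sense of what a follow-up such as the proxy rotation or CAPTCHA request might produce, here is a minimal sketch. It is not output from Claude; the helper names proxy_cycle and looks_like_captcha, the marker strings, and the example proxy URLs are hypothetical:

```python
import itertools
from typing import Iterable, Iterator

# Assumption: substrings that commonly appear on challenge pages; tune per site.
CAPTCHA_MARKERS = ("captcha", "are you a robot", "unusual traffic")


def proxy_cycle(proxy_urls: Iterable[str]) -> Iterator[dict]:
    """Yield Requests-style proxy mappings in round-robin order, forever."""
    for url in itertools.cycle(list(proxy_urls)):
        yield {"http": url, "https": url}


def looks_like_captcha(html: str) -> bool:
    """Heuristic check: does the page contain a known challenge marker?"""
    lowered = html.lower()
    return any(marker in lowered for marker in CAPTCHA_MARKERS)


# Usage sketch (placeholder proxy URLs):
# proxies = proxy_cycle(["http://user:pass@proxy1.example.com:8000",
#                        "http://user:pass@proxy2.example.com:8000"])
# response = requests.get(url, proxies=next(proxies), timeout=30)
# if looks_like_captcha(response.text):
#     time.sleep(60)  # pause before retrying through the next proxy
```

A string-matching check like this will produce false positives on pages that merely mention CAPTCHAs, so treat it as a starting point to refine against the sites you actually scrape.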

Debugging and optimization

When your scraper encounters problems, paste the error messages and relevant code sections back to Claude for analysis. Claude excels at identifying issues in scraping logic, suggesting alternative approaches, and optimizing performance. A good debugging request states what you expected, what happened instead, and includes the full traceback.

Claude will analyze your code and provide specific improvements, often suggesting multiple approaches you can test.

Building complex features

As your scraping needs evolve, Claude can help add sophisticated functionality:

- Dynamic content handling. Converting from Requests-based scraping to Playwright when encountering JavaScript-heavy sites.

- Data validation. Adding checks to ensure extracted data meets quality standards.

- Monitoring and logging. Implementing comprehensive logging for production deployments.

- Scalability improvements. Transitioning from sequential processing to concurrent scraping.
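The last item, moving from sequential to concurrent scraping, could look something like the sketch below. It uses Python's standard thread pool; scrape_concurrently is a hypothetical name, and scrape_one stands in for whatever single-page function your scraper already has:

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, Dict, Iterable


def scrape_concurrently(
    urls: Iterable[str],
    scrape_one: Callable[[str], dict],
    max_workers: int = 5,
) -> Dict[str, dict]:
    """Run scrape_one(url) in a thread pool; map each URL to its result or error."""
    results: Dict[str, dict] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(scrape_one, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                results[url] = {"ok": True, "data": future.result()}
            except Exception as exc:  # keep one failed page from stopping the batch
                results[url] = {"ok": False, "error": str(exc)}
    return results
```

Threads suit this workload because scraping is mostly waiting on the network. Keep max_workers modest so you don't overwhelm the target site or trip its rate limits.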

Advantages of the collaborative approach

Using Claude as a coding assistant offers several key benefits:

- Complete control. You maintain full ownership of your code and can customize every aspect according to your needs.

- Learning opportunity. Each interaction with Claude helps you understand web scraping concepts and best practices more deeply.

- Flexibility. You can mix and match different tools and libraries based on Claude's recommendations and your specific requirements.

- Traditional deployment. The resulting scripts run independently without requiring ongoing API calls, reducing operational costs.

- Easier debugging. Standard Python debugging tools work normally with Claude-generated code.

To maximize effectiveness when using Claude as a coding assistant:

- Be specific with requirements. Detailed descriptions lead to better initial code generation.

- Test incrementally. Implement and test small changes rather than requesting large rewrites.

- Provide context. When reporting issues, include relevant HTML snippets, error messages, and current code sections.

- Ask for alternatives. Request multiple approaches when Claude's first suggestion doesn't work perfectly.

- Document your learnings. Keep notes on successful patterns and techniques for future projects.

Direct integration method: Claude as the data extraction engine

The direct integration approach involves your scraping script making API calls to Claude for each webpage that needs processing. Your code handles navigation, proxy management, and data storage, while Claude serves as an intelligent parser that understands content contextually rather than structurally.

Setting up Claude integration

To begin using Claude as your data extraction engine, you'll need to set up access to the Anthropic API (note that it’s a paid service, though new accounts receive initial credits that allow for limited testing before requiring payment):

- Create an account at Anthropic.

- Navigate to the API Keys section.

- Click the Get API Key button (remember to store this securely as it can’t be viewed again once created).

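Make sure you have Python 3.7+ installed on your computer (recent releases of the anthropic package may require a newer Python version, so check its requirements). Then, install the necessary Python packages with this command:

pip install anthropic requests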

These libraries serve specific purposes: anthropic provides API access to Claude's language models for data extraction, while Requests handles HTTP requests to fetch webpage content that Claude will process.

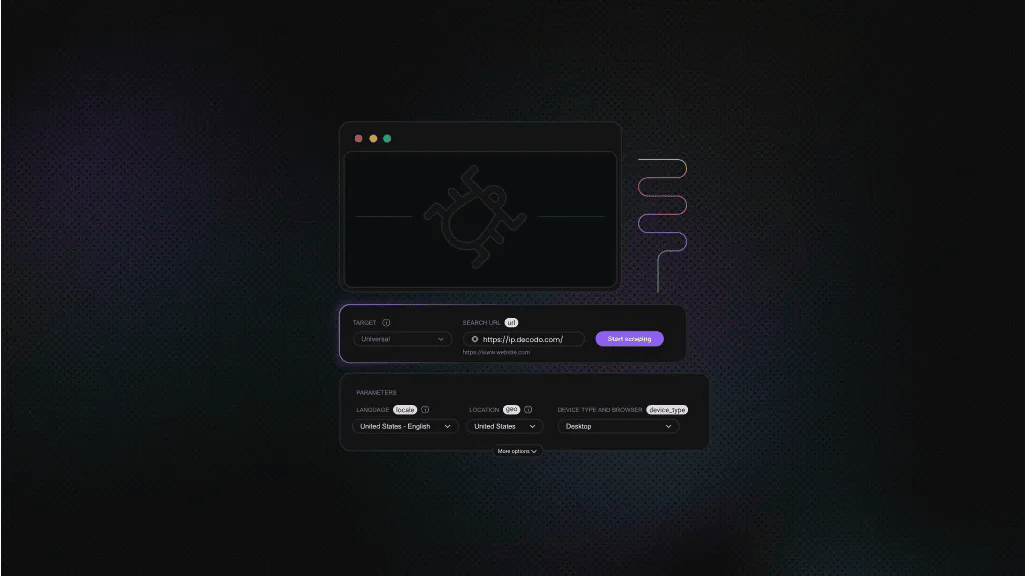

Integrating proxies

Before implementing Claude extraction, it's crucial to set up proper proxy infrastructure. Even with Claude's intelligent parsing capabilities, your scraper still needs to successfully fetch webpage content without being blocked. Residential proxies are essential for reliable data collection, especially when scraping at scale or targeting sites with anti-bot protection.

At Decodo, we offer high-performance residential proxies with a 99.86% success rate, <0.6s response time, and geo-targeting across 195+ locations. Here’s how to integrate them into your scraper:

- Create your account on the Decodo dashboard.

- Find residential proxies by choosing Residential on the left panel.

- Choose a subscription that suits your needs or opt for a 3-day free trial.

- In the Proxy setup tab, configure the location, session type, and protocol.

- Copy your proxy address, port, username, and password for later use. Alternatively, you can click the download icon in the lower right corner of the table to download the proxy endpoints (10 by default).
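Once you have the address, port, username, and password, they need to be combined into a proxy URL your HTTP client understands. Here is a minimal sketch for the Requests library; build_proxies is a hypothetical helper, and the host and port in the usage comment are placeholders rather than real endpoint values:

```python
from urllib.parse import quote


def build_proxies(host: str, port: int, username: str, password: str) -> dict:
    """Return a Requests-style proxies mapping for an authenticated HTTP proxy."""
    # Percent-encode credentials so characters like "@" or ":" don't break the URL.
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    proxy_url = f"http://{user}:{pwd}@{host}:{port}"
    return {"http": proxy_url, "https": proxy_url}


# Usage sketch (placeholder values; copy the real ones from your dashboard):
# proxies = build_proxies("proxy.example.com", 8000, "USERNAME", "PASSWORD")
# response = requests.get("https://example.com", proxies=proxies, timeout=30)
```

Loading the credentials from environment variables rather than hard-coding them keeps them out of version control.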


Basic implementation

In the following code, Claude functions as the core intelligence of your scraper. Your script orchestrates the process: making HTTP requests, handling pagination, managing proxies, and storing results, while Claude handles the complex task of understanding page structure and extracting meaningful data.

This approach is particularly powerful because Claude adapts to different page layouts automatically. If the website changes its HTML structure, Claude can often continue extracting the same semantic data without requiring updates to CSS selectors or parsing logic.

Here's how to implement Claude as your primary data extraction mechanism:
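The sketch below shows one way such a script could be put together. Treat it as an illustration under stated assumptions rather than a definitive implementation: the target URL, the prompt wording, the helper names, and the default model ID are placeholders, so substitute a model your account has access to.

```python
import os
from typing import Optional

# Assumptions for illustration: placeholder URL and model ID.
TARGET_URL = "https://example.com/products"
MODEL = os.environ.get("CLAUDE_MODEL", "claude-sonnet-4-5")


def fetch_html(url: str, proxies: Optional[dict] = None) -> str:
    """Download a page, optionally through a proxy."""
    import requests  # third-party: pip install requests

    response = requests.get(url, proxies=proxies, timeout=30)
    response.raise_for_status()
    return response.text


def build_prompt(html: str, what: str, max_chars: int = 150_000) -> str:
    """Describe the wanted data and attach the (truncated) page HTML."""
    return (
        f"Extract {what} from the HTML below. "
        "Respond with valid JSON only, no commentary.\n\n"
        f"<html_page>\n{html[:max_chars]}\n</html_page>"
    )


def extract_with_claude(client, html: str, what: str) -> str:
    """Send the page to Claude via the Messages API and return the text reply."""
    message = client.messages.create(
        model=MODEL,
        max_tokens=4096,
        messages=[{"role": "user", "content": build_prompt(html, what)}],
    )
    return message.content[0].text


def main() -> None:
    import anthropic  # third-party: pip install anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    html = fetch_html(TARGET_URL)  # pass proxies=... to route through a proxy
    print(extract_with_claude(client, html, "every product's title and price"))
```

Calling main() fetches the page and prints Claude's reply. Note that there is no CSS selector anywhere in the script: the description passed to extract_with_claude is the only thing that changes between targets.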

Running this script in your terminal or IDE prints the extracted data as JSON.


Enhanced techniques with schema definitions

When using Claude as your data extraction engine, you can significantly improve results through structured prompting and schema definitions:
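As a hedged sketch of what schema-driven extraction can look like: the names advanced_claude_extraction, "products", and "title" follow the ones used in this article, while the remaining schema fields, the prompt wording, and the helpers build_schema_prompt and parse_json_reply are assumptions made for illustration.

```python
import json
import re
from typing import Optional

# Illustrative schema: fields other than "products" and "title" are assumptions.
SCHEMA = {
    "products": [
        {"title": "string", "price": "number or null", "availability": "string or null"}
    ]
}


def build_schema_prompt(html: str, schema: dict) -> str:
    """Embed the expected JSON shape and explicit requirements in the prompt."""
    return (
        "Extract data from the HTML below and return JSON matching this schema exactly:\n"
        f"{json.dumps(schema, indent=2)}\n\n"
        "Requirements:\n"
        "- Return only the JSON object, with no explanation or code fences.\n"
        "- Use null for any field that is missing from the page.\n\n"
        f"HTML:\n{html}"
    )


def parse_json_reply(text: str) -> dict:
    """Parse the reply, tolerating code fences or surrounding prose."""
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        pass
    # Fallback: take the outermost {...} span and try again.
    match = re.search(r"\{.*\}", text, flags=re.DOTALL)
    if match:
        try:
            return json.loads(match.group(0))
        except json.JSONDecodeError:
            pass
    return {"error": "could not parse JSON", "raw": text}


def advanced_claude_extraction(client, html: str, model: str,
                               schema: Optional[dict] = None) -> dict:
    """Ask Claude for schema-shaped JSON and parse the reply defensively."""
    message = client.messages.create(
        model=model,
        max_tokens=4096,
        messages=[{"role": "user", "content": build_schema_prompt(html, schema or SCHEMA)}],
    )
    return parse_json_reply(message.content[0].text)
```

Here client is an anthropic.Anthropic() instance and model is any model ID available to your account. Returning an error dictionary instead of raising lets a long-running scraper log the bad page and move on.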

This enhanced approach differs from the basic implementation in several key ways: it uses structured schema definitions to ensure consistent output formats, includes robust JSON parsing with fallback handling, and provides more detailed prompting for Claude to follow specific requirements.

Adapting this script for your use case

To customize this script for your specific scraping needs, you can make simple adjustments to four key areas:

- Change the target URL to point to your website of interest. Replace the TARGET_URL variable with any page you want to scrape.

- Modify the schema structure to match your data requirements. Change "products" to the type of data you're extracting (articles, listings, reviews), and adjust the field names and types accordingly. For example, swap "title" for "headline" or add fields like "publish_date" or "category".

- Customize the prompt requirements within the advanced_claude_extraction function. Add specific instructions like "Convert dates to YYYY-MM-DD format" or "Clean up HTML entities in text" to handle your particular data cleaning needs.

- Add post-processing logic after Claude returns the results. Filter unwanted items, transform data formats, or validate specific fields before saving or using the extracted data.
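For the post-processing step, a small sketch might filter out unusable records and normalize dates to YYYY-MM-DD. The function names and the list of accepted input formats are assumptions; the "title" and "publish_date" fields follow the examples above:

```python
from datetime import datetime
from typing import Iterable, List, Optional

# Assumed input date formats; extend for the sites you scrape.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y")


def normalize_date(value: Optional[str]) -> Optional[str]:
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    if not value:
        return None
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue
    return None


def post_process(items: Iterable[dict]) -> List[dict]:
    """Drop items without a title and normalize each publish_date."""
    cleaned = []
    for item in items:
        title = (item.get("title") or "").strip()
        if not title:
            continue  # filter out unusable records
        cleaned.append(
            {**item, "title": title, "publish_date": normalize_date(item.get("publish_date"))}
        )
    return cleaned
```

Doing this in code rather than in the prompt gives you deterministic results for the rules you can express precisely, and leaves Claude to handle the fuzzy part of finding the data.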

The schema-based approach ensures Claude consistently returns data in your expected format, making it easier to integrate with databases, APIs, or further processing steps.


Claude vs. ChatGPT for web scraping

Claude and ChatGPT are among the most widely used LLM assistants for coding, and both can supercharge scraping workflows. Having extensively tested both models, we found significant differences in their web scraping performance:

Claude's strengths and weaknesses

Strengths:

- Lateral thinking capabilities. Claude can find creative solutions when standard approaches fail, often suggesting alternative data sources or extraction methods.

- Comprehensive code expansion. When updating scripts, Claude tends to add extensive functionality and debugging capabilities, which can be beneficial for robust production systems.

- Better handling of complex structures. Superior at understanding nested data and maintaining relationships between elements.

Weaknesses:

- Code duplication. Methods may appear to be defined twice with conflicting implementations, requiring careful review.

- Syntax inconsistencies. Can leave behind outdated code when updating scripts, leading to malformed files.

- Over-engineering tendency. Frequently adds unnecessary functionality and extensive debugging that may complicate simple tasks.

- Library confusion. Often imports libraries that the final implementation never uses, and removes them only when specifically questioned.

- Context reset needs. Requires opening new conversations to reset its understanding when projects become complex.

During testing, Claude occasionally provided initial explanations that required clarification when pressed for more details, sometimes revealing that its first response oversimplified or mischaracterized technical concepts. This tendency to provide more accurate information under questioning suggests the need for careful verification of its suggestions.

ChatGPT’s strengths and weaknesses

Strengths:

- Simple, focused approach. Creates clean, minimal code that addresses specific requirements without unnecessary complexity.

- Consistent structure. Less likely to introduce conflicting implementations or duplicate code sections.

- Fast scaffolding. Produces runnable snippets quickly and adapts well to clear, step-by-step prompts.

Weaknesses:

- Requires extensive guidance. Needs significant back-and-forth interaction to develop comprehensive solutions.

- Limited creative problem-solving. Less capable of finding alternative approaches when initial methods fail.

- Prone to hallucinations and subtle mistakes. May introduce insecure patterns or incorrect APIs.

- Inconsistent on complex or long-context tasks. Can lose state or misread context.

During testing, ChatGPT consistently produced clean, well-structured code tailored to specific tasks, especially when given clear prompts. It refined outputs quickly through iteration but often needed detailed guidance for complex or open-ended problems. While initial solutions were generally accurate, they sometimes lacked context awareness or robustness, highlighting the need for precise prompts and user oversight.

Choosing the right tool

For web scraping projects, your choice should depend on your specific needs:

- Choose Claude when you need creative problem-solving, comprehensive functionality, and can invest time in reviewing and cleaning the generated code.

- Choose ChatGPT when you prefer starting with simple, clean implementations and are comfortable providing detailed guidance throughout the development process.

Don’t forget proxies when web scraping!

Regardless of which AI tool you choose, proxy infrastructure remains a critical component of any serious web scraping operation. Modern websites employ sophisticated detection mechanisms that can identify and block scraping attempts based on request patterns, IP addresses, and browser fingerprints.

Quality proxy services provide several essential benefits: massive, diverse IP pools and rotation to prevent pattern-based blocks; granular geo-targeting (down to city, ZIP, and ASN) for localized data; session control (sticky vs. rotating) for carts/logins; protocol flexibility (HTTP(S) & SOCKS5) to fit any stack; and high concurrency/reliability so your crawlers don’t stall.

Decodo’s residential network offers 115M+ ethically sourced IPs across 195+ locations, unlimited concurrent sessions, rotating and sticky options, country/state/city/ZIP/ASN targeting, <0.6s average response time, 99.86% success rate, 99.99% uptime, a live-stats dashboard, 24/7 support, a 3-day free trial, and a 14-day money-back option – precisely the kind of backbone your AI-assisted scrapers need.

Final thoughts

Claude offers two distinct approaches to web scraping: collaborative development for building traditional scrapers and direct integration as an intelligent extraction engine. Keep in mind that while Claude provides superior lateral thinking and comprehensive functionality compared to ChatGPT, it requires careful review of generated code due to occasional over-engineering and duplication issues.

Quality proxy infrastructure remains essential regardless of your chosen AI tool or approach. Combine Claude's intelligence with robust proxy rotation and systematic monitoring to build scrapers that stay reliable over time and keep delivering useful data.

About the author

Dominykas Niaura

Technical Copywriter

Dominykas brings a unique blend of philosophical insight and technical expertise to his writing. Starting his career as a film critic and music industry copywriter, he's now an expert in making complex proxy and web scraping concepts accessible to everyone.


All information on Decodo Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.