New Scraping API: Scraping that Adapts to Your Targets

Most scraping APIs treat every request the same – maximum power, maximum cost. But real workloads are mixed: simple HTML pages, JavaScript-heavy targets, and protected sites that need premium proxies. If your pipeline covers all three, you’re paying worst-case prices on every request. We built a scraping API that matches cost to complexity, one request at a time.

Gabriele Vitke

Last updated: Mar 09, 2026

The problem with fixed scraping plans

Traditional scraping APIs are structured around rigid tiers that force you into a binary choice:

- Basic plan. Cheap, but limited in capability. These plans work fine for static HTML pages with minimal protection, but the moment your targets get more complex, you hit a ceiling. JavaScript rendering is either unavailable or severely throttled, and proxy options are restricted to datacenter IPs that get blocked on tougher sites.

- Advanced plan. Powerful, but expensive across the board. You get access to headless browsers, premium residential proxies, and anti-detection measures – but every request is priced as if it needs the full stack, even when it doesn’t.

The moment you need JavaScript rendering or stronger proxy infrastructure for even a handful of targets, you’re pushed into the higher tier, even if only a small fraction of your workload actually requires it.

In practice, this creates two problems:

- Overpaying for simple targets. Every lightweight request carries the cost overhead of advanced infrastructure you’re not using. A basic product page that could be scraped with a simple HTTP request ends up costing the same as a heavily protected, JavaScript-rendered dashboard. Across thousands of daily requests, this waste adds up fast.

- Hesitating to enable advanced features. Teams avoid turning on JS rendering or premium proxies because doing so raises costs across their entire workload – not just the requests that need it. This leads to scraping failures on tough targets, missed data, and manual workarounds that eat into engineering time.

Over time, this leads to inefficient scraping strategies. You either leave performance on the table by staying on the basic plan, or you blow through budget on the advanced plan for requests that didn’t need the extra firepower. Teams end up building fragile workarounds: separate pipelines for simple versus complex targets, custom retry logic to handle failures on the cheaper tier, or manual escalation processes that slow everything down.

Scraping shouldn’t force you to choose between underpowered and overpriced.

Scraping isn’t all or nothing

Most real-world scraping pipelines follow a predictable distribution. The vast majority of targets are straightforward: static product pages, directory listings, and public datasets. A smaller segment involves moderate complexity: pages with lazy-loaded content, client-side rendering, or light anti-bot measures. And a relatively small share of targets are genuinely difficult: heavily protected sites, single-page applications, or platforms with aggressive fingerprinting.

A typical breakdown looks something like this:

- ~70% simple, static pages. Standard HTML that can be fetched with a basic HTTP request and parsed with minimal overhead. No JavaScript execution needed, no special proxy requirements.

- ~20% moderately dynamic targets. Pages with some client-side rendering, lazy-loaded content, or light protection layers that require a headless browser or mid-tier proxy to access reliably.

- ~10% heavily protected or JS-heavy sites. Targets that demand full browser rendering, premium residential proxies, and sophisticated anti-detection measures to return usable data.

Yet pricing models rarely account for that distribution. Instead of adapting to what your targets actually require, they force every request into the same plan – and the same price bracket. The result is a structural mismatch between how scraping works in practice and how it’s billed. Teams running diverse pipelines end up subsidizing their simplest requests with infrastructure meant for their hardest ones.
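To make that mismatch concrete, here is a rough back-of-the-envelope sketch in Python. Only the 70/20/10 split comes from the breakdown above. The per-1,000-request prices are invented purely for illustration and are not actual rates for any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    name: str
    share: float         # fraction of total requests (from the 70/20/10 split)
    price_per_1k: float  # HYPOTHETICAL price per 1,000 requests, in USD


# Assumed prices for illustration only; not real rates.
SEGMENTS = [
    Segment("simple, static pages", 0.70, 0.50),
    Segment("moderately dynamic targets", 0.20, 2.00),
    Segment("heavily protected or JS-heavy sites", 0.10, 5.00),
]


def flat_cost(total_requests: int, flat_price_per_1k: float) -> float:
    """Cost when every request is billed at one tier's price."""
    return total_requests / 1000 * flat_price_per_1k


def blended_cost(total_requests: int, segments: list[Segment]) -> float:
    """Cost when each segment is billed at its own per-request price."""
    return sum(
        total_requests * s.share / 1000 * s.price_per_1k for s in segments
    )


if __name__ == "__main__":
    total = 1_000_000
    # Flat tier: everything is billed at the most expensive segment's price.
    flat = flat_cost(total, flat_price_per_1k=5.00)
    blended = blended_cost(total, SEGMENTS)
    print(f"flat tier:   ${flat:,.2f}")
    print(f"per-request: ${blended:,.2f}")
    print(f"difference:  {1 - blended / flat:.0%}")
```

With these made-up prices, one million requests cost $5,000 on a flat tier and $1,250 when priced per segment. The exact gap depends entirely on the prices you plug in, but the shape of the result holds whenever most of the workload is simple.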

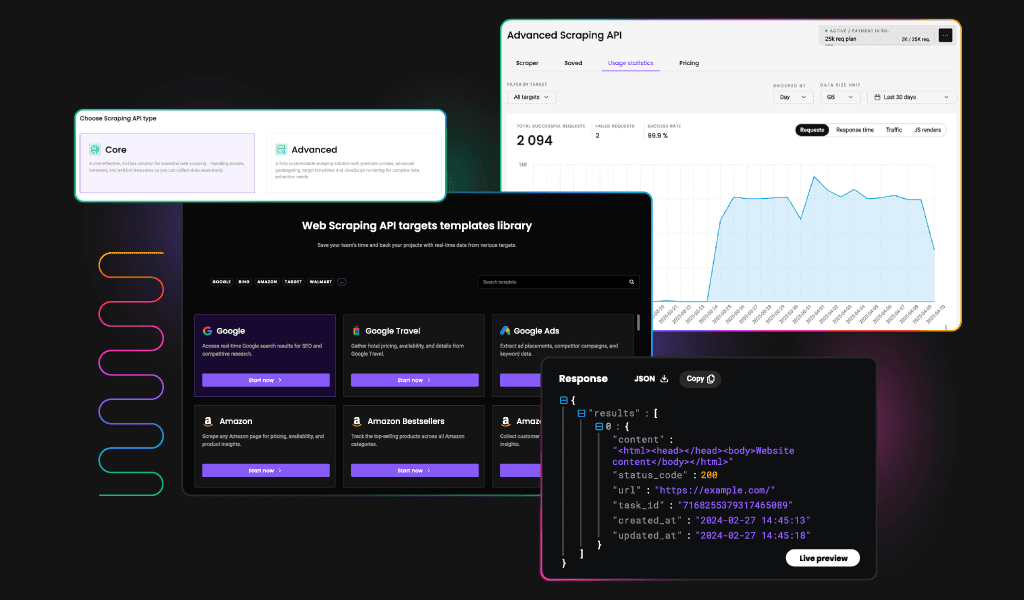

Introducing a new model

We’re launching a new scraping API built around a straightforward principle: scraping should adapt to your targets, not the other way around. Instead of choosing a plan that determines what infrastructure every request uses, you configure each request individually based on what the target actually needs.

With our upcoming modular scraping API, you’ll be able to:

- Enable JavaScript rendering only when required. Keep it off by default and activate it per request for targets that actually need it. This alone can dramatically reduce costs for pipelines where the majority of pages are static HTML. No more paying for headless browser sessions on targets that don’t use client-side rendering.

- Select proxy strength based on target complexity. Use lightweight datacenter proxies for easy sites and reserve premium residential infrastructure for the ones that demand it. Match your proxy spend to each target’s protection level instead of applying the most expensive option across the board.

- Configure request power at the individual level. Every request can carry its own settings – rendering mode, proxy type, retry behavior, and timeout thresholds. Nothing is over- or under-provisioned. Your configuration reflects the actual requirements of each target, not a one-size-fits-all plan.

- Use one API for both simple and advanced sites. No more juggling separate tools, providers, or configurations for different target types. A single integration handles everything from static product pages to JavaScript-heavy dashboards, with cost scaling to match.

Instead of committing to a fixed tier, the cost reflects the actual complexity of each request. Simple sites stay lightweight and cheap. Advanced features stay available when you need them. And mixed workloads – which represent the majority of real-world scraping pipelines – finally make economic sense.
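As a rough illustration, per-request configuration on the client side could look something like the Python sketch below. This post does not document the API itself, so every name here is a placeholder: the fields `render_js`, `proxy`, `max_retries`, and `timeout_s`, the three preset names, and the payload shape are all assumptions, not the actual interface.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class ProxyType(str, Enum):
    # Hypothetical proxy options mirroring the ones named in this article.
    DATACENTER = "datacenter"
    RESIDENTIAL = "residential"


@dataclass(frozen=True)
class RequestConfig:
    """Settings carried by a single request (all field names are assumed)."""
    url: str
    render_js: bool = False                  # off by default, enabled per request
    proxy: ProxyType = ProxyType.DATACENTER  # cheapest option by default
    max_retries: int = 2
    timeout_s: float = 30.0


# Hypothetical presets for the three kinds of target described earlier.
PRESETS = {
    "static": {},
    "dynamic": {"render_js": True},
    "protected": {
        "render_js": True,
        "proxy": ProxyType.RESIDENTIAL,
        "max_retries": 4,
        "timeout_s": 60.0,
    },
}


def config_for(url: str, profile: str) -> RequestConfig:
    """Build a config from a preset; unknown profiles raise KeyError."""
    return RequestConfig(url=url, **PRESETS[profile])


def to_payload(cfg: RequestConfig) -> dict:
    """Turn a config into a JSON-serializable dict (payload shape is assumed)."""
    payload = asdict(cfg)
    payload["proxy"] = cfg.proxy.value  # store the plain string, not the Enum
    return payload


if __name__ == "__main__":
    targets = [
        ("https://example.com/products/1", "static"),
        ("https://example.com/dashboard", "protected"),
    ]
    for url, profile in targets:
        print(json.dumps(to_payload(config_for(url, profile))))
```

The point of the sketch is the default: a request starts with the cheapest settings and opts in to rendering or stronger proxies only when the target calls for it.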

What this means for you

If you’re currently dealing with any of the following, this model was designed with your workflow in mind:

- Scraping mostly simple targets but paying for an advanced plan to cover the occasional tough site. You’re spending premium rates on thousands of requests that could be handled at a fraction of the cost, just because a small percentage of your targets need stronger infrastructure.

- Avoiding JS rendering because enabling it increases cost across your entire workload. You know some targets would benefit from full browser rendering, but turning it on means every request – including the simple ones – gets more expensive. So you work around it, accept lower data quality, or skip those targets entirely.

- Juggling multiple scraping setups, providers, or configurations for different site types. You’ve built separate pipelines or stitched together different services to handle the range of complexity in your targets. It works, but it’s fragile, hard to maintain, and introduces unnecessary overhead.

The modular model is especially powerful for:

- Price monitoring and eCommerce tracking. Thousands of product pages with varying levels of protection, where per-request cost control compounds into significant savings. A retailer tracking competitor pricing across dozens of sites shouldn’t pay the same rate for a basic product listing page as for a heavily protected marketplace.

- Market intelligence workflows. Diverse source types ranging from static directories to dynamic dashboards, each with different scraping requirements. Analysts pulling from government databases, news sites, and proprietary platforms need infrastructure that flexes with the source, not a flat rate that ignores the difference.

- Agencies managing multiple client targets. Different clients mean different sites with different complexity profiles, all running through one pipeline. A single API that adapts to each target simplifies operations and makes client-level cost attribution straightforward.

- Any pipeline where target complexity varies. If your scraping isn’t uniform, your pricing shouldn’t be either. The modular approach aligns cost with effort across the full spectrum of your targets.
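For the agency case, client-level cost attribution can be sketched in a few lines of Python. The sketch assumes you keep your own log of requests, each tagged with a `client` label and a `credits` cost. Both field names, the credit values, and the log format are hypothetical and not part of any documented API.

```python
from collections import defaultdict


def credits_by_client(log: list[dict]) -> dict[str, int]:
    """Sum the (hypothetical) per-request credit cost for each client tag."""
    totals: defaultdict[str, int] = defaultdict(int)
    for entry in log:
        totals[entry["client"]] += entry["credits"]
    return dict(totals)


# Made-up sample log; the credit values are illustrative only.
SAMPLE_LOG = [
    {"client": "acme", "profile": "static", "credits": 1},
    {"client": "acme", "profile": "protected", "credits": 10},
    {"client": "globex", "profile": "static", "credits": 1},
    {"client": "globex", "profile": "dynamic", "credits": 4},
    {"client": "globex", "profile": "static", "credits": 1},
]

if __name__ == "__main__":
    for client, credits in sorted(credits_by_client(SAMPLE_LOG).items()):
        print(f"{client}: {credits} credits")
```

Because each request carries its own cost, the per-client total is a plain sum rather than an estimated split of a shared flat fee.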

Ready for cost-efficient scraping?

Control scraping power and cost per request without overpaying on simple targets.

Bottom line

The new scraping API will replace our current fixed-plan model with a modular, request-based approach. Rather than selecting a tier and hoping it covers your needs, you’ll configure each request to match the target – and pay accordingly.

If you’ve ever felt that scraping pricing didn’t match your actual workload, this is your chance to help shape something better.

About the author

Gabriele Vitke

Product Marketing Team Lead

Gabriele connects strategy, storytelling, and data to help products find their people. With over a decade of experience across SaaS, B2B, and biotech, she’s led rebrands, built go-to-market strategies, and turned complex tech into something clear and genuinely useful.

All information on Decodo Blog is provided on an as-is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.