Puppeteer vs. Playwright: Which Tool Is Better for Web Scraping?

Puppeteer vs. Playwright is a real architectural decision for any production scraping project. The two libraries share a common origin: Playwright was built at Microsoft by engineers who previously worked on Puppeteer at Google. Yet they differ in browser coverage, language bindings, and scraping ergonomics. For scraping specifically, performance, stealth, proxy integration, and parallel execution decide which tool fits your pipeline.

Justinas Tamasevicius

Last updated: Apr 24, 2026

8 min read

TL;DR

- Use Puppeteer for Node.js pipelines on Chrome or Firefox. You get direct CDP access and a mature ecosystem.

- Use Playwright for Python, Java, or C# teams, for WebKit coverage, or for high-parallelism scraping. You get per-context proxies and auto-wait built in.

- Neither tool is enough alone against enterprise anti-bot stacks – plan for Decodo's residential proxies and a managed scraping API for those targets.

Overview and key features of Puppeteer

Google's Chrome DevTools team released Puppeteer in 2017 as a Node.js library for driving Chromium and Chrome. It talks to the browser directly over the Chrome DevTools Protocol (CDP) – the same channel Chrome's own DevTools UI uses. Running npm install puppeteer downloads a matching Chrome for Testing build, so you get a working headless browser with no separate driver installation.

What Puppeteer handles natively

Out of the box, Puppeteer handles the common scraping needs without extra dependencies:

- Page navigation and DOM interaction through page.goto(), selectors, keyboard, and mouse input

- Screenshot and PDF generation at full-page or element scope

- Network request interception via page.setRequestInterception(), useful for blocking images or mocking responses during scraping

- In-page JavaScript execution through page.evaluate() for DOM extraction and scripted interactions

- Chrome DevTools features such as performance timelines, JavaScript coverage, and CPU/memory profiling

Why direct CDP access matters

Puppeteer calls CDP without a cross-browser abstraction layer. That keeps per-action overhead low and gives you direct access to Chromium's DevTools surface. For a scraping pipeline that also tracks page weight, memory footprint, or paint timings, direct CDP access is the shorter path. Playwright's higher-level API hides most of these knobs, and reaching them requires a CDPSession.

Where Puppeteer is narrower

Puppeteer v23 added production-ready Firefox support through WebDriver BiDi, the W3C bidirectional WebDriver protocol that adds event streaming to the classic request/response WebDriver. That narrows the Firefox gap against Playwright. WebKit remains unsupported. Teams that need to scrape Safari-specific rendering quirks, or that want to rotate across Chromium, Firefox, and WebKit to vary the detection surface, still hit this limit. By default, @puppeteer/browsers installs only Chrome for Testing – Firefox is opt-in.

The ecosystem worth knowing

Three packages are worth knowing for anyone building a Puppeteer scraper:

- puppeteer-extra – a wrapper around Puppeteer that adds a plugin system

- puppeteer-extra-plugin-stealth – patches common fingerprint vectors (navigator.webdriver, WebGL vendor strings, plugin arrays, chrome runtime quirks) to cut the obvious automation markers

- puppeteer-cluster – manages pools of concurrent Puppeteer instances for parallel scraping

Overview and key features of Playwright

Microsoft released Playwright in January 2020. The team included engineers who had built Puppeteer at Google. Their design goal was broader: one unified API across Chromium, Firefox, and WebKit, with official client libraries for JavaScript, TypeScript, Python, C#, and Java. The shared lineage explains why the APIs feel familiar – most method names and core concepts transfer directly from Puppeteer.

What Playwright adds

Beyond the shared scraping API, Playwright adds capabilities aimed at multi-target and multi-language pipelines:

- Cross-browser support through a single API surface for Chromium, Firefox, and WebKit

- Browser contexts – isolated sessions with separate cookies, storage, and network state inside one browser instance. The context API is more polished than Puppeteer's incognito-context equivalent and supports per-context proxies, which matters for parallel scraping.

- Auto-wait – before interacting with an element, Playwright waits for it to be visible, stable, able to receive events, and enabled. This removes most manual waitForSelector() calls and reduces flaky scrapes on slow or dynamic pages.

- Route-based network interception – page.route() handles request mocking or blocking cleanly at the context level. You can drop images, stylesheets, or ad scripts per context without a browser-wide flag.

- Built-in tracing – trace recording (screenshots, network logs, and DOM snapshots per action) ships with the test runner and is also available in the library through context.tracing. The trace viewer is useful for debugging broken scrapes even outside a testing context.

- Playwright MCP – an integration pattern that exposes Playwright browser control to AI agents through the Model Context Protocol. Microsoft maintains an official server, and Decodo's Playwright MCP guide explains the Execute Automation variant for agent-driven scraping. Playwright 1.59 added browser.bind() for shared MCP/CLI sessions and page.screencast for annotated agent recordings.
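Context-level routing and tracing can be combined in one short script. Below is a minimal sketch using Playwright's Python API. The blocked resource types and the names should_block and scrape_with_blocking are illustrative choices, not part of Playwright. Tune the set per target, because some sites need their stylesheets or fonts to lay out the content you want to extract.

```python
# Illustrative set of resource types to drop; adjust per target.
BLOCKED_RESOURCE_TYPES = frozenset({"image", "media", "font", "stylesheet"})


def should_block(resource_type: str) -> bool:
    """Return True if requests of this Playwright resource type should be aborted."""
    return resource_type in BLOCKED_RESOURCE_TYPES


def scrape_with_blocking(url: str, trace_path: str = "trace.zip") -> str:
    """Open url in a context that drops heavy resources and records a trace."""
    # Imported lazily so should_block() works without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        context = browser.new_context()
        # Capture screenshots and DOM snapshots for each action in this context.
        context.tracing.start(screenshots=True, snapshots=True)

        def handle(route):
            # Registered on this context only, so other contexts are unaffected.
            if should_block(route.request.resource_type):
                route.abort()
            else:
                route.continue_()

        context.route("**/*", handle)
        page = context.new_page()
        page.goto(url)
        title = page.title()
        context.tracing.stop(path=trace_path)
        browser.close()
        return title
```

Calling scrape_with_blocking("https://example.com") returns the page title and writes trace.zip. You can open that file with playwright show-trace trace.zip.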

For a practical walkthrough, Playwright Web Scraping: A Practical Tutorial covers the end-to-end pipeline.

What Puppeteer and Playwright share

Most scraping work looks identical in both tools. The shared lineage shows in the API shape. For anyone weighing migration cost or picking between them for the first time, the similarities outweigh the differences.

API shape

Both share the same conceptual model: launch a browser, create a page, navigate, interact, and extract. Most method names (page.goto, page.click, page.waitForSelector, page.evaluate) are either identical or near-identical. A developer reading either library's code can switch between them without looking up equivalents.

Node.js-first language support

JavaScript and TypeScript get first-class treatment in both. TypeScript definitions ship with each library, and the development experience inside a Node.js environment is comparable – same linting, same debugger attachment, same module resolution.

Headless and headful modes

Both tools run with or without a visible browser window. Both default to headless for production. Both support launching with DevTools open for debugging.

Dynamic content handling

Both render JavaScript and wait for DOM changes before extracting data. Single-page apps, React or Vue frontends, and sites that stream content on scroll work in either tool. How to scrape websites with dynamic content covers the general strategy that applies to both.
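Infinite-scroll pages need one extra step in either tool. A common pattern is to scroll, wait, and stop once the item count stops growing. The sketch below keeps that loop browser-agnostic by injecting two callables. scroll_until_stable, its patience parameter, and the selector in the usage comment are hypothetical.

```python
from typing import Callable


def scroll_until_stable(
    count_items: Callable[[], int],
    scroll_once: Callable[[], None],
    max_rounds: int = 20,
    patience: int = 2,
) -> int:
    """Scroll until the item count stops growing for `patience` rounds in a row.

    Returns the last observed item count. Both callables are injected so the
    loop can be exercised without a browser.
    """
    last = count_items()
    unchanged = 0
    for _ in range(max_rounds):
        scroll_once()
        current = count_items()
        if current > last:
            last, unchanged = current, 0
        else:
            unchanged += 1
            if unchanged >= patience:
                break
    return last


# Usage with a Playwright page (".item" is a placeholder selector):
# items = page.locator(".item")
# def scroll():
#     page.mouse.wheel(0, 4000)
#     page.wait_for_timeout(1000)
# total = scroll_until_stable(items.count, scroll)
```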

Core scraping primitives

Screenshot capture, PDF generation, form submission, cookie management, local storage access, and file download handling work in both libraries. API differences are largely cosmetic.

Proxy support

Both accept a proxy server URL at launch, and both handle authenticated proxies. Configuration syntax differs, but the basic capability is equivalent.
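Because the configuration syntax differs, it can help to keep one proxy URL per identity and convert it for each tool. Below is a sketch of the Playwright side in Python. It assumes URLs of the form scheme://user:pass@host:port, and the helper name playwright_proxy is hypothetical. On the Puppeteer side, the host and port go into the --proxy-server launch argument and the credentials into page.authenticate().

```python
from urllib.parse import unquote, urlsplit


def playwright_proxy(proxy_url: str) -> dict:
    """Convert 'scheme://user:pass@host:port' into Playwright's proxy settings dict."""
    parts = urlsplit(proxy_url)
    if not parts.hostname or not parts.port:
        raise ValueError(f"proxy URL needs a host and port: {proxy_url!r}")
    settings = {"server": f"{parts.scheme}://{parts.hostname}:{parts.port}"}
    if parts.username:
        # Credentials may be percent-encoded in the URL; Playwright expects raw values.
        settings["username"] = unquote(parts.username)
        settings["password"] = unquote(parts.password or "")
    return settings


# Usage (proxy.example.com is a placeholder):
# context = browser.new_context(proxy=playwright_proxy("http://user:pass@proxy.example.com:8080"))
```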

OS and container support

Either tool runs on Windows, Linux, and macOS. Both work inside Docker containers with the usual Chromium caveats, like --no-sandbox or a properly configured user namespace on containerized Linux.

Anti-bot plugin ecosystem

The puppeteer-extra-plugin-stealth package works with both tools – Playwright uses it via playwright-extra. When configured identically, stealth capabilities are broadly equivalent on Chromium.

Where Puppeteer and Playwright diverge

The shared foundation makes the differences easier to isolate. Each dimension has a practical scraping consequence.

| Dimension | Puppeteer | Playwright |
|---|---|---|
| Developer | Google Chrome DevTools team | Microsoft |
| Initial release | 2017 | January 2020 |
| Language support | JavaScript, TypeScript | JavaScript, TypeScript, Python, C#, Java |
| Browser support | Chrome + Firefox (via WebDriver BiDi, stable since v23) | Chromium, Firefox, WebKit |
| Auto-waiting | Manual waitForSelector by default; opt-in via the page.locator() API | Built-in for actionable states |
| Parallel execution | Manual (puppeteer-cluster, multiple processes) | Native browser contexts, built-in test runner |
| Protocol depth | Direct CDP, full DevTools access | Higher-level API; CDP available through CDPSession |
| Default install footprint | Chrome for Testing (Firefox is opt-in) | Chromium, Firefox, and WebKit binaries |
| Single-page scrape speed | Comparable on Chrome | Comparable on Chrome; more efficient on multi-context workloads |

Language support in practice

Python, Java, and C# teams get a first-class path with Playwright. The Python API matches the JavaScript version closely and supports both sync and async patterns. Puppeteer's only Python option is the Pyppeteer port, which the project itself now flags as unmaintained. Between these two libraries, Playwright is the only production-ready option for non-Node.js teams.

Browser support and scraping targets

Puppeteer v23 covers Chrome and Firefox, so the browser-coverage gap narrows to WebKit. That gap starts mattering when a target serves different content by User-Agent, applies different bot detection rules to WebKit traffic, or uses Safari-specific rendering quirks that appear only in WebKit. Playwright's ability to run across all three engines through a single API gives you a broader fallback without rewriting the rest of the pipeline.

Auto-waiting and flake reduction

Playwright waits for elements to be visible, stable, able to receive events, and enabled before acting. A Puppeteer script built on the classic page methods needs explicit waitForSelector calls, and often extra waitForFunction checks, to reach the same reliability. Puppeteer's newer Locator API (page.locator()) adds similar precondition checks, but it is opt-in rather than the default path. On a scraper working across hundreds of varied targets, auto-wait can reduce flaky runs caused by timing mismatches.
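The mechanism behind auto-wait can be modeled in a few lines. The sketch below polls a set of named preconditions and acts only once all of them pass. If they never pass, it fails with a timeout that names the blockers. This is a simplified model, not Playwright's implementation, and act_when_ready is a hypothetical helper. In Playwright itself, a call such as page.locator("button.load-more").click() performs these checks for you.

```python
import time
from typing import Callable, Dict


def act_when_ready(
    checks: Dict[str, Callable[[], bool]],
    action: Callable[[], None],
    timeout_s: float = 5.0,
    poll_s: float = 0.1,
) -> None:
    """Poll every precondition until all pass, then run the action once.

    Raises TimeoutError naming the checks that were still failing at the deadline.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        # Evaluate every check on each poll so the error can name all blockers.
        failing = [name for name, check in checks.items() if not check()]
        if not failing:
            action()
            return
        if time.monotonic() >= deadline:
            raise TimeoutError(f"element not actionable: {', '.join(failing)}")
        time.sleep(poll_s)
```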

CDP access as a trade-off

Puppeteer's direct CDP access enables performance profiling, JavaScript coverage reporting, and fine-grained network control that Playwright exposes only through its lower-level CDPSession. Pure scraping rarely needs any of this. CDP depth matters in monitoring-heavy or quality-assurance hybrid workflows – for example, a scraper that also records Core Web Vitals, or one that tracks render regressions across deployments.
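When you do need CDP from Playwright, the Python API opens a session per page through context.new_cdp_session(). The sketch below assumes a Chromium launch, because CDP sessions are Chromium-only. It reads the DevTools Performance.getMetrics counters that Puppeteer wraps as page.metrics(). metrics_to_dict and page_metrics are hypothetical names.

```python
from typing import Dict


def metrics_to_dict(response: dict) -> Dict[str, float]:
    """Flatten a CDP Performance.getMetrics response into {name: value}."""
    return {m["name"]: m["value"] for m in response.get("metrics", [])}


def page_metrics(url: str) -> Dict[str, float]:
    """Load url in Chromium and return DevTools performance counters."""
    # Imported lazily so metrics_to_dict() works without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)  # CDP sessions are Chromium-only
        context = browser.new_context()
        page = context.new_page()
        cdp = context.new_cdp_session(page)
        cdp.send("Performance.enable")
        page.goto(url)
        metrics = metrics_to_dict(cdp.send("Performance.getMetrics"))
        browser.close()
        return metrics
```

For example, page_metrics("https://example.com")["JSHeapUsedSize"] gives the JavaScript heap in use after load.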

Parallel scraping efficiency

Playwright's browser contexts let you run isolated sessions with separate cookies, localStorage, and network routing inside one browser process. Thirty contexts use far less memory than thirty separate browsers. Puppeteer's equivalent is either one incognito context per session (similar idea, less polished) or one browser process per worker through puppeteer-cluster's CONCURRENCY_BROWSER mode. The latter carries a full browser's memory overhead per identity instead of sharing one process. For high-concurrency scraping, Playwright's model uses resources more efficiently.
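Here is a minimal async sketch of that model in Playwright's Python API. It uses one Chromium process and a handful of isolated contexts, and spreads URLs across them round-robin. The function names and the default of four contexts are illustrative assumptions. A real pipeline would add per-context proxies, retries, and error handling, which are omitted here.

```python
import asyncio
from typing import Dict, List, Sequence


def split_round_robin(items: Sequence[str], n: int) -> List[List[str]]:
    """Distribute items across n buckets: item i goes to bucket i % n."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return [list(items[i::n]) for i in range(n)]


async def scrape_titles_in_contexts(urls: Sequence[str], n_contexts: int = 4) -> Dict[str, str]:
    """Fetch page titles using n_contexts isolated contexts in one browser."""
    # Imported lazily so split_round_robin() works without Playwright installed.
    from playwright.async_api import async_playwright

    results: Dict[str, str] = {}
    async with async_playwright() as p:
        # A single Chromium process shared by every context.
        browser = await p.chromium.launch(headless=True)

        async def worker(batch: List[str]) -> None:
            # Each context has its own cookies, storage, and optional proxy.
            context = await browser.new_context()
            page = await context.new_page()
            for url in batch:
                await page.goto(url)
                results[url] = await page.title()
            await context.close()

        batches = [b for b in split_round_robin(urls, n_contexts) if b]
        await asyncio.gather(*(worker(b) for b in batches))
        await browser.close()
    return results
```

Run it with asyncio.run(scrape_titles_in_contexts(urls, n_contexts=4)). Each context processes its batch sequentially, while the contexts themselves run concurrently.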

Performance on single pages

Any performance difference between the two libraries is small in real-world runs. Proxy latency, anti-bot stalling, and page complexity dominate any library-level gap. Don't choose on raw speed alone.

Suitability for web scraping

Comparison tables help you pick a tool. They don't tell you how to survive a real target. Production scraping encounters CAPTCHAs, IP bans, and enterprise anti-bot systems – and each library leaves gaps there that external infrastructure usually closes.

Stealth and anti-bot handling

Neither tool is stealthy by default. Both set navigator.webdriver = true and leave enough automation markers that a competent anti-bot vendor flags them quickly.

Puppeteer approach. puppeteer-extra combined with puppeteer-extra-plugin-stealth patches the common fingerprint vectors – navigator.webdriver, WebGL vendor and renderer strings, plugin and mime-type arrays, chrome runtime properties. New detection methods sometimes appear before the plugin has a matching patch, which is normal for any volunteer-maintained project – detection techniques evolve quickly.

Playwright approach. playwright-extra reuses the same stealth plugin. Playwright also exposes addInitScript(), which overrides browser properties per context and gives more granular control than a browser-wide patch. Running on WebKit can bypass detection rules written specifically against Chromium signals.
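In Playwright's Python API, addInitScript() is called add_init_script(). The sketch below builds a script that redefines selected navigator getters before any page script runs. navigator_override_script is a hypothetical name, and the override values are examples only. Overrides like this are themselves detectable, for instance because the replaced getter no longer looks native, so treat this as one layer rather than a fix.

```python
import json
from typing import Any, Mapping


def navigator_override_script(overrides: Mapping[str, Any]) -> str:
    """Build JS that redefines navigator getters on Navigator.prototype."""
    lines = []
    for prop, value in overrides.items():
        # json.dumps yields valid JS literals for strings, booleans, numbers, and lists.
        lines.append(
            "Object.defineProperty(Navigator.prototype, "
            f"{json.dumps(prop)}, {{ get: () => {json.dumps(value)} }});"
        )
    return "\n".join(lines)


# Usage with a Playwright context; runs in every page the context opens:
# context.add_init_script(script=navigator_override_script({"webdriver": False}))
```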

Beyond stealth plugins. For targets that compare canvas hashes, WebGL parameters, AudioContext fingerprints, or enumerated fonts, stealth plugins alone aren't enough. Several alternatives address different detection vectors:

- Camoufox is a Firefox-based browser with deeper fingerprint spoofing built in

- nodriver drives Chrome over CDP directly, without a WebDriver or Selenium layer, which removes many of the automation markers that detection rules rely on

- Patchright (a patched Playwright) and rebrowser-patches (for both Puppeteer and Playwright) close the Runtime.Enable CDP leak. Enterprise anti-bot vendors like Cloudflare and DataDome specifically detect this leak, and it is one of the main ways CDP-based automation gets flagged

For the harder fingerprinting checks, how to bypass CreepJS covers the evasion steps. The broader anti-scraping overview covers the detection taxonomy.

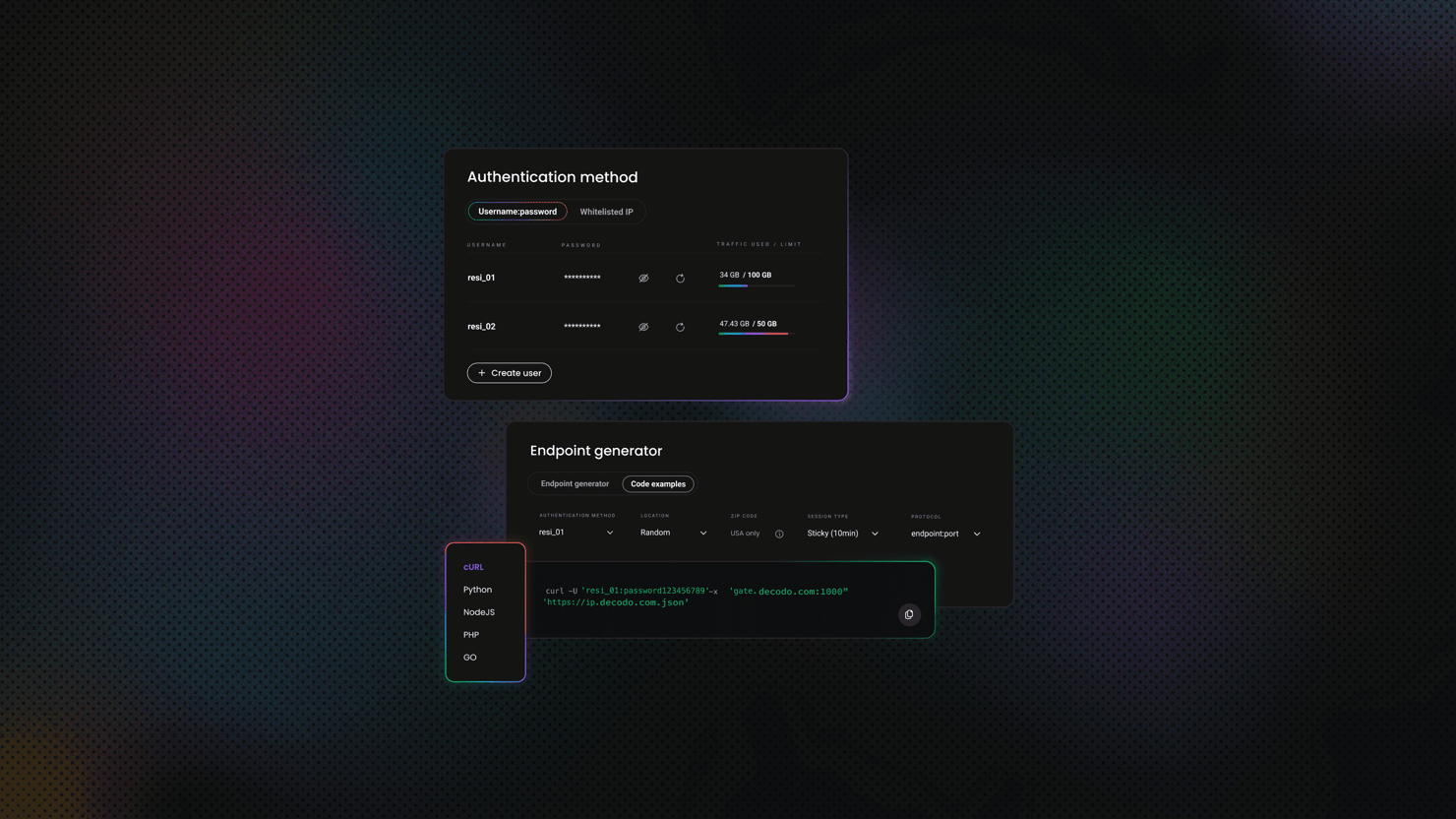

Proxy configuration

Both tools accept an upstream proxy. They differ in how authentication works and how many concurrent identities you can attach to one browser process.

Puppeteer – authenticated proxy, Hacker News scrape:

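Puppeteer doesn't accept proxy credentials at launch, so you call page.authenticate() on each page before navigation. The --proxy-server launch flag sets one upstream proxy for every page in the browser. browser.createBrowserContext() also accepts a proxyServer option, but credentials still go through page.authenticate() on each page. As a result, per-identity routing takes more wiring than in Playwright, or one browser per identity.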

Playwright – per-context proxy, Hacker News scrape in Python:

Per-context proxy routing separates Playwright from Puppeteer in this dimension. Two contexts inside one browser process can route through different exit IPs – one scraper, many identities, one process. Multi-target pipelines often want exactly that. Playwright also supports a browser-level proxy that applies globally, so the per-context option is opt-in when isolation matters.
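Below is a sketch of the per-context pattern in Python. It scrapes Hacker News story titles through two identities inside one browser. The proxy server URL and credentials are placeholders, and context_proxies and scrape_hn_titles are hypothetical names. The span.titleline > a selector is an assumption about current Hacker News markup and may change.

```python
from typing import Dict, List


def context_proxies(server: str, identities: Dict[str, str]) -> List[dict]:
    """One Playwright proxy dict per identity (username -> password)."""
    return [
        {"server": server, "username": user, "password": password}
        for user, password in identities.items()
    ]


def scrape_hn_titles(proxies: List[dict], url: str = "https://news.ycombinator.com/") -> List[List[str]]:
    """Scrape story titles once per proxy identity, all inside one browser process."""
    # Imported lazily so context_proxies() works without Playwright installed.
    from playwright.sync_api import sync_playwright

    results = []
    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        for proxy in proxies:
            # Each context gets its own exit IP, cookies, and storage.
            context = browser.new_context(proxy=proxy)
            page = context.new_page()
            page.goto(url)
            results.append(page.locator("span.titleline > a").all_inner_texts())
            context.close()
        browser.close()
    return results


# Usage (placeholder gateway and credentials):
# titles = scrape_hn_titles(
#     context_proxies("http://proxy.example.com:8080", {"user-a": "pass-a", "user-b": "pass-b"})
# )
```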

When to use residential IPs

Targets that rate-limit or block datacenter IP ranges – most eCommerce, travel, and financial data sites – usually need residential addresses. Decodo residential proxies work with both tools and support sticky sessions, which hold the same exit IP for up to 24 hours. The username follows a user-<username>-session-<id>-sessionduration-<minutes> pattern – the session ID pins the exit IP, and the duration sets how long the pool holds it (see the residential proxy quick start docs for plain and sticky session formats). For login-gated scraping, pairing sticky sessions with Playwright's per-context proxy routing helps keep the authentication cookie and exit IP aligned for the session's lifetime.
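Building that username in code keeps the session ID and duration in one place. This sketch follows the pattern quoted above. The 1 to 1,440 minute range reflects the stated 24-hour maximum plus an assumed lower bound of 1. The gateway address is a placeholder; take the real one from Decodo's quick start docs. sticky_username and sticky_proxy are hypothetical helpers.

```python
# Upper bound from the 24-hour limit; the 1-minute lower bound is an assumption.
MIN_STICKY_MINUTES = 1
MAX_STICKY_MINUTES = 24 * 60


def sticky_username(username: str, session_id: str, minutes: int) -> str:
    """Build a user-<username>-session-<id>-sessionduration-<minutes> login."""
    if not MIN_STICKY_MINUTES <= minutes <= MAX_STICKY_MINUTES:
        raise ValueError(
            f"minutes must be between {MIN_STICKY_MINUTES} and {MAX_STICKY_MINUTES}"
        )
    return f"user-{username}-session-{session_id}-sessionduration-{minutes}"


def sticky_proxy(server: str, username: str, password: str, session_id: str, minutes: int) -> dict:
    """Playwright proxy settings that pin one exit IP for the session's lifetime."""
    return {
        "server": server,
        "username": sticky_username(username, session_id, minutes),
        "password": password,
    }


# Usage with a Playwright context (placeholder gateway):
# context = browser.new_context(
#     proxy=sticky_proxy("http://GATEWAY_HOST:PORT", "myuser", "mypass", "login42", 30)
# )
```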

CAPTCHA handling

Neither tool solves CAPTCHAs natively. Both integrate with third-party solvers through the same pattern: detect the challenge, send the page or site key to a solving service, and inject the returned token.

- Puppeteer – puppeteer-extra-plugin-recaptcha automates the integration with 2Captcha and similar services. How To Bypass CAPTCHA with Puppeteer covers the full flow.

- Playwright – the same approach is available via playwright-extra, or you can inject a CAPTCHA token manually with page.evaluate().

- General reference – How To Bypass CAPTCHAs: The Ultimate Guide covers the trade-offs between solving services and detection strategies that apply to both tools.
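The detect, solve, inject pattern can be sketched in Playwright's Python API for reCAPTCHA v2. The sketch assumes the widget exposes its site key in a data-sitekey attribute and reads the token from the hidden #g-recaptcha-response textarea. solve is a hypothetical callable that you would implement around your solving service's client. Some sites also expect a JavaScript callback to fire after the token is set, which this sketch does not handle.

```python
import re
from typing import Callable, Optional

# reCAPTCHA v2 widgets usually carry the site key in a data-sitekey attribute.
SITEKEY_RE = re.compile(r'data-sitekey=["\']([\w-]+)["\']')


def find_site_key(html: str) -> Optional[str]:
    """Return the first reCAPTCHA site key in the HTML, or None."""
    match = SITEKEY_RE.search(html)
    return match.group(1) if match else None


def solve_and_inject(page, solve: Callable[[str, str], str]) -> bool:
    """Detect a site key, ask `solve(site_key, page_url)` for a token, and inject it.

    Returns False when no challenge is found. `page` is a Playwright sync Page.
    """
    site_key = find_site_key(page.content())
    if site_key is None:
        return False
    token = solve(site_key, page.url)
    # reCAPTCHA v2 reads the response token from this hidden textarea on submit.
    page.evaluate(
        "token => { document.querySelector('#g-recaptcha-response').value = token; }",
        token,
    )
    return True
```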

Where both tools reach their limits

Targets protected by enterprise-grade anti-bot systems, such as Cloudflare Enterprise, Akamai Bot Manager, and DataDome, use behavioral analysis and TLS fingerprinting that no amount of client-side patching can fully defeat. Stealth plugins can reduce the surface. Residential proxies can reduce the IP risk. Neither can reliably solve the JA3/JA4 TLS fingerprint problem. Nor can they reproduce the human mouse-movement and keystroke-timing patterns these systems analyze.

At that point, the engineering cost of chasing each detection update shifts the build-versus-buy trade-off toward buying:

- Decodo Web Scraping API handles JavaScript rendering, proxy rotation, common CAPTCHA challenges, and browser fingerprint management as a managed service. The pipeline sends a URL and receives rendered HTML or structured data – the Web Scraping API quick start docs cover the request format and supported targets.

- Decodo Site Unblocker fits teams that want to keep their own scraping code but delegate the unblocking layer – the Site Unblocker quick start docs show the proxy-endpoint format.

Both reduce the infrastructure burden of running, maintaining, and patching a stealth browser farm internally.

Which tool fits which scenario

The call depends on language stack, cross-browser needs, and pipeline complexity.

Use Puppeteer when:

- The pipeline is Node.js-only

- Targets render correctly in Chrome, and cross-browser coverage isn't a requirement

- You need direct CDP access for performance monitoring, coverage data, or fine-grained network control alongside scraping

- You are extending an existing Puppeteer codebase, and the migration cost outweighs the ergonomics gap

Use Playwright when:

- The team works in Python, Java, or C#

- The pipeline scrapes many targets in parallel and benefits from isolated browser contexts

- Cross-browser rendering differences need to be tested, or the target blocks Chromium specifically, and WebKit is the fallback

- You want better ergonomics (auto-wait, per-context proxies, a trace viewer) without adding extra plugins


Bottom line

Puppeteer gives you a Chrome-first library with stable Firefox support, direct CDP access, and a mature Node.js ecosystem. Playwright gives you broader coverage across three browser engines and a wider set of language SDKs. It also offers better parallel execution ergonomics and more polished scraping tooling without extra plugins.

For most JavaScript-rendered targets on Chrome, either tool works. Playwright's auto-wait and per-context proxies matter more as pipeline complexity grows. Both tools require external components – stealth plugins, rotating residential proxies, CAPTCHA solvers – for anything beyond basic scraping. For enterprise-grade anti-bot targets, a managed scraping API removes most of the infrastructure overhead. Pick a language stack and concurrency first; add proxies, stealth, and managed infrastructure as the targets require.


About the author

Justinas Tamasevicius

Director of Engineering

Justinas Tamaševičius is Director of Engineering with over two decades of expertise in software development. What started as a self-taught passion during his school years has evolved into a distinguished career spanning backend engineering, system architecture, and infrastructure development.

Connect with Justinas via LinkedIn.

All information on Decodo Blog is provided on an as is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.