Puppeteer vs. Selenium: Which Tool Should You Use for Web Scraping?

Puppeteer and Selenium are the 2 most-used browser automation tools for scraping JavaScript-heavy pages. Comparing Puppeteer vs. Selenium for web scraping isn't just about speed. Browser support, language support, and anti-bot handling all play a role. This guide covers what each tool does well, what it doesn't, and how to pick the right one.

Mykolas Juodis

Last updated: May 07, 2026

TL;DR

- Puppeteer is Google's Node.js library that drives Chrome directly. It's faster and lighter, with Firefox support since v23.

- Selenium is a cross-language, cross-browser framework that supports Java, Python, C#, Ruby, Kotlin, and JavaScript.

- For Chrome-only scraping in a Node.js stack, Puppeteer wins on speed and setup.

- For polyglot teams, cross-browser scraping, or QA-plus-scraping work, Selenium is the better fit.

- Both struggle against serious anti-bot systems like Cloudflare, Akamai, or DataDome – that's where a managed Web Scraping API earns its keep.

What is Puppeteer?

Puppeteer is a Node.js library from Google's Chrome team that drives Chrome (and, since v23, Firefox) through the Chrome DevTools Protocol (CDP) or WebDriver BiDi. Put simply – it lets your script act like a real user clicking through pages, except it happens in milliseconds and nobody's touching the mouse.

By default, Puppeteer runs in headless mode (no visible UI), which is what you want for scraping. If you've never worked with one before, our primer on what is a headless browser covers the basics.

What Puppeteer does well:

- Navigating pages, filling forms, clicking buttons, waiting for elements to load.

- Capturing screenshots and PDFs of rendered pages.

- Intercepting network requests, which is handy for blocking ads or faking API responses.

- Running JavaScript inside the page, so you can pull data straight out of live app state.
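
As a rough illustration of the request interception point, the sketch below aborts requests for heavy resource types before they load. It assumes page is a Puppeteer Page object; the BLOCKED_TYPES list and the enableBlocking helper name are illustrative choices, not part of Puppeteer.

```javascript
// Resource types to skip. This list is an illustrative assumption.
const BLOCKED_TYPES = new Set(['image', 'media', 'font', 'stylesheet']);

// Decide whether a request should be aborted based on its resource type.
function shouldBlock(resourceType) {
  return BLOCKED_TYPES.has(resourceType);
}

// Attach the filter to a Puppeteer page (hypothetical helper name).
async function enableBlocking(page) {
  await page.setRequestInterception(true);
  page.on('request', (request) => {
    if (shouldBlock(request.resourceType())) {
      request.abort();
    } else {
      request.continue();
    }
  });
}
```

Calling enableBlocking(page) before page.goto() would apply the filter to every request the page makes, which usually cuts bandwidth and load time when only text is needed.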

Where it falls short:

- It's Node.js only. Python developers are out of luck here (more on that later).

- Built-in support is Chrome-first. Firefox works via WebDriver BiDi from v23, but Safari and Edge are not on the table.

- No built-in grid for running hundreds of scrapers in parallel across machines. You'll roll your own or bring something like Docker Swarm or Kubernetes.

The Puppeteer ecosystem also has puppeteer-extra, a plugin system that adds things like stealth mode (to hide automation fingerprints) and ad blocking. For scraping work where sites sniff for bots, puppeteer-extra-plugin-stealth is basically a must-have.

What is Selenium?

Selenium has been around since 2004, making it older than most junior engineers reading this post. It was built for cross-browser QA testing first, and scraping adoption came later.

Selenium has 3 components, and it helps to know what each one does:

- WebDriver – the core API that talks to browsers. This is the part you use for scraping.

- Selenium IDE – a record-and-replay tool that runs as a browser extension. Great for non-developers. Not really a scraping tool.

- Selenium Grid – lets you run tests (or scrapers) in parallel across many machines. Useful once you outgrow a single box.

Selenium’s biggest selling point is its broad language and browser support. It works with Java, Python, JavaScript, C#, Ruby, and Kotlin. It can also automate Chrome, Firefox, Safari, and Edge (plus Internet Explorer, if you're unlucky enough to still need it), something no other automation tool matches.

The honest trade-offs for scraping:

- You need a separate driver binary for each browser (chromedriver, geckodriver, etc.), and the driver version has to match your browser version. Selenium Manager (shipped with v4.6 and up) auto-downloads the right driver, which takes most of the pain out of this.

- Commands travel through the W3C WebDriver protocol over HTTP. That's more portable than Puppeteer's direct CDP connection, but also slower per command. We'll get into the numbers later.

- Setup feels heavier on day 1. Most of that complexity fades once you've done it twice.

If Selenium seems like the right fit for your project, our tutorial on scraping the web with Selenium and Python is a practical place to start.

Key differences between Puppeteer and Selenium

Here's the comparison at a glance, followed by explanations of the points that matter most for scraping work.

| Dimension | Puppeteer | Selenium |
| --- | --- | --- |
| Maintained by | Google Chrome team | Selenium Project (open source) |
| First released | 2017 | 2004 |
| Language support | Node.js only | Java, Python, JS, C#, Ruby, Kotlin |
| Browser support | Chrome, Chromium, Firefox (via WebDriver BiDi, v23+) | Chrome, Firefox, Safari, Edge |
| Protocol | CDP (direct); WebDriver BiDi for Firefox | W3C WebDriver (HTTP) |
| Driver setup | Browser bundled, no separate driver | Requires driver binary (auto-managed v4.6+) |
| Speed on Chrome | Faster | Slower due to HTTP overhead |
| Distributed execution | DIY | Selenium Grid built in |
| Anti-bot tooling | puppeteer-extra-plugin-stealth | undetected-chromedriver, succeeded by nodriver (community-maintained) |
| Best suited for | Chrome scraping, Node.js projects | Cross-browser, polyglot teams, QA + scraping |

Protocol matters

Puppeteer talks to Chrome through CDP – a direct connection, like speaking a native language. Selenium sends commands over HTTP using the WebDriver protocol, and the driver then relays them to the browser – more like speaking through a translator. The translator works everywhere, but each interaction adds a bit of overhead, which is why Puppeteer is usually faster per command on Chrome.

Selenium's biggest downside – driver management

Install a new version of Chrome and, without intervention, your chromedriver breaks overnight. Selenium Manager, shipped with v4.6, now handles driver downloads automatically. Puppeteer avoids the problem entirely by bundling a compatible Chromium version with every release.

Anti-bot tooling isn't equal

Puppeteer has puppeteer-extra-plugin-stealth, which patches the automation fingerprints that systems like Cloudflare check for. Selenium has no official equivalent. The community workaround was undetected-chromedriver. Its developer has since moved on to nodriver, a Python library that talks to Chrome directly and no longer uses Selenium or WebDriver.

This comparison focuses on Puppeteer and Selenium. Playwright has emerged as a strong third option, but covering it properly would need its own article. For that comparison, see Playwright vs. Selenium in 2026.

Browser and language support

These 2 dimensions drive most tool decisions in practice. Let's look at them properly.

Browser support

Puppeteer is primarily a Chrome and Chromium tool. Firefox has been supported through WebDriver BiDi since v23, but some CDP-only features aren't available there, so test your workflow on Firefox before relying on it for production scraping. Safari and Edge are not supported.

Selenium supports Chrome, Firefox, Safari, and Edge in production. Each uses its own driver binary. This matters for scraping more than it might seem. Some websites serve different content to different browsers. That could be for feature detection, analytics targeting, or other reasons. If you need the Firefox or Safari view of a page, Selenium is the only option between these 2 tools.

Language support

Puppeteer is Node.js only. Python, Java, Ruby, and other languages have no supported path to Puppeteer. There was a community port called pyppeteer. It's been unmaintained for several years. It's not a reliable option.

Selenium supports Java, Python, JavaScript, C#, Ruby, and Kotlin. For teams that already work in Python – common in data and scraping contexts – this is a clear advantage. Python's Selenium bindings are mature and well-documented. For teams with multiple languages across different functions, Selenium is the only tool that covers the whole group.

If your team writes Python and adding Node.js just for scraping isn't an option, Puppeteer is off the table. The choice narrows to Selenium or Playwright.

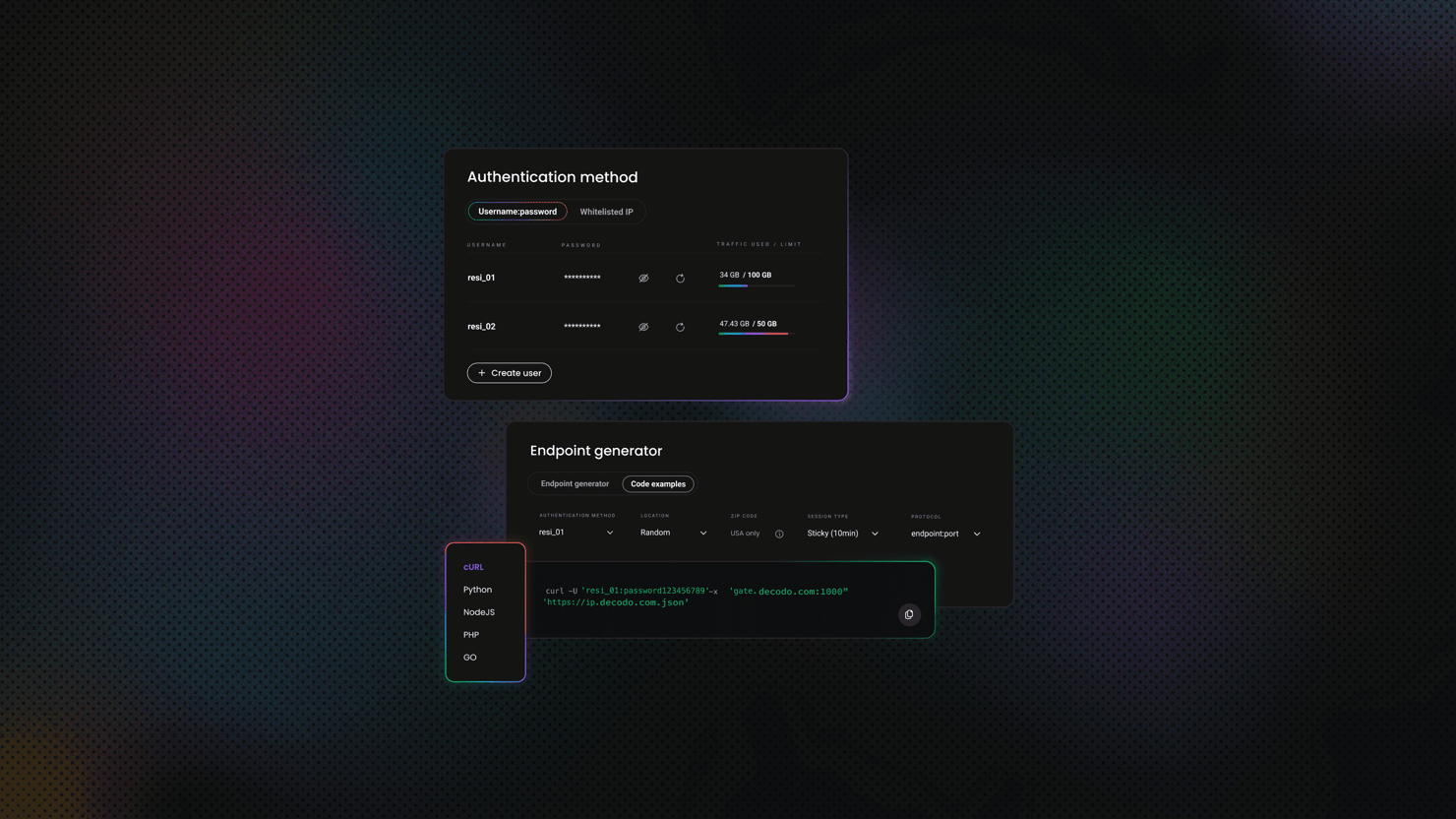

Setup and implementation

This section walks through installing both tools, writing a working scraper, and adding proxy support. The scraping target is books.toscrape.com – a site built specifically for scraping practice. Both examples are in Node.js so the comparison stays direct.

Puppeteer setup

A single command, npm install puppeteer, installs Puppeteer and downloads a compatible browser build.

If you're managing your own Chrome binary, puppeteer-core skips the Chromium download. Point it at your binary via the executablePath option on launch.

Here's a working script that pulls book titles and prices:
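
The listing below is a minimal sketch of such a script, not a definitive implementation. It assumes Puppeteer is installed in the project. The CSS selectors (article.product_pod, h3 a, .price_color) are assumptions about the site's current markup, and the helper names and error-message patterns are illustrative.

```javascript
const TARGET_URL = 'https://books.toscrape.com/';

// Launch options. With useSystemChrome, Puppeteer drives an installed
// Chrome instead of the browser it downloads at install time.
function buildLaunchOptions(useSystemChrome = false) {
  const options = {
    headless: true,
    args: ['--no-sandbox', '--disable-setuid-sandbox'],
  };
  if (useSystemChrome) options.channel = 'chrome';
  return options;
}

// Map two common launch failures to a suggested fix (patterns are assumptions).
function explainLaunchError(error) {
  const message = String(error && error.message);
  if (/Could not find (Chrome|Chromium|browser)/i.test(message)) {
    return 'Browser binary missing: run "npx puppeteer browsers install chrome".';
  }
  if (/Failed to launch the browser process/i.test(message)) {
    return 'Browser failed to start: check system libraries and sandbox flags.';
  }
  return message;
}

// Try the bundled browser first, then fall back to a system Chrome.
async function launchBrowser(puppeteer) {
  try {
    return await puppeteer.launch(buildLaunchOptions());
  } catch (error) {
    return puppeteer.launch(buildLaunchOptions(true));
  }
}

// The puppeteer module is only loaded when the function is called.
async function scrapeBooks(puppeteer = require('puppeteer')) {
  const browser = await launchBrowser(puppeteer);
  try {
    const page = await browser.newPage();
    page.setDefaultTimeout(30000);
    await page.goto(TARGET_URL, { waitUntil: 'networkidle2' });
    // Runs inside the page, so `document` is the live DOM.
    return await page.evaluate(() =>
      Array.from(document.querySelectorAll('article.product_pod'), (book) => ({
        title: book.querySelector('h3 a').getAttribute('title'),
        price: book.querySelector('.price_color').textContent.trim(),
      }))
    );
  } finally {
    await browser.close();
  }
}

// Uncomment to run the scraper when the file is executed:
// scrapeBooks()
//   .then((books) => console.table(books))
//   .catch((error) => console.error(explainLaunchError(error)));
```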

What's worth noting in the script:

- page.evaluate() runs JavaScript inside the browser's own context. You have full DOM access, exactly as if you were typing into the browser console.

- waitUntil: 'networkidle2' waits until there have been no more than 2 open network connections for at least 500 ms before extracting. This works well for pages that finish loading quickly.

- The --no-sandbox and --disable-setuid-sandbox flags keep the script portable. They're needed in Docker and most CI environments.

- The fallback to channel: 'chrome' covers cases where Puppeteer's bundled Chromium isn't installed. Useful in slim CI images or after a clean install.

- The error handler at the bottom catches the 2 most common launch failures and prints a fix. That saves a lot of debugging time on the first run.

Run the script with node your_file_name.js and it prints the scraped book titles and prices.

Selenium setup

Install the WebDriver bindings with npm install selenium-webdriver. Selenium Manager handles the driver download automatically from v4.6 onwards.

Here's the equivalent scraper:
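
What follows is a hedged sketch of a Selenium version, assuming selenium-webdriver v4.6 or later is installed. The selectors, timeout values, and Chrome arguments are assumptions chosen for illustration, and CHROMEDRIVER_PATH is an optional environment variable for pointing at a local driver binary.

```javascript
const TARGET_URL = 'https://books.toscrape.com/';
const CHROME_ARGS = ['--headless=new', '--no-sandbox', '--disable-dev-shm-usage'];
const TIMEOUTS = { implicit: 0, pageLoad: 30000, script: 30000 };

// Build a Chrome driver. Modules are loaded lazily, inside the function.
function buildDriver() {
  const { Builder } = require('selenium-webdriver');
  const chrome = require('selenium-webdriver/chrome');
  const options = new chrome.Options().addArguments(...CHROME_ARGS);
  const builder = new Builder().forBrowser('chrome').setChromeOptions(options);
  // Optional: use a local chromedriver when Selenium Manager has no network,
  // and pin the driver service to the loopback address.
  if (process.env.CHROMEDRIVER_PATH) {
    const service = new chrome.ServiceBuilder(process.env.CHROMEDRIVER_PATH)
      .setHostname('127.0.0.1');
    builder.setChromeService(service);
  }
  return builder.build();
}

async function scrapeBooks() {
  const { By, until } = require('selenium-webdriver');
  const driver = await buildDriver();
  try {
    await driver.manage().setTimeouts(TIMEOUTS);
    await driver.get(TARGET_URL);
    // Block until the first product card is present in the DOM.
    await driver.wait(until.elementLocated(By.css('article.product_pod')), 10000);
    const books = [];
    for (const card of await driver.findElements(By.css('article.product_pod'))) {
      // Each lookup is a separate round trip to the driver.
      const title = await card.findElement(By.css('h3 a')).getAttribute('title');
      const price = await card.findElement(By.css('.price_color')).getText();
      books.push({ title, price });
    }
    return books;
  } finally {
    // Always end the session, or the browser process stays open.
    await driver.quit();
  }
}

// Uncomment to run the scraper when the file is executed:
// scrapeBooks().then((books) => console.table(books)).catch(console.error);
```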

Run the script with node your_file_name.js and it prints the same titles and prices as the Puppeteer version.

Here's how the Selenium version differs from the Puppeteer version:

- Each element lookup is a separate await call. Selenium's API is more verbose than Puppeteer's for this kind of extraction.

- The try/finally block is important. Selenium browser sessions don't clean themselves up. If driver.quit() doesn't run, the browser process stays open.

- Waiting is explicit. Puppeteer used waitUntil: 'networkidle2' on the goto call. Selenium uses until.elementLocated after navigation, which blocks until a target element appears in the DOM.

- The driver layer is configurable in ways Puppeteer doesn't expose. ServiceBuilder lets you point at a local chromedriver binary via CHROMEDRIVER_PATH or pin the hostname to 127.0.0.1. Both help in sandboxed CI environments. One covers the case where Selenium Manager can't reach the network. The other covers the case where Node can't read network interfaces.

- Timeouts are set via setTimeouts({ implicit, pageLoad, script }). Puppeteer used setDefaultTimeout at the page level. Same idea, different surface area.

Adding a proxy

For any real-world scraping, you'll want proxy support. Both tools accept a proxy at launch.

Puppeteer with a proxy:
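
One way this can look is sketched below. The proxy host, port, and credentials are placeholders, and the helper names are hypothetical. Chrome takes the proxy address as a launch flag, and page.authenticate() supplies credentials when the proxy asks for them.

```javascript
// Hypothetical proxy endpoint and credentials. Replace with your own.
const PROXY = {
  host: 'proxy.example.com',
  port: 8080,
  username: 'user',
  password: 'pass',
};

// Build Chrome's proxy flag (scheme://host:port, without credentials).
function proxyFlag({ host, port }, scheme = 'http') {
  return `--proxy-server=${scheme}://${host}:${port}`;
}

// The puppeteer module is only loaded when the function is called.
async function openWithProxy(url, puppeteer = require('puppeteer')) {
  const browser = await puppeteer.launch({ args: [proxyFlag(PROXY)] });
  try {
    const page = await browser.newPage();
    // Answers the proxy's HTTP authentication challenge.
    await page.authenticate({
      username: PROXY.username,
      password: PROXY.password,
    });
    await page.goto(url, { waitUntil: 'networkidle2' });
    return await page.content();
  } finally {
    await browser.close();
  }
}
```

Passing 'socks5' as the second argument to proxyFlag switches the scheme for SOCKS5 endpoints.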

Selenium with a proxy:
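
A minimal sketch for an unauthenticated proxy, assuming selenium-webdriver is installed. The address is a placeholder and the helper names are hypothetical. The selenium-webdriver/proxy module's manual() helper expects host:port strings without a scheme.

```javascript
// Hypothetical proxy address (host:port, no scheme). Replace with your own.
const PROXY_ADDRESS = 'proxy.example.com:8080';

// Route both HTTP and HTTPS traffic through the same proxy.
function manualProxySettings(address) {
  return { http: address, https: address };
}

// Modules are loaded lazily, inside the function.
function buildProxiedDriver() {
  const { Builder } = require('selenium-webdriver');
  const proxy = require('selenium-webdriver/proxy');
  return new Builder()
    .forBrowser('chrome')
    .setProxy(proxy.manual(manualProxySettings(PROXY_ADDRESS)))
    .build();
}
```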

Both tools support HTTP and SOCKS5 proxies. For Selenium, the W3C protocol doesn't handle proxy authentication directly. The cleanest approach is using the proxy vendor's SDK or a browser extension.

If the target site blocks datacenter IPs, Decodo residential proxies are a practical fit. They also work well when you need requests from a specific country. Our Selenium proxy guide covers the Selenium-specific setup in more detail.

Scraping pages that load content through JavaScript? Our guide on how to web scrape dynamic content covers the timing and wait strategies worth knowing.

Performance and resource usage: Selenium vs. Puppeteer

Speed comparisons between these tools require some context. "Puppeteer is faster" is true – but only in specific ways.

Per-action speed

Puppeteer uses CDP, which exchanges JSON messages over a persistent WebSocket connection, so overhead per command is minimal. Each click, type, or navigation crosses 1 boundary – from your script to the browser.

Selenium adds an intermediate step. Commands go from your script to a driver process over HTTP, then from the driver to the browser. That's 2 hops instead of 1. In typical benchmarks, this difference runs between 50 and 200 milliseconds per command.

For a script that performs 10 actions, this is not noticeable. For a scraper running 10,000 actions, it adds up to roughly 8 to 33 minutes.
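
The per-command range above turns into total run time with simple arithmetic. The sketch below assumes the overhead is the same for every command, which real workloads won't match exactly; overheadMinutes is an illustrative helper name.

```javascript
// Extra run time, in minutes, caused by a fixed overhead per command.
function overheadMinutes(commands, msPerCommand) {
  return (commands * msPerCommand) / 1000 / 60;
}

console.log(overheadMinutes(10, 200).toFixed(2));    // 0.03 (about 2 seconds)
console.log(overheadMinutes(10000, 50).toFixed(1));  // 8.3
console.log(overheadMinutes(10000, 200).toFixed(1)); // 33.3
```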

Memory

Memory is where expectations sometimes don't match reality. Every headless browser session is a full Chromium process. That's true whether it was launched through Puppeteer or Selenium. The memory cost is around 300 to 500 MB per instance regardless of which tool you used.

The difference is in how sessions are managed. Puppeteer can open multiple pages within a single browser instance at low cost. Selenium typically uses 1 browser per session, which means more processes at the same level of concurrency. This gap is not relevant at 10 concurrent scrapers, but it becomes significant at 500.

Startup time

Puppeteer launches a bundled Chromium binary with no driver handshake. Cold start runs around 500 to 800 ms.

Selenium has to start a driver process first. Then wait for it to initialize. Then open a WebDriver session with the browser. That adds roughly 200 to 500 ms on top of the browser startup itself.

Concurrency

Puppeteer's strength at concurrency is running many pages within 1 browser instance using async/await. This is straightforward in Node.js and keeps memory use low.
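
As a sketch of that pattern, the function below opens a limited number of tabs at once inside a single browser. It assumes browser comes from puppeteer.launch(); the default limit of 5 and the helper names are illustrative, not recommendations.

```javascript
// Split a list into batches of at most `size` items.
function chunk(items, size) {
  const batches = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Collect page titles, opening up to `limit` tabs at a time in one browser.
async function scrapeTitles(browser, urls, limit = 5) {
  const titles = [];
  for (const batch of chunk(urls, limit)) {
    const results = await Promise.all(
      batch.map(async (url) => {
        const page = await browser.newPage();
        try {
          await page.goto(url, { waitUntil: 'domcontentloaded' });
          return await page.title();
        } finally {
          // Close each tab so memory use stays bounded.
          await page.close();
        }
      })
    );
    titles.push(...results);
  }
  return titles;
}
```

Batching keeps the number of open tabs predictable. A queue that starts a new page as soon as one finishes would use the browser more evenly, at the cost of a little more code.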

Selenium's answer to concurrency is Selenium Grid, which distributes execution across multiple machines. If you need hundreds of parallel scrapers on a cluster, Grid gives you that without custom infrastructure work. With Puppeteer, you'd build that layer yourself.

For guidance on high-volume pipelines, see our post on web scraping at scale.

Common use cases

Both tools cover most scraping scenarios. The differences appear at the edges.

Where Puppeteer works best

- Scraping single-page applications that rely on Chrome-specific APIs or behaviour.

- Generating PDFs or screenshots of rendered pages as part of a pipeline.

- Projects where the stack is already Node.js and adding another runtime isn't warranted.

- Scenarios that need precise browser control – request interception, DevTools access, performance traces.

Where Selenium works best

- Teams working in Python, Java, or another language that Puppeteer doesn't support.

- Scraping targets that behave differently in Firefox or Safari.

- Projects that combine scraping with QA testing in the same codebase.

- Distributed scraping runs where Selenium Grid handles the parallel execution.

- Prototyping workflows using Selenium IDE before writing code.

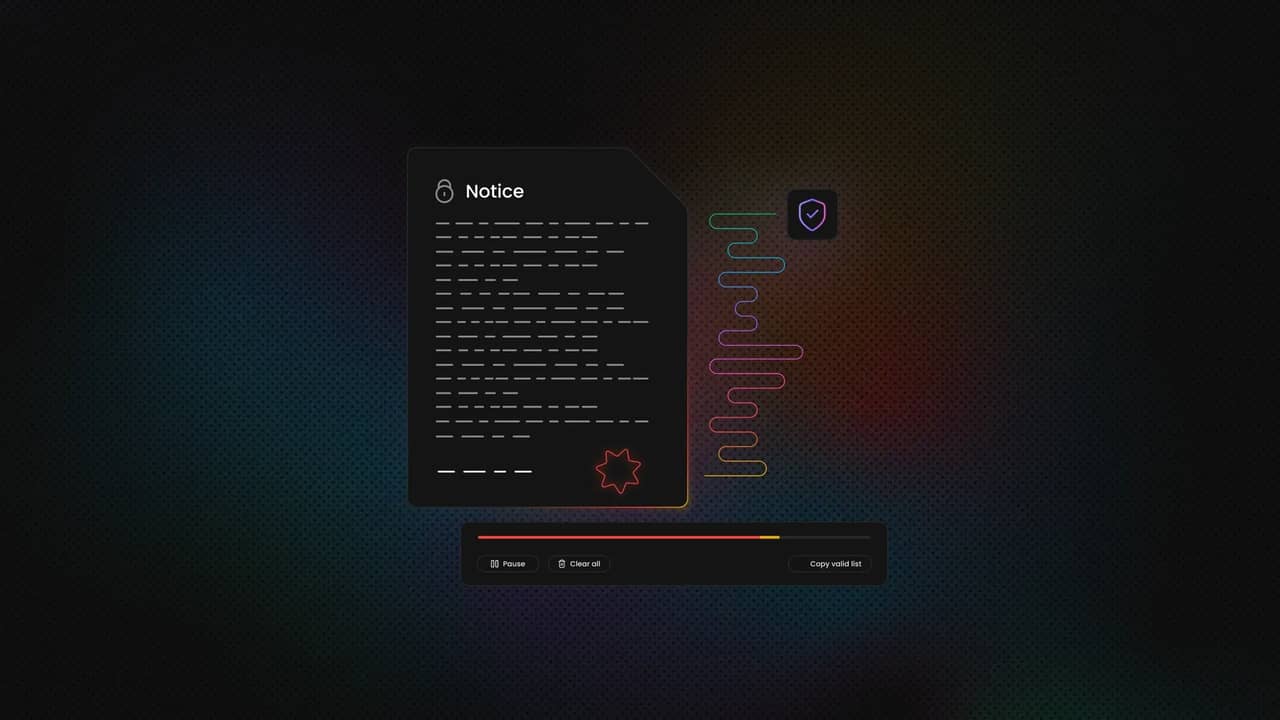

Where both tools reach their limits

Heavily bot-protected sites – Cloudflare, Akamai, DataDome – fingerprint browser automation at multiple levels. They check the TLS handshake, the JavaScript runtime, and behavioral patterns. Both Puppeteer and Selenium get blocked on these targets without additional tooling.

Puppeteer can be extended with puppeteer-extra-plugin-stealth, and Camoufox is a Firefox-based option built specifically for anti-detection scraping. These help, but they're in an ongoing arms race with detection systems that update frequently.

For targets that block reliably, a managed solution is the practical step. Decodo's Web Scraping API and Site Unblocker handle fingerprinting, CAPTCHA solving, and IP rotation on the server side. They return fully rendered HTML without requiring you to manage a browser. Our post on anti-scraping techniques and how to outsmart them explains why bot protection is as effective as it is.

Integration with third-party tools and services

Scrapers don't live in isolation. Here's how each tool fits into a broader data pipeline:

CI/CD – Both tools run headlessly in Docker containers. They work with GitHub Actions, Jenkins, and CircleCI. Chrome usually needs the --no-sandbox flag in Linux containers, because its sandbox won't start when the browser runs as root. Selenium connects to remote WebDriver endpoints, making it straightforward to point a CI runner at a Selenium Grid.

Cloud execution – Puppeteer runs on AWS Lambda with a Lambda-compatible Chromium build (for example, the @sparticuz/chromium package), and on Google Cloud Functions. This makes it a good fit for event-driven or serverless scraping. Selenium is typically deployed on persistent VMs or containers with a WebDriver server – not a natural fit for serverless.

Proxy rotation – Both tools accept a proxy URL at launch. For rotation, point the browser at a rotating gateway endpoint, which assigns a new IP transparently. When a multi-step flow needs to keep the same IP, use a sticky-session endpoint instead. Either is cleaner than swapping proxy addresses in code between requests.

Testing frameworks – Selenium has deep integrations with TestNG, JUnit, and pytest. These integrations are the reason many enterprises use Selenium in the first place. Puppeteer works with Jest and Mocha, but it isn't a testing framework itself.

Data pipelines – Neither tool stores data. Both return raw HTML or extracted structures. From there, you'd pass the output into a parser (Cheerio or jsdom in Node.js, Beautiful Soup or Scrapy's selectors in Python) and then into a database or warehouse. Our tutorial on JavaScript web scraping covers the Node.js pipeline in detail.

Running browsers in containers? Our headless Chrome glossary entry explains what's happening at the OS level.

Community, ecosystem, and documentation

The community behind a tool has real consequences for how well-supported your project will be over time. Here's where things stand in mid-2026.

Downloads and release activity

Puppeteer (npm) receives around 8.7M weekly downloads. The current release is v24.40, maintained by Google's Chrome team with a consistent weekly release cadence.

Selenium's JavaScript package (selenium-webdriver) gets around 2.1M weekly npm downloads – but that number understates Selenium's actual reach. Its Python package pulls roughly 50M downloads a month on PyPI. The Java and .NET bindings add significant volume on top. When you look across all ecosystems, Selenium's install base is substantially larger than the npm figure suggests.

Documentation quality

Puppeteer's API documentation is generated directly from the source code and is co-maintained with the Chrome DevTools team. It stays accurate with each release.

Selenium's documentation has historically been fragmented across language bindings. The main site has improved considerably since v4, and the core documentation is genuinely useful now. That said, Python-specific examples are often easier to find through community resources than through the official site.

Community and support

Selenium's community is the larger of the 2 by a significant margin. Two decades of QA adoption across enterprises have produced more Stack Overflow answers, more tutorials in every supported language, and a deeper pool of third-party integrations. When you encounter an unusual problem, someone has likely run into it and written it up.

Puppeteer has a smaller but more technically focused community. Google's backing means browser-level issues are addressed quickly, and the issue tracker is well-maintained. Third-party tutorials, however, are thinner – particularly in languages other than JavaScript.

The Playwright factor

Both Puppeteer and Selenium have lost some developer mindshare to Playwright over the past few years. Playwright launched with an API that Puppeteer users found familiar. It added cross-browser support and built-in auto-wait from the start. For a full breakdown, see Playwright vs. Selenium in 2026. Want a wider view of available options? Our top 10 best web scraping tools post covers the full range.

Market trends and popularity

These figures reflect the state of things as of mid-2026.

npm weekly downloads – Puppeteer is around 8.7M, selenium-webdriver is around 2.1M, and Playwright is around 35M. The npm comparison flatters Playwright because it's a JavaScript-first project. Selenium's Python and Java usage doesn't appear in these numbers at all.

Google Trends – Selenium has held higher overall search volume for years. A significant portion of that reflects QA testing interest, not scraping. Puppeteer search interest peaked around 2020 to 2021 and has since held steady. Playwright's trend line has climbed consistently.

Stack Overflow – Selenium has significantly more tagged questions than Puppeteer, reflecting its longer history and broader adoption. Puppeteer's question volume has grown steadily in recent years, which typically indicates active new adoption rather than just legacy use.

Job market – Selenium remains the standard in QA automation job listings. Puppeteer and Playwright appear more frequently in data engineering, scraping, and developer tooling roles.

The overall picture – Selenium is still the default in enterprise QA, thanks to its language range and framework integrations. Puppeteer holds a clear niche for Chrome-specific Node.js scraping. Playwright is the fastest-growing of the 3. None of these tools is in real decline. Each serves a distinct enough set of needs that all 3 remain valid choices in 2026.

Choosing the right tool

Choose Puppeteer when:

- Your project is already in Node.js and adding another runtime isn't justified.

- You need direct CDP access for low-level browser control.

- The target site only needs to be scraped in Chrome.

- You want to extend with puppeteer-extra plugins for anti-detection work.

Choose Selenium when:

- Your team works in Python, Java, or another language Puppeteer doesn't support.

- You need Firefox or Safari rendering to access the right content.

- Distributed parallel scraping via Selenium Grid is a requirement.

- Scraping sits alongside a QA workflow that already uses Selenium.

Choose Playwright when:

- You need cross-browser support and want a modern async API.

- Built-in auto-wait and multiple isolated browser contexts are useful for your setup.

- You want the most actively developed of Puppeteer, Selenium, and Playwright. See Playwright vs. Selenium in 2026 for a full comparison.

Consider a managed API when:

- The target site actively fingerprints browser automation.

- You need geo-targeted IPs without managing a proxy pool yourself.

- You'd rather send a URL and receive structured data than manage a browser fleet.

Decodo's Web Scraping API handles JavaScript rendering, CAPTCHA solving, and IP rotation in one request.

Final thoughts

Puppeteer is the faster, simpler option for Chrome-only Node.js scraping. Selenium offers broader browser and language support at the cost of more setup complexity. The right choice depends on your team's language, the browsers you need, and how much anti-bot handling matters.

When browser automation becomes the main source of friction, a managed solution is worth considering. Blocked sessions, proxy management, and fingerprint updates all add up. At that point, continuing to tune browser settings is rarely the most productive approach.

About the author

Mykolas Juodis

Head of Marketing

Mykolas is a seasoned digital marketing professional with over a decade of experience, currently leading the Marketing department in the web data gathering industry. His extensive background in digital marketing, combined with his deep understanding of proxies and web scraping technologies, allows him to bridge the gap between technical solutions and practical business applications.
