Minimum Advertised Price Monitoring: How to Build an Automated MAP Tracker in Python

Minimum Advertised Price (MAP) violations don't announce themselves. One day, your authorized retailer lists your product at $299. The next, a competitor screenshots their $199 listing and sends it to your entire channel. Manufacturers, brand managers, and eCommerce teams run automated data pipelines because the alternative is catching violations three weeks late. In this article, we’ll walk through what MAP monitoring is, the legal distinctions that matter, and how to build a production-ready automated tracker in Python.

Justinas Tamasevicius

Last updated: Mar 20, 2026

What is minimum advertised price monitoring?

MAP is the lowest price a retailer is permitted to advertise for a product. It governs the displayed price on a product listing page, PPC ad, or promotional banner. A retailer can sell below MAP at the register or in a cart. They just can't advertise below it.

Brands communicate these policies in a couple of ways: as a unilateral policy (retailers agree by accepting product) or as a contractual term in the reseller agreement. The enforcement teeth differ between the 2, so the distinction matters before you write a single violation notice.

MAP governs only the advertised price a brand sets for its own products. Price-fixing, by contrast, controls the transaction price between competing parties. That distinction matters when you're drafting enforcement language, and it's why MAP policies have survived legal scrutiny in the US.

Geography matters too. The US framework doesn't travel well. In the EU, resale price maintenance laws treat advertised price restrictions far more strictly and, in many cases, prohibit them outright. If you're monitoring across regions, your compliance framework needs to account for the jurisdiction before it accounts for the price.

MAP vs. related concepts

MAP gets conflated with 3 other terms regularly enough to create real enforcement blind spots. Let's clear them up.

MSRP and MAP get confused constantly, and the confusion leads to weak enforcement. MSRP is a manufacturer's suggested retail price, a recommended selling price that retailers can advertise above or below freely. MAP sets a floor on advertising. A retailer ignoring your MSRP is making a positioning call, but a retailer advertising below your MAP is violating a policy you can enforce.

Price parity agreements work differently. They require a retailer to match prices across channels. If they list at $199 on their own site, they can't list at $249 on a marketplace. MAP only prohibits advertising below a fixed threshold on any channel. Conflating the 2 creates compliance gaps, especially when a retailer technically honors MAP but violates a separate parity clause in its reseller agreement.

Worth separating from both of those is general price tracking. It shares scraping infrastructure with MAP monitoring but serves a different function. Price tracking captures historical price movements across retailers for competitive intelligence. MAP monitoring has a single, compliance-oriented job: detect when an advertised price crosses a threshold and trigger a response. Building one doesn't automatically give you the other.

What counts as a MAP violation and what doesn't

MAP policies typically cover prices shown on product listing pages, Google Shopping ads, Amazon Sponsored Product listings, and promotional banners. These are the surfaces where a retailer's advertised price is publicly visible and attributable, so a price below MAP on any of them counts as a violation.

Plenty of pricing situations fall outside MAP scope:

- In-cart prices

- Membership-gated discounts like Amazon Prime

- Phone orders

- Bundled pricing where the individual product price isn't displayed

These carve-outs aren't universal though, so you have to confirm each one with legal counsel before building them into your compliance workflow.

Enforcement typically follows a tiered sequence: written warning first, then removal from the authorized reseller list, then supply restriction. Where MAP monitoring earns its place in that process is documentation. A timestamped, multi-cycle record of violations turns an enforcement conversation from a dispute into a paper trail. Retailers are far less likely to push back on a warning backed by 6 consecutive monitoring cycles of evidence than one backed by a screenshot someone happened to take.

Given how frequently prices change across major eCommerce platforms and how volatile pricing is across fashion and consumer categories, manual audits don't just scale badly. They don't scale at all.

Now that the compliance framework is clear, here's how to build the system that enforces it.

Setting up your MAP monitoring project

Prerequisites

Get these in place before writing a line of code:

- Python 3.9+, available from the official Python website

- Familiarity with pip, virtual environments, and running Python scripts

- Working knowledge of HTML structure and CSS or XPath selectors

- A list of target retailer URLs and the known MAP value for each monitored product

If you need a broader scraping foundation before continuing, our Python web scraping guide covers the essentials.

Finally, if you prefer simplicity, this full project is available for download.

Project setup

With prerequisites sorted, it’s time to set up your environment. First, create and activate a virtual environment:

Install dependencies:

After installing, download the browser binaries:

Create a project directory for the scraper and initialize the module files that will make up the application:

The project is split into 7 focused modules, each with a single responsibility:

Start by defining your product config in config.py. The examples below target web-scraping.dev/products, a realistic mock eCommerce catalog with 28 products, paginated listings, and product variants.

Crucially, each product detail page exposes 2 price fields that map directly to MAP monitoring concepts: .price > span for the current advertised price and .product-price-full for the original reference price. Product 1, Box of Chocolate Candy, is advertised at $9.99 against a reference price of $12.99, giving you a genuine violation to detect from the very first run.

The test site serves prices in static HTML, so .price > span is all you need for the demo. RetailerB in the Running Shoes config uses XPath, demonstrating that selector format varies per retailer. Every major retailer structures its pricing elements differently, which is why per-retailer selectors are required. Most production retailers dynamically inject prices after the initial HTML loads, so a plain HTTP client returns the page shell without prices. That's handled in the Playwright section below.
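As a rough illustration of the config described above, here is a minimal sketch covering only the demo product. The `PRODUCTS` name, the field names, the `iter_targets` helper, and the product URL path are assumptions made for this example, not the downloadable project's actual config.py; only the `.price > span` selector and the $12.99 reference price come from the text.

```python
# Hypothetical config.py layout. All names and the URL path are assumptions.
PRODUCTS = [
    {
        "name": "Box of Chocolate Candy",
        "map_price": 12.99,  # reference price treated as the MAP floor
        "retailers": [
            {
                "retailer": "web-scraping.dev",
                "url": "https://web-scraping.dev/product/1",  # assumed path
                "selector": ".price > span",  # CSS selector from the article
            },
        ],
    },
]


def iter_targets(products):
    """Yield one flat record per (product, retailer) pair to scrape."""
    for product in products:
        for retailer in product["retailers"]:
            yield {
                "product_name": product["name"],
                "map_price": product["map_price"],
                **retailer,
            }


if __name__ == "__main__":
    for target in iter_targets(PRODUCTS):
        print(target["product_name"], target["retailer"], target["selector"])
```

Keeping the retailer entry to three fields (retailer, url, selector) means a new retailer is one more dict in the list rather than a code change.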

Because the modules use relative imports (from .config import ...), run them from the project root using the -m flag rather than calling the file directly:

Running scraper.py directly will throw ImportError: attempted relative import with no known parent package. The -m flag tells Python to treat map_monitor as a package and resolve imports correctly.

With the project structure in place, the next step is building the component that actually collects the data.

Collecting pricing data: scraping product prices from retailer sites

Not all retailer pages are created equal. Some serve prices in plain HTML that any HTTP client can read. Others render prices dynamically via JavaScript, which breaks naive scrapers entirely. Here's how to handle both.

Scraping static pages with httpx and parsel

Most smaller retailers serve prices in static HTML. For these, httpx and parsel are all you need:

The function parse_price handles the full range of real-world price formats: integers, decimals, currency symbols, and comma-separated thousands. It takes the last numeric token in the string, so "Was $12.99 Now $9.99" correctly returns 9.99. If it returns None, log it immediately. A broken selector fails silently otherwise, and you'll miss violations without knowing why.
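The "last numeric token" behavior can be sketched as follows. This is one possible implementation under stated assumptions, not necessarily the project's own: it assumes US-style formatting (comma as thousands separator, dot as decimal point), and the regular expression is a choice made for this example.

```python
import re
from typing import Optional

# Matches digits with optional thousands commas and an optional decimal part.
# Assumption: US-style formatting such as 1,299.00.
_NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


def parse_price(text: Optional[str]) -> Optional[float]:
    """Return the last numeric token in the text as a float, or None."""
    matches = _NUMBER.findall(text or "")
    if not matches:
        return None  # caller should log this: the selector may be broken
    return float(matches[-1].replace(",", ""))


if __name__ == "__main__":
    for raw in ["$9.99", "Was $12.99 Now $9.99", "$1,299.00", "Out of stock"]:
        print(repr(raw), "->", parse_price(raw))
```

European-style prices such as 1.299,00 would need a separate branch, so treat the pattern as a starting point to adapt per retailer.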

In fetch_price, the product_name is now included in the returned dict. This is required by downstream components like check_map_compliance and save_violation. Without it, the checker has a price and a retailer, but no product to associate the violation with.

The function scrape_all_products() is the entry point used by the scheduler. It iterates over every product defined in the config and aggregates results from all retailers into a single flat list. The __main__ block allows you to run python -m map_monitor.scraper directly, making it easy to verify selectors against live pages before running a full monitoring cycle.

Rate limiting and polite scraping

The asyncio.sleep(delay) call at the top of fetch_price is deliberate. Hitting small retailer sites with concurrent requests at full speed is a reliable way to get blocked or cause real server load. Set delay in your config and tune it per retailer if needed. Larger retailers can handle tighter spacing; smaller ones can't.

Log every failed fetch and every None price return. A single failed request in a monitoring cycle shouldn't trigger a false MAP violation, but a pattern of failures from the same retailer usually indicates that your selector is broken or that the site has changed its structure. Visibility into that distinction matters.

Handling JavaScript-rendered prices

The target website web-scraping.dev is server-rendered, so plain httpx is sufficient for the demo above. Many production retailer sites are not. They inject prices via JavaScript after the page shell loads, which means an httpx request returns HTML with no price data at all.

You can see this failure mode directly. The test page at scrapingcourse.com/javascript-rendering is a JS-rendered eCommerce playground built specifically to demonstrate this behavior. Fetch it with plain httpx, and the response body contains no products, no prices, and no usable data:

The selector returns an empty list because .product-price elements don't exist in the raw HTML. The page shell contains a single line telling the browser to enable JavaScript. The product grid, product names, and prices only appear after the browser executes the page's JavaScript. A headless browser that runs JavaScript returns the full product grid with prices. That's the gap httpx can't close.

Before building that scraper, there's a prerequisite that most MAP monitoring guides skip entirely.

Why residential proxies are essential for retailer scraping

Large retailers run bot detection at the network and fingerprint level. When requests come from a datacenter IP, the signature is immediate: consistent timing, no browser fingerprint, no session history. These sites fingerprint and block datacenter traffic at scale, often silently returning malformed pages or empty price containers rather than an outright error. That's the worst outcome for MAP monitoring: a scraper that appears to work but returns no prices.

Residential proxies route your requests through real ISP-assigned IPs tied to actual consumer devices. To a retailer's infrastructure, the traffic is indistinguishable from a customer browsing at home. That distinction is what keeps your scraper returning real prices rather than bot-deflection pages.

For a MAP tracker running on a schedule across multiple retailers, you also need IP rotation. Hitting the same product page from the same IP every hour will trigger rate limits even on residential IPs. Rotating sessions distribute that load so no single IP accumulates a suspicious request pattern.

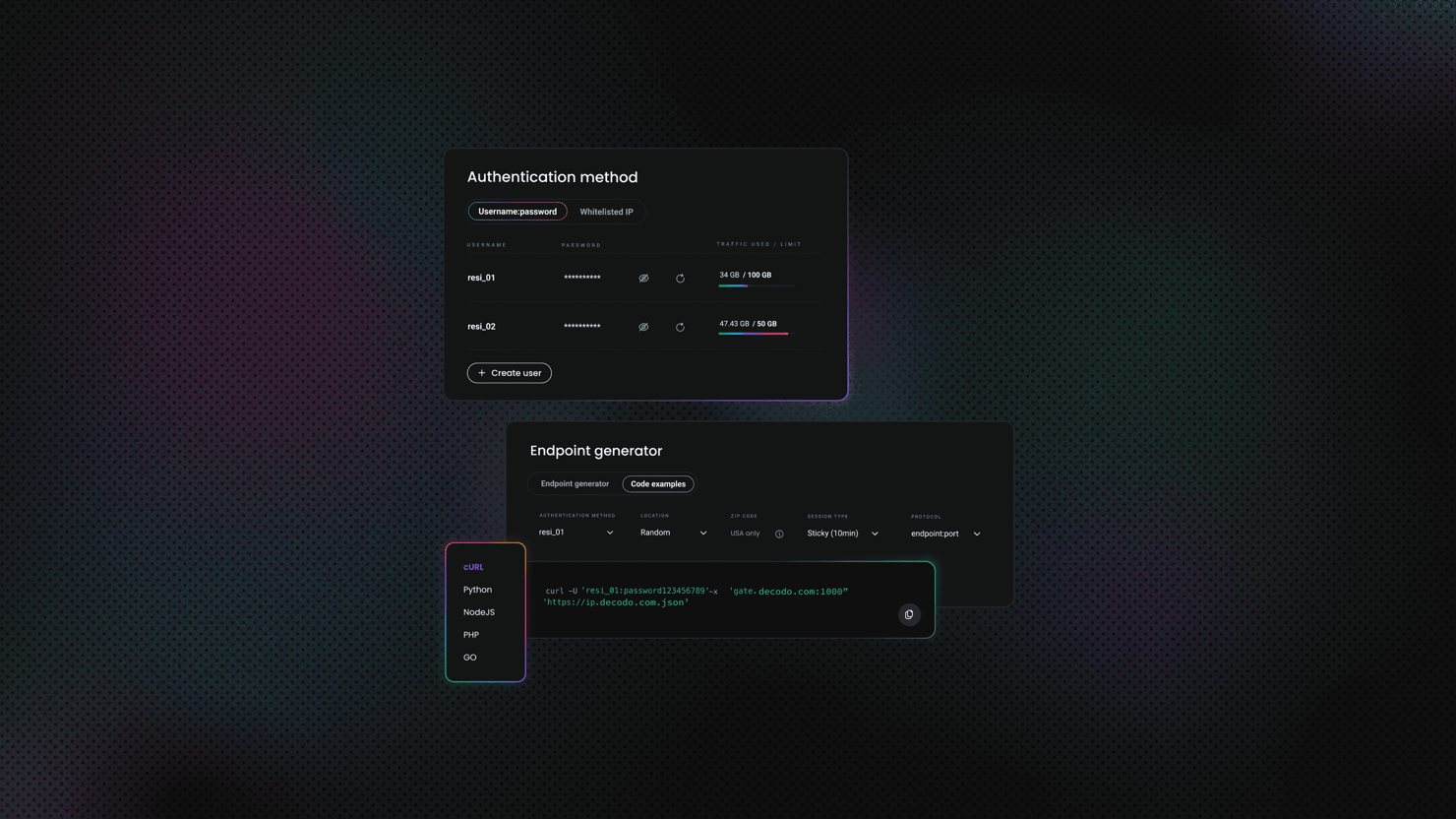

Decodo's residential proxy network covers 115M+ IPs across 195+ locations, with 99.86% uptime and response times under 0.6s. Here's how to get started:

- Create your account at the Decodo dashboard.

- Select a residential proxy plan, or start with a 3-day free trial.

- Set session type to Rotating for MAP monitoring workloads.

- Copy your proxy username, password, and endpoint.

MAP tracker with Playwright and residential proxies

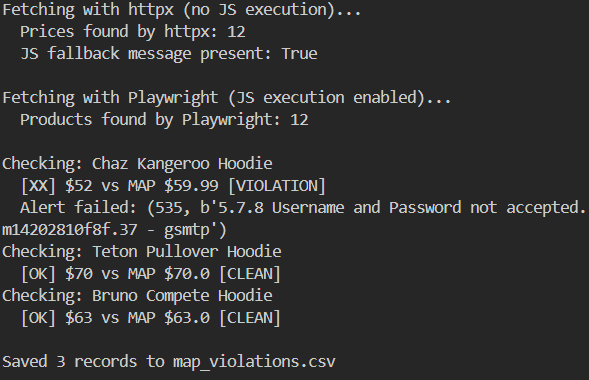

The script below targets scrapingcourse.com/javascript-rendering, which requires a browser to render its product grid. It confirms the JS-rendering problem firsthand: httpx returns nothing, Playwright returns prices.

Before running it, you need Decodo residential proxy credentials. Log into your Decodo dashboard, select Residential from the left sidebar, and open the Proxy setup tab. Your username is listed under the Authentication dropdown and your password sits next to it. The gateway is gate.decodo.com with your assigned port listed in the endpoint table below.

With those in hand, set them as environment variables before running the script:

Then add these two lines to the top of the script and the rest works without changes:

The products on this site are static demo data from a scraping tutorial platform, so prices don't fluctuate. The point is to show the mechanism before pointing the same pattern at a production retailer URL. Swap in your retailer URLs, MAP floors, and Decodo proxy credentials when you're ready to monitor real listings.

You’ll see something similar to this in your terminal:

Meanwhile, here's the CSV output:

The headless browser approach works, but it doesn't scale cleanly. Once you're monitoring 50+ retailer URLs, managing proxy rotation, request headers, browser rendering, and anti-bot bypass as separate concerns becomes a maintenance burden that grows faster than your retailer list does. Decodo's Web Scraping API consolidates all of that into a single API call. Less infrastructure babysitting, more compliance work.

Using Decodo's Web Scraping API as a drop-in replacement

Replace the httpx fetch in fetch_price with a call to the Decodo API:

This swap keeps your comparison and alerting logic identical, whether you're scraping static pages or JS-heavy retailer sites. Authentication uses Basic auth with a Base64-encoded username:password token, matching the Decodo API spec. The "headless": "html" parameter is what triggers JS rendering (the Advanced plan is required for this). The response HTML is at results[0]["content"].
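The request pieces described above can be assembled with the standard library alone. The sketch below builds the Basic auth header, a payload with the "headless": "html" parameter, and pulls the HTML out of a response shaped like results[0]["content"]. The helper names and the "url" payload key are assumptions for illustration, and the API endpoint is deliberately left out, so take it from your Decodo dashboard or the official docs.

```python
import base64
from typing import Optional


def build_auth_header(username: str, password: str) -> dict:
    """Basic auth header with a Base64-encoded username:password token."""
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return {
        "Authorization": f"Basic {token}",
        "Content-Type": "application/json",
    }


def build_payload(target_url: str) -> dict:
    """Request body asking for JS-rendered HTML ("url" key is an assumption)."""
    return {"url": target_url, "headless": "html"}


def extract_html(response_json: dict) -> Optional[str]:
    """Return results[0]["content"], or None if the response has no results."""
    results = response_json.get("results") or []
    if not results:
        return None
    return results[0].get("content")


if __name__ == "__main__":
    print(build_auth_header("user", "pass"))
    print(build_payload("https://example.com/product/1"))
    print(extract_html({"results": [{"content": "<html></html>"}]}))
```

Returning None from `extract_html` instead of raising keeps the downstream rule simple: no HTML means no price, which means the violation check is skipped and the failure is logged.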

For IP-based blocking on major retail domains, Decodo's residential proxy network routes requests through real consumer IPs rather than datacenter addresses, which is what gets flagged first on sites running Cloudflare or DataDome. Proxy rotation is handled automatically.

Selector variability across retailers

Every major retailer uses a different HTML structure for prices. There's no universal selector, and trying to build one creates fragile logic that breaks the moment any retailer updates their frontend. The per-retailer selector map in your config is the right approach. Use browser DevTools to inspect the price element on each target site before writing your selector, and re-validate after any retailer site redesign.

For broader eCommerce scraping patterns and how price elements are structured across major platforms, the product scraping guide is a useful reference. If your direct requests start returning blocks or empty responses on protected sites, the anti-scraping techniques guide covers exactly what's triggering the block and how to route around it.

With prices landing reliably, the next layer is where the compliance work actually happens.

Detecting MAP violations and triggering alerts

Scraped prices are just numbers until something compares them against a threshold, determines how serious the gap is, and notifies someone. You need a comparison layer that calculates violation severity, a storage layer that builds your enforcement paper trail, and a notification system that routes alerts based on the severity of the breach.

Price comparison logic

The comparison layer is the core of the monitor. It takes a scraped price, checks it against the MAP threshold, calculates how far below it sits, and returns a structured violation record if a breach is detected:

Percentage deviation is more actionable than a raw price difference. A $10 violation on a $50 product (20% below MAP) demands a different response than a $10 violation on a $500 product (2% below MAP). Severity tiers make that distinction automatic.
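A minimal sketch of that comparison logic follows. The tier names and cut-offs (5% and 15%) are assumptions chosen for illustration, as are the record's field names and the function signature; tune the thresholds to your own enforcement policy.

```python
from datetime import datetime, timezone
from typing import Optional


def classify_severity(pct_below: float) -> str:
    """Map a percentage deviation to a tier (thresholds are assumptions)."""
    if pct_below >= 15:
        return "critical"
    if pct_below >= 5:
        return "major"
    return "minor"


def check_map_compliance(result: dict, map_price: float) -> Optional[dict]:
    """Return a violation record, or None if compliant or the price is missing."""
    price = result.get("price")
    if price is None or price >= map_price:
        return None  # a failed fetch is never treated as a violation
    pct_below = round((map_price - price) / map_price * 100, 2)
    return {
        "product_name": result.get("product_name"),
        "retailer": result.get("retailer"),
        "price": price,
        "map_price": map_price,
        "pct_below_map": pct_below,
        "severity": classify_severity(pct_below),
        "detected_at": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    scraped = {"product_name": "Box of Chocolate Candy",
               "retailer": "web-scraping.dev", "price": 9.99}
    print(check_map_compliance(scraped, map_price=12.99))
```

With these thresholds, the $10 gap on a $50 product lands in the top tier while the same $10 gap on a $500 product stays in the lowest one, which is the distinction the percentage is meant to capture.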

Persisting violation history

Every violation the monitor detects needs to be written to persistent storage. Without it, you can't identify repeat offenders, track whether violations resolve between monitoring cycles, or build the documented evidence trail that makes enforcement conversations stick:

Both storage backends are in one module. The functions save_violation and load_active_violations handle the JSON Lines path for development and small-scale deployments. For production, init_db and save_violation_db use SQLite, offering zero external dependencies, queryability, and durability across restarts. The __main__ block exercises both so you can confirm the storage layer is working before wiring it into the main loop.
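To make the two storage paths concrete, here is a hedged sketch using only the standard library. The function names follow the text, but the signatures, table schema, and column names are assumptions for this example rather than the project's actual code.

```python
import json
import sqlite3
from pathlib import Path


def save_violation(violation: dict, path: Path) -> None:
    """JSON Lines path: append one violation per line."""
    with path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(violation) + "\n")


def init_db(conn: sqlite3.Connection) -> None:
    """Create the violations table if needed (schema is an assumption)."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS violations (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               product_name TEXT NOT NULL,
               retailer TEXT NOT NULL,
               price REAL NOT NULL,
               map_price REAL NOT NULL,
               severity TEXT NOT NULL,
               detected_at TEXT NOT NULL
           )"""
    )
    conn.commit()


def save_violation_db(conn: sqlite3.Connection, violation: dict) -> None:
    """SQLite path: insert one violation using named placeholders."""
    conn.execute(
        """INSERT INTO violations
               (product_name, retailer, price, map_price, severity, detected_at)
           VALUES
               (:product_name, :retailer, :price, :map_price, :severity, :detected_at)""",
        violation,
    )
    conn.commit()


def repeat_offenders(conn: sqlite3.Connection, min_count: int = 2) -> list:
    """Retailers with at least min_count recorded violations."""
    rows = conn.execute(
        """SELECT retailer, COUNT(*) FROM violations
           GROUP BY retailer HAVING COUNT(*) >= ? ORDER BY retailer""",
        (min_count,),
    )
    return rows.fetchall()
```

The `repeat_offenders` query is the kind of question the JSON Lines file cannot answer without loading everything into memory, which is the main practical reason to move to SQLite as volume grows.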

The Python data persistence guide covers CSV and Excel output patterns if you need those alongside the database.

Once your storage layer is in place, the next problem is making sure the right people find out about violations fast enough to act on them.

Building a multi-channel notification system

Logging a warning to stdout when a violation fires is fine for local testing. In production, violations need to reach the right person through the right channel, at the right severity level.

Deduplication

The routing logic above handles where alerts go. What it doesn't handle yet is how often. Without deduplication, your monitor fires a fresh alert for the same violation on every single run. The fix is straightforward: only alert when a violation is first detected, and alert again only when it resolves.
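One way to express "alert on first detection, alert again on resolution" is a set difference between the violations active in the previous cycle and those found in the current one. The sketch below assumes each violation is a dict with product_name and retailer keys; the function names are hypothetical.

```python
from typing import Iterable, List, Set, Tuple

Key = Tuple[str, str]  # (product_name, retailer)


def violation_key(violation: dict) -> Key:
    """Identity of a violation across monitoring cycles."""
    return (violation["product_name"], violation["retailer"])


def plan_alerts(active: Set[Key], violations: Iterable[dict]):
    """Split a cycle's findings into new alerts and resolved keys.

    Returns (new_violations, resolved_keys, updated_active_set).
    """
    current = {violation_key(v): v for v in violations}
    new_violations: List[dict] = [v for k, v in current.items() if k not in active]
    resolved = sorted(active - set(current))
    return new_violations, resolved, set(current)


if __name__ == "__main__":
    active: Set[Key] = set()
    found = [{"product_name": "Box of Chocolate Candy", "retailer": "RetailerA"}]
    for cycle, violations in enumerate([found, found, []], start=1):
        new, resolved, active = plan_alerts(active, violations)
        print(f"cycle {cycle}: new={len(new)} resolved={resolved}")
```

In a real deployment the active set would be loaded from and written back to persistent storage between runs, otherwise a process restart re-sends every open violation.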

For webhook-based delivery integrations, the webhooks guide covers the patterns in more depth.

The alerting layer is done. The last piece is making sure it runs without anyone having to press a button.

Automating MAP monitoring: scheduling, workflows, and continuous operation

A monitor that runs once manually isn't a monitor. It's a script. With the scraping and alerting logic built, the next step is to turn it into a system that runs continuously, recovers from failures, and doesn't require anyone to remember to start it.

In-process scheduling with asyncio

The simplest scheduler is a loop:

This works fine for development and low-frequency monitoring, but it won't survive process restarts, so don't rely on it in production.
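For reference, a loop-based scheduler along these lines might look like the sketch below. The `run_forever` name, its parameters, and the `max_cycles` escape hatch (useful for testing) are assumptions for this example; the cycle coroutine stands in for whatever runs one full scrape, compare, and alert pass.

```python
import asyncio
import logging
from typing import Awaitable, Callable, Optional

logger = logging.getLogger("map_monitor.scheduler")


async def run_forever(
    cycle: Callable[[], Awaitable[None]],
    interval_seconds: float,
    max_cycles: Optional[int] = None,
) -> None:
    """Run one monitoring cycle, sleep, and repeat.

    A failing cycle is logged and skipped so one bad run
    does not stop the loop.
    """
    completed = 0
    while max_cycles is None or completed < max_cycles:
        try:
            await cycle()
        except Exception:
            logger.exception("Monitoring cycle failed; retrying next interval")
        completed += 1
        await asyncio.sleep(interval_seconds)


if __name__ == "__main__":
    async def demo_cycle() -> None:
        print("running one monitoring cycle")

    # Three quick cycles for demonstration; omit max_cycles to loop forever.
    asyncio.run(run_forever(demo_cycle, interval_seconds=1, max_cycles=3))
```

Because all state lives in the running process, a crash or redeploy silently ends the schedule, which is the limitation the next two options address.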

Production-grade scheduling with APScheduler

APScheduler lets you configure different cadences per product, add persistent job storage so schedules survive restarts, and log execution metadata for visibility into what ran and when.

The SQLAlchemyJobStore is what makes this production-grade. Job state is written to jobs.db, so if the process restarts, the scheduler picks up where it left off rather than losing its schedule entirely.

System-level scheduling with cron

When you want monitoring to survive process crashes and integrate with system logging, OS-level cron is a more reliable choice than an in-process scheduler.

Add a crontab entry to run every 2 hours:

The >> operator appends output to a log file instead of overwriting it. The 2>&1 part routes stderr into the same file, so errors from failed scraping runs are captured alongside normal output. If something breaks overnight, that log is your first place to check.

Integrating with no-code automation platforms

For teams that'd rather own the workflow than maintain Python scripts, MAP monitoring integrates cleanly into n8n. You can trigger the monitor on a schedule, pull price data via Decodo's Web Scraping API (which handles proxy rotation and JS rendering inside the workflow), and route violation data to Slack or email without writing Python.

This approach makes sense for smaller teams or when a non-technical stakeholder needs to own the monitoring cadence. The n8n web scraping workflow guide walks through the setup.

When things break (and they will)

Automated runs fail silently if you don't build failure handling in from the start. A network timeout, a changed HTML structure, or a retailer-side block will all produce a None price without raising an exception unless you explicitly handle it.

Add an exponential backoff wrapper around HTTP requests:

Both utilities live in utils.py. The function with_retry wraps any async fetch call with exponential backoff, catching TimeoutException and HTTPStatusError specifically so non-transient errors still surface immediately. The function log_run_health appends a structured record to health_log.jsonl after every cycle.
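The backoff wrapper can be sketched without any third-party dependency. In this version the retryable exception types are a parameter that defaults to built-in exceptions so the example runs on its own; in the project you would pass httpx.TimeoutException and httpx.HTTPStatusError instead. The signature, defaults, and jitter are assumptions for illustration.

```python
import asyncio
import random
from typing import Awaitable, Callable, Tuple, Type, TypeVar

T = TypeVar("T")


async def with_retry(
    fetch: Callable[[], Awaitable[T]],
    *,
    retries: int = 3,
    base_delay: float = 1.0,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
) -> T:
    """Await fetch(), retrying transient errors with exponential backoff.

    Delays grow as base_delay * 2**attempt plus a little jitter.
    Exceptions not listed in retry_on propagate immediately.
    """
    for attempt in range(retries + 1):
        try:
            return await fetch()
        except retry_on:
            if attempt == retries:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay * (2 ** attempt)
            delay += random.uniform(0, base_delay / 10)  # small jitter
            await asyncio.sleep(delay)
    raise RuntimeError("unreachable")  # loop always returns or raises


if __name__ == "__main__":
    state = {"calls": 0}

    async def flaky_fetch() -> float:
        state["calls"] += 1
        if state["calls"] < 3:
            raise TimeoutError("simulated slow retailer")
        return 9.99

    print(asyncio.run(with_retry(flaky_fetch, base_delay=0.1)), state)
```

Limiting `retry_on` to transient errors matters: a parsing bug or a bad selector should fail on the first attempt rather than burn three retries and delay the whole cycle.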

Configure alert thresholds so a single scraping failure doesn't trigger a false MAP violation. If price is None due to a fetch error, skip the violation check for that retailer and log the error instead. For a full breakdown of retry strategies in Python, including backoff configuration and per-exception handling, the Python requests retry guide covers the patterns in depth.

The scheduler keeps the monitor running. The next challenge is keeping it running reliably when the retailer list grows.

Scaling MAP monitoring: handling multiple retailers, protected sites, and large catalogues

5 test URLs is a proof of concept. 200 products across 40 retailers is a job. Let's look at the concurrency, anti-bot, and rendering challenges that only surface at scale, and how to handle each without rebuilding your core logic.

Concurrency and throughput

Async requests scale well until you hit rate limits. Add a semaphore to cap simultaneous connections:

Both functions live in scaling.py. Use scrape_catalogue alone for catalogues under 100 products where memory isn't a concern. Switch to scrape_in_batches when you need per-batch progress visibility or when a full concurrent run risks exhausting available memory mid-cycle.
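The semaphore pattern behind those two functions can be sketched as follows. The function names match the text, but the signatures, defaults, and the injected `fetch` coroutine are assumptions for this example.

```python
import asyncio
from typing import Any, Awaitable, Callable, List, Sequence


async def scrape_catalogue(
    targets: Sequence[Any],
    fetch: Callable[[Any], Awaitable[Any]],
    max_concurrent: int = 5,
) -> List[Any]:
    """Fetch every target with at most max_concurrent requests in flight."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def bounded(target: Any) -> Any:
        async with semaphore:  # waits here once the cap is reached
            return await fetch(target)

    # return_exceptions=True keeps one failure from cancelling the rest;
    # results come back in the same order as targets.
    return await asyncio.gather(
        *(bounded(t) for t in targets), return_exceptions=True
    )


async def scrape_in_batches(
    targets: Sequence[Any],
    fetch: Callable[[Any], Awaitable[Any]],
    batch_size: int = 20,
    max_concurrent: int = 5,
) -> List[Any]:
    """Process targets in fixed-size batches for progress visibility."""
    results: List[Any] = []
    for start in range(0, len(targets), batch_size):
        batch = targets[start:start + batch_size]
        results.extend(await scrape_catalogue(batch, fetch, max_concurrent))
        print(f"finished {min(start + batch_size, len(targets))}/{len(targets)}")
    return results
```

Because failures come back as exception objects inside the result list, the caller should check each item with isinstance(item, Exception) before treating it as a price record.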

Anti-bot challenges on major retail sites

Large retailers run bot detection at the IP, fingerprint, and behavioral level. Cloudflare, DataDome, and PerimeterX are the most common systems you'll encounter. User-agent rotation and request headers are table stakes, but they're not enough on their own against any of them. Sending a datacenter IP to a Cloudflare-protected retailer page is roughly equivalent to showing up to a neighbourhood barbecue in a hazmat suit. Technically present, immediately flagged.

Residential proxies are significantly more effective than datacenter proxies for retailer scraping because they carry legitimate ISP fingerprints that match real consumer traffic. Datacenter IPs are trivial to fingerprint and block at scale. Decodo's residential proxies offer 115M+ real IPs across 195+ locations, which also support geo-specific price monitoring for brands that sell across multiple regions.

Integrate Decodo residential proxies directly into your httpx client:
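A dependency-free sketch of the first step, building the proxy URL from environment variables, is shown below. The environment variable names and the helper are assumptions for this example; the host is the gate.decodo.com gateway mentioned earlier, and the port is whatever your dashboard assigns. Recent httpx releases accept a URL like this through the client's `proxy` argument, while older ones used `proxies`, so check the version you have installed.

```python
import os
from urllib.parse import quote


def build_proxy_url(username: str, password: str, host: str, port: int) -> str:
    """Return an HTTP proxy URL with percent-encoded credentials."""
    user = quote(username, safe="")
    pwd = quote(password, safe="")  # encodes characters like @ and :
    return f"http://{user}:{pwd}@{host}:{port}"


def proxy_url_from_env() -> str:
    """Read credentials from the environment (variable names are assumptions)."""
    return build_proxy_url(
        os.environ["DECODO_USERNAME"],
        os.environ["DECODO_PASSWORD"],
        os.environ.get("DECODO_PROXY_HOST", "gate.decodo.com"),
        int(os.environ["DECODO_PROXY_PORT"]),
    )


if __name__ == "__main__":
    # Placeholder values only; never hard-code real credentials.
    print(build_proxy_url("user", "p@ss:word", "gate.decodo.com", 1234))
```

Percent-encoding the credentials avoids a subtle failure where a password containing @ or : produces a URL that parses with the wrong host.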

For the most heavily protected sites, Decodo's Site Unblocker handles fingerprint-level bypass without requiring you to build and maintain custom middleware. For a full walkthrough of proxy integration patterns in Python requests, the proxies in Python guide covers configuration, rotation, and authentication in depth.

If requests are already getting blocked, the IP ban troubleshooting guide walks through the most common causes and how to route around each one.

Handling JavaScript-heavy retail pages

Headless browsers like Playwright and Selenium are reliable for JS-rendered prices, but they come with a real cost: 10 to 50x slower per page than a direct HTTP request. At 5 retailers, that's tolerable. At 40, it compounds into monitoring cycles that take hours rather than minutes.

Running this in production means managing a Chromium installation, browser process lifecycle, and memory consumption across concurrent scraping tasks. That's a non-trivial operational surface on top of the scraping logic itself.

Decodo's Web Scraping API handles JS rendering in the cloud and returns fully rendered HTML directly, removing that entire layer. For teams that need the headless browser approach regardless, the Selenium web scraping guide covers the full implementation.

Monitoring for MAP violations in search and ad listings

Product detail pages aren't the only surface where MAP violations appear. Google Shopping ads, Amazon Sponsored Products, and retailer site search results all display advertised prices, and all of them are enforceable under a MAP policy.

Extending your monitor to cover these surfaces means scraping structured data from search result pages rather than individual product pages. The HTML structure and selectors differ significantly from product pages, but once you've extracted the price, the comparison and alerting logic is identical to what's already built. The main additional complexity is identifying which listing belongs to which authorized retailer when multiple sellers appear in the same search result.

The infrastructure is built. Here's how to keep it from quietly breaking.

How production MAP monitors quietly fail (and how to stop them)

Building a MAP monitor that works in a test environment is the easy part. Keeping it accurate, reliable, and legally defensible in production is where most implementations develop blind spots. This section covers the operational habits, false alert traps, and legal considerations that separate a monitor you can trust from one that quietly fails.

Operational best practices

- Maintain a selector registry. A broken selector is the leading cause of monitoring blind spots. Document every selector, the retailer it targets, and the date it was last validated. When a scraping run returns an unexpectedly high rate of None prices, a selector change is the first thing to check.

- Log everything. Every scraping run should produce a timestamped record of the target URL, extracted price, and outcome. This creates an audit trail you can use in enforcement conversations: "We detected and documented this violation at 14:23 UTC on March 3rd. Here are 6 consecutive monitoring cycles showing the price remained below MAP."

- Test selectors against live pages before each scheduled cycle. Add a pre-flight validation step that checks each selector returns a non-null result before committing to a full monitoring run.

- Use a staging environment. Validate changes to scraping logic against live pages before deploying. A selector fix that works in testing can fail in production if the retailer serves different HTML to different user agents.

Avoiding false positives and false negatives

Not every price below MAP is a violation worth acting on. Flash sales, bundle pricing, and marketplace third-party sellers all create noise that triggers alerts on legitimate pricing situations. These adjustments reduce that noise significantly.

- Add an observation window. Only flag a violation if the price stays below MAP for 2 consecutive monitoring cycles. A single data point might be a temporary sale, a scraping glitch, or a price update mid-cycle.

- Define "advertised price" per retailer type. For marketplace retailers where third-party sellers set their own prices, decide upfront whether you're monitoring the buy box price, the lowest listed price, or only first-party listings. Build that definition into your selector logic.

- Account for membership prices. Amazon Prime pricing, Costco member prices, and similar gated prices typically fall outside MAP scope. If your selector can inadvertently capture these, add a check.
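The observation window from the first adjustment above can be sketched as a small streak counter. The class name, the key shape, and the choice to leave a streak untouched when a fetch fails are assumptions for this example.

```python
from collections import defaultdict
from typing import Dict, Optional, Tuple

Key = Tuple[str, str]  # (product_name, retailer)


class ObservationWindow:
    """Confirm a violation only after N consecutive below-MAP cycles."""

    def __init__(self, required_cycles: int = 2) -> None:
        self.required_cycles = required_cycles
        self.streaks: Dict[Key, int] = defaultdict(int)

    def observe(self, key: Key, price: Optional[float], map_price: float) -> bool:
        """Record one cycle's price; return True once the streak is long enough."""
        if price is None:
            return False  # failed fetch: no new evidence, streak unchanged
        if price < map_price:
            self.streaks[key] += 1
        else:
            self.streaks.pop(key, None)  # compliant price resets the streak
        return self.streaks.get(key, 0) >= self.required_cycles


if __name__ == "__main__":
    window = ObservationWindow(required_cycles=2)
    key = ("Box of Chocolate Candy", "RetailerA")
    for price in [9.99, None, 9.99, 12.99]:
        print(price, "->", window.observe(key, price, map_price=12.99))
```

Like the deduplication state, the streak counts need to be persisted between runs if the monitor is launched from cron rather than kept alive as a long-running process.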

When to consider a managed solution

The DIY route makes sense until it doesn't. Build the scraping infrastructure yourself when you have the engineering capacity, a contained list of retailers, and the time to maintain it. The tipping point usually arrives when selector maintenance, proxy infrastructure, and scheduling failures are generating more support work than the compliance program itself. At that point, the engineering cost has outgrown the business value of owning the stack.

Decodo's Web Scraping API removes the infrastructure layer and gives your team reliable access to retailer pages even under bot protection, so MAP compliance logic stays the focus rather than HTTP plumbing.

Final thoughts

Effective MAP monitoring comes down to 4 components working together: a scraper that reliably extracts advertised prices, a comparison layer that calculates violation severity, an alert system that routes notifications to the right people, and a scheduler that runs the whole thing continuously without human intervention.

Start with a manageable set of high-priority retailers and expand from there. The selector registry is your most important operational asset: keep it current, test it before each cycle, and it'll serve you well at scale.

The full code for this guide is structured to extend cleanly. Adding a new retailer means adding 3 fields to your config. Adding a new alert channel means adding a routing condition in alerts.py. The architecture doesn't fight you as your monitoring scope grows.

Build it right once. After that, it's just a cron job and a Slack channel.

About the author

Justinas Tamasevicius

Director of Engineering

Justinas Tamaševičius is Director of Engineering with over two decades of expertise in software development. What started as a self-taught passion during his school years has evolved into a distinguished career spanning backend engineering, system architecture, and infrastructure development.

Connect with Justinas via LinkedIn.

All information on Decodo Blog is provided on an as is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may be linked therein.