The Ultimate Guide to Scraping eCommerce Websites: Tools, Techniques, and Best Practices

Manual eCommerce data collection breaks because the data doesn’t stay stable. Prices change daily, products disappear and reappear under the same URL, and even mid-sized stores list tens of thousands of SKUs. On top of that, much of the content is rendered with JavaScript, layouts shift due to constant A/B testing, and anti-bot systems detect repeated automated access. This guide shows you how to analyze a target site and choose the right extraction approach.

Vytautas Savickas

Last updated: Feb 20, 2026

12 min read

TL;DR

- eCommerce scraping keeps product data current at scale (prices, stock, catalogs)

- It's harder than typical scraping because of JavaScript rendering, constant layout changes, and anti-bot defenses

- Successful scrapers focus on data location, tool choice, and reliability under the changes

- Common production failures include IP blocking, broken selectors, rendering gaps, and unmonitored scraper downtime.

Understanding eCommerce website structure

Before you write code, you need to spot where the product data actually comes from.

Start by comparing what the server returned with what the browser constructed. The DOM in the Elements panel reflects the browser's parsed view, not proof that the data existed in the original HTML.

On category pages, products usually appear as repeating "cards." Find one card and identify the smallest container that reliably represents a single product. That container becomes your anchor.

Then look for structured data. Many stores embed product details using JSON-LD in a <script type="application/ld+json"> block. When it's present, it often gives you cleaner fields like name, price, currency, and availability than scraping visible text.

Selector strategies

Once you see the structure, you think in selectors. A selector is how you tell your scraper which elements to extract.

- CSS selectors are your default because they're readable and map directly to the DOM

- XPath is useful when you need conditional selection or traversal that CSS can't express cleanly. If your selector depends on long nested paths or unstable class names, it will break the next time the site runs an A/B test.

If you want a deeper comparison, our XPath vs. CSS selectors guide walks through the trade-offs in detail.

Detecting JavaScript-rendered content

Confirm whether the data exists in the initial HTML at all. The fast check is simple: compare "View page source" with what you see in the Elements panel. If prices or titles are missing in the source but visible in the browser, the data is being injected after load.

Inspect network requests for XHR and fetch calls by opening DevTools Network tab, reloading the page, and filtering only Fetch/XHR requests. Then focus on the requests triggered after the initial page load that return JSON or use endpoints like /api/, /products, /search, or GraphQL routes. Modern frontends often load product data as JSON and render it client-side. If you can identify that endpoint, scraping the JSON response is usually more stable than parsing rendered HTML.

Pagination and URL patterns

Look at how products are spread across pages. eCommerce sites use a few common pagination patterns:

- Some rely on query parameters like ?page=2 or &offset=48

- Others encode pagination in the path, such as /laptops/page/2

- Modern stores often use infinite scroll or a "Load More" button, which hides pagination behind JavaScript calls

Filters and categories often reveal how the backend expects parameters like brand, price range, or availability. These patterns let you generate URLs programmatically instead of clicking through pages manually.

Infinite scroll deserves special attention. Even when it looks complex, it's often backed by a simple API that returns the next batch of products as JSON. Finding that request in the Network tab can save you a lot of work.

Tools and frameworks for eCommerce scraping

At this point, the real question is which tool category fits a specific target without adding unnecessary complexity.

Python libraries for static content

If the page delivers product data in the initial HTML response, lightweight libraries are usually enough:

- Requests paired with Beautiful Soup is the classic setup. You fetch a page, parse the HTML, and extract elements with CSS selectors. It's easy to reason about and fast to prototype. On the other hand, it can't see anything rendered by JavaScript.

- lxml focuses on parsing speed. When you're processing large volumes of HTML or dealing with deeply nested documents, it outperforms more forgiving parsers. It shines in batch jobs where parsing time becomes a bottleneck.

- httpx is a modern HTTP client with first-class async support. It's useful when you need concurrency without jumping straight to a full framework.

Browser automation tools

When product data is rendered client-side, you need a real browser:

- Selenium supports multiple browsers and has a huge ecosystem. It's reliable, but heavy. You pay for that reliability with slower execution and more infrastructure overhead.

- Playwright is newer, faster, handles modern JavaScript frameworks better, and includes auto-waiting for elements by default. For eCommerce sites built as single-page applications, it's often the more predictable choice.

- Puppeteer fills a similar role in the Node.js ecosystem, with tight integration into Chrome DevTools.

Full-featured frameworks

If you're collecting large catalogs on a schedule, you'll feel the limits of "one script per site" quickly. That's where Scrapy helps. It's built for crawls that run repeatedly and need scheduling, concurrency, retries, pipelines, and structured exports.

However, Scrapy doesn't render JavaScript by default. If you need both Scrapy's crawl engine and browser rendering, you can integrate a headless browser – for example, via scrapy-playwright – without throwing away the rest of your workflow.

Managed scraping solutions

If you don't want to run proxies, browsers, and retry logic yourself, a web scraping API can handle the access layer and return HTML or extracted data as real-time and on-demand results. You're trading some flexibility for operational predictability.

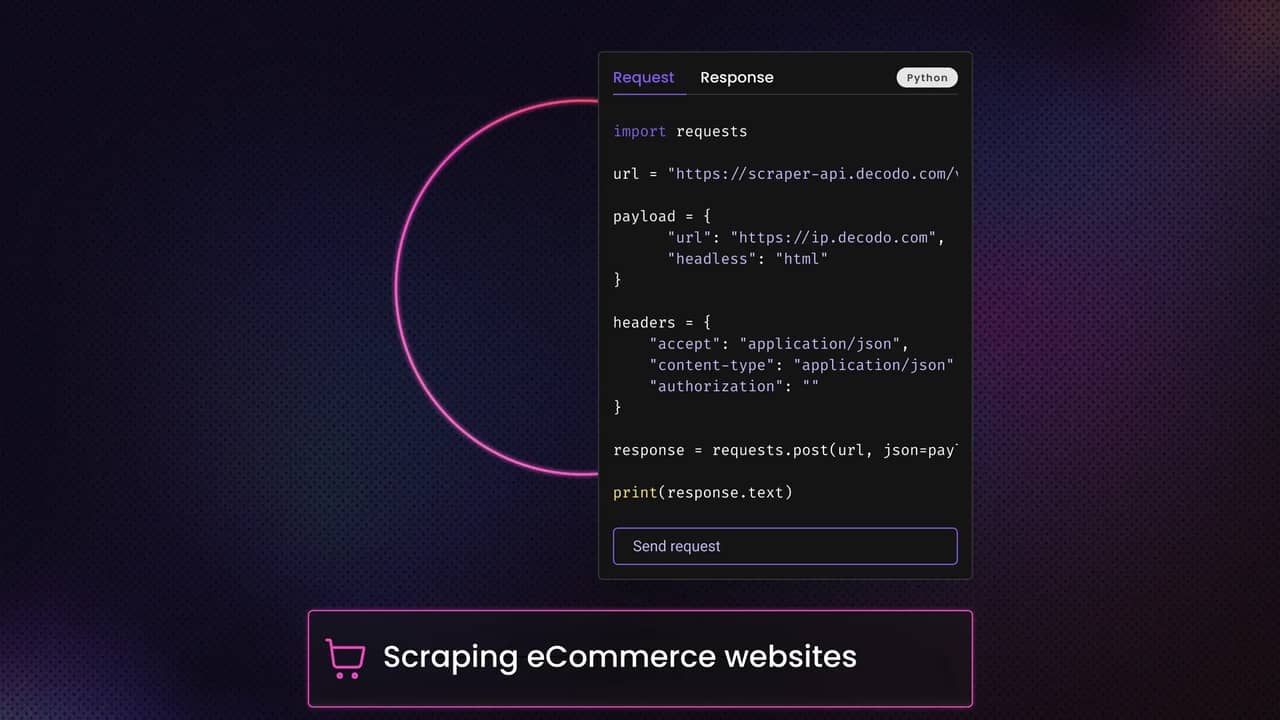

This is where Decodo's Web Scraping API fits. It's the umbrella layer that handles the heavy lifting, and it includes purpose-built endpoints for specific workloads.

For eCommerce, that includes Decodo's eCommerce Scraper API, which is effectively the eCommerce-focused part of the broader Web Scraping API – tuned for product pages, listings, and the kinds of patterns you see in retail targets.

Managed services make sense when targets are heavily protected, when uptime matters, or when your team can't afford to babysit broken scrapers.

When you evaluate a provider, focus on what you can measure: target coverage for the sites you care about, success rates over time (not a one-day test), and pricing that matches your request volume and freshness requirements.

Decision framework

Scenario

Recommended tool

Quick, one-time scrape of static pages

Requests + Beautiful Soup

JavaScript-heavy single-page apps

Playwright or Selenium

Large-scale, ongoing data collection

Scrapy or Decodo Web Scraping API

Protected sites with anti-bot measures

Decodo Web Scraping API

No coding required

Decodo Scraping Templates

Building your first eCommerce scraper: A practical walkthrough

This example prioritizes clarity over implementation details or scale. It's a naive scraper by design. The goal is to show how the pieces fit together before real-world constraints complicate everything.

You'll scrape an Amazon category listing page - https://www.amazon.com/Earbud-In-Ear-Headphones/b/node=12097478011.

This is a listing page, not a product page. That changes what you extract. You're looking for product cards, then pulling a few fields from each card.

Environment setup

You need a basic Python setup and a few well-known libraries. Create a virtual environment and install the essentials.

You'll use:

- Requests for HTTP

- BeautifulSoup 4 for parsing

- lxml as the parser backend

A minimal project structure is enough.

Step 1: Fetching the page

You send a basic request with realistic headers. On Amazon, headers matter even for a baseline run.

Step 2: Parsing HTML content

You parse the returned HTML with BeautifulSoup 4 using the lxml parser.

Step 3: Extracting product data

Amazon listing pages usually contain many "result items." The most practical anchor is a result container with a data-asin attribute. That's often the product identifier in listings.

You'll extract:

- name

- price (when present)

- rating (when present)

- review count (when present)

- product URL

- ASIN

Step 4: Saving the result

For a first run, JSON Lines is a clean output format because each line is a complete record.

What to expect in real runs

On Amazon listing pages, some fields will be missing. Prices may not be present for every card. Rating and review count can be absent. Some cards are not products at all (sponsored modules, banners, layout experiments), which is why filtering matters.

If you want the more realistic version of this workflow, including how to handle Amazon's variability and extraction pitfalls across listings and product pages, the "How to scrape Amazon product data" guide is a great starting point.

Handling pagination and multi-page scraping

In practice, pagination scraping breaks in predictable ways. This section focuses on how pagination behaves on eCommerce sites and how you handle it in code.

Recognizing the pagination pattern

The first step is recognizing which pattern you're dealing with. Most eCommerce sites fall into a small set of categories:

- Query-based pagination is the simplest. Page numbers or offsets appear as query parameters like ?page=1 or ?offset=48. Changing the number in the URL returns a different slice of products.

- Path-based pagination encodes the page in the URL path, such as /laptops/page/2. These are easy to generate programmatically once you see the pattern.

- Cursor-based pagination shows up when the frontend talks to an API. Instead of page numbers, requests include a cursor token returned by the previous response. This is common in modern backends because it scales better than numeric pages.

- Infinite scroll and "Load More" buttons hide pagination behind JavaScript. The browser still requests data in chunks, but you don't see page numbers. The data is usually fetched via an API that accepts an offset, cursor, or batch size.

You identify the pattern by watching network traffic while paging through products. The URL structure tells you how to proceed.

Implementation strategies

Following "Next" links

You look for a "Next" link or button and extract its URL. After each request, you check whether that link exists. When it disappears or becomes disabled, you've reached the last page.

This approach adapts automatically when catalogs grow or shrink, but it depends on stable pagination markup.

URL construction

If pagination parameters are predictable, URL generation is faster than parsing pagination controls.

Generate URLs in a loop and stop when responses no longer contain product cards. This avoids scraping UI elements entirely and is easy to parallelize.

If the site exposes total result counts in HTML or JSON, you can estimate an upper bound by dividing by products per page. Treat that as a hint, not a guarantee. Filters, experiments, and backend changes can invalidate the math.

Handling infinite scroll

Infinite scroll is often the simplest to scrape once you find the underlying request.

As you scroll, the browser triggers background requests that return JSON with the next batch of products. These requests usually include parameters like offset, cursor, or pageToken.

You don't need to simulate scrolling if you can reproduce those requests directly. You call the same endpoint, update the parameters, and stop when the response is empty or a flag indicates the end.

When there's no clear API, browser automation may be the only way to trigger loading. In that case, you scroll incrementally, wait for new items to appear, and keep track of how many products you've seen to avoid loops.

Why eCommerce scrapers fail in production

Most eCommerce scrapers fail because production conditions are different from local tests. This section describes the symptoms you'll see and the underlying causes.

Dynamic content and JavaScript rendering

Your scraper fetches a page successfully, but prices, availability, or ratings are empty. Locally, everything looked fine in the browser. In production, the HTML responses don't contain the data you expect.

This happens when product data is rendered dynamically by JavaScript after the initial HTML load. Basic HTTP clients only see the server-rendered markup. From their point of view, the data never arrives.

You can usually detect this by comparing the page source to the inspected DOM or by observing background requests in the Network tab. In production, this mismatch leads to partial records and silent data loss rather than hard errors.

Another symptom is inconsistent results. The same URL returns different content depending on timing, cookies, or client headers. JavaScript-heavy sites often rely on client-side state, which makes responses less deterministic.

Anti-scraping measures

After a few hundred or a few thousand requests, you start seeing slower responses, unexpected redirects, empty product lists, or pages that look like category pages but contain no real products.

That's a common pattern on protected eCommerce targets. Instead of returning a clean block page, the site returns responses that technically succeed but are designed to waste your time or poison your output.

Sometimes you'll get a verification page. Sometimes you'll get a "soft block" where the markup loads, but the data is missing. Either way, your scraper keeps running and your dataset quietly stops being trustworthy.

CAPTCHAs and verification challenges

Different eCommerce platforms use different challenge types. Some are interactive. Others are invisible and triggered by behavioral scores. In both cases, the scraper sees either a challenge page or an unexpected response format.

In production, CAPTCHAs often appear mid-run rather than at the start. That's what makes them dangerous. You collect partial datasets without realizing that later pages were never scraped.

Strategies for effective and responsible scraping

This section focuses on how you design scrapers that keep working under real-world conditions.

Build selectors you can maintain

Selectors fail more often than requests. eCommerce frontends change constantly, often through small experiments that don't alter visible behavior but do alter markup.

Prefer attributes meant to carry meaning, such as data-* attributes, over purely stylistic classes. When possible, use multiple selector fallbacks.

Rotating proxies for scale

Rotating proxies distribute traffic across multiple IPs, reducing the footprint of any single source.

Different proxy types behave differently:

- Datacenter IPs are fast and cheap, but easier to identify

- Residential IPs map to real devices and networks, which makes them harder to distinguish from organic traffic

For eCommerce sites that closely monitor access patterns, Decodo residential proxies are often necessary to maintain consistent access.

User-Agent and header rotation

A static User-Agent repeated thousands of times stands out quickly. Rotating User-Agents across realistic browser and operating system combinations reduces that signal.

Headers should be coherent. User-Agent, Accept headers, language preferences, and encoding options should make sense together. Random combinations often look worse than no rotation at all.

Including a referrer header when navigating between pages helps maintain natural request flows, especially on category-to-product transitions.

Request timing and patterns

Add variability to delays to make traffic patterns less uniform. Even small randomness reduces correlation across requests.

New scraping sessions that immediately send high request volumes attract attention. Gradually increase scraping volume to look more like normal browsing behavior.

Session management

Many eCommerce sites rely on session state. Cookies track preferences, location, and browsing flow. Ignore them, and responses become inconsistent.

Maintain sessions across requests to keep behavior coherent. For some targets, sticky IPs paired with persistent cookies are necessary to avoid resets between pages.

Cleaning, validating, and saving scraped data

Start by normalizing text – remove whitespace, line breaks, invisible characters. HTML entities and special characters should be decoded early so downstream logic works with plain text.

Prices need special attention. eCommerce sites mix currency symbols, thousands separators, and locale-specific decimal formats. A value like "$1,299.99" or "1.299,99 €" must be converted into a numeric representation you can compare and aggregate. This step is easy to get wrong if you assume a single format.

Data validation

Validation is how you detect that your scraper is "working," but output quality is dropping. For example:

- Prices should be numeric

- Ratings should fall within expected ranges

- Dates should parse cleanly

When types don't match expectations, you flag the record instead of forcing it through.

Range validation catches subtle errors. A price of 0.99 might be valid for accessories, but suspicious for a laptop. Upper and lower bounds help detect parsing mistakes or layout changes.

Cross-field validation gives you additional context. A discounted price higher than the original price is a data error, not a business insight.

Handling missing and inconsistent data

Missing fields are normal in eCommerce. New products might not have ratings. Out-of-stock items might hide prices. Represent absence as None, not as 0 or an empty string, so downstream logic can distinguish "missing" from "real."

When you can't extract a field, record why. A simple missing_fields array or data_quality flag makes debugging faster than scanning logs. If you ever impute values, like carrying forward the last known price, store the imputed value separately and mark it as imputed.

Export formats

- For pipelines, JSON Lines is a strong default because each line is one product record, and optional fields stay optional

- CSV is fine for flat exports, but it gets painful once you add variants, multiple prices, and metadata

- If you need history, deduplication, and "latest versus previous" queries, store data in a database and treat scraping as an append-only event stream. You'll thank yourself when you need to explain why a price changed on a specific date.

If you want deeper guidance on storage patterns and formats, check out our "How to save your scraped data" guide for more details.

Legal and ethical considerations

This section focuses on how teams typically reason about risk and responsibility when scraping eCommerce sites.

Understanding robots.txt

robots.txt signals which parts of a site operators prefer automated agents to avoid. It does not enforce anything technically.

Reading it helps you understand where operators draw boundaries. Respecting those signals is a sign of good faith and lowers the likelihood of conflict when access patterns are reviewed.

eCommerce sites often block cart, search, or account-related paths while leaving category and product pages open. Treat that distinction as intentional.

Terms of service considerations

Terms of service define how a site expects its content to be used. Violating them is not the same as unlawful behavior, but it still carries consequences.

For commercial projects, teams assess risk based on data criticality, visibility, and potential impact. Scraping publicly visible product data is evaluated differently from extracting user-generated or account-bound content.

When scraping becomes business-critical, teams often seek explicit permission or alternative access channels rather than relying on ambiguity.

Legal landscape overview

The legal environment around web scraping varies by jurisdiction and context.

In the US, cases involving the Computer Fraud and Abuse Act have shaped how courts view access to public websites. In the EU, GDPR affects how personal data can be collected and processed.

The important distinction is between public data and protected content. Pages that require authentication or expose personal information carry different expectations and risks.

For specific use cases, especially commercial ones, consulting a legal professional is standard practice.

Ethical scraping practices

Scraping at reasonable rates reduces the chance of disrupting normal site operations. Identifying your scraper through a custom User-Agent with contact information increases transparency. Avoiding the collection of personal data without a clear basis protects both users and your project.

When sites provide opt-out mechanisms or alternative data access, respecting them helps maintain a healthier ecosystem. Ethical scraping considers the impact on the site's business, not just what's technically possible.

When not to scrape

Login-required or subscription-only content is a common one. Personal user data, especially reviews tied to identifiable individuals, carries additional risk. Content explicitly marked as copyrighted or restricted should be treated with caution.

If a site has clearly and repeatedly denied automated access, continuing to scrape usually creates more problems than value.

Responsible eCommerce scraping balances technical capability with restraint. Long-term access depends as much on judgment as on code.

Scaling up: From scripts to production

Scaling an eCommerce scraper is about making it cheaper to maintain and predictable enough that other people can depend on it.

When DIY scraping hits its limits

Your first scaling wall is maintenance. eCommerce targets change constantly, and the failure you see most often is a silent degradation. A selector still matches, but it matches the wrong thing. A price field goes missing for 20% of products. A category page starts returning empty product cards. If you don't catch that fast, you ship bad data with high confidence.

The second wall is operational overhead. Scheduling, retries, logging, alerting, storage, and backfills aren't difficult individually. Together, they become a second product. The moment multiple sites and categories are involved, "just update the selector" turns into a recurring workflow: detect the change, reproduce it, patch extraction, validate output, deploy, and monitor.

The hidden wall is an opportunity cost. Every hour spent chasing extraction drift is an hour not spent building features your users care about.

Deciding whether to outsource the scraping infrastructure layer

Managed solutions exist because scraping can get pretty operationally expensive. You either pay in engineering time, on-call load, and maintenance cycles, or you pay a provider to absorb part of that volatility. The right choice depends on how expensive downtime is for your use case and how many targets you need to keep stable at the same time.

If you consider a managed approach, evaluate it like any other production dependency. Does pricing match your request volume? And do you get enough control to keep your own validation and storage model intact?

A great example is Decodo's eCommerce Scraping API, which can scrape all major eCommerce platforms for you, while Site Unblocker helps you improve access reliability on protected sites.

Hybrid approaches that make economic sense

Hybrid setups are common because not every target deserves the same investment:

- You keep DIY scraping for simpler sites where breakage is rare and fixes are cheap

- You use a managed layer for targets where maintenance cost is predictably high, or where reliability matters because the dataset feeds decisions

You can also split by workflow:

- Use your own crawler for discovery and URL collection

- Switch to structured extraction only for product pages that matter

Or separate by reliability requirements:

- stable workflows for high-impact categories

- lighter coverage for everything else

The key is that your downstream pipeline shouldn't care how you collected the data. Whether it came from a script, a browser run, or an API, it should land in the same schema, go through the same validation, and produce the same quality signals.

Scaling to production is mostly an organizational decision. The more people rely on the output, the more you need predictable maintenance costs, monitoring, and accountability.

Final thoughts

eCommerce scraping works easily at small scale, but production scale exposes the real constraints: JavaScript-rendered data, constant layout changes, anti-bot defenses, and silent data degradation. Reliable systems start by identifying where data actually originates, choosing tools that match rendering complexity and protection levels, and designing for change rather than stability. There is no universal best approach. Static sites reward simplicity, dynamic and protected targets demand more infrastructure. The right choice depends on scale, criticality, and how much operational ownership you are prepared to carry.

About the author

Vytautas Savickas

Former CEO

Vytautas Savickas led Decodo as CEO for the better part of a decade, bringing 15+ years of management experience to the role. His background in scaling startups, B2B SaaS, and product management pushed Decodo's growth in ways that left a real mark.

Outside the 9-to-5, Vytautas follows MMA matches and attends motorsport events.

Connect with Vytautas via LinkedIn.

All information on Decodo Blog is provided on an as is basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Decodo Blog or any third-party websites that may belinked therein.