Target Scraper API

Collect real-time Target product data at scale with our Target scraper API*. Get data points like pricing, inventory, and reviews without IP blocks or CAPTCHAs.

* This scraper is now a part of the Web Scraping API.

- 125M+ IPs worldwide

- 99.99% success rate

- 200 requests per second

- 100+ ready-made templates

- Free starter plan

Be ahead of the Target product scraping game

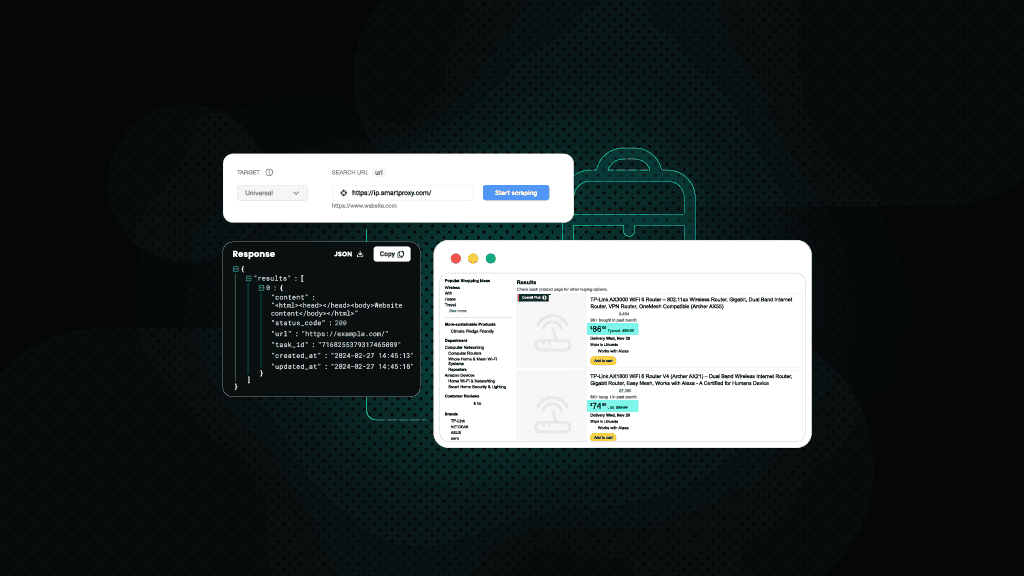

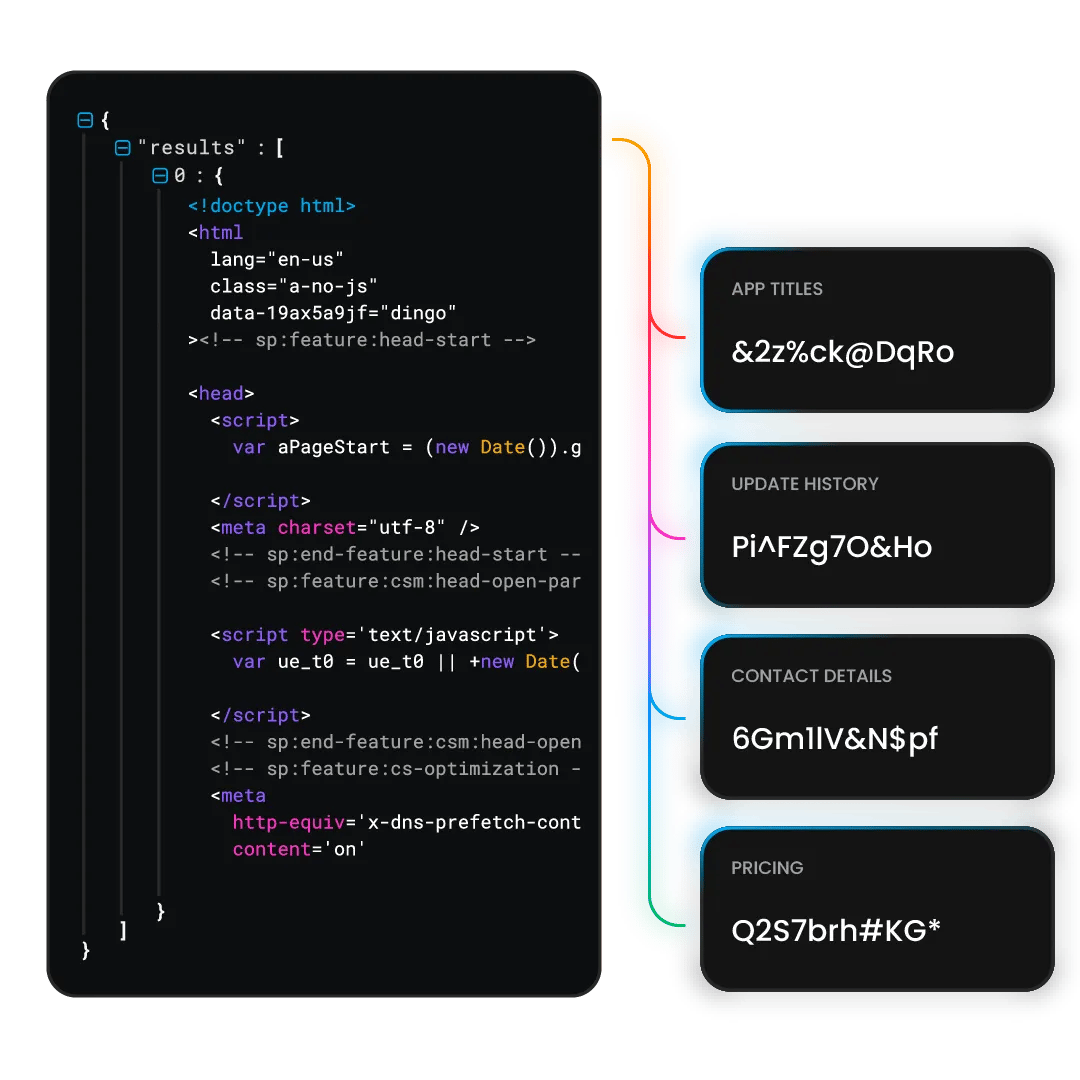

Extract data from Target

eCommerce Scraping API is a powerful data collector that combines a web scraper, a data parser, and a pool of 125M+ residential, mobile, ISP, and datacenter proxies. Because everything is built in, you can start scraping Target product data right away. Here are some of the key data points you can extract with it:

- Product details

- Pricing data

- Inventory status

- Customer reviews

- Promotions and deals

What is a Target scraper?

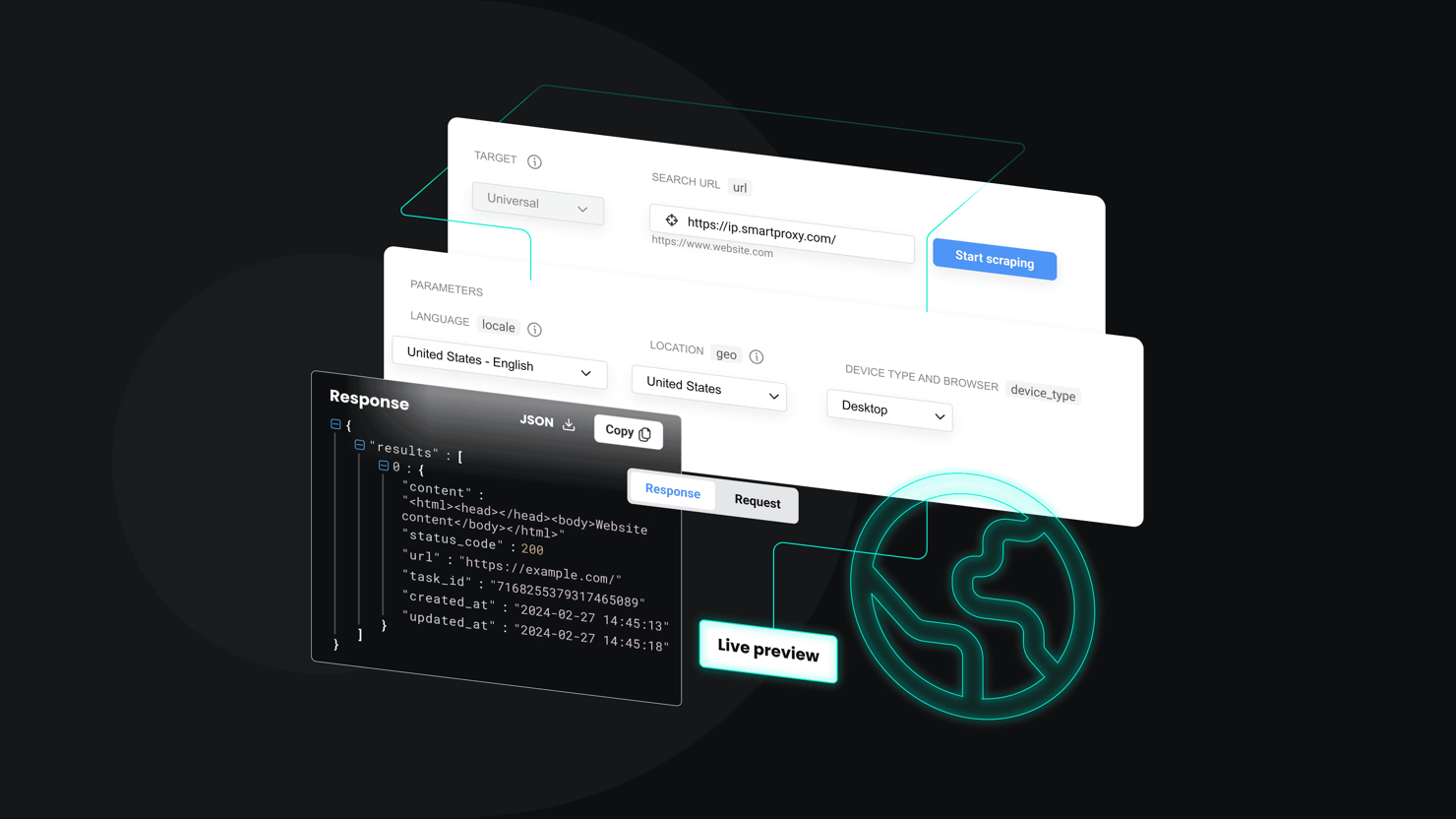

A Target scraper is a tool that extracts data from the Target website. With our Target scraper API, you can send a single API request and receive the data you need in HTML, JSON, or CSV format. Even if a request fails, we’ll automatically retry until the data is delivered. You'll only pay for successful requests.

Designed by our experienced developers, this tool offers you a range of handy features:

- Built-in scraper and parser

- JavaScript rendering

- Easy API integration

- 195+ geo-locations, including country-, state-, and city-level targeting

- No CAPTCHAs or IP blocks

Scrape Target product data with Python, Node.js, or cURL

Our Target scraper API supports all popular programming languages for hassle-free integration with your business tools.
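As a rough illustration of the single-request flow, the Python sketch below builds (but does not send) a POST request for one Target page. The endpoint URL, the `url` and `output` field names, and the use of Basic authentication are placeholder assumptions for this example, not the documented API; take the real values from the quick start guides and your dashboard.

```python
import base64
import json
import urllib.request

# Hypothetical endpoint, used only for illustration.
API_URL = "https://scraper-api.example.com/v1/scrape"


def build_request(target_url, username, password, output="json"):
    """Build (but do not send) a POST request for one Target page."""
    if output not in {"html", "json", "csv"}:
        raise ValueError(f"unsupported output format: {output}")
    # Assumed payload shape: the page to scrape and the desired output format.
    payload = {"url": target_url, "output": output}
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_request(
        "https://www.target.com/s?searchTerm=headphones", "USERNAME", "PASSWORD"
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # To send it for real: urllib.request.urlopen(req, timeout=60)
```

Keeping request construction separate from sending makes the payload easy to inspect before any billable call is made.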

Target scraper API is full of awesomeness

Scrape Target data with ease using our powerful API. From flexible output options to built-in proxy integration, we ensure seamless data collection without blocks or CAPTCHAs.

Flexible output options

Select from HTML, JSON, or parsed table results to suit your specific scraping needs.

100% success

Pay only for successfully retrieved results from your Target queries.

Real-time or on-demand results

Choose when you need the data – collect real-time results now or schedule scraping tasks for later.

Advanced anti-bot measures

Leverage integrated browser fingerprints to avoid detection and CAPTCHAs.

Easy integration

Integrate our APIs into your workflows with straightforward quick start guides and code examples.

Proxy integration

Leave CAPTCHAs, IP blocks, and geo-restrictions behind with 125M+ IPs under the scraping API hood.

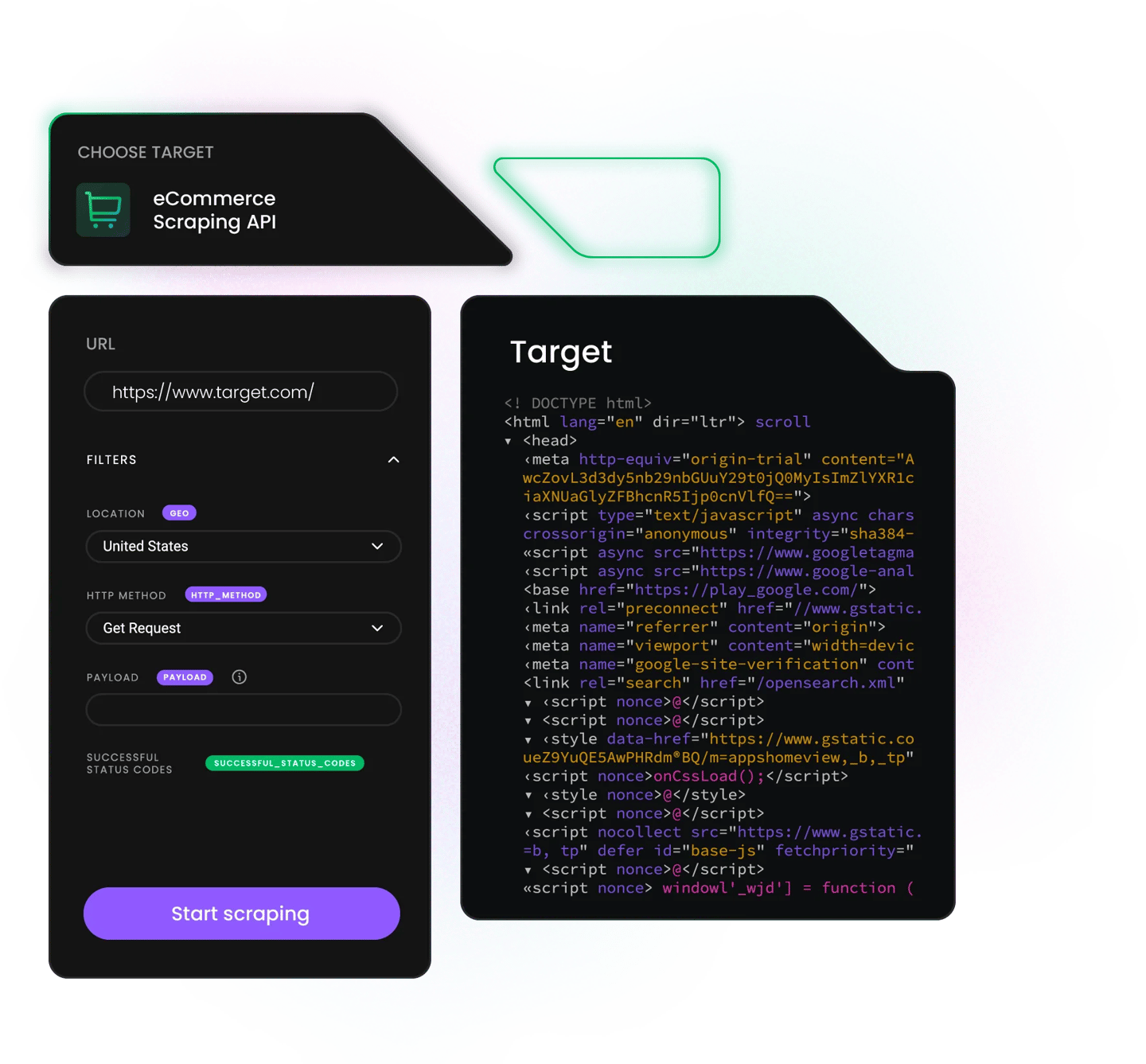

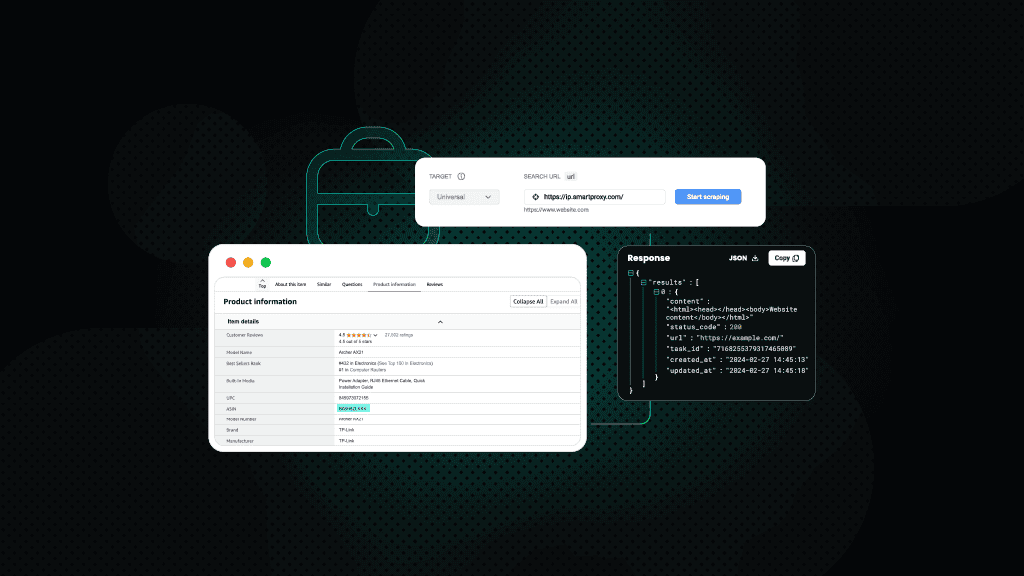

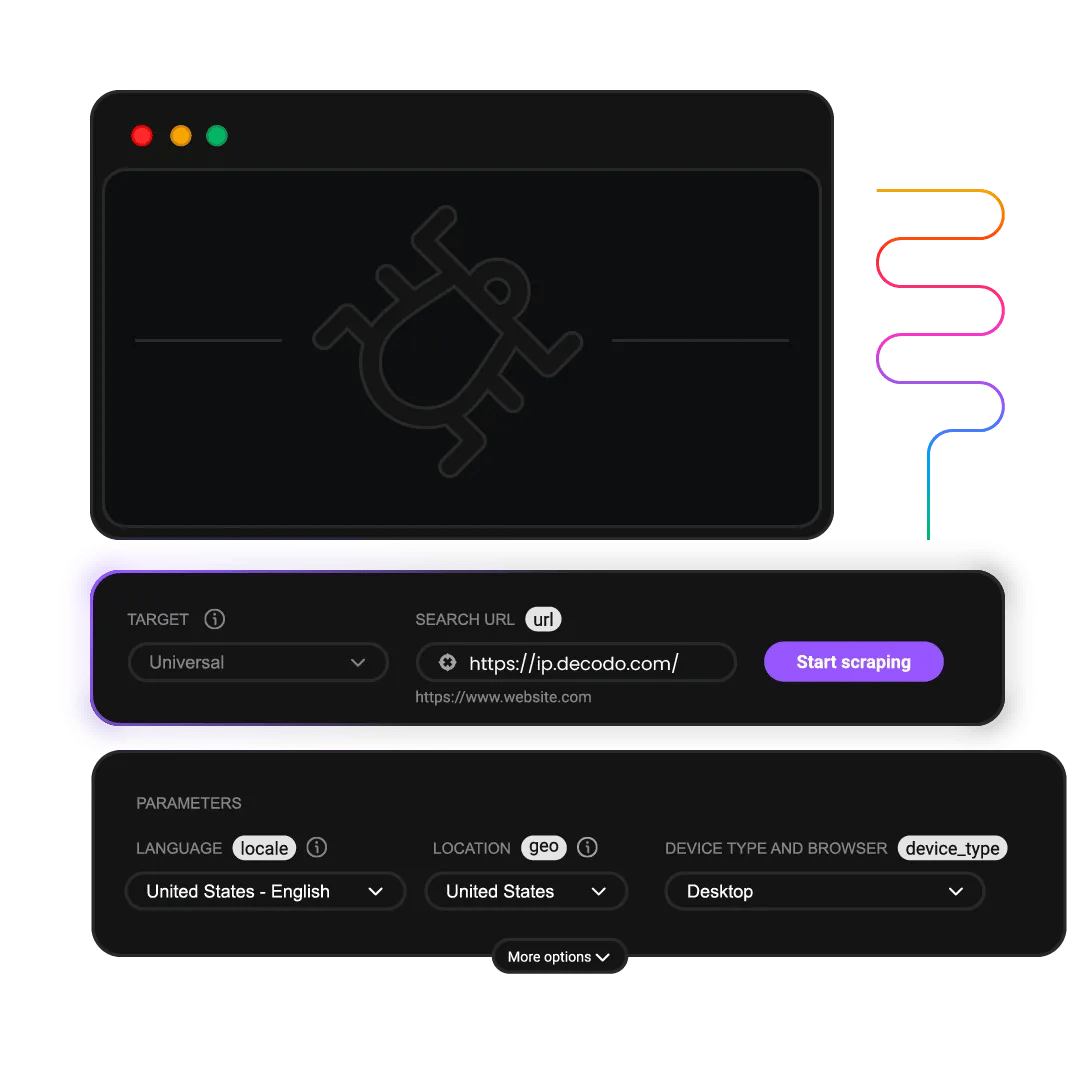

API Playground

Send your first test request using our interactive API Playground in the dashboard.

What does Web Scraping API cost?

Choose a plan based on your scraping volume. All plans include the same powerful features – you only pay for what you use. Start with the free plan to test before committing.

Plan prices

All plan prices are +VAT and billed monthly. All prices shown are per 1K req.

| Plan price | Requests included, by request type (price per 1K req.) | Rate limit |
| --- | --- | --- |
| $0 | 2K req. ($0.50), 1K req. ($0.75), 1K req. ($1.00), 667 req. ($1.50) | 10 req/s |
| $19 | 38K req. ($0.50), 25K req. ($0.75), 19K req. ($1.00), 12K req. ($1.50) | 10 req/s |
| $49 | 163K req. ($0.30), 75K req. ($0.65), 54K req. ($0.90), 39K req. ($1.25) | 25 req/s |
| $99 | 707K req. ($0.14), 165K req. ($0.60), 116K req. ($0.85), 82K req. ($1.20) | 50 req/s |
| Need more? | Custom | Custom |

For low-security sites and simple access

For accessing guarded or sensitive pages
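The request volumes listed for the paid plans look consistent with dividing the plan price by the per-1K price. That relationship is inferred from the published figures rather than a documented formula, so treat the sketch below as an estimate only; the function name is made up for this example.

```python
def requests_for_budget(plan_price_usd, price_per_1k_usd):
    """Estimate how many requests a plan price covers at a given per-1K rate."""
    if price_per_1k_usd <= 0:
        raise ValueError("price per 1K requests must be positive")
    # price / (price per 1K) gives thousands of requests; scale to single requests.
    return int(plan_price_usd / price_per_1k_usd * 1000)


if __name__ == "__main__":
    # (plan price, price per 1K req.) pairs taken from the pricing above.
    for plan_price, rate in [(19, 0.50), (49, 0.30), (99, 0.14)]:
        estimate = requests_for_budget(plan_price, rate)
        print(f"${plan_price} at ${rate:.2f} per 1K: about {estimate // 1000}K requests")
```

The same helper can be used to compare how far a budget stretches across request types before picking a plan.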

With each plan, you access:

- 99.99% success rate

- Results in HTML, JSON, CSV, XHR or PNG

- MCP server

- JavaScript rendering

- AI integrations

- 100+ pre-built templates

- Supports search, pagination, and filtering

- LLM-ready markdown format

- 24/7 tech support

14-day money-back

SSL Secure Payment

Your information is protected by 256-bit SSL

What people are saying about us

We're thrilled to have the support of our 130K+ clients and the industry's best

Attentive service

The professional expertise of the Decodo solution has significantly boosted our business growth while enhancing overall efficiency and effectiveness.

Novabeyond

Easy to get things done

Decodo provides great service with a simple setup and friendly support team.

RoiDynamic

A key to our work

Decodo enables us to develop and test applications in varied environments while supporting precise data collection for research and audience profiling.

Cybereg

Frequently asked questions

Do you provide dedicated support for Target scraping?

Yes, we offer dedicated support for Target scraping, including 24/7 tech support for fast troubleshooting of any potential issues you might face when collecting publicly available data. Users with bigger subscriptions also get a dedicated account manager who offers technical guidance, implementation support, and tips on scaling your scraping operations efficiently.

Do I need coding skills to use the Target scraper API?

No, you don’t necessarily need coding skills to use our Web Scraping API. You can conveniently collect data using our pre-made scraping templates for various Target queries. With a single request, you can get data from various Target product and search pages. For more advanced use cases, basic coding knowledge (e.g., Python or JavaScript) can help with customization and automation.

We also offer detailed documentation, quick start guides, and code examples, making it easy for non-developers to follow along. And if you face any challenges while scraping Target, our 24/7 tech support is available via LiveChat.

Is using a Target scraper legal?

Scraping publicly available data from Target is generally considered legal when it's done responsibly and in compliance with applicable laws and the website's terms. We encourage consulting legal counsel for compliance in specific jurisdictions.

Can I schedule recurring Target data extractions?

Yes, our Web Scraping API supports task scheduling, allowing you to automate data extractions. You can configure scraping jobs to run daily, weekly, or at custom intervals, keeping your data consistently up to date. This feature is handy for ongoing price monitoring or inventory tracking.
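To make the interval idea concrete, here is a small client-side sketch that lists when a recurring job would run. It does not call the scheduling feature itself; the `next_runs` helper and the example start time are made up for illustration.

```python
from datetime import datetime, timedelta


def next_runs(start, interval, count):
    """Return `count` run times beginning at `start`, spaced by `interval`."""
    if count < 0:
        raise ValueError("count must be non-negative")
    return [start + i * interval for i in range(count)]


if __name__ == "__main__":
    first_run = datetime(2026, 1, 5, 9, 0)
    # Daily price monitoring, weekly inventory check, and a custom 6-hour interval.
    for label, step in [
        ("daily", timedelta(days=1)),
        ("weekly", timedelta(weeks=1)),
        ("every 6 hours", timedelta(hours=6)),
    ]:
        runs = next_runs(first_run, step, 3)
        print(label, [run.isoformat() for run in runs])
```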

Can I customize what data fields I extract from Target?

Absolutely! The Target scraper API allows you to define exactly what data fields to extract, including product names, SKUs, prices, images, descriptions, availability, and ratings. This means you can tailor your extraction to your unique business needs without collecting unnecessary information.
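A minimal sketch of the same idea on the client side: keep only the fields you care about from a parsed product record. The record below is invented sample data, and its key names are assumptions for illustration rather than the API's actual response schema.

```python
def select_fields(product, fields):
    """Keep only the requested keys; fields missing from the record become None."""
    return {name: product.get(name) for name in fields}


if __name__ == "__main__":
    # Hypothetical parsed record; real responses may use different keys.
    sample_product = {
        "name": "Example wireless headphones",
        "sku": "SKU-0001",
        "price": 59.99,
        "availability": "in_stock",
        "rating": 4.5,
        "description": "Sample description text.",
    }
    wanted = ["name", "sku", "price", "availability"]
    print(select_fields(sample_product, wanted))
```

Returning `None` for absent fields keeps every output row the same shape, which simplifies exporting to CSV or loading into a table.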

How reliable is Decodo’s Target scraper API?

Decodo’s Target scraper API is built for enterprise-grade reliability, with a guaranteed 99.99% uptime and 100% success rate. Our platform offers built-in error handling, smart retries, and dynamic IP rotation, ensuring consistent and accurate data delivery.

Target Scraper API for Your Data Needs

Gain access to real-time data at any scale without worrying about proxy setup or blocks.

14-day money-back option