Data Collection

Data collection is vital across industries. It helps businesses understand the market, know their customers better, and adapt to their needs. Collection can be automated by scraping a chosen target website, which is especially useful for analyzing competitors, records, trends, and other data.

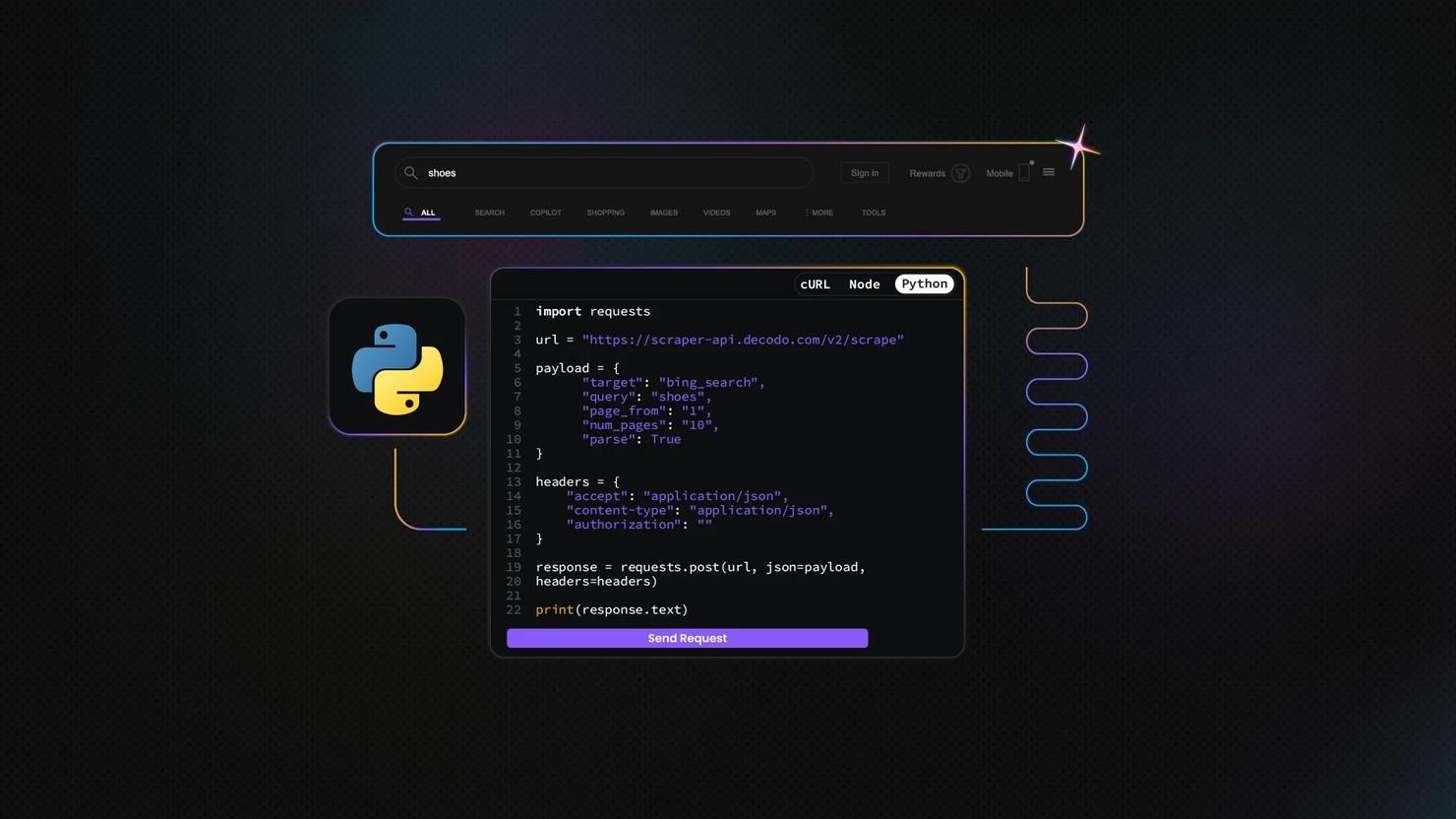

How to Scrape Bing Search with Python

Web scraping is the art of extracting data from websites, and it's become a go-to tool for developers, data analysts, and startup teams. While Google gets most of the spotlight, scraping Bing search results can be a smart move, especially for regional insights or less saturated SERPs. In this guide, we'll show you how to scrape Bing using Python with tools like Requests, Beautiful Soup, and Playwright.

Zilvinas Tamulis

Last updated: May 16, 2025

12 min read

How to Scrape Google Scholar With Python

Google Scholar is a free search engine for academic articles, books, and research papers. If you're gathering academic data for research, analysis, or application development, this blog post will give you a reliable foundation. In this guide, you'll learn how to scrape Google Scholar with Python, set up proxies to avoid IP bans, build a working scraper, and explore advanced tips for scaling your data collection.

Dominykas Niaura

Last updated: May 12, 2025

10 min read

Proxy for Scraping Amazon: The Ultimate Guide

Scraping Amazon without proxies leads to IP bans, CAPTCHAs, and rate limits, making data collection nearly impossible. Proxies are essential for bypassing these defenses and accessing vital pricing and product data. This guide explains why scraping Amazon is challenging, how proxies can help, and which types of proxies are most effective for reliable, large-scale Amazon data extraction.

Dominykas Niaura

Last updated: May 05, 2025

5 min read

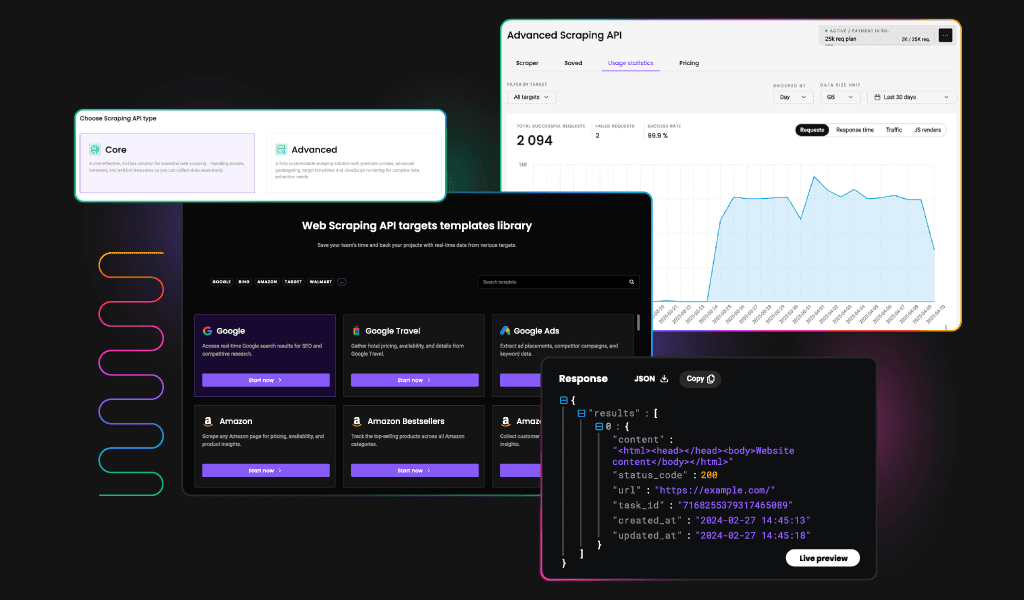

New Web Scraping API: One API for All Your Scraping Needs

Web scraping should be simple. Yet choosing the right solution often feels like a challenge: different APIs for different targets, multiple subscriptions, and unnecessary complexity. That’s why we’re introducing a more convenient way to collect data from various targets: our four scraping APIs are merging into a single, more powerful Web Scraping API. Now you can collect data from all targets (eCommerce, SERPs, social media, and the wider web) with one unified API.

Gabriele Vitke

Last updated: Apr 07, 2025

4 min read

How to Scrape Amazon Prices Using Excel

If you’re here, you already know Amazon constantly tweaks product prices. The eCommerce giant makes around 2.5 million price changes daily, resulting in the average item seeing new pricing roughly every ten minutes. For sellers, marketers, and savvy shoppers, that creates both a challenge and an opportunity.

This comprehensive guide walks you through proven methods – from Excel's built-in tools to powerful scraping APIs that can simplify your Amazon price monitoring workflow.

Zilvinas Tamulis

Last updated: Mar 31, 2025

8 min read

Web Scraping in R: Beginner's Guide

As a data scientist, you’re already using R for data analysis and visualization. But what if you could also conveniently use it to gather data directly from websites? With the R programming language, you can seamlessly scrape static pages, HTML tables, and even dynamic content. Let’s explore how you can take your data collection to the next level!

Zilvinas Tamulis

Last updated: Mar 27, 2025

5 min read

Scraping Amazon Product Data Using Python: Step-by-Step Guide

This comprehensive guide will teach you how to scrape Amazon product data using Python. Whether you’re an eCommerce professional, researcher, or developer, you’ll learn to create a solution to extract valuable insights from Amazon’s marketplace. By following this guide, you’ll acquire practical knowledge on setting up your scraping environment, overcoming common challenges, and efficiently collecting the needed data.

Zilvinas Tamulis

Last updated: Mar 27, 2025

15 min read

Beautiful Soup Web Scraping: How to Parse Scraped HTML with Python

Web scraping with Python is a powerful technique for extracting valuable data from the web, enabling automation, analysis, and integration across various domains. Using libraries like Beautiful Soup and Requests, developers can efficiently parse HTML and XML documents, transforming unstructured web data into structured formats for further use. This guide explores essential tools and techniques to navigate the vast web and extract meaningful insights effortlessly.

Zilvinas Tamulis

Last updated: Mar 25, 2025

14 min read

Understanding Proxy Errors: Causes, Solutions, and How to Fix Them

Proxy errors like 404, 407, or 503 can be frustrating roadblocks – but they’re also valuable clues. These HTTP status codes point to specific issues in the communication between your browser, proxy, and the target server. Whether it's a client-side misconfiguration or a server-side block, understanding these errors is the first step to resolving them. In this guide, we’ll break down the most common proxy errors and walk you through practical solutions to fix them fast.

Kipras Kalzanauskas

Last updated: Mar 25, 2025

7 min read

10 Creative Web Scraping Ideas for Beginners

They say you’ll never have time to read all the books or watch all the movies in your entire lifetime – but what if you could at least gather all their titles, ratings, and reviews in seconds? That’s the magic of web scraping: automating the impossible, collecting large amounts of data, and uncovering hidden insights from all across the internet. In this article, we’ll explore valuable web scraping ideas that you can create even with little to no experience – completely free of charge.

Zilvinas Tamulis

Last updated: Mar 20, 2025

12 min read

Amazon Price Scraping with Google Sheets

Amazon’s massive product ecosystem makes it a goldmine for price tracking, competitive analysis, and market research. This guide covers methods for Amazon price scraping, from small-scale tracking to enterprise-grade solutions, plus how to import data into Google Sheets for real-time analysis. Whether you’re hunting deals or analyzing eCommerce trends, we’ve got you covered.

Lukas Mikelionis

Last updated: Mar 14, 2025

9 min read

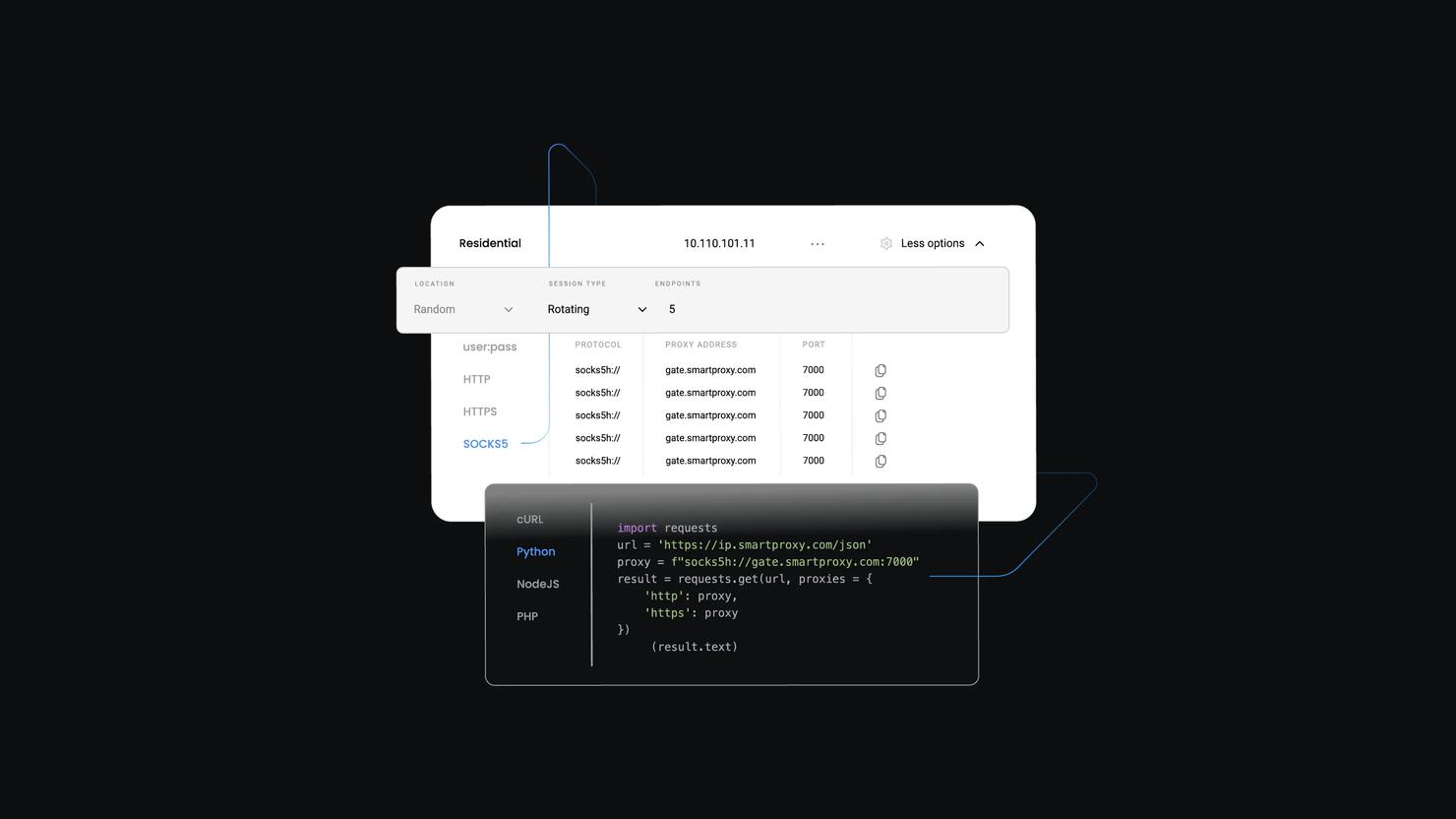

SOCKS Proxy Explained: Definition, Benefits & Use Cases

A proxy protocol is a set of rules that govern how internet traffic is intercepted, routed, and processed between clients and servers. When it comes to widely used proxy protocols, HTTP often steals the spotlight, leaving SOCKS proxies overlooked despite their flexibility. To help you decide if SOCKS proxies are right for your needs, this blog post will break down what they are, explain how they differ from other proxy types, explore their benefits and drawbacks, and look at the most common use cases.

Dominykas Niaura

Last updated: Mar 14, 2025

10 min read

How to Scrape Google News With Python

Keeping up with everything happening around the world can feel overwhelming. With countless news sites competing for your attention using catchy headlines, it’s hard to find what you need among celebrity tea and what the Kardashians were up to this week. Fortunately, there’s a handy tool called Google News that makes it easier to stay informed by helping you filter out the noise and focus on essential information. Let’s explore how you can use Google News together with Python to get the key updates delivered right to you.

Zilvinas Tamulis

Last updated: Mar 13, 2025

15 min read

How AI Secretly Gathers Data and What They're Not Telling You

Artificial Intelligence powers everything from chatbots to complex data analysis tools. But behind the sleek interfaces and impressive capabilities lies a hidden process: the collection of petabytes of data. We sat down with our CEO, Vytautas Savickas, to discuss the AI revolution and how data is being collected to fuel various tools.

Benediktas Kazlauskas

Last updated: Mar 12, 2025

6 min read

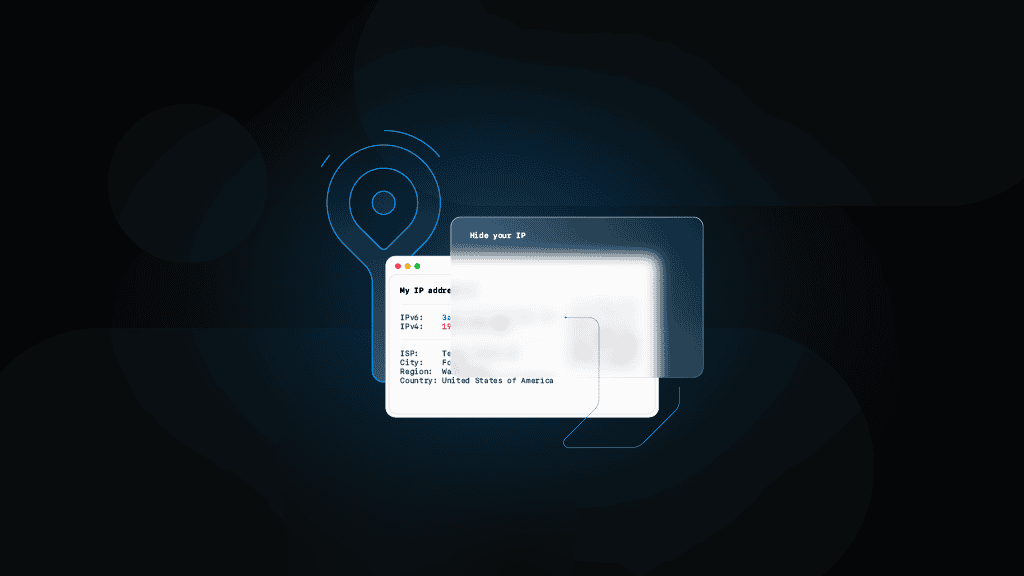

How to Hide Your IP Address: Top 5 Ways

Because the internet never forgets and every click leaves a digital footprint, hiding your IP address is sometimes essential. Staying anonymous enhances data security and grants access to geo-restricted content, though it comes with a few trade-offs. This guide covers 5 effective ways to hide your IP and protect your online privacy.

Vilius Sakutis

Last updated: Mar 07, 2025

7 min read

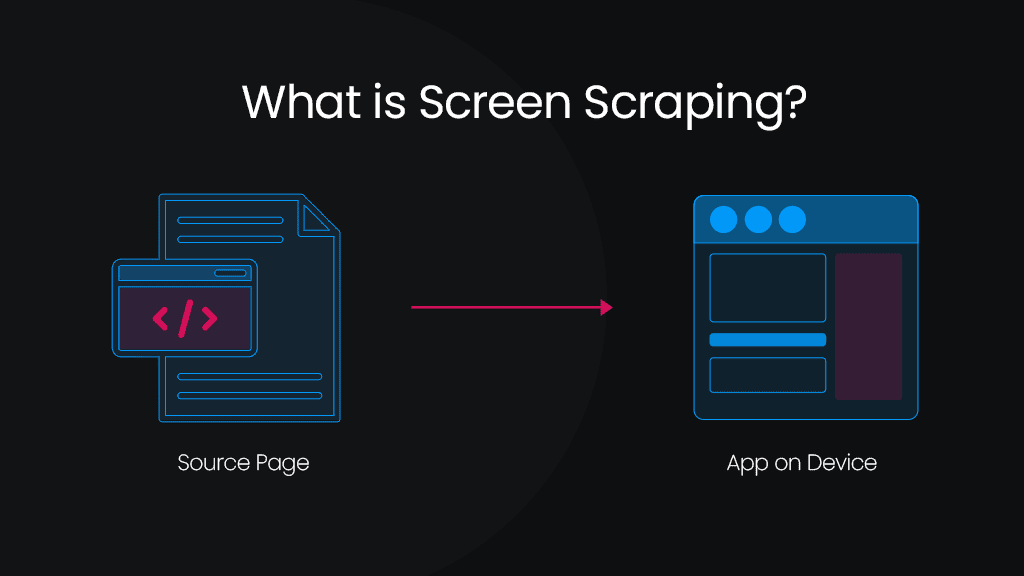

What is Screen Scraping? Definition & Use Cases

Screen scraping is a valuable technique for extracting data from websites and applications when structured access methods, such as APIs, are unavailable. It enables businesses and developers to gather information for market research, automation, and system integration. Unlike traditional web scraping, which directly extracts structured data from HTML, screen scraping retrieves content from a website’s graphical interface, making it useful for capturing dynamic or visually rendered data. When combined with residential proxies, screen scraping becomes even more effective by bypassing IP restrictions and anti-bot measures, ensuring uninterrupted web data extraction and collection.

Vilius Sakutis

Last updated: Mar 06, 2025

5 min read

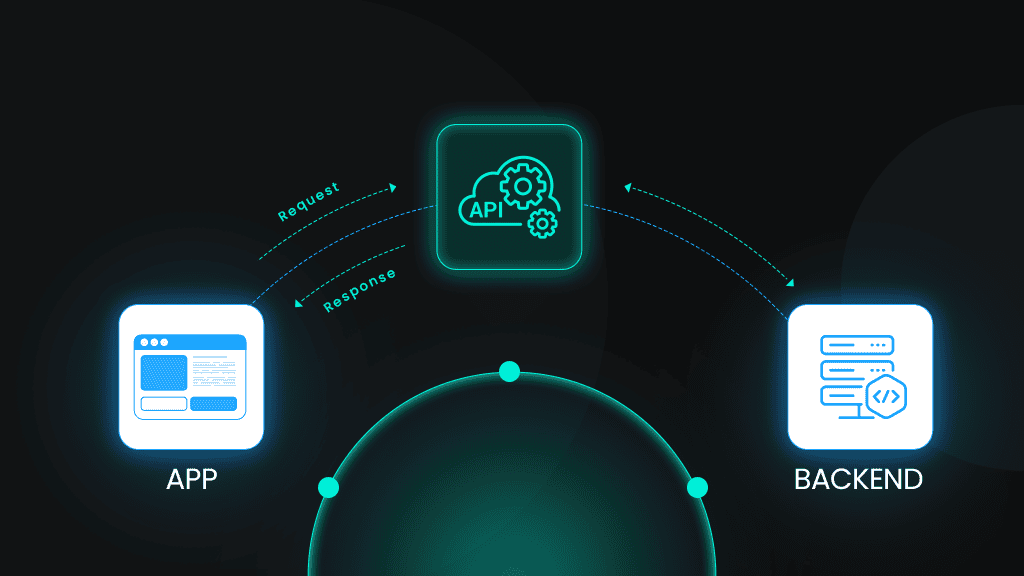

What is an API?

An application programming interface (API) works like a messenger. It allows different software systems to communicate without developers having to build custom links for every connection. For instance, one service might supply map data to a mobile app, while another handles payment processing for online transactions. In times that demand seamless integration, APIs play a vital role. They automate tasks, enable large-scale data collection, and support sophisticated functions like web scraping and proxy management. By bridging diverse platforms and streamlining data exchange, they help businesses stay competitive and reduce the complexity of managing multiple, often inconsistent endpoints.

Kotryna Ragaišytė

Last updated: Mar 06, 2025

6 min read

Web Scraping with Cheerio and Node.js: A Comprehensive Guide

Scraping static web pages can be challenging, but Cheerio makes it fast and efficient. Cheerio is a lightweight Node.js library that parses and manipulates HTML using a syntax similar to jQuery. This guide covers key concepts, practical code examples, and essential techniques to help you extract web data with ease, no matter your experience level.

Zilvinas Tamulis

Last updated: Mar 04, 2025

6 min read