Data Collection

Data collection is vital across industries. It helps businesses learn about the market, understand their customers, and adapt to their needs. Collection can be automated by scraping a chosen target, which is especially useful for analyzing competitors, records, trends, and other data.

How to Use Wget With a Proxy: Configuration, Authentication, and Troubleshooting

Lukas Mikelionis

Last updated: Mar 17, 2026

15 min read

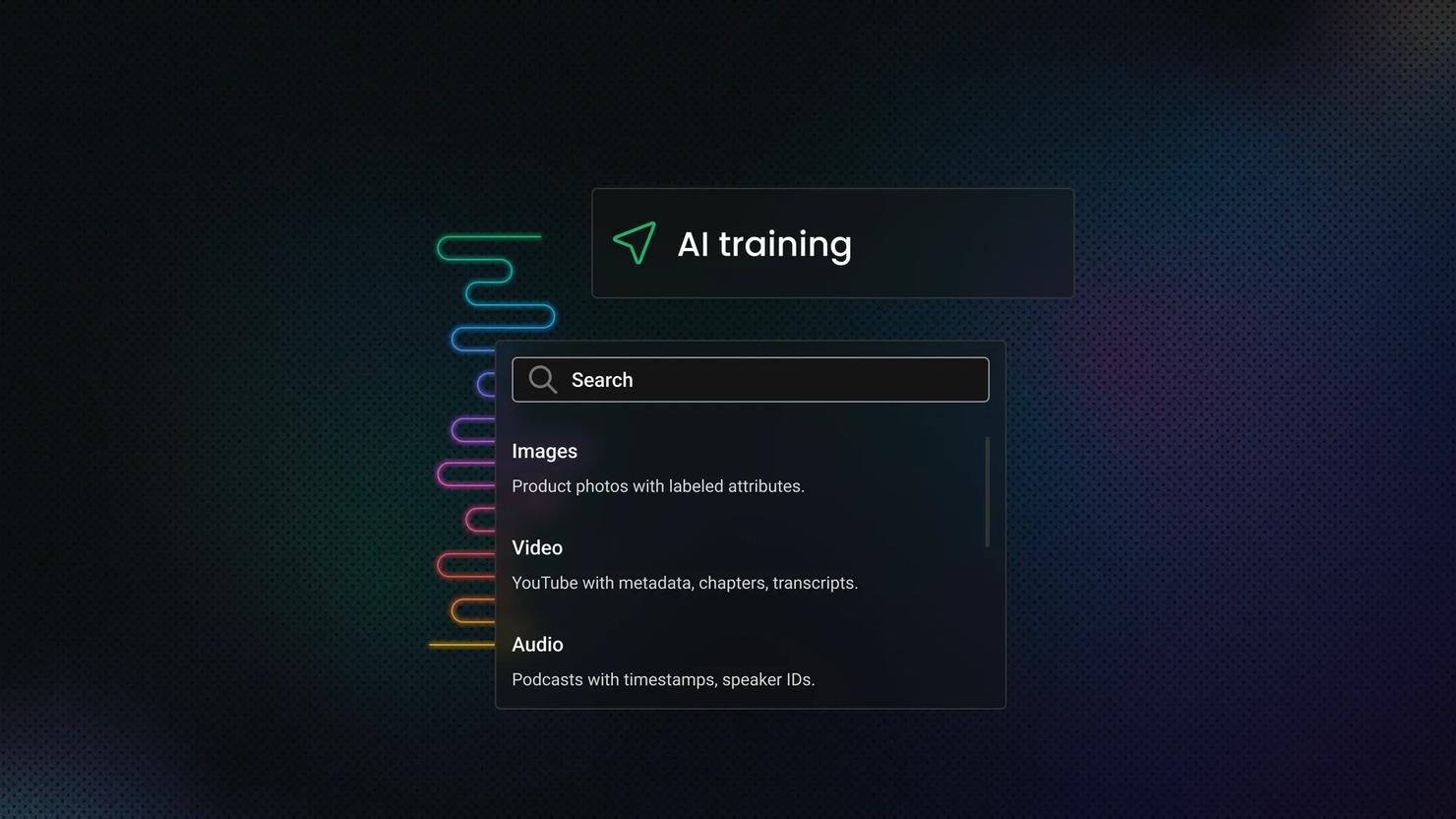

Scraping Multimedia Data for AI Training: Images, Video, Audio

Images, video, and audio are harder to collect and clean than text, and much less useful without context. Multimedia scraping helps you collect media, preserve the metadata that gives it meaning, and turn scattered files into training-ready datasets. The hard part is treating each media type differently from the start.

Vytautas Savickas

Last updated: Mar 13, 2026

8 min read

Scraping Yelp: A Step-by-Step Tutorial

Yelp doesn't make scraping easy. The data you need is spread across multiple backend systems (no single endpoint gives you everything), and standard HTTP libraries get blocked before the first response. This guide covers every extraction method with Python, including the TLS impersonation and anti-bot techniques you need to avoid blocks at scale.

Justinas Tamasevicius

Last updated: Mar 12, 2026

15 min read

Concurrency vs. Parallelism: Key Differences and When To Use Each

A bootstrapped data operation found that its web scrapers ground to a halt as it tried to scale from 100 to 10,000 URLs. This is a common challenge with sequential processing and exactly why understanding concurrency vs. parallelism is key to building efficient, scalable systems. This guide explains both concepts, their key differences, and their limitations, so you can quickly decide on the best mechanism for your project.

Justinas Tamasevicius

Last updated: Mar 10, 2026

10 min read
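
To make the sequential bottleneck mentioned in the excerpt above concrete, here is a minimal sketch, not taken from the linked guide. It assumes the work is I/O-bound and simulates each request with `time.sleep`; `fake_fetch`, the URLs, and the worker count are hypothetical placeholders.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_fetch(url: str) -> str:
    """Hypothetical stand-in for a network request: sleeps instead of doing real I/O."""
    time.sleep(0.05)
    return f"body of {url}"


def fetch_sequential(urls):
    # One request at a time: total time grows linearly with the number of URLs.
    return [fake_fetch(u) for u in urls]


def fetch_concurrent(urls, workers=10):
    # Threads overlap the waiting time of I/O-bound calls (concurrency).
    # pool.map returns results in the same order as the input URLs.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(fake_fetch, urls))


# Placeholder URLs; nothing is actually requested.
urls = [f"https://example.com/page/{i}" for i in range(20)]

start = time.perf_counter()
seq = fetch_sequential(urls)
seq_time = time.perf_counter() - start

start = time.perf_counter()
con = fetch_concurrent(urls)
con_time = time.perf_counter() - start

print(f"sequential: {seq_time:.2f}s, concurrent: {con_time:.2f}s")
```

Threads help here because the simulated work spends its time waiting. For CPU-bound work, true parallelism would typically need multiple processes (for example `concurrent.futures.ProcessPoolExecutor`) rather than threads.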

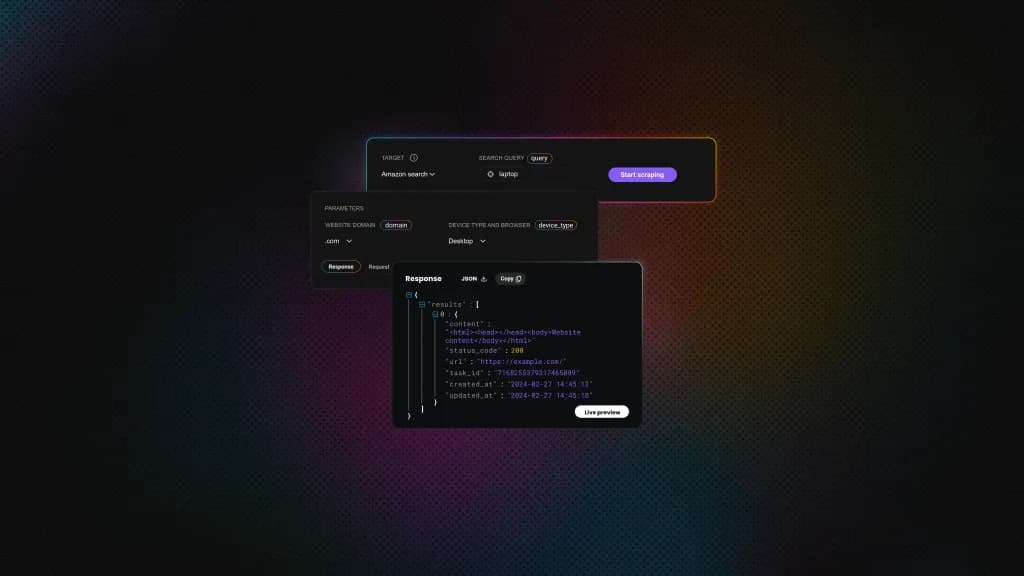

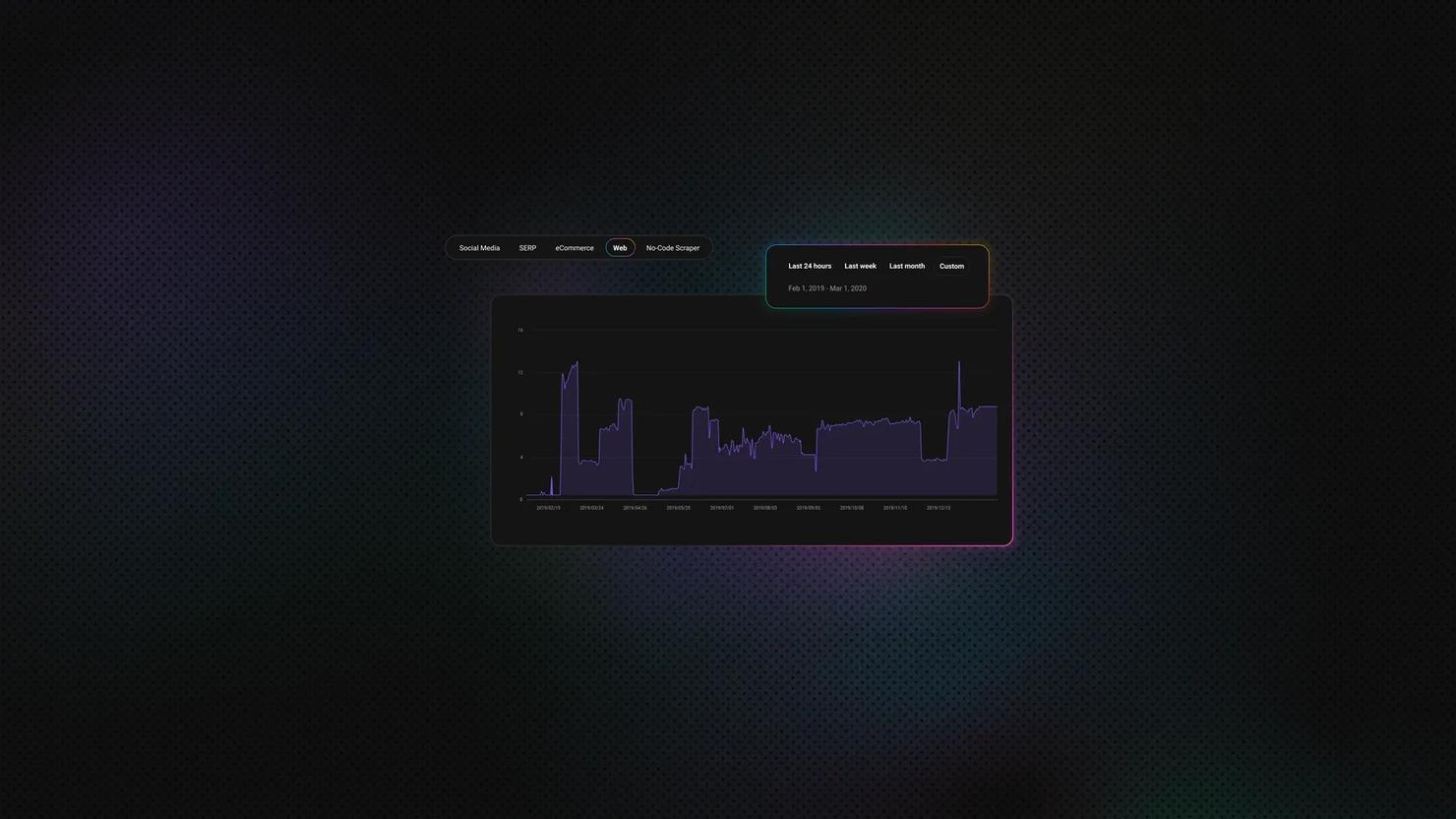

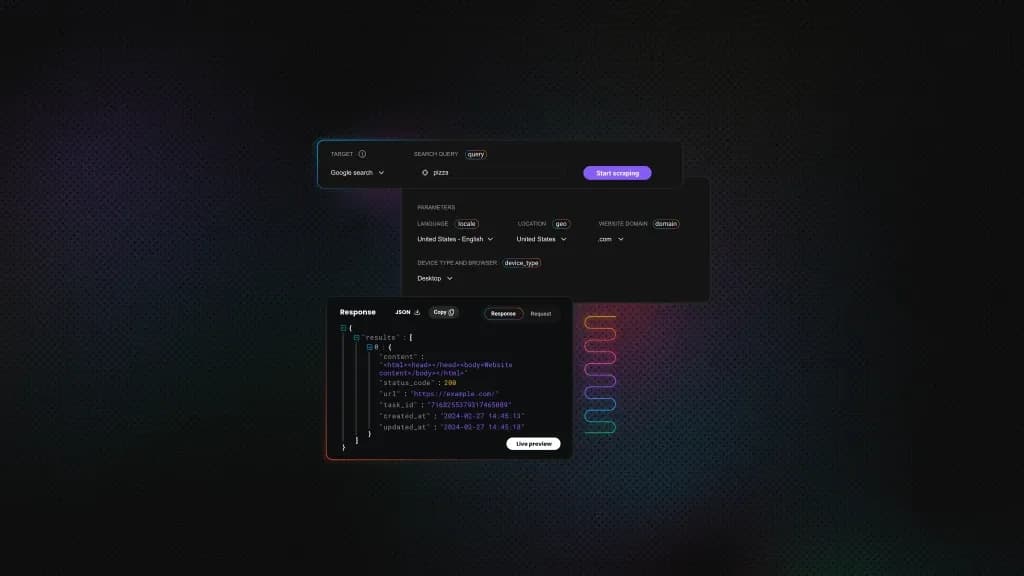

New Scraping API: Scraping that Adapts to Your Targets

Most scraping APIs treat every request the same – maximum power, maximum cost. But real workloads are mixed: simple HTML pages, JavaScript-heavy targets, and protected sites that need premium proxies. If your pipeline covers all three, you’re paying worst-case prices on every request. We built a scraping API that matches cost to complexity, one request at a time.

Gabriele Vitke

Last updated: Mar 09, 2026

4 min read

How To Use a Proxy With HttpClient in C#: From Setup to Production

Lukas Mikelionis

Last updated: Mar 04, 2026

8 min read

HTTPX vs. Requests vs. AIOHTTP: How to Choose the Right Python HTTP Client

Requests, HTTPX, and AIOHTTP all make HTTP requests, but they differ in how they handle concurrency. Requests is synchronous and has been the default since 2011. HTTPX gives you both sync and async with HTTP/2 support. AIOHTTP is async-only and faster at high concurrency, but has a steeper learning curve. The right choice depends on your async model, whether you need WebSockets or HTTP/2, and how much code you're willing to rewrite. This article covers architecture, performance data, proxy setup, migration paths, and common mistakes in production scraping setups.

Justinas Tamasevicius

Last updated: Mar 03, 2026

12 min read

Python Web Crawlers: Guide to Building, Scaling, and Maintaining Crawlers

TL;DR: A web crawler is a program that systematically navigates the web by following links from page to page. Python is the go-to language for building crawlers thanks to libraries like Requests, Beautiful Soup, and Scrapy. This guide covers everything from your first 50-line crawler to a production-grade Scrapy setup with proxy integration, JavaScript rendering, and distributed architecture. If you've ever had to collect data from hundreds or thousands of pages and done it manually, this is for you.

Justinas Tamasevicius

Last updated: Mar 02, 2026

10 min read
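
The excerpt above describes a crawler as a program that follows links from page to page. A rough sketch of that idea, using only the standard library, might look like the following. It is an illustration under stated assumptions rather than the code from the linked guide: the `PAGES` dictionary is a hypothetical in-memory site standing in for real HTTP requests, and `crawl` and `LinkParser` are names invented for this example.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "site" so the sketch runs without network access.
PAGES = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/c">C</a>',
    "https://example.com/b": '<a href="/">Home</a>',
    "https://example.com/c": "",
}


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag fed to the parser."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl: visit a page, queue its unseen links, repeat."""
    seen = {start_url}
    queue = deque([start_url])
    order = []
    while queue and len(order) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be fetched; skip it
            continue
        order.append(url)
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


# PAGES.get acts as the fetch function and returns None for unknown URLs.
visited = crawl("https://example.com/", PAGES.get)
print(visited)
```

In a real crawler the `fetch` argument would wrap an HTTP client, and the `seen` set is what keeps the crawl from looping when pages link back to each other.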

Mastering Scrapy for Scalable Python Web Scraping: A Practical Guide

Scrapy is a powerful web scraping framework available in Python. Its asynchronous architecture makes it faster than sequential scrapers built with Requests or Beautiful Soup, and it includes everything needed for production-ready scraping: spiders, items, pipelines, throttling, retries, data export, and middleware. In this guide, you'll learn how to set up Scrapy, build and customize spiders, handle pagination, structure and store data, extend Scrapy with middlewares and proxies, and apply best practices for scraping at scale.

Dominykas Niaura

Last updated: Mar 02, 2026

10 min read

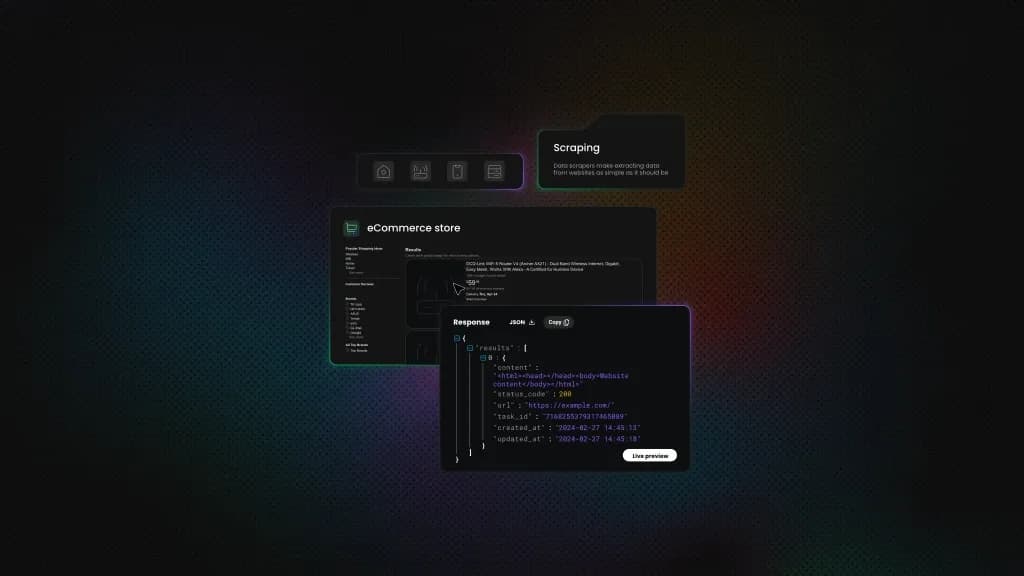

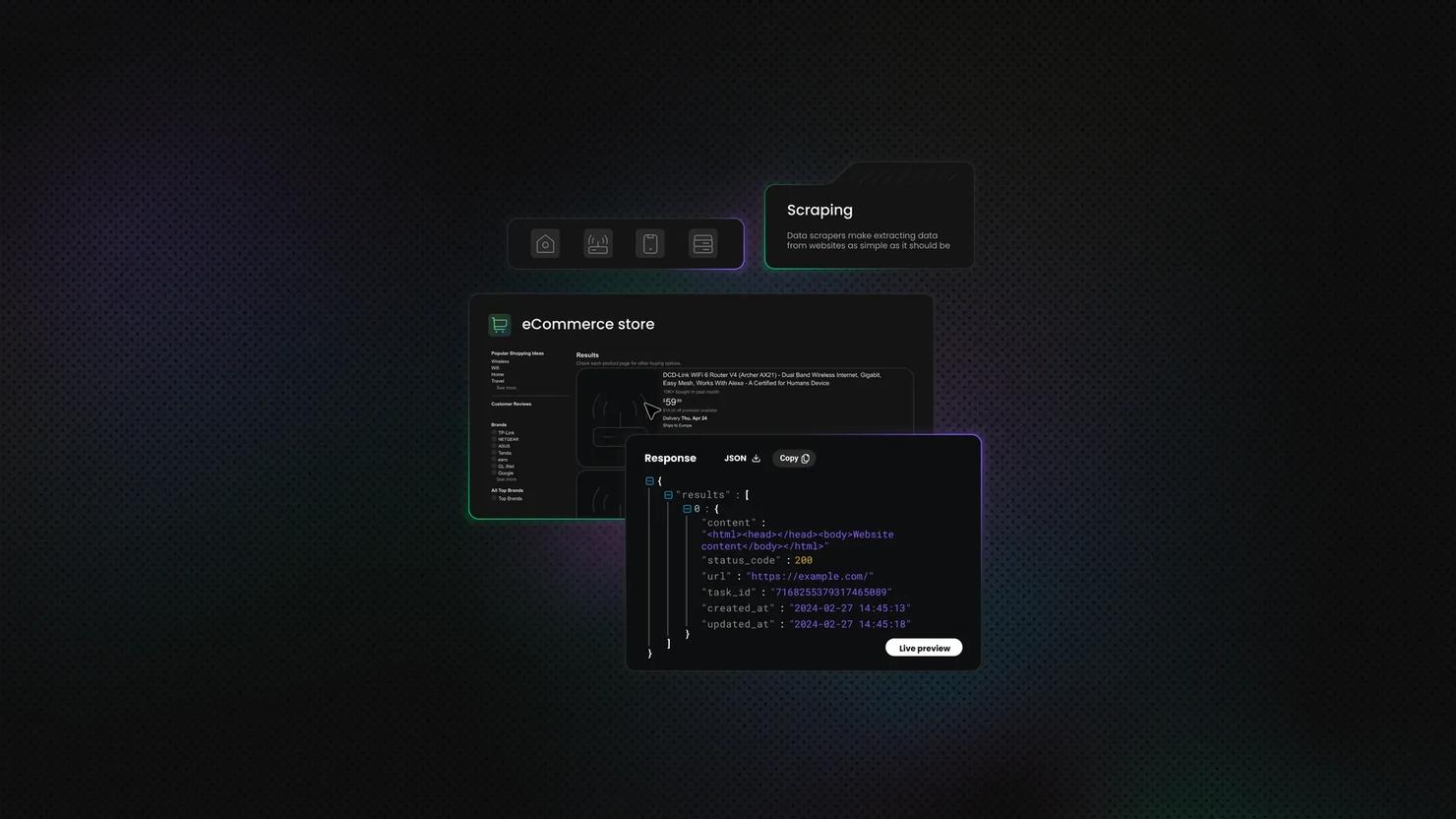

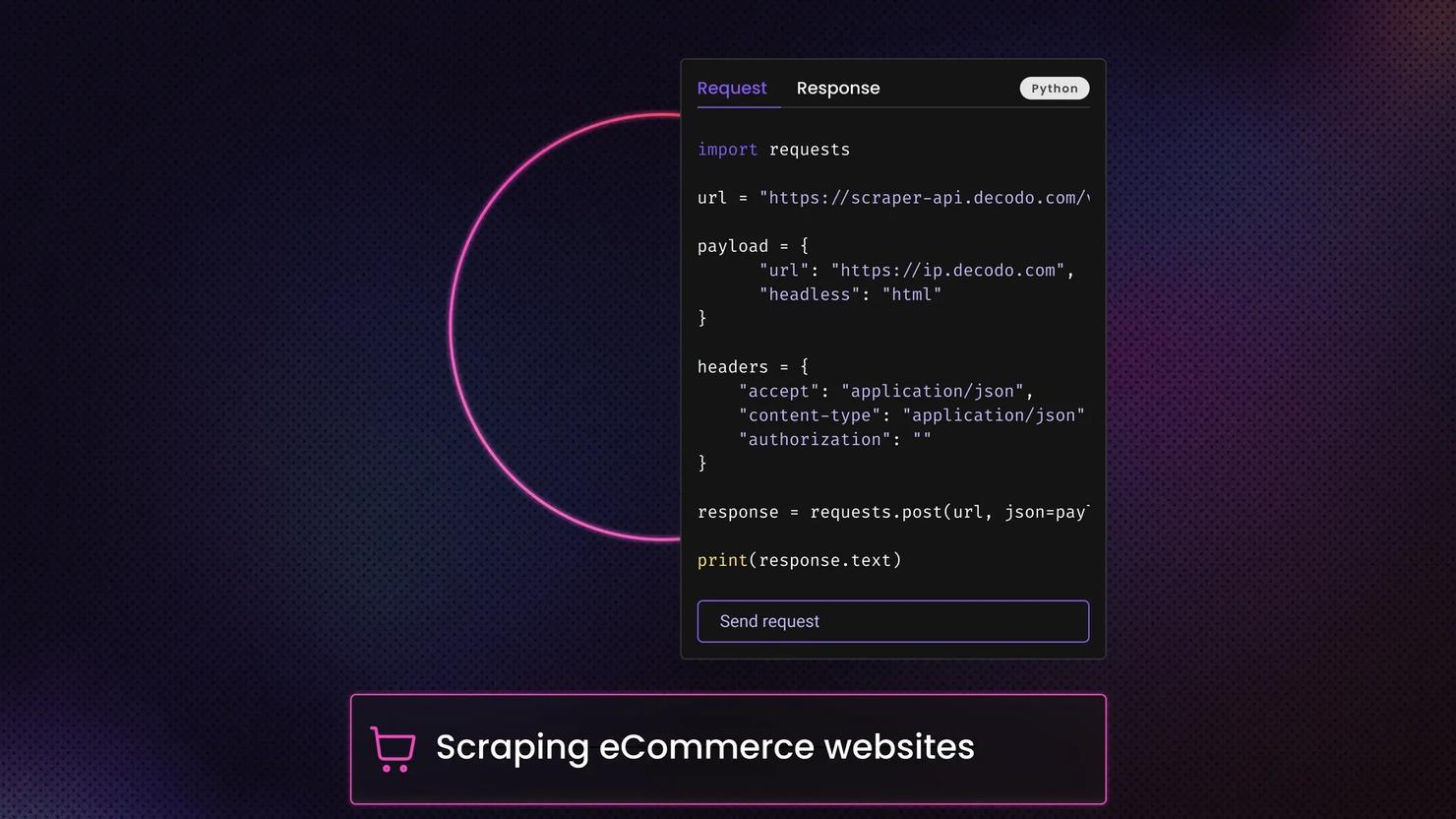

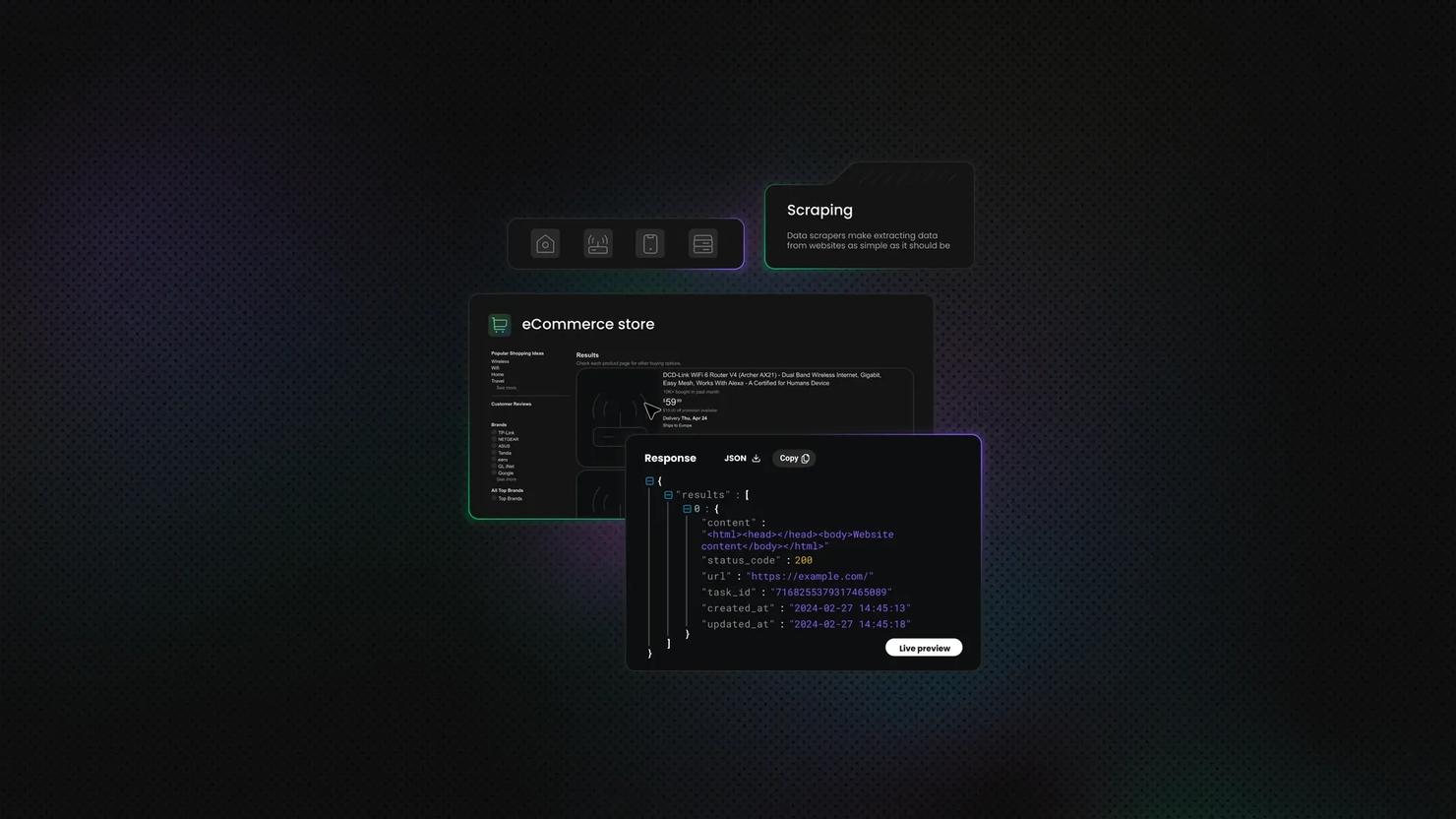

The Ultimate Guide to Scraping eCommerce Websites: Tools, Techniques, and Best Practices

Manual eCommerce data collection breaks because the data doesn’t stay stable. Prices change daily, products disappear and reappear under the same URL, and even mid-sized stores list tens of thousands of SKUs. On top of that, much of the content is rendered with JavaScript, layouts shift due to constant A/B testing, and anti-bot systems detect repeated automated access. This guide shows you how to analyze a target site and choose the right extraction approach.

Vytautas Savickas

Last updated: Feb 20, 2026

12 min read

Complete Guide to Web Scraping With OpenClaw and Decodo

Zilvinas Tamulis

Last updated: Feb 19, 2026

10 min read

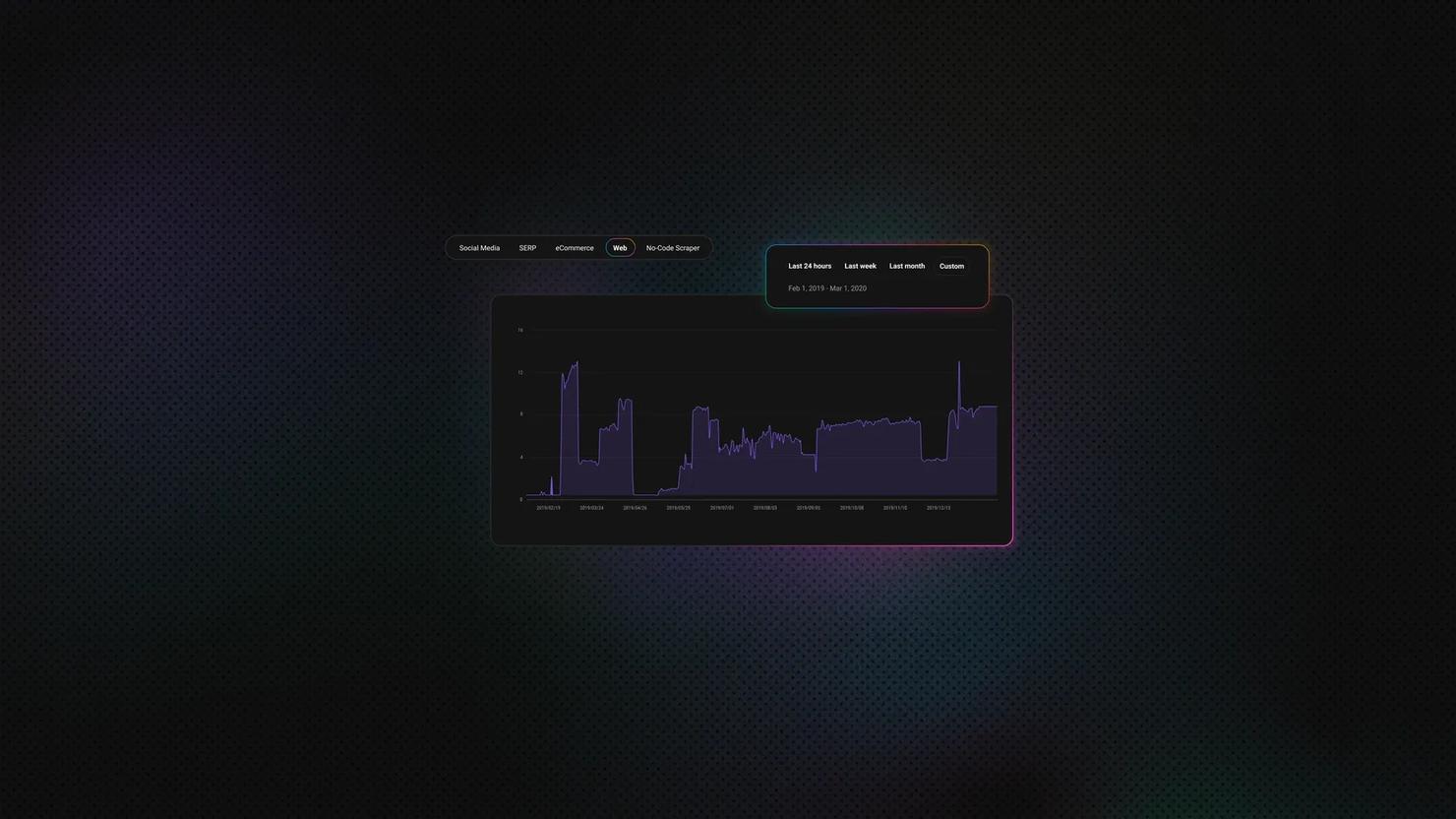

Web Scraping at Scale Explained

Justinas Tamasevicius

Last updated: Feb 18, 2026

10 min read

How To Find All URLs on a Domain

Justinas Tamasevicius

Last updated: Feb 09, 2026

16 min read

What is Data Scraping? Definition and Best Techniques (2026)

The data scraping tools market is valued at approximately $875.46M in 2026 and is projected to keep growing as demand for real-time data collection increases across industries.

Vytautas Savickas

Last updated: Jan 30, 2026

6 min read

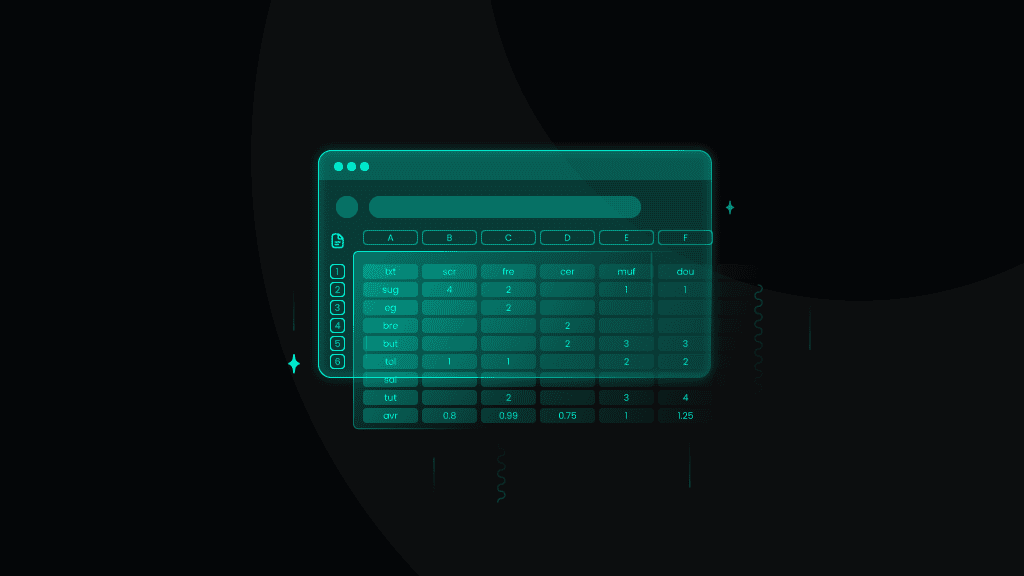

Master VBA Web Scraping for Excel: A 2026 Guide

Excel is an incredibly powerful data management and analysis tool. But did you know that it can also automatically retrieve data for you? In this article, we’ll explore Excel's many features and its integration with Visual Basic for Applications (VBA) to effectively scrape and parse data from the web.

Zilvinas Tamulis

Last updated: Jan 29, 2026

7 min read

How to Scrape Etsy in 2026

Etsy is a global marketplace with millions of handmade, vintage, and unique products across every category imaginable. Scraping Etsy listings gives you access to valuable market data – competitor pricing, trending products, seller performance, and customer sentiment. In this guide, we'll show you how to scrape Etsy using Python, Playwright, and residential proxies to extract product titles, prices, ratings, shop names, and URLs from any Etsy search or category page.

Dominykas Niaura

Last updated: Jan 22, 2026

10 min read

How to Run Python Code in Terminal

The terminal might seem intimidating at first, but it's one of the most powerful tools for Python development. It gives you direct control over your Python environment for tasks such as running scripts, managing packages, and debugging code. In this guide, we'll walk you through everything you need to know about using Python in the terminal, from basic commands to advanced troubleshooting techniques.

Dominykas Niaura

Last updated: Jan 20, 2026

10 min read

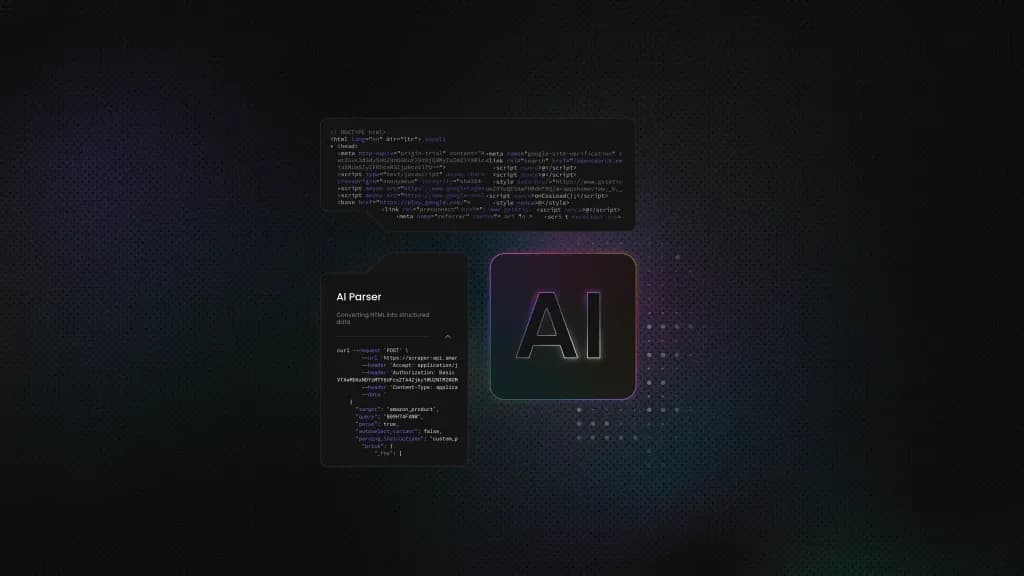

How to Leverage ChatGPT for Effective Web Scraping

Artificial intelligence is transforming many fields and opening new possibilities for automation and efficiency. As one of the leading AI tools, ChatGPT can be especially helpful in data collection, where it assists with extracting and parsing information. In this blog post, we provide a step-by-step guide to using ChatGPT for web scraping. We also explore the limitations of using ChatGPT for this purpose and offer an alternative method for scraping the web.

Dominykas Niaura

Last updated: Jan 20, 2026

8 min read