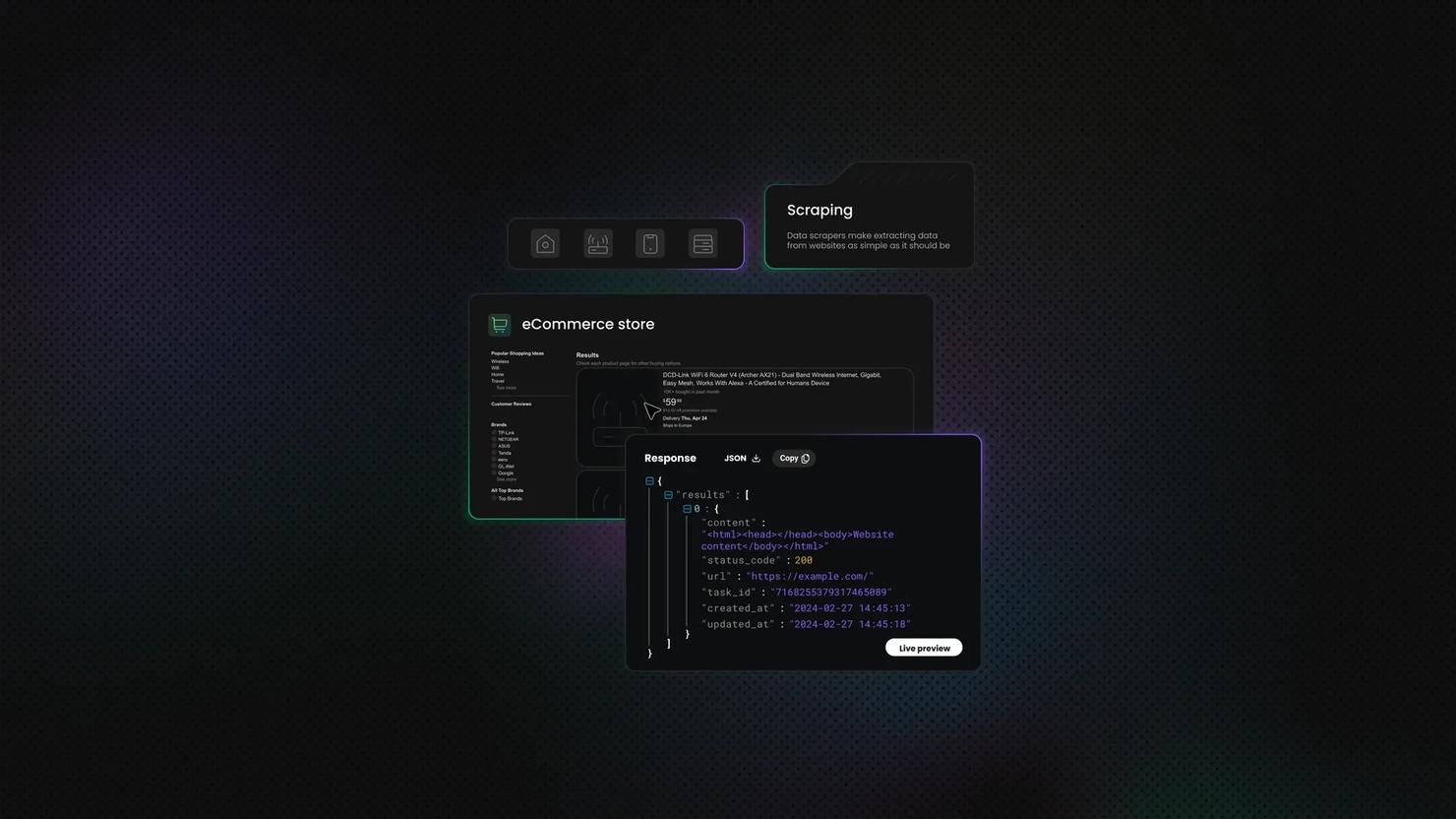

Data Collection

Data collection is vital across industries. It helps businesses understand the market, get to know their customers, and adapt to their needs. It can be automated by scraping selected target websites, which is especially useful for analyzing competitors, records, trends, and other data.

How To Scrape Websites With Dynamic Content Using Python

You've mastered static HTML scraping, but now you're staring at a site where Requests + Beautiful Soup returns nothing but an empty <div> and <script> tags. Welcome to JavaScript-rendered content, where the data you want is loaded by scripts after the initial request. In this guide, we'll tackle dynamic sites using Python and Selenium (plus a Beautiful Soup alternative).

Justinas Tamasevicius

Last updated: Dec 16, 2025

12 min read

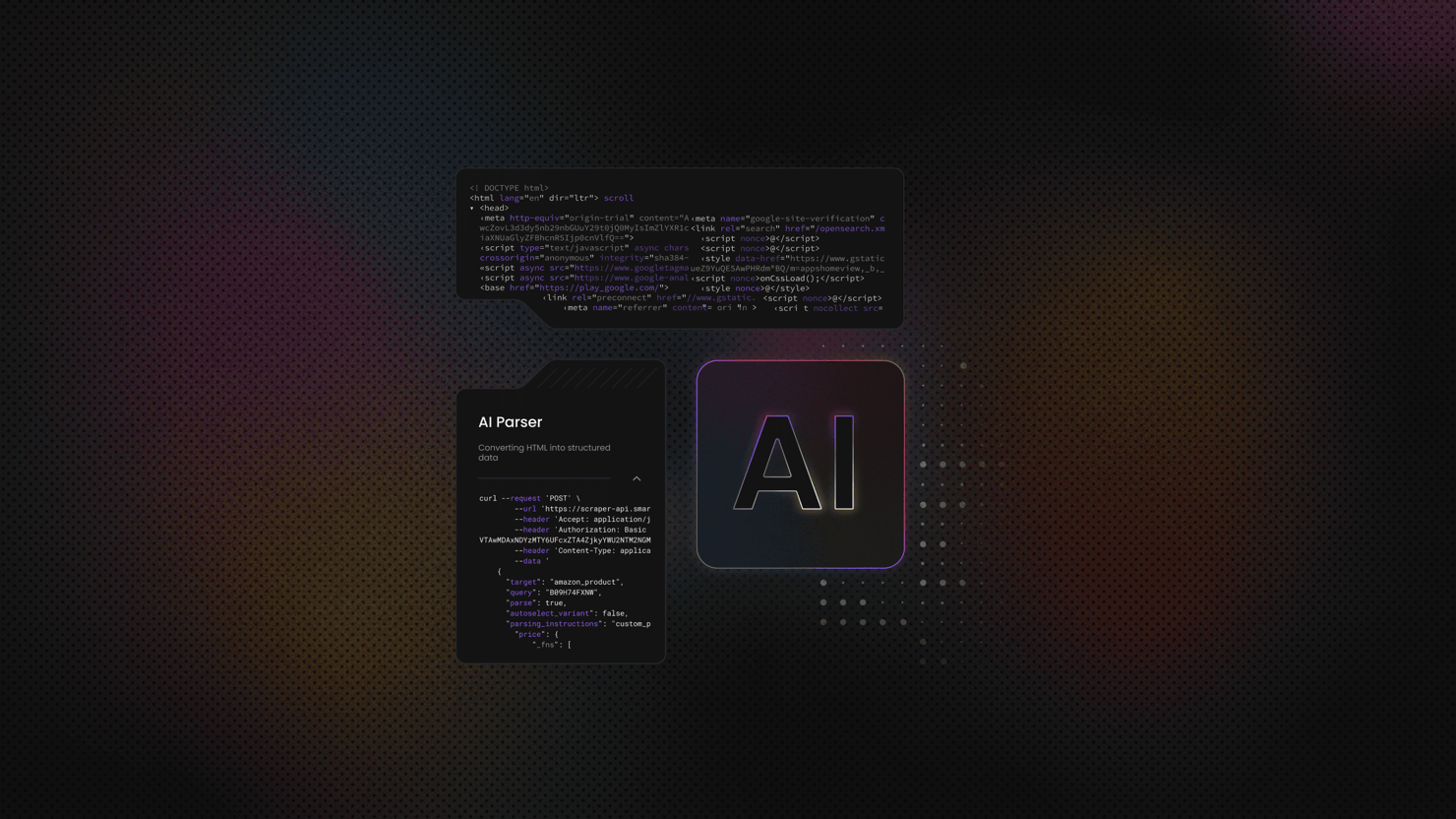

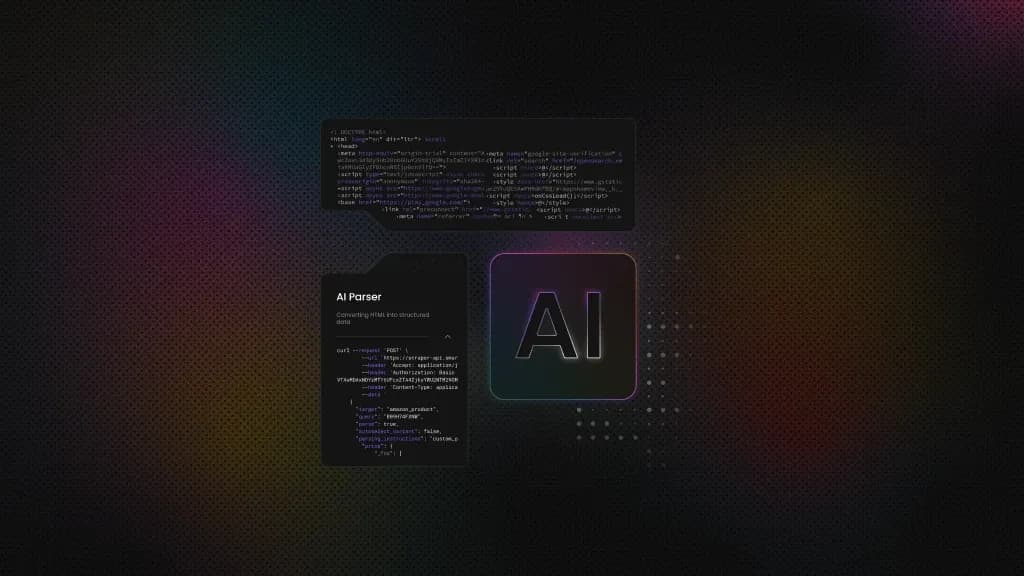

What Is AI Scraping? A Complete Guide

AI web scraping is the process of extracting data from web pages with the help of machine learning and large language models, which read a page the way humans do: by understanding its meaning. Traditional scrapers tend to stop working when the HTML structure is inconsistent or incomplete; sometimes even a single misplaced tag can ruin a whole scraping run. AI solves that by shifting focus to the meaning of the content rather than relying on rigid rules to define what data to scrape, so scrapers can quickly adapt and find the right information. That's why AI web scraping is becoming a practical choice for many projects.

Vytautas Savickas

Last updated: Dec 15, 2025

10 min read

How to Scrape SoundCloud for AI Training: Step-By-Step Tutorial

SoundCloud is a mother lode for AI training data, with millions of audio tracks spanning every genre and style imaginable. In this guide, we’ll show you how to tap into that library using Node.js, with the help of proxies. You’ll get hands-on code examples and learn how to collect audio data for three key AI use cases: music generation, audio enhancement, and voice training.

Dominykas Niaura

Last updated: Dec 15, 2025

10 min read

Web Scraping with Ruby: A Simple Step-by-Step Guide

Ruby might not be the first language that comes to mind for web scraping – Python usually steals the spotlight here. However, Ruby's elegant syntax and powerful gems make it surprisingly effective. This guide walks you through building Ruby scrapers from your first HTTP request to production-ready systems that handle JavaScript rendering, proxy rotation, and anti-bot measures. We'll cover essential tools like HTTParty and Nokogiri, show practical code examples, and teach you how to avoid blocks and scale safely.

Zilvinas Tamulis

Last updated: Dec 12, 2025

15 min read

Real Estate Data Scraping: Ultimate Guide

Real estate web scraping has become an essential way to collect up-to-date property data from platforms like Zillow, Realtor.com, Redfin, Rightmove, and Idealista without manual effort. Automated extraction helps individuals and businesses track prices, compare neighborhoods, and monitor supply trends with higher accuracy. In this guide, you'll get a practical overview of the tools, methods, and considerations involved in working with real estate listings as structured data for analysis, research, and everyday business use.

Dominykas Niaura

Last updated: Dec 10, 2025

8 min read

How to Do Web Scraping with curl: Full Tutorial

Web scraping is a great way to automate the extraction of data from websites, and curl is one of the simplest tools to get started with. This command-line utility lets you fetch web pages, send requests, and handle responses without writing complex code. It's lightweight, pre-installed on most systems, and perfect for quick scraping tasks. Let's dive into everything you need to know.

Zilvinas Tamulis

Last updated: Dec 02, 2025

16 min read

Web Scraping With Java: The Complete Guide

Web scraping is the process of automating page requests, parsing the HTML, and extracting structured data from public websites. While Python often gets all the attention, Java is a serious contender for professional web scraping because it's reliable, fast, and built for scale. Its mature ecosystem with libraries like Jsoup, Selenium, Playwright, and HttpClient gives you the control and performance you need for large-scale web scraping projects.

Justinas Tamasevicius

Last updated: Nov 26, 2025

10 min read

How to Scrape Nasdaq Data: A Complete Guide Using Python and Alternatives

Nasdaq offers a wealth of stock prices, news, and market reports. Manually collecting this data is a Sisyphean task, since new information appears constantly. Savvy investors, analysts, and traders turn to web scraping instead, automating data gathering to power more intelligent analysis and trading strategies. This guide walks you through building a Nasdaq scraper with Python, browser automation, APIs, and proxies to extract both real-time and historical market data.

Zilvinas Tamulis

Last updated: Nov 21, 2025

14 min read

How to Scrape Images From Any Website With Python

If you need a bunch of images and the thought of saving them one by one already feels tedious, you're not alone. This can be especially draining when you're preparing a dataset for a machine learning project. The good news is that web scraping makes the whole process faster and far more manageable by letting you collect large quantities of images in just a few steps. In this blog post, we'll walk you through a straightforward way to grab images from a static website. We'll use Python, a few handy libraries, and proxies to keep things running smoothly.

Dominykas Niaura

Last updated: Nov 20, 2025

10 min read

Guide to Web Scraping Airbnb: Methods, Challenges, and Best Practices

Web scraping Airbnb (a global platform for short-term rentals and experiences) involves automatically extracting data from listings to reveal insights unavailable through the platform itself. It's useful for analyzing markets, tracking competitors, or even planning personal trips. Yet, Airbnb's anti-scraping defenses and dynamic design make it a technically demanding task. This guide will teach you how to scrape Airbnb listings successfully using Python.

Dominykas Niaura

Last updated: Nov 17, 2025

10 min read

How to Scrape Hotel Listings: Unlocking the Secrets

Scraping hotel listings is a powerful way to gather comprehensive data on accommodations, prices, and availability from various online sources. Whether you're looking to compare rates, analyze market trends, or create a personalized travel plan, scraping lets you efficiently compile the information you need. In this article, we'll explain how to scrape hotel listings so you can put this data to full use.

Vilius Sakutis

Last updated: Nov 13, 2025

5 min read

How to Web Scrape a Table with Python: a Complete Guide

HTML tables are one of the most common ways websites organize data – financial reports, product listings, sports scores, population statistics. But this data is locked in the webpage's layout. To use it, you need to extract it. This guide will show you how to do it using Python, starting with simple static tables and working up to complex dynamic ones.

Justinas Tamasevicius

Last updated: Nov 10, 2025

9 min read

Mastering Web Scraping Pagination: Techniques, Challenges, and Python Solutions

Pagination is the system websites use to split large datasets across multiple pages for faster loading and better navigation. In web scraping, handling pagination is essential to capture complete datasets rather than just the first page of results. This guide explains what pagination is, the challenges it creates, and how to handle it efficiently with Python.

Dominykas Niaura

Last updated: Oct 28, 2025

10 min read

How to Scrape Craigslist with Python: Jobs, Housing, and For Sale Data

Craigslist is known as a valuable source of classified data across jobs, housing, and marketplace items for sale. However, scraping Craigslist presents challenges like CAPTCHAs, IP blocks, and anti-bot measures. This guide walks you through three Python scripts for extracting housing, job, and for sale item listings while handling these obstacles effectively with proxies or a scraper API.

Dominykas Niaura

Last updated: Oct 27, 2025

10 min read

Scraping Google Trends: Methods, Tools, and Best Practices

With Google Trends, you can look up search interest for specific keywords within chosen time frames and regions. This shows how a topic's popularity changes over time and across regions, without exposing sensitive search data. In this guide, we'll explain the kinds of data available from Google Trends, compare scraping techniques, and demonstrate two methods of gathering Google Trends data.

Kipras Kalzanauskas

Last updated: Oct 27, 2025

10 min read

How to Build Production-Ready RAG with LlamaIndex and Web Scraping (2026 Guide)

Production RAG fails when it relies on static knowledge that goes stale. This guide shows you how to build RAG systems that scrape live web data, integrate with LlamaIndex, and actually survive production. You'll learn to architect resilient scraping pipelines, optimize vector storage for millions of documents, and deploy systems that deliver real-time intelligence at scale.

Zilvinas Tamulis

Last updated: Oct 24, 2025

16 min read

Google Removes num=100 Parameter: Impact on Search and Data Collection

In September 2025, Google officially discontinued the num=100 parameter. If you're an SEO professional, data analyst, or someone who prefers viewing all results at once, you've likely already felt the impact on your workflows. In this article, we'll explain what changed, why Google likely made this move, who it affects, and most importantly, how to adapt.

Kotryna Ragaišytė

Last updated: Oct 23, 2025

6 min read

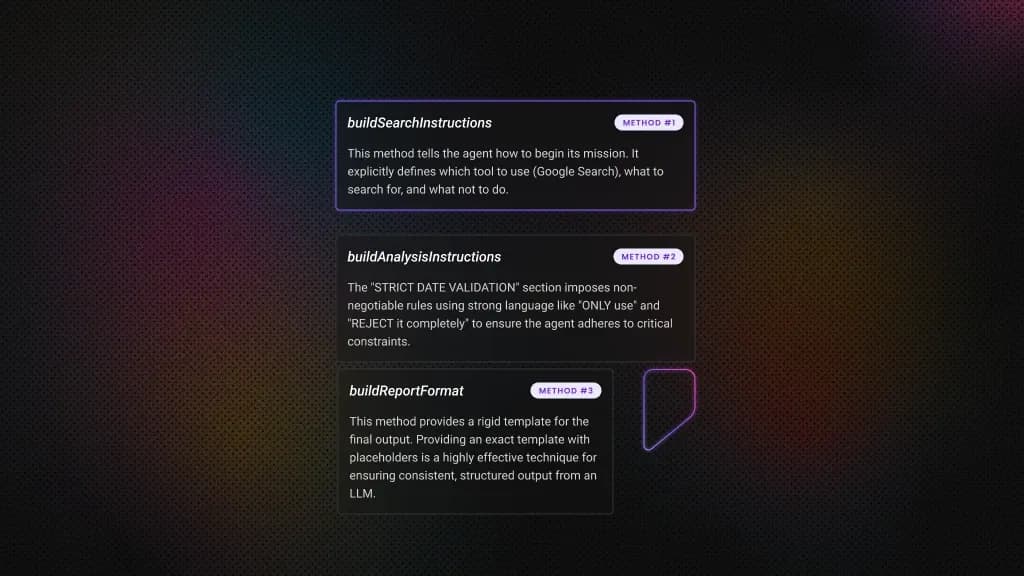

End-to-End AI Workflows with LangChain and Web Scraping API

AI has evolved from programs that just follow rules to systems that can learn and make decisions. Businesses that understand this shift can leverage AI to tackle complex challenges, moving beyond simple task automation. In this guide, we'll walk you through how to connect modern AI tools with live web data to create an automated system that achieves a specific goal. This will give you a solid foundation for building even more sophisticated autonomous applications.

Vytautas Savickas

Last updated: Oct 22, 2025

11 min read